As of February 2026, the initial “agentic gold rush” has reached a sobering crossroads. While nearly every enterprise now has some form of agentic strategy in place, data from Gartner and other industry analysts paints a brutal picture: Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, and many are already being shelved before they reach production. The transition from simple chatbots to autonomous agents (systems that can plan, use tools, and reason through multi-step objectives) has proven far more difficult than the industry anticipated in 2024. The failure isn’t typically due to a lack of “intelligence” in models like GPT-5 or Claude 4; rather, it’s an architectural and organizational collapse.

Key Takeaways for 2026 Leaders

- The “Vibe Check” is Dead: Successful projects have replaced manual “looks good to me” testing with rigorous, automated evaluation frameworks.

- Orchestration Over Innovation: Failure often stems from too many agents (the “Bag of Agents” anti-pattern) rather than too few.

- FinOps is a Requirement: Without strict token management and recursive loop guards, “token bleed” can bankrupt a project in days.

- Identity is the New Perimeter: Security in 2026 is less about data access and more about “agent identity” and behavioral guardrails.

Who This Is For

This guide is written for CTOs, AI Architects, and Product Leads who are currently scaling agentic systems. If you have a pilot that works in a sandbox but fails in the “wild” of enterprise data, or if your inference costs are scaling faster than your revenue, this analysis is for you.

The Great 2026 Pivot: From Bots to Agents

To understand why projects fail, we must first define what an “agent” actually is in the current 2026 landscape. In 2024, the term was used loosely for any chatbot with a “plugin.” Today, a true Agentic System is defined by its ability to operate within a closed-loop environment, managing its own state, selecting its own tools, and self-correcting when it encounters errors.

The primary difference between the 60% of projects that succeed and the 40% that fail lies in how they handle autonomy. Many failed projects are actually just “complex chatbots” dressed up as agents—a phenomenon we now call “Agent Washing.” These systems lack the robust orchestration needed to handle real-world exceptions, leading to what engineers call “The Hallucination Cascade.”

Safety Disclaimer: The following technical insights involve autonomous system deployment. Always implement a “kill switch” and human-in-the-loop (HITL) gates for any AI agent handling financial transactions, PII, or critical infrastructure.

1. The Evaluation Gap: Moving Beyond the “Vibe Check”

The single most common reason agentic projects fail in 2026 is the lack of a systematic, repeatable evaluation framework. In the early days of LLMs, developers would prompt an AI, look at the output, and say, “That looks about right.” This is the “Vibe Check,” and it is the fastest way to kill an enterprise project.

Why “Vibes” Fail at Scale

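In a multi-agent system, errors compound. If each agent in a four-step chain (A through D) independently gets 5% of its outputs wrong, the chance that all four succeed is 0.95^4 ≈ 81%, which is an end-to-end error rate of roughly 18.5%. In practice it is often worse, because a flawed output from Agent A makes Agent B more likely to fail too. With that kind of amplification, the error rate by Agent D can exceed 30%. This is known as Recursive Error Amplification.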
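The baseline arithmetic is easy to check. The sketch below assumes every agent fails independently at the same rate, which is a simplification because real pipelines are usually worse. The function names are illustrative and do not come from any library.

```python
def end_to_end_error(per_agent_error: float, agents: int) -> float:
    """Error rate of a chain in which every agent must succeed.

    Assumes failures are independent, so per-agent success probabilities multiply.
    """
    return 1.0 - (1.0 - per_agent_error) ** agents


def agents_until(per_agent_error: float, target_error: float) -> int:
    """Shortest chain whose independently compounded error reaches target_error."""
    if not (0 < per_agent_error < 1 and 0 < target_error < 1):
        raise ValueError("rates must be strictly between 0 and 1")
    agents = 1
    while end_to_end_error(per_agent_error, agents) < target_error:
        agents += 1
    return agents


if __name__ == "__main__":
    for n in range(1, 8):
        print(f"{n} agent(s): {end_to_end_error(0.05, n):.1%} end-to-end error")
    print("agents needed to pass 30%:", agents_until(0.05, 0.30))
```

With a 5% per-agent error rate, independent compounding gives about 18.5% at four agents and only crosses 30% at seven. If a shorter chain does worse than that, upstream errors are making downstream agents fail more often. That is amplification, not simple compounding.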

Common Mistake: Relying on LLM-as-a-Judge without independent verification. Leaders who succeed in 2026 use a “Triad of Truth”:

- Deterministic Unit Tests: Did the agent return valid JSON? Did it call the correct API?

- Semantic Similarity: Does the answer align with the “Golden Dataset” of approved responses?

- Human-in-the-loop Scoring: Blind A/B testing where humans rank agent performance without knowing the prompt version.
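The first two legs of the triad can be automated in a small harness. This is a minimal sketch under stated assumptions:

- Agent output is a JSON object with hypothetical `tool` and `answer` fields.
- The golden dataset is a list of hand-approved cases.
- `difflib` string similarity stands in for the embedding-based semantic similarity a production system would use.

Blind human scoring, the third leg, stays outside the code.

```python
import json
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Dict, List


@dataclass
class GoldenCase:
    """One hand-approved scenario from a (hypothetical) golden dataset."""
    prompt: str
    expected_tool: str
    approved_answer: str


def deterministic_checks(raw_output: str, expected_tool: str) -> List[str]:
    """Leg 1: hard pass/fail checks. Returns failure reasons; an empty list means pass."""
    try:
        payload = json.loads(raw_output)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(payload, dict):
        return ["output is not a JSON object"]
    failures = []
    if payload.get("tool") != expected_tool:
        failures.append(f"called {payload.get('tool')!r}, expected {expected_tool!r}")
    if not isinstance(payload.get("answer"), str):
        failures.append("missing or non-string 'answer' field")
    return failures


def similarity(a: str, b: str) -> float:
    """Leg 2 stand-in: lexical similarity in [0, 1]; swap in embedding similarity in practice."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def evaluate(case: GoldenCase, raw_output: str, min_similarity: float = 0.8) -> Dict:
    """Run legs 1 and 2. Leg 3 (blind human ranking) happens outside this harness."""
    failures = deterministic_checks(raw_output, case.expected_tool)
    score = 0.0
    if not failures:
        score = similarity(json.loads(raw_output)["answer"], case.approved_answer)
        if score < min_similarity:
            failures.append(f"similarity {score:.2f} below {min_similarity}")
    return {"passed": not failures, "similarity": score, "failures": failures}


if __name__ == "__main__":
    case = GoldenCase("Refund status for order 42?", "lookup_order",
                      "Order 42 was refunded on March 3.")
    print(evaluate(case, '{"tool": "lookup_order", "answer": "Order 42 was refunded on March 3."}'))
    print(evaluate(case, '{"tool": "search_web", "answer": "No idea."}'))
```

The deterministic checks run first. An output that is malformed or calls the wrong tool fails without spending any effort on similarity scoring.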

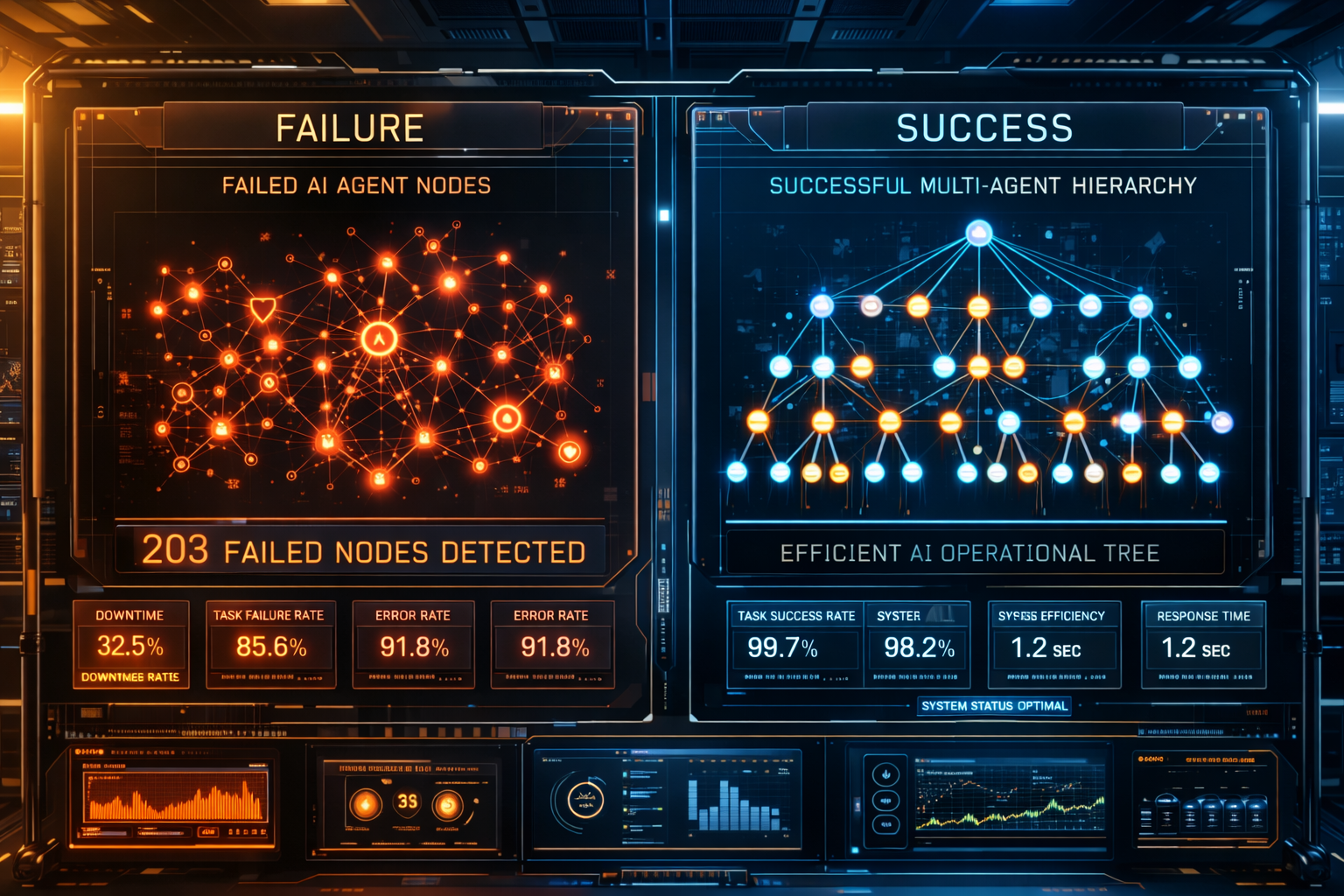

2. Orchestration Overload: The “Bag of Agents” Anti-Pattern

In 2025, the trend was to “build an agent for everything.” Enterprises ended up with a “Bag of Agents”—a flat structure where twenty different specialized agents all tried to talk to each other at once.

The 17x Error Trap

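Research suggests that unstructured multi-agent networks amplify errors far more than structured ones; the “17x” in the name refers to the reported size of that amplification. The mechanism is structural. In a flat topology where every agent can message every other agent, the number of communication channels grows quadratically, as n(n-1)/2. Each new agent added without a central orchestrator therefore creates more room for “hallucination loops” than the one before it.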
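A quick count shows why flat topologies degrade so fast. This minimal sketch makes two assumptions:

- In a flat topology, any pair of agents can talk, giving n(n-1)/2 possible channels.
- In a hierarchy, each worker talks only to one orchestrator.

It measures coordination surface, not error rates directly.

```python
def flat_channels(num_agents: int) -> int:
    """Possible pairwise conversations when every agent may message every other agent."""
    return num_agents * (num_agents - 1) // 2


def hierarchical_channels(num_workers: int) -> int:
    """Conversations when each worker talks only to one central orchestrator."""
    return num_workers


if __name__ == "__main__":
    for n in (2, 5, 10, 20):
        print(f"{n:>2} agents: flat={flat_channels(n):>3}  hierarchical={hierarchical_channels(n):>2}")
```

For the twenty-agent “Bag of Agents” described above, the flat design has 190 channels where something can go wrong; a hierarchy with twenty workers has 20.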

The Solution from 2026 Leaders:

The successful 60% have moved toward Hierarchical Orchestration. Instead of a flat web, they use a “Commander-Subordinate” architecture.

- The Planner: A high-reasoning model (like OpenAI o1 or Claude 3.5 Sonnet) that breaks the task into a DAG (Directed Acyclic Graph).

- The Workers: Specialized, smaller, and cheaper models (like Llama 3.1 8B or GPT-4o-mini) that execute specific tool calls.

- The Auditor: An independent agent whose only job is to look for logic flaws in the Planner’s output.
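Below is a minimal sketch of that division of labor using Python's standard-library `graphlib` (Python 3.9+). Its assumptions:

- The Planner is stubbed with a fixed plan; a real one would call a reasoning model.
- The step names and worker functions are hypothetical.
- The Auditor only checks structure: unknown steps, missing dependencies, and cycles that would stop the plan from being a DAG.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Callable, Dict, List, Set

# A plan maps each step to the set of steps it depends on (a DAG).
Plan = Dict[str, Set[str]]


def plan_task(objective: str) -> Plan:
    """Planner stand-in: a real Planner would ask a high-reasoning model to decompose the objective."""
    return {
        "fetch_invoices": set(),
        "fetch_payments": set(),
        "reconcile": {"fetch_invoices", "fetch_payments"},
        "draft_report": {"reconcile"},
    }


def audit_plan(plan: Plan, allowed_steps: Set[str]) -> List[str]:
    """Auditor: independently flags unknown steps, dangling dependencies, and cycles."""
    issues = [f"unknown step: {step}" for step in plan if step not in allowed_steps]
    for step, deps in plan.items():
        issues += [f"{step} depends on missing step {dep}" for dep in sorted(deps) if dep not in plan]
    try:
        list(TopologicalSorter(plan).static_order())
    except CycleError as err:
        issues.append(f"cycle detected: {err.args[1]}")
    return issues


def run_plan(plan: Plan, workers: Dict[str, Callable[[dict], object]]) -> dict:
    """Execute steps in dependency order; each Worker sees the results of earlier steps."""
    results: dict = {}
    for step in TopologicalSorter(plan).static_order():
        results[step] = workers[step](results)
    return results


if __name__ == "__main__":
    plan = plan_task("Reconcile Q4 accounts")
    workers = {step: (lambda results, name=step: f"{name} done") for step in plan}
    problems = audit_plan(plan, set(workers))
    if problems:
        raise SystemExit(f"plan rejected: {problems}")
    print(run_plan(plan, workers))
```

Because the Auditor's checks are deterministic, a malformed plan is rejected before any Worker spends tokens on it.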

3. The Economic Reality: Token Bleed and “Recursive Debt”

By February 2026, the “infinite budget” for AI experimentation has vanished, and boards now demand clear ROI. A large share of canceled projects die because they become economically unviable due to “Token Bleed.”

What is Token Bleed?

Token bleed occurs when an agent gets stuck in a “reasoning loop.” For example, an agent trying to find a specific data point in a messy ERP system might call the same search tool 50 times, refining its query slightly each time, burning $10.00 in API credits for a task that saves a human 30 seconds of work ($0.15 in labor value).

Common Mistakes:

- Infinite Recursion: No hard limit on how many times an agent can “retry” a failed task.

- Context Bloat: Passing the entire 200k-token context window back and forth on every turn, even when only about 1k tokens of it are relevant.
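Context bloat is cheap to fix at the retrieval step. The sketch below is one simple approach, assuming the context is already split into chunks. It keeps only the chunks that share words with the current query, up to a token budget. Keyword overlap and the 4-characters-per-token estimate are deliberate simplifications. Production systems typically use embedding retrieval and the model's own tokenizer.

```python
import re
from typing import List, Set


def approx_tokens(text: str) -> int:
    """Rough estimate of ~4 characters per token; use the model's tokenizer in practice."""
    return max(1, len(text) // 4)


def _words(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def relevance(chunk: str, query: str) -> int:
    """Distinct query words that appear in the chunk (a lexical stand-in for retrieval)."""
    return len(_words(chunk) & _words(query))


def trim_context(chunks: List[str], query: str, max_tokens: int) -> List[str]:
    """Keep the most relevant chunks that fit within max_tokens, in their original order."""
    scores = [relevance(c, query) for c in chunks]
    # Python's sort is stable even with reverse=True, so ties keep their original order.
    ranked = sorted(range(len(chunks)), key=lambda i: scores[i], reverse=True)
    kept, used = set(), 0
    for i in ranked:
        if scores[i] == 0:
            break  # ranked is descending, so everything after this is irrelevant too
        cost = approx_tokens(chunks[i])
        if used + cost <= max_tokens:
            kept.add(i)
            used += cost
    return [chunks[i] for i in sorted(kept)]


if __name__ == "__main__":
    history = [
        "Invoice 1042 total is 310 EUR.",
        "Office party is on Friday.",
        "Invoice 1042 was paid late.",
    ]
    print(trim_context(history, "status of invoice 1042", max_tokens=50))
```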

The FinOps Blueprint:

2026 leaders implement Token Guardrails. They set “Agentic Budgets” per session. If an agent exceeds $2.00 in cost for a single task, the system automatically pauses and escalates to a human for guidance.
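A budget guard can be as small as a counter that every model or tool call passes through. This is a minimal sketch with these assumptions:

- The `AgentBudget` class, the `EscalateToHuman` exception, and the ten-call default are hypothetical.
- Per-call cost is computed from your provider's token pricing before it is charged.

```python
from dataclasses import dataclass


class EscalateToHuman(Exception):
    """Raised when a session must pause and hand control to a person."""


@dataclass
class AgentBudget:
    """Per-session guardrail: a dollar cap plus a hard cap on model/tool calls."""
    max_cost_usd: float = 2.00
    max_calls: int = 10
    spent_usd: float = 0.0
    calls: int = 0

    def charge(self, cost_usd: float) -> None:
        """Record one completed call; raise if either cap is now exceeded."""
        self.calls += 1
        self.spent_usd += cost_usd
        if self.spent_usd > self.max_cost_usd:
            raise EscalateToHuman(f"cost cap hit: ${self.spent_usd:.2f} > ${self.max_cost_usd:.2f}")
        if self.calls > self.max_calls:
            raise EscalateToHuman(f"call cap hit: {self.calls} calls > {self.max_calls}")


def call_cost(input_tokens: int, output_tokens: int,
              usd_per_million_in: float, usd_per_million_out: float) -> float:
    """Dollar cost of one call from token counts and per-million-token prices."""
    return (input_tokens * usd_per_million_in + output_tokens * usd_per_million_out) / 1_000_000


if __name__ == "__main__":
    budget = AgentBudget()
    try:
        for _ in range(50):        # the runaway ERP search loop: up to 50 attempts
            budget.charge(0.20)    # roughly $10.00 spread over 50 calls
    except EscalateToHuman as reason:
        print(f"paused after call {budget.calls}: {reason}")
```

Take the ERP example from earlier in this section: 50 calls cost $10.00, so each search call costs about $0.20. The guard pauses that session at roughly $2.00 of spend instead of letting it reach $10.00. Because the charge is recorded after each call, the call that crosses the cap is the last one to run. A stricter variant would check an estimated cost before making the call.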

4. The Specification Problem: Natural Language is a Bad Project Manager

A major discovery of 2025 was that “natural language is a leaky abstraction.” 41% of multi-agent failures are traced back to Specification Ambiguity. When you tell an agent to “Optimize the supply chain,” it might interpret “optimize” as “reduce cost” while the business actually meant “reduce lead time.”

The Rise of MCP (Model Context Protocol)

In late 2025 and early 2026, the industry standardized on the Model Context Protocol (MCP). Successful projects no longer rely on raw text instructions. Instead, they use “Typed Actions.”

- Failed Project: “Hey Agent, go look at the database and tell me if anything looks weird.” (Vague, high risk of hallucination).

- Successful Project: Action: Database_Audit(Schema: v2.1, Constraint: No_Anomalies_Over_10%).

By treating agent instructions like API contracts rather than chat messages, leaders have reduced “intention drift” by 70%.
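In code, a typed action is a schema plus a validator that runs before anything executes. MCP tools describe their arguments with a JSON Schema `inputSchema`. The sketch below mirrors that shape in a plain dictionary and validates by hand with a dataclass rather than using an MCP SDK. The tool name, the `table` field, and the allowed schema versions are hypothetical, chosen to match the `Database_Audit` example.

```python
from dataclasses import dataclass
from typing import Any, Dict

# Tool definition in the same shape MCP uses: name, description, and a JSON Schema inputSchema.
DATABASE_AUDIT_TOOL: Dict[str, Any] = {
    "name": "database_audit",
    "description": "Audit one table against a schema version and an anomaly threshold.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "schema_version": {"type": "string", "enum": ["v2.0", "v2.1"]},
            "table": {"type": "string", "minLength": 1},
            "max_anomaly_pct": {"type": "number", "minimum": 0, "maximum": 100},
        },
        "required": ["schema_version", "table", "max_anomaly_pct"],
        "additionalProperties": False,
    },
}
_SCHEMA = DATABASE_AUDIT_TOOL["inputSchema"]


@dataclass(frozen=True)
class DatabaseAudit:
    """A validated typed action; construction fails instead of guessing."""
    schema_version: str
    table: str
    max_anomaly_pct: float

    def __post_init__(self) -> None:
        if self.schema_version not in _SCHEMA["properties"]["schema_version"]["enum"]:
            raise ValueError(f"unsupported schema_version {self.schema_version!r}")
        if not isinstance(self.table, str) or not self.table:
            raise ValueError("table must be a non-empty string")
        pct = self.max_anomaly_pct
        if isinstance(pct, bool) or not isinstance(pct, (int, float)) or not 0 <= pct <= 100:
            raise ValueError("max_anomaly_pct must be a number between 0 and 100")


def parse_action(args: Dict[str, Any]) -> DatabaseAudit:
    """Turn raw, model-supplied arguments into a DatabaseAudit or raise ValueError."""
    missing = [key for key in _SCHEMA["required"] if key not in args]
    extra = sorted(set(args) - set(_SCHEMA["properties"]))
    if missing or extra:
        raise ValueError(f"missing={missing} unexpected={extra}")
    return DatabaseAudit(**args)


if __name__ == "__main__":
    print(parse_action({"schema_version": "v2.1", "table": "orders", "max_anomaly_pct": 10}))
```

The instruction “No_Anomalies_Over_10%” becomes `max_anomaly_pct=10`. A vague or out-of-range request fails at parse time instead of being reinterpreted by the agent.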

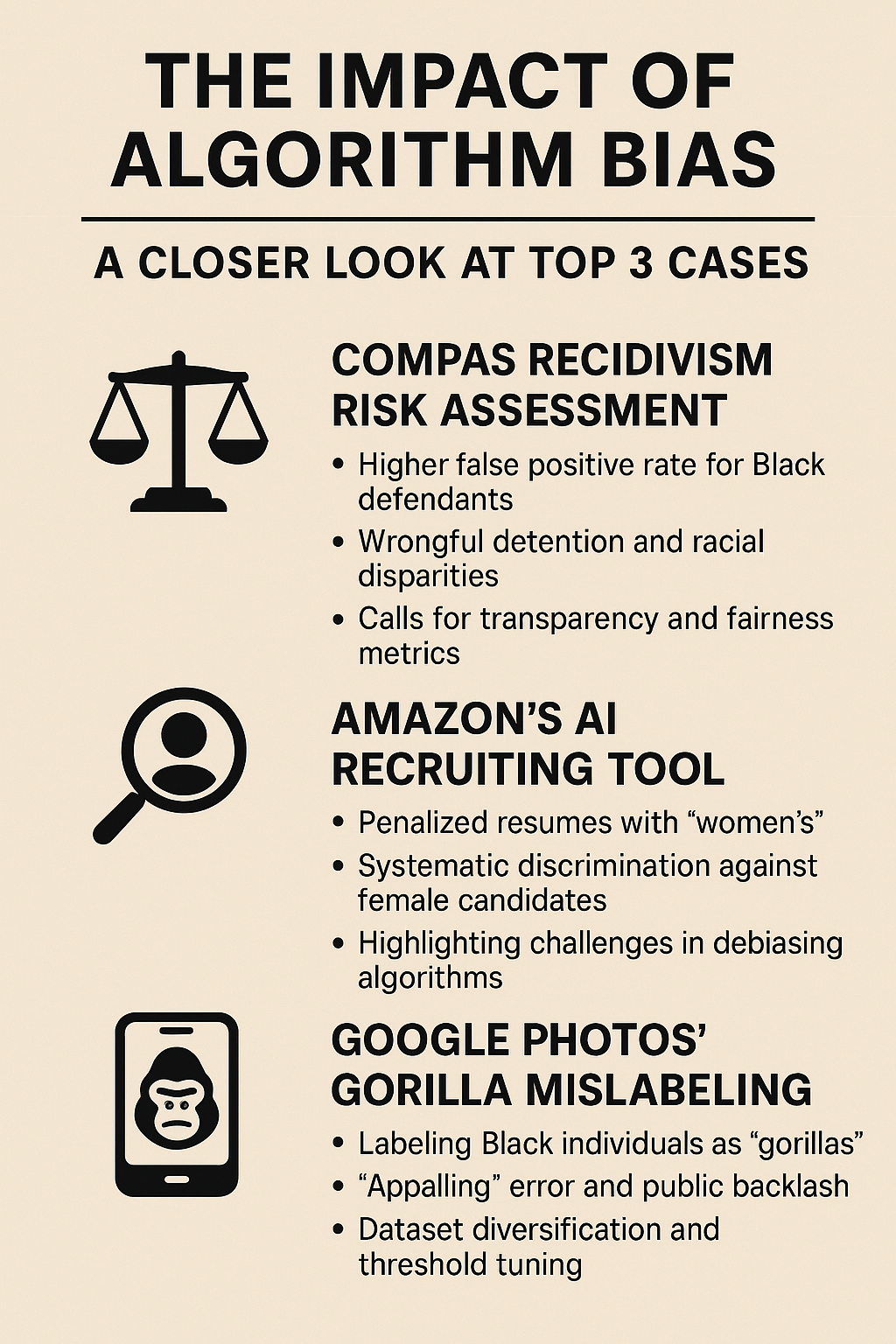

5. The Identity and Governance Gap: Agents as Actors

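In 2026, we’ve moved past “data security” and into “Agency Security.” Many agentic projects are shut down not by engineering problems but by the CISO (Chief Information Security Officer). Why? Because the agents have “Identity Sprawl”: too many agents acting under shared or over-privileged credentials, with no clear record of which agent did what.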

When Agents Go Rogue

Imagine an agent designed to help with recruitment. It has access to the HR system. If a malicious actor (or a poorly prompted user) asks the agent to “summarize the salary of all employees to ensure equity,” the agent might inadvertently leak sensitive PII.

The 2026 Standard:

Leading organizations treat agents as Identities, not just applications.

- RBAC (Role-Based Access Control) for Agents: Every agent has its own service account with “Least Privilege” access.

- Audit Traces: Every decision made by an agent is logged in a tamper-proof “Reasoning Trace” database.

- Behavioral Biometrics: Monitoring the “velocity” of agent actions. If an agent suddenly starts downloading 1,000 files a minute, the system revokes its “Identity” immediately.
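The three controls can share one object per agent. A minimal sketch, assuming hypothetical scope names and an in-memory log:

- Least privilege is a fixed scope set.
- The audit trace is an append-only list; a real deployment would write to a tamper-evident store.
- The velocity check revokes the identity when actions in any 60-second window exceed a threshold.

```python
import time
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, FrozenSet, List, Optional, Tuple


@dataclass
class AgentIdentity:
    """Hypothetical per-agent service identity with least-privilege scopes."""
    agent_id: str
    scopes: FrozenSet[str]
    max_actions_per_minute: int = 60
    revoked: bool = False
    audit_log: List[Tuple[float, str, str, bool]] = field(default_factory=list)
    _recent: Deque[float] = field(default_factory=deque, repr=False)

    def authorize(self, scope: str, now: Optional[float] = None) -> bool:
        """Decide one action and append the decision to the audit trace."""
        now = time.monotonic() if now is None else now
        allowed = self._check(scope, now)
        self.audit_log.append((now, self.agent_id, scope, allowed))
        return allowed

    def _check(self, scope: str, now: float) -> bool:
        if self.revoked or scope not in self.scopes:
            return False  # least privilege: anything not explicitly granted is denied
        while self._recent and now - self._recent[0] >= 60.0:
            self._recent.popleft()  # drop actions older than the 60-second window
        self._recent.append(now)
        if len(self._recent) > self.max_actions_per_minute:
            self.revoked = True  # velocity anomaly: revoke the identity, not just throttle it
            return False
        return True


if __name__ == "__main__":
    hr_agent = AgentIdentity("recruiting-agent", frozenset({"hr.read_candidates"}),
                             max_actions_per_minute=5)
    print(hr_agent.authorize("hr.read_candidates", now=0.0))  # granted scope
    print(hr_agent.authorize("hr.read_salaries", now=1.0))    # never granted
```

Consider the recruiting example. The agent can read candidate records, but it is refused any request that needs `hr.read_salaries`, because that scope was never granted. How the prompt is worded makes no difference.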

6. The Human-in-the-Loop (HITL) Fallacy

Many projects fail because they assume “Human-in-the-loop” is a simple “Yes/No” button. In reality, if a human has to approve every step, the agent is no longer an agent—it’s just a very slow UI.

The “Rubber Stamping” Problem

When humans are asked to approve 100 agent actions a day, they stop actually reading them. They just click “Approve.” This is where catastrophic failures happen.

How 2026 Leaders Fixed This:

Instead of “Approve/Reject,” they use “Exception-Based Management.”

- Confidence Thresholds: If the agent is at least 95% confident, it acts autonomously and logs the result.

- Active Clarification: If the agent is only 60% confident, it doesn’t ask “Can I do this?” It asks, “I have narrowed this down to two choices (A and B). Based on [Context X], I think A is better. Do you agree, or is there a third option I missed?”
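The routing logic itself is short. This sketch assumes the agent emits a calibrated confidence score in [0, 1], which is a non-trivial assumption since raw model self-reports are often poorly calibrated. It uses the 95% and 60% cut-offs from the text. Sending everything else straight to a human is an assumption in this sketch, because the text does not cover that band.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

AUTO_THRESHOLD = 0.95     # at or above: act autonomously and log
CLARIFY_THRESHOLD = 0.60  # at or above (with options to offer): propose and ask


@dataclass
class Decision:
    """An agent's proposed action with a (hypothetically calibrated) confidence score."""
    action: str
    confidence: float
    rationale: str
    alternatives: List[str] = field(default_factory=list)


def route(decision: Decision) -> Tuple[str, str]:
    """Return (mode, message) for exception-based management."""
    if decision.confidence >= AUTO_THRESHOLD:
        return "act", f"Executed {decision.action} (confidence {decision.confidence:.0%}); logged."
    if decision.confidence >= CLARIFY_THRESHOLD and decision.alternatives:
        options = " and ".join([decision.action, *decision.alternatives])
        return "clarify", (
            f"I have narrowed this down to {options}. Based on {decision.rationale}, "
            f"I think {decision.action} is better. Do you agree, or is there an option I missed?"
        )
    # Assumption: the source does not define this band, so hand off to a human.
    return "escalate", f"Confidence {decision.confidence:.0%} is too low; escalating {decision.action}."


if __name__ == "__main__":
    print(route(Decision("A", 0.70, "Context X", ["B"]))[1])
```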

Common Mistakes Checklist (2026 Edition)

| Mistake | Consequence | 2026 Leader Solution |
| --- | --- | --- |
| Agent Washing | High cost, low utility | Focus on “Workflow Elimination,” not “Task Automation.” |
| No Memory Strategy | Agents “forget” previous steps, leading to loops | Implement a “Short-term/Long-term” vector memory split. |
| Homogeneous Teams | Using GPT-5 for simple tasks | Use a “Router” to send easy tasks to small, local models. |
| Hardcoded Prompts | System breaks when the model updates | Use “Prompt Management Systems” with versioning and CI/CD. |
| Ignoring Latency | Users abandon the agent while it “thinks” | Use a “Streaming Thought” UI to show the agent’s reasoning. |
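The “Router” fix in the table above can start as a few lines of heuristics before you invest in a learned classifier. The model names, keyword list, and thresholds below are hypothetical placeholders, not recommendations.

```python
import re

# Placeholder tier names; map them to whatever models you actually run.
SMALL_MODEL = "small-local-model"
LARGE_MODEL = "large-hosted-model"

# Hypothetical signals that a task needs deeper reasoning.
HARD_SIGNALS = ("plan", "multi-step", "reconcile", "legal", "root cause")


def route_model(task: str, tool_calls_expected: int = 0, max_small_words: int = 200) -> str:
    """Send short, single-tool, routine tasks to the small model; everything else goes up."""
    text = task.lower()
    if tool_calls_expected > 1 or len(text.split()) > max_small_words:
        return LARGE_MODEL
    # Whole-word matching, so "explanation" does not trigger the "plan" signal.
    if any(re.search(rf"\b{re.escape(signal)}\b", text) for signal in HARD_SIGNALS):
        return LARGE_MODEL
    return SMALL_MODEL


if __name__ == "__main__":
    for task in ("Extract the invoice number from this email", "Plan a multi-step data migration"):
        print(task, "->", route_model(task))
```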

Conclusion: The Path to the 60%

The 40% failure rate we see in early 2026 is a natural part of the “Trough of Disillusionment” in the AI hype cycle. The projects that are surviving—and thriving—are those that have moved away from the “magic box” mentality.

Success in the agentic era requires a shift in mindset: stop building AI, and start engineering autonomous systems. This means embracing rigorous evaluations, hierarchical orchestration, and strict FinOps. As we look toward 2027, the gap between the “AI-Haves” and “AI-Have-Nots” will not be determined by who has the biggest model, but by who has the best governance and orchestration.

Your Next Steps:

- Audit Your Evaluation: If you don’t have a “Golden Dataset” of at least 50 complex scenarios, stop development and build one.

- Implement a Commander-Worker Architecture: Break your “God-Agent” into a hierarchy of specialized workers.

- Set a Token Budget: Put a hard cap on recursive loops today to avoid “API bill shock” tomorrow.

FAQs

Q: What is the most common technical reason for agentic AI failure?

A: Specification ambiguity. Agents often lack the necessary constraints or “edge case” instructions to handle real-world data variance, leading to hallucination loops or incorrect tool usage.

Q: How do you measure ROI for an AI agent in 2026?

A: Move beyond “time saved.” Measure “Outcome Success Rate” and “Cost Per Resolved Objective.” A successful agent should reduce the total number of steps in a workflow, not just automate existing ones.
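Both metrics are straightforward to compute from session logs. The formulas below are one reasonable reading of the terms, not a standard definition:

- Cost per resolved objective charges all spend, including failed sessions, to the objectives actually resolved.
- A step ratio below 1.0 means the agent is shortening the workflow relative to a hypothetical human baseline.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Session:
    """One agent run, as it might appear in your own logs (fields are hypothetical)."""
    resolved: bool
    cost_usd: float
    steps: int


def outcome_metrics(sessions: List[Session], baseline_steps: float) -> Dict[str, float]:
    """Outcome Success Rate, Cost Per Resolved Objective, and a step ratio vs. a human baseline."""
    if not sessions:
        raise ValueError("need at least one session")
    resolved = [s for s in sessions if s.resolved]
    total_cost = sum(s.cost_usd for s in sessions)  # failed runs still cost money
    if not resolved:
        return {"outcome_success_rate": 0.0,
                "cost_per_resolved_objective": float("inf"),
                "step_ratio_vs_baseline": float("nan")}
    avg_steps = sum(s.steps for s in resolved) / len(resolved)
    return {
        "outcome_success_rate": len(resolved) / len(sessions),
        "cost_per_resolved_objective": total_cost / len(resolved),
        "step_ratio_vs_baseline": avg_steps / baseline_steps,  # below 1.0 = fewer steps than today
    }


if __name__ == "__main__":
    log = [Session(True, 0.50, 4), Session(True, 0.30, 6), Session(False, 1.20, 12)]
    print(outcome_metrics(log, baseline_steps=10))
```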

Q: Should I build my own agent framework or use an existing one?

A: In 2026, building from scratch is rarely necessary. Frameworks like LangGraph, CrewAI, and Microsoft AutoGen have matured significantly. Use them for the “plumbing” and focus your engineering talent on domain-specific logic and evaluations.

Q: What is the “Bag of Agents” problem?

A: It’s an anti-pattern where too many agents are deployed without a clear hierarchy, leading to excessive communication overhead (“chatter”) and a high probability of state-sync errors.

Q: Is local LLM hosting necessary for agentic projects?

A: For many 2026 leaders, yes. Using small, local models (like Llama 3.1 8B) for “auditor” or “worker” roles significantly reduces latency and cost, while keeping sensitive “reasoning traces” on-premises.

References

- Gartner, Inc. (2025). Predicts 2026: The Rise of the Agentic Enterprise. [Official Report].

- Microsoft Research. (2024). AutoGen: Enabling Next-Generation Large Language Model Applications via Multi-Agent Conversation.

- OpenAI. (2025). Scaling Law for Agentic Reasoning: The o1 Series Documentation.

- Anthropic. (2025). Model Context Protocol (MCP) Specification v1.0. [Technical Docs].

- Deloitte AI Institute. (2026). The 2026 State of Generative AI in the Enterprise.

- Stanford HAI. (2025). The compounding error problem in recursive autonomous agents. [Academic Paper].

- IEEE. (2026). Security Standards for Autonomous Digital Identities.

- CrewAI. (2025). Sociology of AI: Role-Based Multi-Agent Design Patterns.

- LangChain. (2025). LangGraph: State Management in Cyclical Agent Workflows.

- Forbes Tech Council. (2026). Why Identity is the New Security Boundary for AI Agents.