The evolution of the automotive industry has reached a pivotal juncture. As of March 2026, the dream of fully autonomous vehicles (AVs) is no longer confined to science fiction or restricted testing zones. However, the transition from Level 2 (Partial Automation) to Level 4 (High Automation) and Level 5 (Full Automation) has necessitated a fundamental shift in how data is processed. This shift is defined by Edge Intelligence (EI).

In simple terms, Edge Intelligence is the deployment of machine learning algorithms and data processing capabilities directly onto the “edge” of the network—in this case, the vehicle itself—rather than relying on a centralized cloud server. In the context of autonomous driving, this allows for split-second decision-making that is vital for passenger safety and navigational efficiency.

Key Takeaways

- Latency is the Enemy: For a vehicle traveling at highway speeds, a 100-millisecond delay in processing can be the difference between a safe stop and a collision. Edge Intelligence removes the network round trip and keeps local processing time in the range of a few milliseconds.

- Bandwidth Efficiency: Modern AVs generate terabytes of data daily. Sending all this to the cloud is economically and technically unfeasible; EI filters and processes data locally.

- Enhanced Privacy: By keeping sensitive sensor data on-board, Edge Intelligence limits the data that can be intercepted in transit and protects passenger privacy.

- Reliability in Dead Zones: Autonomous vehicles must function in tunnels, rural areas, and “urban canyons” where 5G or satellite connectivity may be spotty.

Who This Article Is For

This deep dive is designed for automotive engineers, software developers, AI researchers, and policy makers who need to understand the technical architecture, safety implications, and future trajectory of edge-native autonomous systems.

1. Defining Edge Intelligence in the 2026 Landscape

To understand the role of Edge Intelligence (EI), we must first distinguish it from traditional Edge Computing. While Edge Computing provides the infrastructure (the hardware at the edge), Edge Intelligence refers to the algorithms and AI models optimized to run on that hardware.

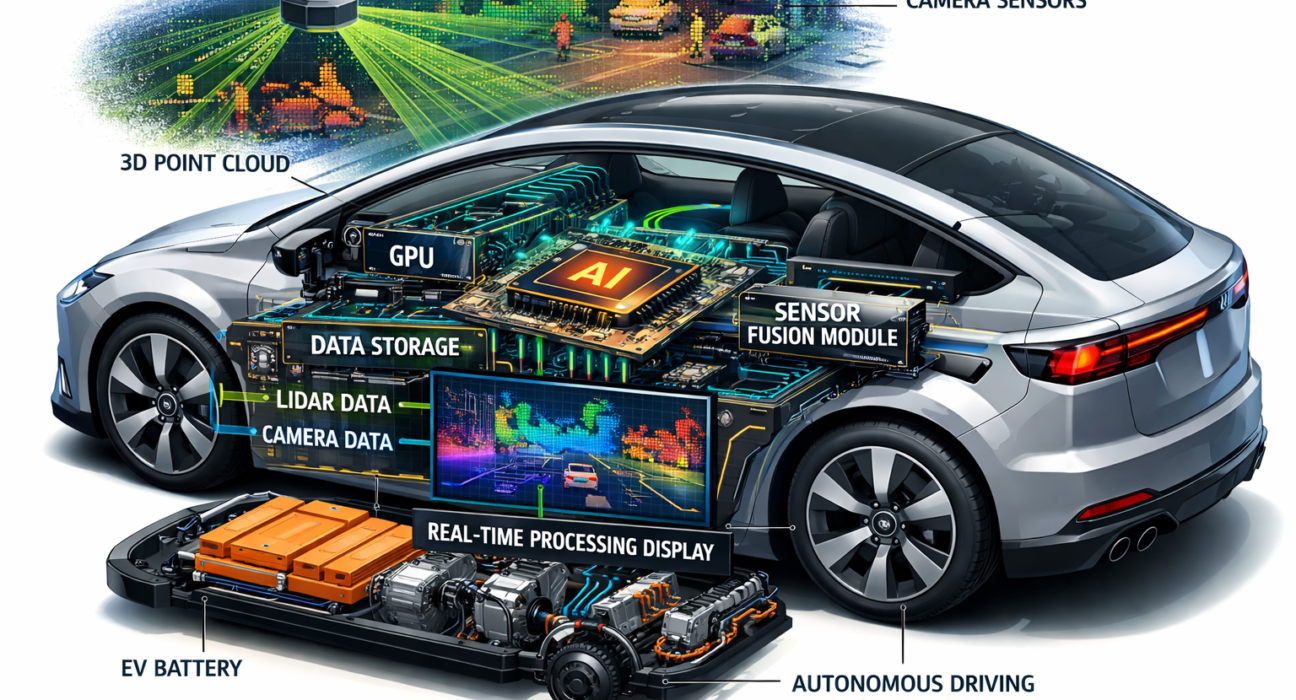

As of 2026, EI in vehicles is characterized by “On-device AI.” This involves a sophisticated stack of specialized hardware, such as Neural Processing Units (NPUs) and Graphics Processing Units (GPUs), working in tandem with lightweight, quantized neural networks. The goal is to perform inference—the process of the AI making a prediction based on new data—locally, without needing a “handshake” from a remote server.

The Shift from Cloud-Centric to Edge-Native

In the early 2020s, many autonomous features relied on “Cloud-to-Vehicle” communication for heavy lifting, such as complex path planning or global map updates. However, as the number of sensors per vehicle increased (often exceeding 20 per car), the “pipe” to the cloud became a bottleneck.

Today, the common pattern is a tiered model that adds a Fog Computing layer between the vehicle and the cloud. The vehicle handles immediate safety-critical tasks (Edge), local neighborhood infrastructure handles traffic coordination (Fog), and the cloud handles long-term model training and global map management.

2. The Critical Need: Latency and Real-Time Processing

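The primary driver for Edge Intelligence is physical: signal propagation is bounded by the speed of light, and shared networks add congestion and jitter on top of that. Autonomous driving operates in the realm of Hard Real-Time Systems, where a result that arrives after its deadline counts as a failure.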

The Physics of Danger

Consider a vehicle traveling at 100 km/h (approximately 27.8 meters per second).

If a pedestrian steps into the road:

- Sensor Capture: 10ms

- Cloud Transmission (Round Trip): 50ms–150ms (depending on 5G/6G congestion)

- Cloud Processing: 20ms

- Actuation (Braking): 10ms

In a cloud-dependent scenario, these steps add up to 90 to 190 ms, so the vehicle travels roughly 2.5 to 5.3 meters before the brakes are even applied. With Edge Intelligence, the transmission delay is eliminated. The processing happens locally in roughly 5-10 ms, for a total of 25 to 30 ms, allowing the vehicle to react in less than 1 meter of travel distance.
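The arithmetic behind these distances can be checked with a short Python sketch. It uses only the illustrative latency figures from the list above and assumes the vehicle holds a constant speed during the reaction window; the function names are hypothetical.

```python
SPEED_KMH = 100.0  # speed used in the example above


def total_latency_ms(sensor: float, comm: float, proc: float, act: float) -> float:
    """Sum the four latency stages (all in milliseconds)."""
    return sensor + comm + proc + act


def distance_travelled_m(speed_kmh: float, latency_ms: float) -> float:
    """Distance covered at constant speed while the system is still reacting."""
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0  # km/h -> m/s
    return speed_m_per_s * latency_ms / 1000.0   # ms -> s


# Cloud path: best and worst round-trip times from the list above.
cloud_best = total_latency_ms(sensor=10, comm=50, proc=20, act=10)
cloud_worst = total_latency_ms(sensor=10, comm=150, proc=20, act=10)
# Edge path: no network hop, local inference at the slow end of 5-10 ms.
edge_worst = total_latency_ms(sensor=10, comm=0, proc=10, act=10)

for label, latency in [
    ("cloud (best)", cloud_best),
    ("cloud (worst)", cloud_worst),
    ("edge (worst)", edge_worst),
]:
    metres = distance_travelled_m(SPEED_KMH, latency)
    print(f"{label}: {latency:.0f} ms -> {metres:.2f} m")
```

With these inputs the cloud path covers about 2.5 m in the best case and about 5.3 m in the worst case, while the edge path stays under 1 m.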

Mathematical Modeling of Latency

In technical terms, the total latency $L_{total}$ can be expressed as:

$$L_{total} = L_{sensor} + L_{proc} + L_{comm} + L_{act}$$

Where:

- $L_{sensor}$ is the data acquisition time.

- $L_{proc}$ is the inference time of the AI model.

- $L_{comm}$ is the communication delay (which EI aims to minimize toward zero).

- $L_{act}$ is the mechanical actuation time.

By moving $L_{proc}$ to the edge and reducing $L_{comm}$ to internal bus latency (like Automotive Ethernet), the distance traveled before the vehicle responds shrinks in direct proportion to the latency removed, which widens the safety margin.
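As a worked form of the latency model, assume the vehicle holds a constant speed $v$ during the reaction window (a simplification added here, along with the symbols $v$, $d_{react}$ and $\Delta d$). The distance covered before the vehicle responds is then:

$$d_{react} = v \cdot L_{total} = v \, (L_{sensor} + L_{proc} + L_{comm} + L_{act})$$

and removing the communication term saves:

$$\Delta d = v \cdot L_{comm}$$

At $v \approx 27.8$ m/s and $L_{comm} = 100$ ms, $\Delta d \approx 2.8$ m. Each millisecond removed from the pipeline is worth about 2.8 cm of road at that speed.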

3. Hardware Architectures Enabling the Edge

Edge Intelligence is only as good as the silicon it runs on. In 2026, the market has moved away from general-purpose CPUs toward highly specialized System-on-Chips (SoCs).

The Rise of NPUs and TPUs

Companies like NVIDIA (with the Drive Thor platform), Qualcomm (Snapdragon Ride), and Tesla (FSD Chip 3) have pioneered chips with dedicated silicon for matrix multiplication—the core math of deep learning. These chips must balance:

- TOPS (Tera Operations Per Second): Compute platforms aimed at high-automation driving typically target roughly 500 to 2,000+ TOPS.

- Thermal Design Power (TDP): High-performance chips generate heat; EI hardware must be efficient to avoid draining the EV battery or requiring complex liquid cooling.

- Redundancy: To meet ASIL-D (Automotive Safety Integrity Level D, the most stringent level defined in ISO 26262), these chips often feature “lock-step” architectures where two cores perform the same calculation and compare results to detect hardware faults.
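The lock-step idea in the last bullet can be illustrated with a software analogy. Real lock-step is implemented in silicon, cycle by cycle, so the sketch below only mirrors the compare-and-flag logic; `run_in_lockstep`, `checksum` and `faulty_checksum` are hypothetical names, and the bit flip stands in for a hardware fault.

```python
from typing import Callable, Sequence


class LockstepMismatch(RuntimeError):
    """Raised when the two redundant executions disagree."""


def run_in_lockstep(
    primary: Callable[[Sequence[int]], int],
    shadow: Callable[[Sequence[int]], int],
    inputs: Sequence[int],
) -> int:
    """Run the same computation twice and accept the result only if both agree."""
    result_a = primary(inputs)
    result_b = shadow(inputs)
    if result_a != result_b:
        raise LockstepMismatch(f"primary={result_a} shadow={result_b}")
    return result_a


def checksum(values: Sequence[int]) -> int:
    """Stand-in for the real workload; integers keep the comparison exact."""
    return sum(v * 3 for v in values)


def faulty_checksum(values: Sequence[int]) -> int:
    """Same workload with the lowest bit flipped to emulate a hardware fault."""
    return checksum(values) ^ 1


print(run_in_lockstep(checksum, checksum, [1, 2, 3]))
try:
    run_in_lockstep(checksum, faulty_checksum, [1, 2, 3])
except LockstepMismatch as err:
    print("fault detected:", err)
```

A mismatch only reveals that one of the two executions is wrong, not which one, so the usual reaction is to enter a safe state rather than pick a winner.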

Memory Bottlenecks

Edge Intelligence also requires high memory bandwidth. Moving the weights of a large transformer model (often used in modern computer vision) from memory to the processor consumes time and energy. 2026 architectures utilize “In-Memory Computing” or massive L3 caches to keep the data as close to the logic gates as possible.

4. Sensor Fusion: The Edge’s Primary Input

An autonomous vehicle “sees” through a suite of sensors. Edge Intelligence is the “brain” that merges these disparate signals into a single, cohesive environmental model—a process known as Sensor Fusion.

LiDAR vs. Camera vs. Radar

- Cameras: Provide high-resolution texture and color but struggle with depth and low light.

- LiDAR (Light Detection and Ranging): Creates a precise 3D point cloud but is expensive and can be affected by heavy fog or snow.

- Radar: Excellent at detecting velocity and working in all weather, but lacks spatial resolution.

Late Fusion vs. Early Fusion

In Early Fusion, raw data from all sensors is combined before being processed by a single AI model. This requires massive edge bandwidth.

In Late Fusion, each sensor has its own edge-processing unit that detects objects, and the “results” are then fused. Late fusion is more common in current edge architectures because it provides redundancy; if the LiDAR edge-node fails, the Camera edge-node still provides object detection.
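A minimal late-fusion sketch follows, assuming each sensor pipeline already outputs object detections in a shared vehicle coordinate frame. The `Detection` type, the 1.5 m matching gate and the confidence-combination rule are illustrative assumptions, not a production tracker.

```python
from dataclasses import dataclass
from math import hypot
from typing import List


@dataclass
class Detection:
    sensor: str
    x: float           # metres ahead of the vehicle
    y: float           # metres to the left (+) or right (-)
    confidence: float  # 0.0 to 1.0


def late_fuse(camera: List[Detection], lidar: List[Detection],
              gate_m: float = 1.5) -> List[Detection]:
    """Merge per-sensor detections that fall within gate_m of each other."""
    fused: List[Detection] = []
    unmatched_lidar = list(lidar)
    for cam in camera:
        match = next(
            (lid for lid in unmatched_lidar
             if hypot(cam.x - lid.x, cam.y - lid.y) <= gate_m),
            None,
        )
        if match is None:
            fused.append(cam)  # camera-only object is kept as-is
            continue
        unmatched_lidar.remove(match)
        weight = cam.confidence + match.confidence
        if weight == 0.0:
            fused.append(cam)
            continue
        fused.append(Detection(
            sensor="camera+lidar",
            # confidence-weighted average position
            x=(cam.x * cam.confidence + match.x * match.confidence) / weight,
            y=(cam.y * cam.confidence + match.y * match.confidence) / weight,
            # treat the two sensors as independent evidence (an assumption)
            confidence=1.0 - (1.0 - cam.confidence) * (1.0 - match.confidence),
        ))
    fused.extend(unmatched_lidar)  # LiDAR-only objects are kept too
    return fused


camera_dets = [Detection("camera", 20.0, 0.0, 0.8)]
lidar_dets = [Detection("lidar", 20.4, 0.2, 0.9), Detection("lidar", 50.0, -3.0, 0.7)]
for det in late_fuse(camera_dets, lidar_dets):
    print(det)
```

The redundancy property shows up when one input is empty: `late_fuse(camera_dets, [])` still returns the camera detections unchanged.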

5. V2X: The Collaborative Edge

Edge Intelligence doesn’t mean the vehicle is an island. V2X (Vehicle-to-Everything) communication allows the vehicle’s edge node to talk to other edge nodes.

V2I (Vehicle-to-Infrastructure)

Smart traffic lights and roadside units (RSUs) act as “Edge Servers.” If a pedestrian is around a blind corner, the RSU detects them and broadcasts the information to the vehicle’s edge system. The vehicle processes this “external” data as if it were its own sensor input.

V2V (Vehicle-to-Vehicle)

Vehicles can share “intent” data. If a lead vehicle detects a patch of ice, its edge intelligence broadcasts a “friction coefficient update” to all trailing vehicles. This creates a distributed network of intelligence where the “Edge” is the entire roadway.
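To make the “friction coefficient update” concrete, here is a sketch of what such a message and its receive-side sanity check might look like. The `FrictionUpdate` fields, the JSON encoding and the freshness and plausibility limits are all hypothetical; production V2X stacks use standardized message sets (for example SAE J2735 or the ETSI CAM/DENM messages) rather than ad hoc JSON.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class FrictionUpdate:
    sender_id: str
    latitude: float
    longitude: float
    friction_coefficient: float  # estimated tyre-road friction
    timestamp_s: float           # seconds since epoch at measurement time


def encode(msg: FrictionUpdate) -> bytes:
    """Serialize the message for broadcast."""
    return json.dumps(asdict(msg), sort_keys=True).encode("utf-8")


def decode(payload: bytes) -> FrictionUpdate:
    """Rebuild the message on the receiving vehicle."""
    return FrictionUpdate(**json.loads(payload.decode("utf-8")))


def is_usable(msg: FrictionUpdate, now_s: float, max_age_s: float = 2.0) -> bool:
    """Accept only fresh messages with a physically plausible value."""
    fresh = 0.0 <= now_s - msg.timestamp_s <= max_age_s
    plausible = 0.0 <= msg.friction_coefficient <= 1.5
    return fresh and plausible


sent = FrictionUpdate("veh-123", 48.1372, 11.5756, 0.15, timestamp_s=1000.0)
received = decode(encode(sent))
print(received, is_usable(received, now_s=1000.5))
```

The receive-side check matters because a trailing vehicle should treat external data as a hint with an expiry time, not as ground truth.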

6. AI Model Optimization: Making Intelligence “Fit”

You cannot simply take a massive data-center AI model and “drop” it into a car. It would exceed the memory and power limits. Edge Intelligence relies on Model Compression.

Quantization

Most AI models are trained using 32-bit floating-point numbers. Quantization reduces these to 8-bit or even 4-bit integers (INT8 or INT4). While there is a slight “accuracy drop,” the gains are substantial: INT8 weights occupy a quarter of the memory of 32-bit floats, and integer arithmetic runs faster on dedicated accelerators.
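A minimal sketch of symmetric per-tensor INT8 quantization is shown below in plain Python. This is one common scheme among several (others use zero points or per-channel scales), and the function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using a single scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, worst_error)
```

The rounding error per weight is bounded by half the scale factor, which is the source of the “accuracy drop” mentioned above.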

Pruning and Knowledge Distillation

- Pruning: Removing “neurons” from the neural network that don’t contribute significantly to the output.

- Knowledge Distillation: Training a small “student” model to mimic the behavior of a massive “teacher” model. The student is then deployed to the vehicle’s edge.
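Both techniques can be sketched in a few lines. The pruning function below zeroes individual low-magnitude weights (unstructured pruning, a simpler variant than removing whole neurons), and the distillation function computes the KL divergence between temperature-softened teacher and student outputs. Names and default values are illustrative, and the temperature-squared scaling used in some formulations is omitted.

```python
import math
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(len(weights) * sparsity)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax with a temperature parameter."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: List[float], teacher_logits: List[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on temperature-softened distributions."""
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    return sum(t * math.log(t / s) for t, s in zip(teacher, student) if t > 0)


print(magnitude_prune([0.1, -0.9, 0.05, 0.4], sparsity=0.5))
print(distillation_loss([2.0, 0.5, 0.1], [3.0, 0.2, 0.1]))
```

During training, the student minimizes this loss (usually combined with the ordinary task loss), so it learns the teacher's relative preferences between classes and not only the top answer.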

SLAM (Simultaneous Localization and Mapping)

At the edge, the vehicle must perform SLAM. It builds a map of its environment while simultaneously tracking its location within that map. As of 2026, EI-driven SLAM uses “Semantic Mapping,” where the vehicle doesn’t just see a “3D object,” but understands it is a “parked car with a door slightly ajar.”

7. Security and Privacy at the Edge

With great processing power comes great risk. Edge Intelligence is a double-edged sword for cybersecurity.

The Security Advantage

By processing data locally, the vehicle doesn’t have to transmit raw video feeds of passengers or the environment to the cloud. This limits the data that can be intercepted in transit.

The Security Risk: Model Poisoning

If an attacker can access the vehicle’s edge hardware, they could potentially “poison” the local AI model. In 2026, hardware-based Root of Trust (RoT) and Trusted Execution Environments (TEEs) are used to ensure that the AI models running at the edge have not been tampered with.
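One step in such a tamper check can be sketched as a digest comparison before the model is loaded. This is a simplified illustration: in a real vehicle the expected digest would come from a signed manifest verified against keys anchored in the hardware Root of Trust, which is assumed here and not shown. The function names are hypothetical.

```python
import hashlib
import hmac


def sha256_hex(blob: bytes) -> str:
    """Hex-encoded SHA-256 digest of the model file contents."""
    return hashlib.sha256(blob).hexdigest()


def verify_model(blob: bytes, expected_digest_hex: str) -> bool:
    """Constant-time comparison of the computed and expected digests."""
    return hmac.compare_digest(sha256_hex(blob), expected_digest_hex)


# Placeholder bytes standing in for a model file.
model_blob = b"example-model-weights-v1"
# Assumed to be delivered through a trusted, signed channel.
trusted_digest = sha256_hex(model_blob)

print(verify_model(model_blob, trusted_digest))
print(verify_model(model_blob + b"\x00", trusted_digest))
```

A single appended byte changes the digest, so the second check fails and the loader can refuse to run the modified model.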

Federated Learning

Federated learning lets a fleet improve a shared model without pooling raw data. Instead of sending raw data to the cloud to train a better model, the vehicle’s edge node trains a mini-update based on its local experience. It then sends only the weight updates (the mathematical adjustments) to a central server. The server aggregates the updates from millions of cars and sends an updated, smarter model back to every vehicle via an OTA (Over-The-Air) update.
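The server-side aggregation step can be sketched as a weighted average in the style of federated averaging. The sketch assumes each vehicle reports a weight delta together with the number of local samples it trained on; the function names and the sample-count weighting are illustrative assumptions.

```python
from typing import List, Tuple


def federated_average(updates: List[Tuple[List[float], int]]) -> List[float]:
    """Average weight deltas, weighting each vehicle by its sample count."""
    total_samples = sum(n for _, n in updates)
    if total_samples <= 0:
        raise ValueError("need at least one training sample")
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for delta, n in updates:
        if len(delta) != dim:
            raise ValueError("all updates must have the same shape")
        for i, d in enumerate(delta):
            averaged[i] += d * n / total_samples
    return averaged


def apply_update(global_weights: List[float], delta: List[float]) -> List[float]:
    """Produce the next global model that would be shipped over the air."""
    return [w + d for w, d in zip(global_weights, delta)]


# Two vehicles: the second trained on three times as much local data.
vehicle_updates = [([1.0, 0.0], 1), ([0.0, 1.0], 3)]
avg_delta = federated_average(vehicle_updates)
new_global = apply_update([10.0, 10.0], avg_delta)
print(avg_delta, new_global)
```

Only the deltas and sample counts leave the vehicle in this scheme; the raw sensor data used for local training stays on board.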

8. Regulatory and Safety Standards

Autonomous driving is a matter of public safety. Therefore, Edge Intelligence must adhere to strict international standards.

ISO 26262 and ASIL

The International Organization for Standardization (ISO) 26262 defines the functional safety of road vehicles. Edge Intelligence systems must reach ASIL-D—the highest level of safety integrity. This requires:

- Failure Mode and Effects Analysis (FMEA): Systematically enumerating the ways the system could fail and the effect of each failure.

- Graceful Degradation: If the edge AI detects a “low confidence” score in its perception, it must be able to safely pull the car over or hand back control to a human (in Level 3 systems).
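The graceful-degradation rule can be sketched as a small mode selector. The confidence threshold, the three-frame window and the mode names are hypothetical values chosen for illustration; a real system would derive them from its safety case.

```python
from enum import Enum
from typing import Sequence


class Mode(Enum):
    NOMINAL = "nominal"
    REQUEST_TAKEOVER = "request_takeover"            # Level 3: hand back to the human
    MINIMAL_RISK_MANEUVER = "minimal_risk_maneuver"  # pull over and stop safely


def select_mode(recent_confidences: Sequence[float], driver_available: bool,
                threshold: float = 0.6, window: int = 3) -> Mode:
    """Degrade only after `window` consecutive low-confidence frames."""
    if len(recent_confidences) < window:
        return Mode.NOMINAL
    latest = recent_confidences[-window:]
    if all(c < threshold for c in latest):
        if driver_available:
            return Mode.REQUEST_TAKEOVER
        return Mode.MINIMAL_RISK_MANEUVER
    return Mode.NOMINAL


print(select_mode([0.9, 0.5, 0.4, 0.3], driver_available=True))
print(select_mode([0.9, 0.5, 0.4, 0.3], driver_available=False))
print(select_mode([0.5, 0.4, 0.9], driver_available=False))
```

Requiring several consecutive low-confidence frames avoids reacting to a single noisy frame, at the cost of a slightly later response.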

SOTIF (Safety of the Intended Functionality)

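Unlike traditional software, AI can fail even without a “bug”: it might simply “misinterpret” a strange visual scene (like a person in a dinosaur costume). ISO 21448 (SOTIF) addresses these “unknown unsafe” scenarios by requiring developers to systematically identify the conditions that trigger such misbehavior and to shrink the set of unknown hazardous scenarios through analysis, simulation, and real-world validation, including large libraries of “corner cases.”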

Safety Disclaimer: As of March 2026, while Level 4 autonomous driving is available in select geo-fenced areas, always maintain situational awareness. Current edge intelligence systems are highly advanced but not infallible. Local traffic laws regarding “hands-on” requirements vary by jurisdiction.

9. Common Mistakes in Implementing Edge Intelligence

Even with advanced technology, several pitfalls remain for developers and manufacturers:

- Over-Reliance on a Single Sensor: Relying too heavily on cameras (the “Tesla approach”) without redundant LiDAR/Radar at the edge can lead to “phantom braking” or missed obstacles in high-contrast lighting.

- Thermal Throttling: Designing a high-TOPS system without adequate cooling. When the chip gets too hot, it slows down (throttles), increasing latency at the exact moment the vehicle might be in a complex, high-speed environment.

- Static Edge Models: Deploying a model and never updating it. The “real world” is dynamic. Edge intelligence must be supported by robust OTA update pipelines to account for new road signs, vehicle types, or environmental conditions.

- Ignoring Edge-to-Cloud Symmetry: Failing to ensure that the environment used to train the AI (Cloud/Data Center) matches the environment where it runs (Edge/Vehicle). Even small differences in math libraries can lead to “inference drift.”
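The last pitfall can be caught with a parity check that runs the same inputs through the reference (training-side) model and the deployed edge build, then compares outputs. The sketch below assumes both outputs are available as lists of floats; the function names and the tolerance are illustrative.

```python
from typing import Sequence


def max_abs_diff(reference: Sequence[float], candidate: Sequence[float]) -> float:
    """Largest element-wise gap between two output vectors."""
    if len(reference) != len(candidate):
        raise ValueError("outputs must have the same length")
    return max((abs(r - c) for r, c in zip(reference, candidate)), default=0.0)


def outputs_match(reference: Sequence[float], candidate: Sequence[float],
                  tolerance: float = 1e-3) -> bool:
    """True when the edge build stays within tolerance of the reference."""
    return max_abs_diff(reference, candidate) <= tolerance


# Hypothetical class probabilities for one test frame.
cloud_output = [0.10, 0.85, 0.05]
edge_output = [0.1004, 0.8497, 0.0499]
print(outputs_match(cloud_output, edge_output))
```

Running such a check over a fixed set of test frames on every model or toolchain change makes drift visible before the model reaches vehicles.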

10. The Economic Impact of Edge Intelligence

The shift to Edge Intelligence is rewriting the economics of the car. In 2026, the value of a car is determined more by its “Compute per Watt” than its horsepower.

The Software-Defined Vehicle (SDV)

Manufacturers are now software companies. By investing in powerful edge hardware upfront, they can sell “feature-as-a-service” updates. A car owner might pay a monthly subscription to “unlock” Level 4 highway driving, which is delivered as a new, more efficient AI model to the edge processor.

Reducing Infrastructure Costs

If every car has powerful Edge Intelligence, smart cities don’t need to install supercomputers at every intersection. The “intelligence” is distributed, reducing the capital expenditure (CapEx) for municipalities.

11. Future Outlook: Beyond 2026

Where do we go from here? The next frontier is Neuromorphic Computing—chips that mimic the human brain’s architecture more closely than current GPUs. These chips promise to run Edge Intelligence at a fraction of the power, potentially allowing even the smallest electric micro-mobility vehicles (like e-bikes) to have autonomous safety features.

Furthermore, 6G Connectivity (currently in early pilot phases in 2026) is targeting sub-millisecond latency, potentially allowing for “Distributed Edge Intelligence,” where a vehicle’s AI can seamlessly use the spare processing power of a nearby vehicle to solve a complex calculation.

Conclusion: The Road Ahead

Edge Intelligence is the unsung hero of the autonomous revolution. It addresses three of the most significant hurdles facing the industry: latency, reliability, and privacy. By moving the “brain” of the car from a distant data center to the vehicle’s own chassis, we have created a system capable of making life-saving decisions in the blink of an eye.

However, the journey is far from over. As AI models become more complex and sensor resolutions increase, the demand for more efficient edge hardware and more robust optimization techniques will only grow. For developers and stakeholders, the focus must remain on safety-first architectures and collaborative intelligence.

Next Steps for You:

If you are an engineer or a decision-maker, your next step should be auditing your current data pipeline.

- Evaluate: How much of your data processing is cloud-dependent?

- Optimize: Can you implement quantization or pruning to move more logic to the edge?

- Secure: Does your edge hardware have a hardware-based Root of Trust?

The future of driving isn’t just “connected”—it’s “intelligent at the edge.”

FAQs

1. What is the difference between Edge Computing and Edge Intelligence?

Edge Computing is the physical infrastructure (servers, chips, and sensors) located near the data source. Edge Intelligence is the specific application of AI and machine learning within that infrastructure to perform local inference and decision-making.

2. Can autonomous cars work without an internet connection?

Yes, thanks to Edge Intelligence. While features like live traffic updates or global map refreshes require a connection, the core safety and navigation systems are designed to run locally on the vehicle’s edge hardware to ensure safety in “dead zones.”

3. How does Edge Intelligence improve battery life in EVs?

By processing data locally and using optimized AI models (like INT8 quantization), the system reduces the power needed for constant high-bandwidth data transmission. Specialized NPUs are also significantly more power-efficient than general-purpose CPUs.

4. Is Edge Intelligence safe from hackers?

It is generally more secure because raw data stays on the device. However, it introduces new risks such as tampering with, or poisoning, the on-board model. Security is maintained through a hardware-based Root of Trust and Trusted Execution Environments (TEEs) that ensure only verified AI models can run.

5. What role does 5G/6G play if the processing is at the edge?

5G/6G acts as the “connective tissue” for V2X. While the vehicle makes its own safety decisions, the high-speed network allows it to receive information from other cars and infrastructure, extending its “vision” beyond its own physical sensors.
