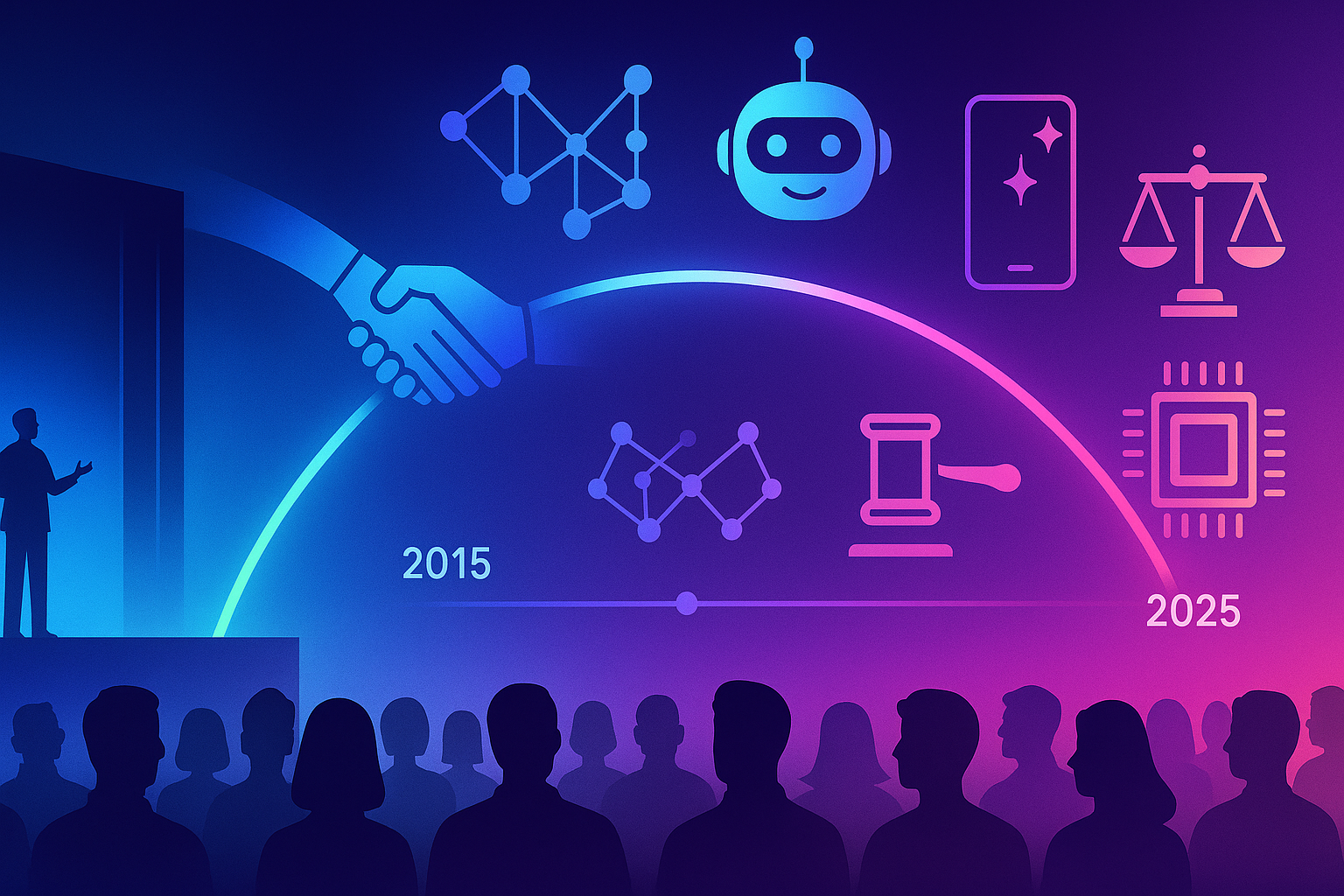

If you’re chasing an AI role right now (August 2025), you’re entering a market where demand is high, standards are higher, and the best teams move fast. This guide spotlights the top eight companies hiring AI talent right now, what they look for, how to position yourself, and the exact steps to get in the door. You’ll walk away with a four-week plan, metrics to track, and practical tips to avoid common mistakes—so you can spend less time guessing and more time interviewing.

Key takeaways

- Right now is hot: The eight companies below are actively posting AI roles across research, engineering, product, and infra.

- Signals matter: A focused portfolio, clear problem ownership, and evidence of impact trump generic credentials.

- Tailor or fail: Role-by-role alignment (skills, domain, stack) dramatically improves your reply and interview rates.

- Ship beats hype: A single, real, shipped project that matches the team’s problem area is the most persuasive “credential.”

- Track your funnel: Measure apply→recruiter→screen→onsite→offer; optimize weak links weekly.

OpenAI

What it is & why it’s compelling

A frontier AI lab building and deploying foundation models and products (consumer apps, developer platform, and enterprise). Roles span research, applied engineering, product, security, data, and go-to-market. The organization is hiring at scale across multiple functions and locations, which means multiple entry vectors for strong candidates.

Requirements & prerequisites

- Core skills: Python, distributed training or inference systems, modern deep learning frameworks, evaluation and safety methods (for many roles).

- Evidence of impact: A shipped model, library, or feature; measurable outcomes (latency improvement, quality lift, cost savings).

- Communication: Clear writing, design docs, and trade-off reasoning.

- Low-cost alternatives: Open datasets, a single GPU cloud instance, and community compute programs to build and publish a small but polished demo.

Step-by-step (beginner-friendly)

- Pick one problem aligned to an open role (e.g., evals, inference infra, red-teaming).

- Ship a focused artifact (small repo, clear README, demo notebook or minimal web UI).

- Write a 1-page narrative: problem, constraints, choices, metrics, results, hard lessons.

- Tailor your resume to the role: three bullets per job—problem → action → measurable result.

- Apply and reference the artifact in the first paragraph of your application.

Beginner modifications & progressions

- Simplify: Reproduce a published benchmark with a lean baseline.

- Scale up: Add logging/telemetry, A/B the baseline against your variant, report regression-safe gains.

Recommended cadence & metrics

- Cadence: 1 high-quality application/week; 1 portfolio artifact/month.

- KPIs: Reply rate (>20%), recruiter screen rate (>10% of total apps), coding screen pass rate (>40%).

Safety, caveats & common mistakes

- Overselling “AI expertise” without tangible work.

- Submitting a CV without role alignment.

- Neglecting reliability and unit tests in infra roles.

Mini-plan (example)

- This weekend: Build a lightweight eval harness comparing two models on a narrow task.

- Next week: Add uncertainty estimates and a regression dashboard; apply to the most relevant role and cite the repo.

Google DeepMind

What it is & why it’s compelling

A research-first organization operating across multiple hubs (London, Mountain View, Zurich, etc.) with openings spanning research, engineering, science, and product. Active listings include both cutting-edge research roles and builder roles bringing models into production at Google scale.

Requirements & prerequisites

- Core skills: Research chops (if applying research), or production engineering and large-scale infra (if applying engineering).

- Public artifacts: Papers, preprints, open-source repos, robust demos, or internal write-ups with de-identified evidence.

- Low-cost alternatives: Narrow but rigorous replications with ablations and error analysis.

Step-by-step (beginner-friendly)

- Choose a niche (e.g., multimodal inference, training algorithms, safety evals).

- Replicate a result on a reduced dataset; document error modes and mitigations.

- Open-source the code and a short “what didn’t work” post.

- Apply with a problem-solution narrative matching the target team’s remit.

Beginner modifications & progressions

- Mod: Swap heavy pretraining for prompt-engineering + small adapters.

- Progress: Move to distributed fine-tuning and dataset curation at modest scale.

Cadence & metrics

- Cadence: 1 substantial experiment/month; submit preprints or detailed tech notes.

- KPIs: Recruiter response rate, interview depth (research talk invited?), paper/code review quality.

Safety & pitfalls

- Presenting a “toy” project without rigor.

- Underestimating the weight of clean ablation studies and error bars.

Mini-plan

- Week 1: Reproduce a small multimodal evaluation with clear plots.

- Week 2: Add a robust baseline and a short interpretability analysis; submit.

Microsoft (including Microsoft Research and consumer/business AI teams)

What it is & why it’s compelling

A product-heavy ecosystem with deep investment in AI across the stack: models, infra, Copilot experiences, Azure, and Research. Fresh job postings cover applied science, platform, safety/post-training, and product roles in multiple hubs (Redmond, Mountain View, London, NYC).

Requirements & prerequisites

- Core skills: For productized AI, emphasize reliability, latency, CI/CD for models, and telemetry. For research, emphasize publications and novel methods.

- Partnering: Demonstrate collaboration with design, security, and compliance for enterprise settings.

- Low-cost alternatives: Build a small Copilot-style agent with logging, guardrails, and an evaluation loop.

Step-by-step

- Pick a Copilot-like use case (e.g., spreadsheet or docs assistant).

- Instrument it: prompts → responses → guardrail outcomes → human feedback loop.

- Report metrics: task success rate, latency, hallucination rate with clear thresholds.

- Apply to the best-fit role; highlight reliability engineering and safety post-training exposure.

Beginner modifications & progressions

- Mod: Start with a thin wrapper over an API plus a deterministic fallback.

- Progress: Add retrieval, function calling, streaming, and safety filters; run a tiny user study.

Cadence & metrics

- Cadence: 1 applied artifact every 2–3 weeks; keep an experiment log.

- KPIs: Technical screen pass rate, system design feedback (“clear trade-offs?”), onsite conversion.

Safety & pitfalls

- Ignoring enterprise constraints (PII, audit logs, RBAC).

- Overfitting to benchmarks without real-world telemetry.

Mini-plan

- This week: Build an agent with a safety checklist and offline evals.

- Next week: Add guardrails and structured logging; apply.

Meta (including core research and product AI teams)

What it is & why it’s compelling

A massive platform footprint, open-source model leadership, and multiple internal AI efforts (foundation models, infra, on-device, content understanding). The company is competing aggressively for senior AI talent and continues to post AI-related roles across disciplines.

Requirements & prerequisites

- Core skills: Large-scale training/inference, systems optimization, evaluation, and safety.

- Open-source and community: Contributions carry real weight.

- Low-cost alternatives: Target a narrow improvement to an open model (e.g., fine-tune, quantize, or evaluate) and show real wins.

Step-by-step

- Select an open model workflow relevant to the team you’re targeting.

- Demonstrate a measured improvement (throughput, accuracy, or cost) using disciplined evals.

- Publish results with reproducible scripts; add a concise blog note.

- Apply with the artifact and a crisp, three-bullet “impact summary.”

Beginner modifications & progressions

- Mod: Optimize inference with basic quantization and caching.

- Progress: Add distillation or data curation; benchmark on real-world tasks.

Cadence & metrics

- Cadence: 1 open contribution every 4–6 weeks.

- KPIs: Maintainer feedback or merges; recruiter reply and screen rates.

Safety & pitfalls

- Hand-waving about scale; show realistic cost and reliability math.

- Submitting without concrete telemetry.

Mini-plan

- Two-week sprint: Optimize a small open model for a single downstream task, publish the before/after metrics, then submit to a matching role.

Amazon (including AWS and device orgs leveraging AI)

What it is & why it’s compelling

A breadth of AI problems: commerce search and recommendations, supply chain, fraud, speech/vision on devices, and especially cloud AI. New postings continue to appear across applied science and ML engineering in multiple geographies.

Requirements & prerequisites

- Core skills: End-to-end ownership, experimentation at scale, measurable business impact.

- Writing: Strong narrative memos and clear experiment design.

- Low-cost alternatives: A minimal recommendation or detection system with robust A/B-like offline evaluation.

Step-by-step

- Pick a customer-obsessed use case (e.g., cold-start recommendation).

- Implement a baseline + improved model, log counterfactual metrics.

- Write the memo: context, goals, design, results, and next steps.

- Apply and attach the memo and repo.

Beginner modifications & progressions

- Mod: Use classical models with strong feature work before deep models.

- Progress: Add online inference and a throttled feedback loop.

Cadence & metrics

- Cadence: 1 well-written memo per project; revise after mentor feedback.

- KPIs: Recruiter responses, loop completion rate, written exercise scores.

Safety & pitfalls

- Vague narratives; Amazon values crisp writing and measurable outcomes.

- Over-engineering without a customer metric.

Mini-plan

- 7–10 days: Build a small ranking model with clear offline metrics; submit with the memo.

Apple (AIML teams across services, platform, and on-device intelligence)

What it is & why it’s compelling

A strong focus on on-device intelligence, privacy-preserving ML, and polished product experiences. Freshly posted roles span applied research, platform engineering, safety & interpretability, and product teams across multiple hubs.

Requirements & prerequisites

- Core skills: On-device constraints, latency, memory budgets, privacy, and evaluation.

- Craft: Performance profiling, SIMD/metal acceleration familiarity is a plus for some roles.

- Low-cost alternatives: Build a compact, on-device or edge-friendly demo and document the trade-offs.

Step-by-step

- Select a user-facing feature (e.g., on-device summarization).

- Prototype with a small model; measure power draw, latency, and accuracy.

- Tune for privacy and safety; write a short design doc on trade-offs.

- Apply and highlight user-centric impact and performance results.

Beginner modifications & progressions

- Mod: Start with a small, quantized model; cache aggressively.

- Progress: Add hardware-aware optimization and guardrails.

Cadence & metrics

- Cadence: 1 performance deep dive per project.

- KPIs: Latency at P95, energy impact, crash-free sessions.

Safety & pitfalls

- Ignoring privacy by default.

- Shipping “cool” but impractical demos.

Mini-plan

- 10–14 days: Build a tiny on-device feature, instrument it, write a 1-page report; apply with the repo and doc.

NVIDIA

What it is & why it’s compelling

The platform company for AI compute and software stacks, hiring across research, frameworks, systems, and platform software. Current openings include senior research scientists, AI infra engineers, and early-career roles—plus internships and new-grad pipelines.

Requirements & prerequisites

- Core skills: GPU programming, parallelism, compilers/frameworks, distributed training and inference.

- Evidence: Performance engineering, kernel-level optimizations, or model throughput gains.

- Low-cost alternatives: A small benchmark harness demonstrating a throughput or memory win on a standard task.

Step-by-step

- Pick a bottleneck (data loader, attention kernel, KV-cache).

- Build a micro-benchmark and compare your variant against a baseline.

- Report end-to-end impact (tokens/sec, $/1M tokens, VRAM profile).

- Apply and attach the benchmark + write-up.

Beginner modifications & progressions

- Mod: Use existing kernels and tune launch configs; show modest but reliable wins.

- Progress: Write a custom kernel or compiler pass; upstream a patch.

Cadence & metrics

- Cadence: 1 performance artifact/month.

- KPIs: Speedup at realistic batch sizes, memory savings without quality regressions.

Safety & pitfalls

- Vendor-specific hacks with poor generality.

- Benchmarks that don’t survive larger batch sizes.

Mini-plan

- One week: Optimize a single operator and prove a stable 10–20% throughput gain on a public model.

Anthropic

What it is & why it’s compelling

A safety-first AI company hiring across research, engineering, policy, product, and security. Current postings span multiple locations and specialties, including evaluations, red-teaming, safeguards, and platform work.

Requirements & prerequisites

- Core skills: Alignment/evals, adversarial testing, interpretable tooling, and robust engineering.

- Evidence: A measured reduction in misbehavior on a realistic eval; safe-by-design product patterns.

- Low-cost alternatives: Build a compact eval suite and show its predictive value for real user harm scenarios.

Step-by-step

- Define a harm surface (e.g., jailbreaks for a specific class of tasks).

- Create a small eval set plus a safety filter and logging.

- Demonstrate a risk reduction with minimal false positives, and document trade-offs.

- Apply with the suite and a succinct “lessons learned” brief.

Beginner modifications & progressions

- Mod: Start with prompt-level defenses and simple classifiers.

- Progress: Add tool-mediated defenses, uncertainty routing, and human review loops.

Cadence & metrics

- Cadence: 1 new eval or defense per month.

- KPIs: Reduced incident rate at constant utility; calibration curves and manual spot checks.

Safety & pitfalls

- Over-blocking; always track false positives and task success.

- Claims without rigorous measurement.

Mini-plan

- 10 days: Build a targeted jailbreak eval, implement two mitigations, quantify risk reduction, apply with the results.

Quick-Start Checklist

- Choose one of the eight companies and one posted role.

- Build a single, focused artifact that mirrors the team’s daily work.

- Write a 1-page narrative tying your artifact to business or user impact.

- Tailor your resume to the role’s top 3 requirements; cut everything else.

- Request two referrals (alumni, OSS maintainers, or meetup contacts).

- Track your apply→reply→screen→onsite→offer funnel weekly.

Troubleshooting & Common Pitfalls

- Low reply rate (<10%)? Your artifact likely doesn’t match the team’s problem. Rewrite the first paragraph of your application to state the problem, constraints, and measured results.

- Stalled at recruiter screen? Your resume may be vague. Convert bullets to problem → action → metric.

- Failing technical screens? Under time pressure, set up a minimal test harness and narrate trade-offs out loud; avoid silent hacking.

- Portfolio bloat? One excellent, recent project beats five scattered ones.

- No referrals? Contribute a small PR to a team-relevant repo and ask thoughtful questions in issues; build authentic rapport before asking.

- Visa concerns? Many teams sponsor selectively; apply early and confirm the policy with the recruiter.

How to Measure Progress (and Know It’s Working)

- Reply rate (target >20%) – signals top-of-funnel alignment.

- Recruiter screen pass rate (target >50%) – signals story clarity.

- Technical screen pass rate (target >40%) – signals execution under constraints.

- Onsite conversion (target >25%) – signals team fit and architecture thinking.

- Cycle time from apply to decision (aim <30 days) – signals velocity.

Instrument your search like a growth funnel. Each week, fix the weakest link.

A Simple 4-Week Starter Plan

Week 1 – Focus & Artifact

- Pick one company and one role.

- Draft a one-page problem statement mirroring the team’s work.

- Build the smallest viable artifact (baseline + metric + README).

- Ask one credible person for feedback (professor, maintainer, trusted peer).

Week 2 – Quality & Story

- Improve reliability (tests, logging, edge cases).

- Add one measurable improvement (latency, accuracy, or cost).

- Write a concise narrative: context, constraints, design, results.

- Submit one tailored application; request two referrals.

Week 3 – Breadth & Interviews

- Add a second artifact or expand the first with a new metric.

- Dry-run a technical screen (timed) and a system design whiteboard.

- Apply to two additional roles at the same company or a close peer.

Week 4 – Review & Iterate

- Review funnel metrics.

- Patch the weakest stage (resume, artifact clarity, interview practice).

- Ship a small improvement, publish a short post, and submit 1–2 new applications.

FAQs

1) Do I need a PhD to land research-adjacent roles?

No. A PhD helps for pure research, but strong engineering artifacts, published code, and rigorous evaluation can be equally persuasive for applied research and engineering roles.

2) How many applications should I send per week?

Quality beats quantity. One or two tailored applications with a matching artifact generally outperform ten generic submissions.

3) What’s the single most important thing to include in my application?

A role-aligned artifact (repo, demo, or paper) that proves you can do the team’s work and shows measurable results.

4) How do I get a referral if I don’t know anyone?

Contribute a focused PR to a public repo used by the team, ask thoughtful questions in issues, attend a relevant meetup or virtual talk, and build a genuine relationship before asking.

5) Will these companies sponsor visas?

Many do (policy varies by role and location). Apply early, and ask the recruiter about sponsorship after your first screen to avoid surprises.

6) I’m new to AI—what’s my fastest credible path?

Pick a narrow problem, ship a tiny but complete project, publish clear metrics, and iterate with community feedback. Depth beats breadth.

7) I keep failing coding screens—what should I practice?

Practice with production-style tasks: reading unfamiliar code, writing tests, and optimizing for latency or memory. Narrate trade-offs and test strategy.

8) Should I optimize my resume for ATS keywords?

Yes—but only after you tailor content for human readers. Put the most role-relevant accomplishment in the top third of the first page.

9) Is open source contribution necessary?

Not mandatory, but it’s a powerful signal of initiative and collaboration—especially if your contributions touch the same stack a target team uses.

10) How soon should I follow up after applying?

Within 5–7 business days. Reply with a short note linking your artifact and three bullets on measurable results.

11) What if I get conflicting feedback across interviews?

Ask for specifics and patterns. Improve what appears twice; don’t chase one-off critiques.

12) How do I talk about failures?

Own them. “Here’s what failed, why, how I measured it, and what I changed.” This is a strong signal of maturity and scientific thinking.

Conclusion

The AI job market is competitive—but also unusually merit-driven. If you choose a company, pick a role, and ship one artifact that solves a real problem with real metrics, your odds rise fast. Use the four-week plan, track your funnel, and iterate. Momentum beats perfection.

CTA: Pick one role at one company from this list, ship a matching artifact in 10 days, and apply with a crisp one-page narrative—starting today.

References

- Careers at OpenAI — OpenAI — (active listings; accessed August 13, 2025). OpenAI

- Careers — Google DeepMind — (shows open roles and locations; “84 jobs found” at time of access). Google DeepMind

- Jobs | Microsoft AI — Microsoft — (current AI roles across locations; accessed August 13, 2025). Microsoft AI

- Careers in Research — Microsoft Research — (new roles with posting dates in August 2025). Microsoft

- Meta Careers — Meta — (company careers portal; accessed August 13, 2025). Meta Careers

- Inside MTIA: Insights from a Custom Silicon Sourcing Manager — Life at Meta (Careers Blog) — (recent article on AI systems work; accessed August 13, 2025). Meta Careers

- Machine Learning Science — Amazon Jobs — (category page describing ML roles and linking to active postings; accessed August 13, 2025). https://www.amazon.jobs/content/en/job-categories/machine-learning-science amazon.jobs

- Machine Learning Engineer, Ring AI — Amazon Jobs — (active posting; accessed August 13, 2025). amazon.jobs

- Machine Learning and AI — Jobs — Careers at Apple — (search results showing active AI postings with August 2025 dates).

- Machine Learning and AI: Applied Research — Jobs — Careers at Apple — (applied research postings with August 2025 dates). Apple

- Machine Learning and AI — Work at Apple — (team overview). Apple

- Jobs at NVIDIA | NVIDIA Careers — NVIDIA — (careers portal; accessed August 13, 2025). NVIDIA

- Principal Artificial Intelligence Algorithms Engineer — NVIDIA (Workday) — (recent posting with application window noted August 2025). NVIDIA Jobs

- Research Scientist, Spatial Intelligence — NVIDIA (Workday) — (July 25, 2025 posting). NVIDIA Jobs

- Careers — Anthropic — (company careers overview; accessed August 13, 2025). Anthropic

- Jobs — Anthropic — (open roles portal; accessed August 13, 2025). Anthropic