As of February 2026, the digital landscape has shifted from a battle of human wits to a war of autonomous algorithms. Agentic Cybersecurity represents the next frontier in digital defense, moving beyond static automation to dynamic, self-reasoning AI entities capable of independent decision-making. Unlike traditional security software that follows “if-then” logic, agentic systems use Large Language Models (LLMs) and specialized reasoning engines to navigate complex environments, hunt for adversaries, and patch vulnerabilities without constant human intervention.

Key Takeaways

- Autonomy: Agentic systems don’t just alert; they act based on high-level goals.

- Velocity: They operate at “machine speed,” neutralizing threats in milliseconds rather than the hours or days required for human-led SOCs.

- Preemption: By predicting attack vectors through continuous self-simulation, they shift the posture from reactive to preemptive.

- Reasoning: These agents understand context, allowing them to differentiate between a developer’s unusual activity and a lateral movement by an attacker.

Who This Is For

This guide is designed for Chief Information Security Officers (CISOs), Security Operations Center (SOC) managers, and IT infrastructure architects who are struggling with “alert fatigue” and the increasing sophistication of AI-driven malware. If your organization is looking to scale its defense without linearly increasing its security headcount, agentic workflows are your roadmap.

Understanding the Shift: From Automation to Agency

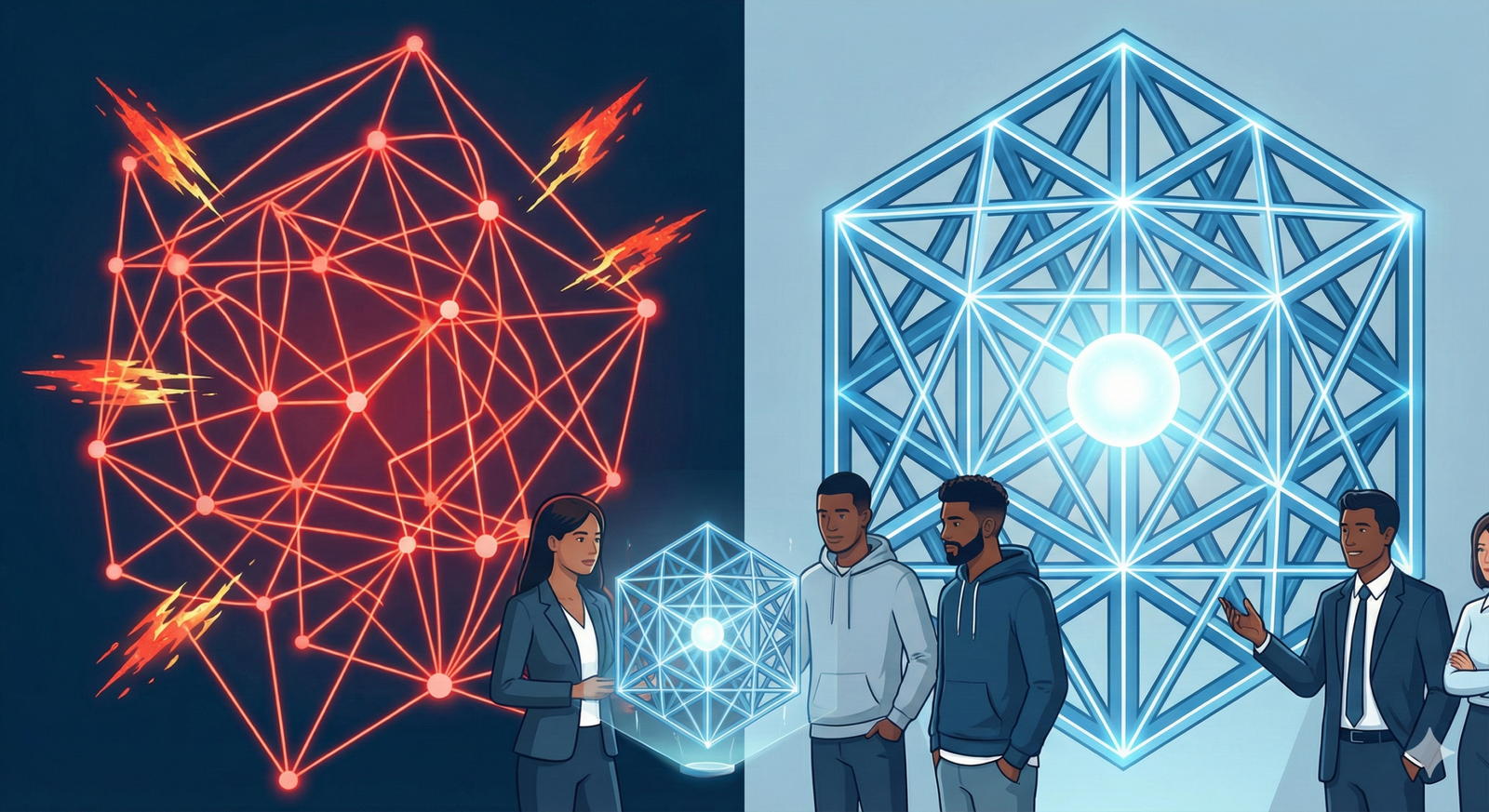

To grasp the power of agentic cybersecurity, we must first distinguish it from the automation we have used for the last decade. Traditional SOAR (Security Orchestration, Automation, and Response) platforms are like a complex train track: they work perfectly as long as the train stays on the rails and follows a pre-defined path.

Agentic AI is the helicopter. It has a destination (the goal) and the ability to navigate obstacles, change course, and choose the best path in real-time.

The Three Pillars of Agentic Security

- Perception: The ability to ingest vast streams of telemetry data—logs, network traffic, and user behavior—and “understand” the state of the environment.

- Reasoning: Using advanced models to weigh options. Should the agent isolate the host or just terminate the specific process? What are the business implications of shutting down this server?

- Action: The capability to interact with the environment via APIs, command-line interfaces, or configuration changes to execute the chosen strategy.

As of early 2026, we are seeing these agents move from “advisory” roles to “active” roles, where they are trusted to perform high-stakes operations under predefined “guardrail” parameters.

The Architecture of an Agentic Defense System

Building a preemptive defense requires more than just an LLM wrapper. It requires a multi-layered architecture that ensures the agent is both effective and safe.

1. The Reasoning Core (The Brain)

At the center is a reasoning engine, often powered by models like GPT-5 or specialized security-tuned LLMs. This core is responsible for Chain-of-Thought (CoT) processing. When a suspicious login is detected, the agent doesn’t just block the IP; it reasons: “The user is logging in from a new country, but they also just cleared their browser cache and are accessing a sensitive database. This matches the pattern of a session hijacking. I will revoke the session and prompt for hardware-based MFA.”

2. Tool Integration (The Hands)

An agent is useless if it cannot interact with your stack. Modern agentic platforms utilize “Tool Use” or “Function Calling.” This allows the AI to:

- Query EDR (Endpoint Detection and Response) tools.

- Update Firewall rules in real-time.

- Search internal documentation to see if a specific behavior is authorized.

- Communicate with other agents (e.g., a “Vulnerability Agent” talking to a “Patching Agent”).

3. Long-term and Short-term Memory

For an agent to be effective, it needs context.

- Short-term memory tracks the current incident’s timeline.

- Long-term memory (often via Vector Databases or RAG) allows the agent to remember that a similar attack occurred six months ago and recall which remediation steps were most effective.

Real-World Use Cases for Agentic Cybersecurity

Autonomous Threat Hunting

Traditionally, threat hunting involves highly skilled analysts querying data lakes for indicators of compromise (IoCs). An agentic system performs this 24/7. It can hypothesize: “If I were an attacker trying to exfiltrate data from the R&D department, how would I hide?” It then goes and looks for those specific artifacts, pivoting through logs with a level of persistence a human couldn’t match.

Automated Patch Management and Vulnerability Research

Drawing inspiration from initiatives like the DARPA AI Cyber Challenge (AIxCC), agentic systems can now find “0-day” vulnerabilities in proprietary code and automatically suggest—or even apply—a patch. In 2026, this has evolved into “self-healing” codebases where the agent monitors for new CVEs (Common Vulnerabilities and Exposures) and applies micro-patches to the production environment in minutes.

Incident Response at Machine Speed

When ransomware begins encrypting files, every second counts. A human responder might take 15 minutes to see the alert and another 10 to decide on a course of action. An agentic system detects the encryption entropy, identifies the source process, isolates the infected machine, and starts the recovery process from backups in under 500 milliseconds.

The “Machine Speed” Advantage: Why Humans Aren’t Enough

The primary driver for agentic security is the sheer volume and velocity of modern attacks. We have entered the era of Polymorphic AI Malware—malware that changes its own code every time it spreads to evade signature-based detection.

Human analysts are limited by:

- Cognitive Load: A typical SOC analyst sees thousands of alerts daily.

- Latency: The time it takes to read, comprehend, and click is an eternity in a cyberattack.

- Scalability: You cannot hire enough analysts to cover every edge of a modern cloud-native perimeter.

Agentic systems provide “horizontal scalability.” If your network grows by 40%, you simply deploy more agent instances. They don’t get tired, they don’t have “off” days, and they maintain a consistent level of rigor across every incident.

Common Mistakes When Implementing Agentic AI

While the promise is high, the pitfalls are significant. Avoid these common errors to ensure your “defense” doesn’t become a “liability.”

1. The “Black Box” Problem

The Mistake: Giving an agent full administrative rights without observability. The Fix: Implement strict logging for every action the agent takes. You must be able to audit why an agent decided to shut down a production database. Use “Human-in-the-loop” (HITL) for high-impact actions until the agent has proven its reliability.

2. Ignoring Prompt Injection

The Mistake: Failing to realize that the AI agent itself is a target. The Fix: If an attacker can feed malicious data into a log that the agent reads, they might be able to “trick” the agent into disabling security features. Treat the agent’s input as untrusted data and use secondary “Supervisor Agents” to verify the logic of the primary agent.

3. Lack of Guardrails

The Mistake: Defining goals that are too broad (e.g., “Stop all unauthorized access”). The Fix: Use “Constrained Optimization.” Tell the agent: “Stop unauthorized access, but under no circumstances shut down the payment gateway during business hours.”

Implementing Agentic Defense: A Step-by-Step Roadmap

Phase 1: The “Observer” Mode (Months 1–2)

Deploy agents in a read-only capacity. Let them ingest your logs and “shadow” your human analysts. Have the agent generate a “Recommended Action Plan” for every incident. Compare the agent’s plan to what the human actually did.

Phase 2: Restricted Action (Months 3–6)

Grant the agent the ability to perform low-risk actions. This includes:

- Tagging tickets.

- Gathering additional forensics (e.g., pulling a process list from a laptop).

- Sending Slack notifications to users to verify their activity.

Phase 3: Full Agentic Orchestration (Month 6+)

Allow the agent to take “destructive” actions (blocking, isolating, terminating) within specific, high-confidence zones. Move toward a “Zero Trust AI” model where the agent manages the identity and access management (IAM) lifecycle based on real-time risk scores.

Future Trends: What’s Next for 2026 and Beyond?

As we look toward the latter half of the decade, two major trends are emerging:

- Agent-to-Agent Communication: Your security agent will talk to your cloud provider’s security agent. If an attack is hitting multiple companies simultaneously, the agents will share “anonymized threat intelligence” in real-time to build a global immune response.

- Edge Agency: We are moving away from centralized AI. Agents will reside on the “Edge”—directly on IoT devices and mobile phones—allowing for autonomous defense even when the device is disconnected from the main network.

- Generative Red Teaming: Organizations will use “Attacker Agents” to constantly probe their own defenses. This creates a “Generative Adversarial Network” (GAN) for the entire enterprise, where the defense gets stronger by fighting its own digital twin.

Safety and Ethical Disclaimer

The implementation of autonomous agents in a cybersecurity context carries inherent risks. Improperly configured agents can cause system downtime, data loss, or unauthorized changes to infrastructure. Always test agentic workflows in a sandboxed environment and maintain a “Kill Switch” mechanism. This article is for informational purposes and does not constitute professional legal or technical advice.

Conclusion

Agentic cybersecurity is no longer a luxury for the “Big Tech” elite; it is a necessity for any organization operating in an increasingly hostile, AI-accelerated world. By moving from static playbooks to reasoning agents, we finally have a chance to level the playing field against adversaries.

The transition to agentic defense requires a shift in mindset. It’s about moving from being a “doer” to being a “governor.” Your role as a security professional will evolve into defining the goals, boundaries, and ethical constraints within which your agents operate.

The speed of the machine is relentless, but with agentic systems, we can harness that speed for protection rather than destruction.

Next Step: Would you like me to draft a Proof of Concept (PoC) plan for integrating an agentic security pilot into your existing SOC workflow?

FAQs

What is the difference between AI and Agentic AI in cybersecurity?

Standard AI in security usually refers to machine learning models that classify data (e.g., “this file is 90% likely to be malware”). Agentic AI takes it a step further by using that classification to decide on a goal-oriented action (e.g., “Since this is likely malware, I will isolate the host and alert the user’s manager”) and then executing it.

Can Agentic AI replace human security analysts?

It is unlikely to replace them entirely, but it will dramatically change their role. Agents handle the “grunt work”—sorting through noise and responding to known patterns—allowing humans to focus on high-level strategy, complex forensic investigations, and the governance of the AI itself.

Is Agentic Cybersecurity vulnerable to “AI hallucinations”?

Yes. Like all LLM-based systems, an agent can “hallucinate” a threat or a solution. This is why multi-agent systems (where one agent checks the work of another) and strict operational guardrails are essential components of any deployment.

How does Agentic Security fit into a Zero Trust architecture?

Agentic AI acts as the “dynamic policy engine” for Zero Trust. Instead of static rules, the agent evaluates the risk of every request in real-time, considering thousands of data points to decide whether to grant access.

What are the hardware requirements for running security agents?

While much of the reasoning happens in the cloud (via providers like OpenAI, Google, or Anthropic), many organizations are moving toward “On-Prem LLMs” using optimized hardware like NVIDIA’s Blackwell chips to ensure data privacy and reduce latency.

References

- DARPA (2025). “The AI Cyber Challenge (AIxCC): Final Results and the Future of Self-Healing Code.” Official DARPA Research Portal.

- NIST (2026). “Special Publication 800-218A: Secure Software Development Framework (SSDF) for Generative AI and Autonomous Agents.”

- Gartner (2025). “Top Strategic Technology Trends for 2026: The Rise of Agentic AI.”

- Stanford University (2025). “The Ethics of Autonomous Cyber Defense: Reasoning, Accountability, and Control.” Journal of AI Research.

- Microsoft Security (2026). “Cyber Signals Issue 12: Defending Against AI-Powered Polymorphic Threats.”

- MITRE (2025). “ATLAS (Adversarial Threat Landscape for Artificial-Intelligence Systems) Update: Agent-Specific TTPs.”

- IEEE Xplore (2025). “A Comparative Study of LLM-Based Agents vs. Traditional SOAR in Incident Response.”

- CISA (2026). “Joint Advisory: Mitigating Risks in Autonomous Security Operations Centers.”