As of February 2026, the global corporate landscape has split into two distinct camps: those that use AI as a tool and those that are built around it. The latter, known as agent-native firms, represent a new breed of organization where autonomous AI agents—not just software—perform the bulk of cognitive labor. Recent industry data suggests that only 11% of these firms have reached “peak performance,” achieving a level of operational leverage previously thought impossible.

What is an Agent-Native Firm?

An agent-native firm is an organization designed from the ground up to utilize autonomous AI agents as the primary unit of work. Unlike “AI-enhanced” companies that bolt chatbots onto legacy workflows, agent-native firms build their entire cognitive architecture around multi-agent systems (MAS) that can reason, use tools, and collaborate with minimal human intervention.

Key Takeaways

- Architectural Superiority: Success depends less on the model (GPT-5, Claude 4, etc.) than on the orchestration layer that connects models, tools, and agents.

- Human-in-the-Loop 2.0: Humans have shifted from “doers” to “editors” and “orchestrators.”

- The 10x Efficiency Gap: The top 11% of firms operate with revenue per employee that is ten times that of traditional competitors.

- Adaptive Intelligence: These firms don’t just automate; they create feedback loops where agents learn from every edge case encountered.

Who This Is For

This deep dive is intended for Chief Technology Officers (CTOs), Enterprise Architects, and Founders who are looking to move beyond the experimental phase of generative AI and into the production-grade deployment of autonomous workforces. If you are responsible for the digital transformation of a mid-to-large-scale enterprise, this analysis provides the blueprint for the “11% Leaderboard.”

1. Cognitive Architecture: Moving Beyond Simple Chatbots

What most sets the 11% leaderboard apart is its departure from “Chat” as the primary interface. Early AI adopters focused on a user talking to a bot. Agent-native firms instead build on a Cognitive Architecture: a structured design for how an agent plans, remembers, acts, and checks its own work.

In these firms, an agent isn’t just a prompt; it is a module with four distinct components:

- Planning: The ability to break down a complex goal (e.g., “Research and write a 2,000-word whitepaper”) into sub-tasks.

- Memory: Utilizing both short-term context and long-term Agentic RAG (Retrieval-Augmented Generation) to recall past interactions.

- Tool Use: The ability to interact with APIs, databases, and third-party software (Slack, Jira, Salesforce).

- Self-Correction: A “critic” agent that reviews the work of the “actor” agent before output.

Common Mistake: Relying on a single large context window rather than building structured memory. This leads to “hallucination drift” where the agent loses the objective over time.
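The four components can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `Agent` class, its fixed two-step `plan`, and the "non-empty output" `critic` are all hypothetical stand-ins for what would normally be model calls, and the `memory` list stands in for a structured long-term store.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Toy agent wiring together planning, memory, tool use, and self-correction."""

    tools: dict[str, Callable[[str], str]]           # tool use: name -> callable
    memory: list[str] = field(default_factory=list)  # stand-in for structured memory

    def plan(self, goal: str) -> list[tuple[str, str]]:
        # Hypothetical planner: a real system would ask a model to decompose the goal.
        return [("search", goal), ("summarize", goal)]

    def critic(self, output: str) -> bool:
        # Hypothetical critic: accepts any non-empty output.
        return bool(output.strip())

    def run(self, goal: str) -> list[str]:
        results = []
        for tool_name, argument in self.plan(goal):
            output = self.tools[tool_name](argument)
            if not self.critic(output):
                # Self-correction: retry once when the critic rejects the output.
                output = self.tools[tool_name](argument)
            self.memory.append(f"{tool_name}: {output}")  # persist outside the prompt
            results.append(output)
        return results
```

Because each step is written to `memory` rather than left in a single growing prompt, the objective and intermediate results remain available even when the model's context is reset.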

2. The “Agent-to-Human” Ratio: Benchmarking Labor Efficiency

In traditional firms, scaling output requires scaling headcount. In the top 11%, scaling output requires scaling compute.

As of 2026, the benchmark for “The 11%” is an agent-to-human ratio of 50:1. For every one human employee, there are approximately 50 autonomous agents performing specialized tasks. These agents handle everything from initial customer support triage to complex financial reconciliation and even code generation.

| Metric | Traditional Firm | AI-Enhanced Firm | Agent-Native (Top 11%) |
| --- | --- | --- | --- |
| Primary Labor Unit | Human | Human + Copilot | AI Agent |
| Scaling Cost | Linear (Salaries) | Sub-linear | Marginal (Compute) |
| Decision Speed | Days/Weeks | Hours | Milliseconds/Minutes |

By treating agents as “digital workers” rather than software, these firms can enter new markets or launch new products in a fraction of the time.

3. Orchestration Layers: The Central Nervous System

Success in the agentic world isn’t about having the smartest single agent; it’s about how well agents talk to each other. The 11% use sophisticated Orchestration Layers (often built on frameworks like LangGraph or CrewAI, or on proprietary internal controllers).

These layers manage:

- State Management: Keeping track of where a task is across multiple agents.

- Conflict Resolution: What happens when two agents disagree on a data point?

- Dynamic Routing: Sending a task to the most “cost-effective” model based on complexity.

Practical Example: A leading fintech firm uses an orchestrator to manage a loan application. Agent A extracts data from documents; Agent B verifies it against public records; Agent C assesses risk; and Agent D prepares the human-readable summary for final approval.
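A rough sketch of the loan example shows what state management means in practice. It uses plain Python functions rather than any particular framework; the step functions, the field names, and the income and loan figures are all hypothetical placeholders for real agent calls and real data.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class TaskState:
    """Shared state the orchestrator carries from one agent to the next."""

    data: dict = field(default_factory=dict)
    completed: list[str] = field(default_factory=list)


def extract_data(data: dict) -> dict:
    # Agent A stand-in: pretend these values were read from uploaded documents.
    return {**data, "income": 60_000, "requested": 15_000}


def verify_records(data: dict) -> dict:
    # Agent B stand-in: a real agent would check public records here.
    return {**data, "verified": True}


def assess_risk(data: dict) -> dict:
    # Agent C stand-in: a deliberately simple loan-to-income rule.
    ratio = data["requested"] / data["income"]
    return {**data, "risk": "low" if ratio < 0.5 else "high"}


def summarize(data: dict) -> dict:
    # Agent D stand-in: prepare the human-readable summary for final approval.
    return {**data, "summary": f"Risk {data['risk']}; verified={data['verified']}"}


PIPELINE: list[tuple[str, Callable[[dict], dict]]] = [
    ("extract", extract_data),
    ("verify", verify_records),
    ("risk", assess_risk),
    ("summary", summarize),
]


def orchestrate(steps, state: TaskState) -> TaskState:
    for name, step in steps:
        state.data = step(state.data)
        state.completed.append(name)  # state management: record progress per step
    return state
```

If a step fails, `state.completed` shows exactly where the task stopped, so the orchestrator can resume from that point instead of restarting the whole application.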

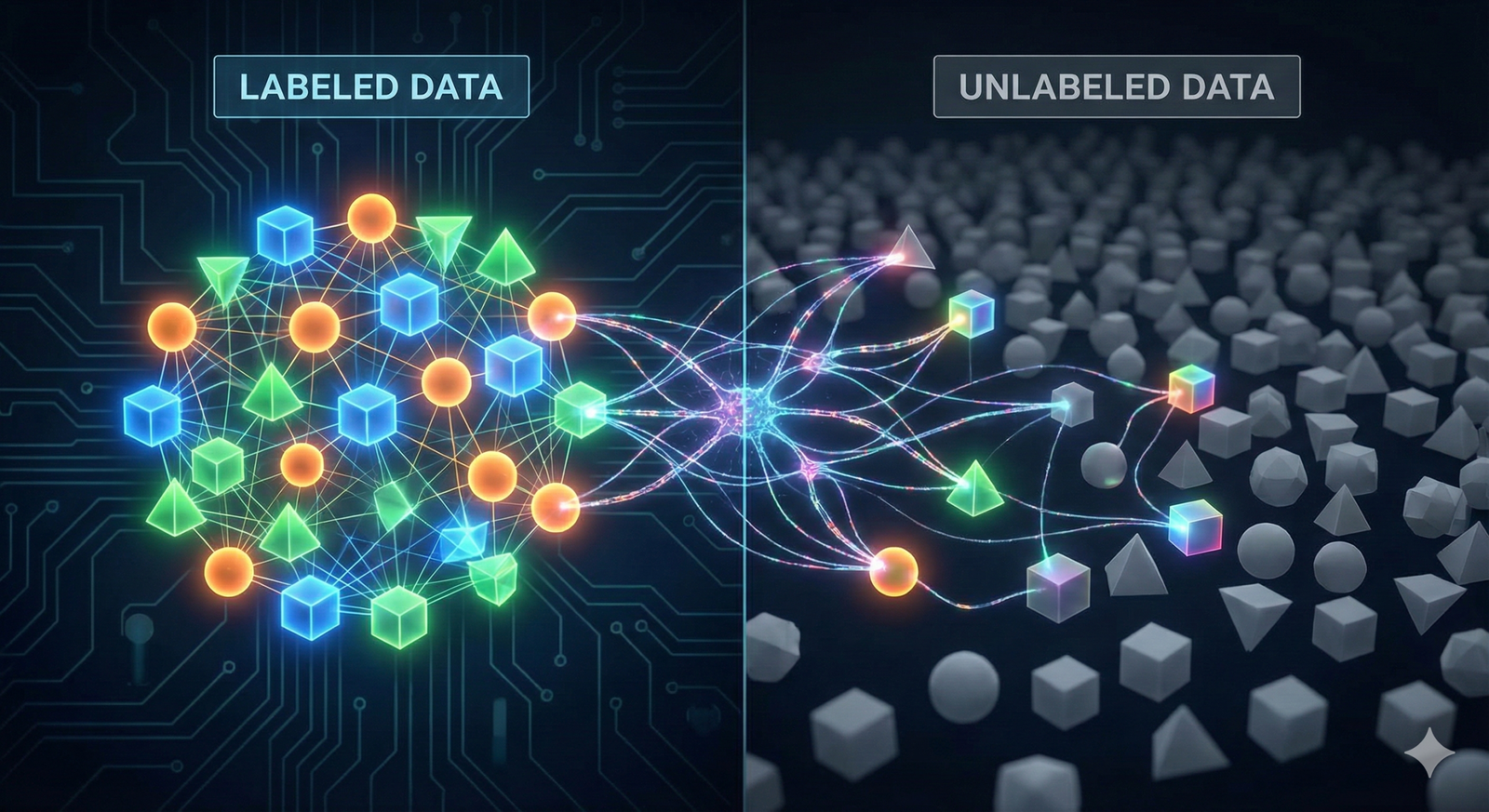

4. Data Flywheels: How Agent-Native Firms Compound Intelligence

The 11% leaderboard firms treat data differently. While others use data to train models, agent-native firms use agents to generate and refine data.

They implement what is known as a Synthetic Data Flywheel. When an agent performs a task, the result is audited by a human or a “superior” agent. This feedback is captured not just as a log, but as a fine-tuning dataset for the next iteration.
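One way to picture the capture step is below. It is a sketch under stated assumptions: the `AuditedResult` record and the prompt/completion JSONL layout are illustrative choices, not a format required by any particular fine-tuning service.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class AuditedResult:
    """One agent output plus the verdict of a human or reviewing agent."""

    prompt: str
    agent_output: str
    approved: bool
    corrected_output: Optional[str] = None  # filled in when the auditor fixes the output


def to_training_records(results: list[AuditedResult]) -> list[dict]:
    records = []
    for result in results:
        if result.approved:
            completion = result.agent_output
        elif result.corrected_output is not None:
            completion = result.corrected_output
        else:
            continue  # rejected without a correction: no usable training signal
        records.append({"prompt": result.prompt, "completion": completion})
    return records


def to_jsonl(records: list[dict]) -> str:
    # One JSON object per line, a common layout for fine-tuning datasets.
    return "\n".join(json.dumps(record) for record in records)
```

Approved outputs and auditor corrections both become training examples, while rejected outputs with no correction are dropped rather than fed back into the model.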

Safety Disclaimer: When dealing with financial or medical data, these flywheels must include “Air-Gapped Validation” to ensure synthetic data does not introduce systemic bias or false information into the core model.

5. Dynamic Resource Allocation: Just-in-Time AI Compute

Managing the cost of LLMs is one of the biggest challenges for 2026 enterprises. The 11% don’t use GPT-5 for everything. They use Dynamic Resource Allocation.

They employ a “Cascade Strategy”:

- Level 1 (Trivial Tasks): Small, locally hosted models (e.g., Llama 3 8B or Mistral) for classification and routing. Cost: ~$0.01 per 1M tokens.

- Level 2 (Analytical Tasks): Mid-tier models for reasoning and data processing.

- Level 3 (Strategic/Creative Tasks): State-of-the-art frontier models (GPT-5, Claude 4) for final synthesis.

This allows them to maintain high-quality output while keeping their token expenditure 40% lower than firms that use a “one-model-fits-all” approach.
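The routing logic behind a cascade can be sketched as follows. The tier names, complexity thresholds, and the Level 2 and Level 3 prices are made-up assumptions for illustration; only the Level 1 price echoes the figure above, and a real router would need some way to score task complexity in the first place.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    max_complexity: float          # highest complexity score this tier accepts
    usd_per_million_tokens: float  # hypothetical price


# Ordered cheapest first; thresholds and prices are illustrative assumptions.
TIERS = [
    Tier("small-local", 0.3, 0.01),
    Tier("mid-tier", 0.7, 1.00),
    Tier("frontier", 1.0, 10.00),
]


def route(complexity: float) -> Tier:
    """Return the cheapest tier whose ceiling covers the task's complexity."""
    if not 0.0 <= complexity <= 1.0:
        raise ValueError("complexity must be in [0, 1]")
    for tier in TIERS:
        if complexity <= tier.max_complexity:
            return tier
    raise AssertionError("unreachable: the last tier accepts complexity 1.0")


def total_cost(tasks: list[tuple[float, int]]) -> float:
    """tasks is a list of (complexity, token_count) pairs; returns cost in USD."""
    return sum(
        route(complexity).usd_per_million_tokens * tokens / 1_000_000
        for complexity, tokens in tasks
    )
```

Comparing `total_cost` for a real task mix against the cost of sending every task to the top tier gives a direct estimate of what the cascade saves.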

6. The Shift from SaaS to MaaS (Model-as-a-Service) Internal Tooling

Top-performing firms are moving away from traditional SaaS subscriptions. Instead of paying for 500 seats of a CRM, they build Agentic Wrappers around the CRM’s API.

In an agent-native firm, the “user” of the software is the agent, not the human. This has led to the rise of Internal MaaS, where departments “rent” specialized agent clusters from the IT department.

- Marketing Agents: Fine-tuned on the company’s brand voice.

- Legal Agents: Specialized in the firm’s specific jurisdictional requirements.

- DevOps Agents: Capable of self-healing infrastructure.

7. Safety and Observability: Guardrails of the Top 11%

Autonomy without oversight is a liability. The 11% invest heavily in Agentic Observability. They don’t just log errors; they log “reasoning traces.”

Key Safety Patterns:

- Semantic Monitoring: Using AI to watch other AI for “drift” in tone or logic.

- Kill-Switches: Hard-coded thresholds that pause an agentic workflow if it attempts to spend more than a certain amount of money or modify critical data.

- Audit Trails: Every decision made by an agent is traceable back to the specific prompt, data source, and model version used.
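A kill-switch of the kind described above can be as simple as a guard that every proposed action must pass before it runs. The sketch below is an assumption-laden illustration: the `Guardrail` class, the `cost_usd` and `writes_to` keys on an action, and the example table name are all hypothetical.

```python
from dataclasses import dataclass


class KillSwitchTripped(Exception):
    """Raised to pause a workflow before a risky action executes."""


@dataclass
class Guardrail:
    max_spend_usd: float
    protected_tables: frozenset[str]
    spent_usd: float = 0.0

    def check(self, action: dict) -> None:
        """Approve an action or raise; spend is recorded only when approved."""
        projected = self.spent_usd + action.get("cost_usd", 0.0)
        if projected > self.max_spend_usd:
            raise KillSwitchTripped(
                f"spend {projected:.2f} exceeds limit {self.max_spend_usd:.2f}"
            )
        if action.get("writes_to") in self.protected_tables:
            raise KillSwitchTripped(f"write to protected table {action['writes_to']}")
        self.spent_usd = projected
```

The thresholds live in ordinary code rather than in a prompt, so an agent cannot talk its way past them; a tripped switch would typically hand the workflow to a human reviewer.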

Common Mistake: Launching agents with “write access” to databases without a Human-in-the-Loop (HITL) verification step for sensitive operations.

8. Inter-Agent Communication Protocols (Standardizing Autonomy)

As firms scale from 10 to 1,000 agents, communication becomes a bottleneck. The 11% have moved toward Standardized Agent Communication Protocols (SACP).

Much as the web relies on HTTP, agent-native firms use structured schemas (JSON-LD or Protocol Buffers) to ensure that a “Sales Agent” and a “Billing Agent” can exchange information without loss of context. This prevents the “Telephone Game” effect where instructions get distorted as they pass through multiple LLM nodes.
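To make the idea concrete, here is a minimal sketch of a typed message envelope using plain JSON and the Python standard library. It is not SACP, JSON-LD, or Protocol Buffers; the `AgentMessage` fields and the `schema_version` default are hypothetical choices for illustration.

```python
import json
from dataclasses import asdict, dataclass

REQUIRED_FIELDS = {"sender", "recipient", "intent", "payload"}


@dataclass(frozen=True)
class AgentMessage:
    """Structured envelope passed between agents instead of free-form text."""

    sender: str
    recipient: str
    intent: str
    payload: dict
    schema_version: str = "1.0"

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "AgentMessage":
        data = json.loads(raw)
        missing = REQUIRED_FIELDS - data.keys()
        if missing:
            # Reject incomplete messages rather than letting the receiver guess.
            raise ValueError(f"missing fields: {sorted(missing)}")
        return cls(**data)
```

Because the receiving agent parses fields rather than re-reading prose, an invoice amount or customer ID arrives exactly as it was sent, no matter how many hops the message takes.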

9. Human-in-the-Loop 2.0: From Doers to Reviewers

The role of the human has fundamentally changed in the top 11%. We call this Management by Exception.

Instead of performing the task, humans act as:

- Policy Makers: Defining the “vibe,” ethics, and goals of the agents.

- Exception Handlers: Dealing with the 2–5% of cases that the agents flag as “high uncertainty.”

- Quality Auditors: Sampling agent output to ensure alignment with brand standards.

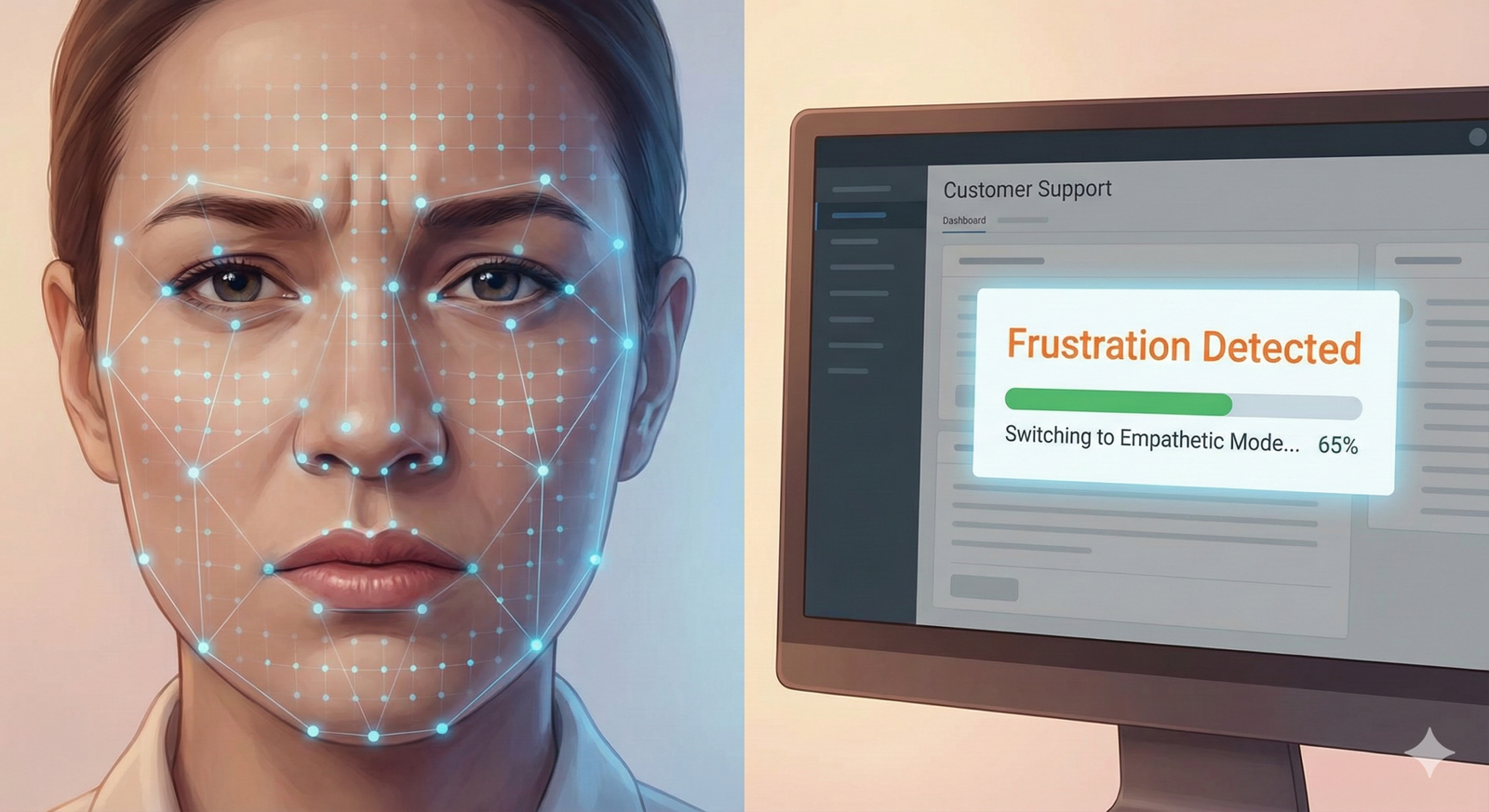

Practical Example: In an agent-native customer service department, the human never sees a “routine” ticket. They only step in when the agent detects a “high-frustration” sentiment or a legal threat.
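The escalation rule in this example can be sketched as a small triage function. The thresholds, the `Ticket` fields, and the keyword list are illustrative assumptions; in practice the confidence and frustration scores would come from the handling agent and a sentiment model.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    text: str
    agent_confidence: float  # 0..1, reported by the handling agent (hypothetical)
    frustration: float       # 0..1, from a sentiment model (hypothetical)


LEGAL_TERMS = ("lawsuit", "attorney", "legal action")


def needs_human(
    ticket: Ticket, min_confidence: float = 0.8, max_frustration: float = 0.7
) -> bool:
    """Escalate on low confidence, high frustration, or a possible legal threat."""
    mentions_legal = any(term in ticket.text.lower() for term in LEGAL_TERMS)
    return (
        ticket.agent_confidence < min_confidence
        or ticket.frustration > max_frustration
        or mentions_legal
    )


def triage(tickets: list[Ticket]) -> tuple[list[Ticket], list[Ticket]]:
    """Split tickets into (human_queue, agent_queue)."""
    human_queue, agent_queue = [], []
    for ticket in tickets:
        (human_queue if needs_human(ticket) else agent_queue).append(ticket)
    return human_queue, agent_queue
```

Tuning `min_confidence` and `max_frustration` is how a team would steer the share of tickets that reach humans toward the small exception band described above.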

10. Outcome-Based Monetization: Pricing in an Autonomous World

The top 11% of agent-native firms are disrupting their industries by changing how they charge. Because their costs are tied to tokens and compute rather than hours of labor, they are moving to Outcome-Based Pricing.

If you are an agent-native law firm, you don’t charge by the hour; you charge by the “Contract Successfully Verified.” If you are a marketing agency, you charge by the “Lead Generated.” This shift is only possible because the marginal cost of agentic labor is so low that these firms can afford to bet on their own results.

11. Cultural Transformation: Hiring for an Agentic Future

The final pattern of the 11% is a radical shift in hiring. They no longer look for “Subject Matter Experts” who can do the work. They look for Agentic Architects.

An Agentic Architect is someone who understands the domain (e.g., Accounting) but also knows how to:

- Write complex prompt chains.

- Debug agentic reasoning loops.

- Structure data for RAG systems.

This cultural shift is the hardest to replicate and is often why traditional firms fail to enter the 11% leaderboard despite having the same technology.

Conclusion

The rise of the agent-native firm is not a trend; it is a fundamental re-architecting of how economic value is created. The “11% Leaderboard” consists of organizations that realized early that AI is not a faster horse—it is a jet engine. By focusing on cognitive architecture, dynamic resource allocation, and a new “Human-in-the-Loop” philosophy, these companies have achieved a level of scalability that renders traditional business models obsolete.

To join the 11%, leadership must stop asking “How can AI help our people?” and start asking “How can our people orchestrate an agentic workforce?” The transition is difficult, requiring a total overhaul of legacy systems and, more importantly, legacy mindsets. However, the reward is a “forever-scale” business model that compounds intelligence as fast as it compounds revenue.

Your Next Steps:

- Audit your workflows: Identify one high-frequency, multi-step process (e.g., vendor onboarding or content distribution).

- Build a Prototype: Create a three-agent “crew” (Researcher, Writer, Critic) using a framework like LangGraph to handle that process.

- Measure the “Agentic Leverage”: Calculate the time saved and the token cost per output to establish your baseline.
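The baseline in the last step comes down to two small calculations, sketched below. The function names and every number in the usage note are hypothetical; substitute your own token counts, prices, and timings.

```python
def token_cost_per_output(
    total_tokens: int, usd_per_million_tokens: float, outputs: int
) -> float:
    """Average token spend in USD for each completed output."""
    if outputs <= 0:
        raise ValueError("outputs must be positive")
    return total_tokens / 1_000_000 * usd_per_million_tokens / outputs


def time_saved_hours(
    manual_minutes_per_output: float, agent_minutes_per_output: float, outputs: int
) -> float:
    """Human hours saved, counting only the review time humans still spend."""
    saved_minutes = (manual_minutes_per_output - agent_minutes_per_output) * outputs
    return saved_minutes / 60
```

For example, 2,000,000 tokens at a hypothetical $5 per million across 100 outputs is $0.10 per output, and cutting human time from 30 minutes to 3 minutes of review per output saves 45 hours over those 100 outputs.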

FAQs

1. What is the difference between an AI-first and an Agent-native company?

An AI-first company prioritizes using AI to improve products (e.g., Netflix recommendations). An agent-native company uses AI agents as its primary internal labor force. One focuses on the customer experience, the other on the operational architecture.

2. How much does it cost to transition to an agent-native model?

The initial cost is high due to the need for specialized “Agentic Architects” and infrastructure. However, the 11% leaderboard firms typically see a 60–80% reduction in operational overhead within 18 months, as the cost per “unit of work” shifts from human salaries to token costs.

3. Are agent-native firms safe from hallucinations?

No firm is 100% safe, but agent-native firms use “Multi-Agent Debate” and “Self-Reflection Loops” to reduce hallucinations to less than 0.1% in production environments. They treat hallucination as a debugging problem rather than a fact of life.

4. Does being agent-native mean firing all employees?

Not necessarily. It means redefining their roles. Employees move from low-value repetitive tasks to high-value strategic oversight. The top 11% actually report higher employee satisfaction because “the boring stuff” has been entirely delegated to agents.

5. What is the biggest risk for an agent-native firm?

Architectural Rigidity. If a firm builds its entire workflow around a specific model’s quirks and that model is updated or deprecated, the “cognitive plumbing” can break. The 11% use “Model-Agnostic” orchestration to mitigate this.
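One common way to get model-agnostic orchestration is to have workflow code depend on a small interface rather than on a vendor SDK. The sketch below assumes a hypothetical `ChatModel` interface with a single `complete` method; the two backend classes are stubs, not real provider clients.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only surface the orchestrator is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class StubModelA:
    # Stand-in for one provider's client, wrapped to match ChatModel.
    def complete(self, prompt: str) -> str:
        return f"A says: {prompt}"


class StubModelB:
    # Stand-in for a different provider with different output quirks.
    def complete(self, prompt: str) -> str:
        return f"  b says: {prompt}  "


def run_step(model: ChatModel, prompt: str) -> str:
    # Normalization happens here, so model-specific quirks stay out of the workflow.
    return model.complete(prompt).strip()
```

Swapping `StubModelA` for `StubModelB` requires no change to `run_step`, which is the property that limits the damage when a model is updated or deprecated.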
