Cloud-based software has turned from a bold experiment into the backbone of modern business. In the span of a decade, enterprises have shifted from server closets and monolithic apps to global-scale platforms, containerized microservices, and increasingly serverless and AI-powered stacks. This article takes a rigorous, numbers-first tour of that evolution—how big the market is now, what’s growing fastest, where teams are getting results (and where they’re wasting money), and the practical steps to adopt, measure, and govern cloud capabilities with confidence. If you’re a technology leader, product owner, architect, or hands-on builder who wants a statistics-backed roadmap—not just opinions—this is for you.

Key takeaways

- Cloud is the default: Forecasts put global end-user cloud spending in the $700B+ range in 2025, with quarterly infrastructure services spend already approaching $100B. Market share remains highly concentrated among the top three providers.

- Cloud-native is mainstream: Production use of containers exceeds 90% in many organizations, and Kubernetes production adoption is around 80%, reflecting a decisive shift from VMs to orchestrated services.

- Serverless is no longer niche: In large samples, well over half of organizations on the major clouds use at least one serverless service; on some platforms, 70%+ do.

- Costs and governance matter: Organizations report roughly a quarter to a third of IaaS/PaaS spend wasted; managing cloud spend is consistently the top challenge. FinOps teams are expanding.

- Hybrid and multi-cloud are the norm: Most enterprises now use two or more providers, and a meaningful minority have repatriated ~one-fifth of workloads to private or on-prem infrastructure.

- Resilience, security, and sustainability are measurable: A majority of significant data center outages cost >$100K; four-nines vs three-nines uptime changes allowable monthly downtime by an order of magnitude; average PUE hovers near 1.56; and electricity demand from data centers is set to roughly double by 2030.

The state of cloud in 2025—market size, share, and momentum

What it is and why it matters.

“Cloud” is an umbrella for subscription services that deliver compute, storage, networking, databases, analytics, and software on demand. The business case has matured: near-instant provisioning, global reach, elastic scaling, and a growing portfolio of managed services for data, AI, and edge.

The numbers.

- Macro spend: Independent forecasts project ~$723B in worldwide end-user spending on public cloud services in 2025, up roughly a fifth year over year.

- Quarterly run rate: In Q1 2025, global enterprise spending on cloud infrastructure services was about $94B, up ~23% YoY, with the top three providers holding a combined ~63% market share (roughly 29% / 22% / 12% respectively).

- Workload location: Across operators, off-premises hosting (public cloud and colocation) rose from the low forties percent earlier in the decade to ~55% by 2024, with further increases expected.

- Team adoption: Cloud-native techniques reached ~89% adoption in 2024’s broad surveys; containers in production ~91%; Kubernetes production use ~80%.

Requirements and prerequisites.

- Organizational buy-in for a multi-year cloud strategy and budgeting discipline.

- Foundational skills in identity and access management (IAM), networking, observability, and automation (IaC).

- Initial platform choices (primary cloud, landing zone pattern, network topology).

- A basic FinOps motion (chargeback/showback, tagging standards, budgets and guardrails).

Beginner-friendly implementation steps.

- Baseline and business case. Inventory current apps, map them to business goals, and model TCO across three migration paths: rehost, replatform, refactor.

- Choose a starting portfolio. Select 3–5 services (e.g., managed database, object storage, Kubernetes service, serverless functions, an identity broker).

- Create a secure landing zone. Establish accounts/projects, org policies, network segmentation, IAM roles, and logging from day one.

- Automate everything. Use IaC for VPCs/VNets, subnets, IAM, policies, and core services; bake tagging and budgets into the code.

- Pilot, then scale. Move a low-risk, measurable workload; instrument it; document lessons; update standards; and iterate.

Beginner modifications and progressions.

- Simplify: Start with a single region, a single account/project, and one managed database to reduce moving parts.

- Scale up: Add multi-account architecture, multiple regions or AZs, service meshes, and advanced data services once you’ve stabilized operations.

Frequency, duration, and metrics.

- Quarterly: Portfolio reviews (cost vs value), SLA/SLO trending, and security posture scorecard.

- Monthly: Cloud budget variance (target <±10%), unit cost metrics (e.g., cost per order, per API call, per model inference), and % tagged resources (target >95%).

- Weekly: Deployment frequency, change fail rate, and lead time for changes.
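
As a rough illustration of the monthly metrics above, the following Python sketch computes budget variance and a unit cost. The function names and sample figures are hypothetical; real inputs would come from your billing export.

```python
def budget_variance_pct(actual_spend: float, budget: float) -> float:
    """Signed variance of actual spend against budget, in percent."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    return (actual_spend - budget) / budget * 100.0


def within_target(variance_pct: float, target_pct: float = 10.0) -> bool:
    """True when the variance sits strictly inside the +/- target band."""
    return abs(variance_pct) < target_pct


def unit_cost(total_cost: float, units: int) -> float:
    """Cost per business unit (order, API call, model inference)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


if __name__ == "__main__":
    # Hypothetical month: $108,000 spent against a $100,000 budget, 450,000 orders.
    variance = budget_variance_pct(actual_spend=108_000, budget=100_000)
    print(f"variance: {variance:+.1f}% (within target: {within_target(variance)})")
    print(f"cost per order: ${unit_cost(108_000, 450_000):.3f}")
```

Tracking the signed variance, rather than only its magnitude, makes it easier to tell underspend from overspend when reviewing trends.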

Safety, caveats, and common mistakes.

- Treat “lift-and-shift” as a temporary step; otherwise you import technical debt.

- Don’t skip identity design: over-privileged roles are a common cause of early incidents.

- Avoid ungoverned sprawl; standardize patterns (networking, IAM, logging) early.

Mini-plan (example).

- Approve a one-page cloud operating model (roles, guardrails, budgets).

- Stand up a landing zone and migrate a non-critical analytics workload with full tagging and cost alerts.

- Review measured cost/perf vs on-prem baseline; document patterns to reuse.

SaaS, PaaS, and IaaS—how the mix evolved (and where value concentrates)

What it is and core benefits.

- SaaS: Complete applications (CRM, ERP, collaboration) delivered over the internet—fastest time to value, predictable pricing.

- PaaS: Managed building blocks (databases, queues, ML platforms) that accelerate development without full OS management.

- IaaS: VMs, disks, networks—the foundation for compatibility and control when you can’t refactor immediately.

The numbers.

- SaaS remains the largest spending category, with application and software services approaching ~$300B in 2025 forecasts.

- IaaS/PaaS show the fastest growth rates year over year as teams modernize toward cloud-native services and AI platforms.

- Across organizations, over half of workloads now run in public clouds; AI/ML PaaS usage is reported by ~79%, with ~72% using generative AI services in some capacity.

Requirements/prerequisites and low-cost alternatives.

- A vendor evaluation framework (security, data residency, integration, price predictability).

- A lightweight iPaaS or event bus for integrating SaaS with in-house services.

- Low-cost alternative: For small teams, a serverless backend plus a SaaS analytics tool often beats a full microservices build.

Implementation steps (beginner).

- Map business capabilities to service types. Commodity needs → SaaS; differentiated features → PaaS/serverless; legacy compliance → IaaS.

- Define data contracts. Decide where each system is the source of truth; design CDC or event streams between them.

- Automate integration. Use webhook/event-driven patterns over brittle scheduled imports.

Beginner modifications and progressions.

- Start with one core SaaS system plus a managed database; later introduce event streaming and feature toggles to decouple releases.

Recommended frequency/metrics.

- Quarterly: SaaS license utilization (% active seats), integration success rate (>99%), MTTR on integration failures (<1 hour).

- Monthly: Cost per user/capability vs revenue uplift; re-evaluate shelfware.

Safety and pitfalls.

- Data sprawl across SaaS tools is real—centralize identity and logging.

- Watch for hidden egress fees when moving data between services. (Some providers now waive data transfer fees when you migrate off their platform, but routine internet egress is generally still billed.)

Mini-plan.

- Consolidate identity across top five SaaS apps with SSO and SCIM.

- Replace nightly CSV jobs with event-driven sync for one critical flow.

- Review license usage; reclaim or downgrade underused seats.

Containers and Kubernetes—why orchestration won

What it is and core benefits.

Containers package apps with dependencies, enabling consistent deploys across environments. Orchestrators schedule, scale, and heal them automatically.

The numbers.

- Container usage in production ~91% across organizations.

- Kubernetes production use ~80%, with adoption still climbing.

- The primary blockers teams report have shifted from security and complexity to culture, skills, and training, which suggests the tooling has matured while people and process still need to catch up.

Requirements/prerequisites.

- Baseline skills in Dockerfiles, registries, and CI/CD.

- A managed Kubernetes service (for most teams) to avoid control plane toil.

- Image scanning and admission policies; a secrets manager.

Implementation steps (beginner).

- Choose a base image strategy. Keep a small, patched set (e.g., distro-less or slim) and maintain a single approved registry.

- Start with a managed cluster. One node pool, one namespace per team, and network policies enabled.

- Ship one service. Add horizontal pod autoscaling, resource requests/limits, and liveness/readiness probes.

- Add observability. Central logs, metrics, traces; set SLOs for error rate and latency.
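
Horizontal pod autoscaling follows a proportional rule that the Kubernetes documentation gives as desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric). The Python sketch below mirrors that rule for intuition only. The min/max bounds and the tolerance band are illustrative parameters, and the real controller also accounts for pod readiness, missing metrics, and stabilization windows.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Simplified version of the HPA proportional scaling rule."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    # Skip scaling when the metric is close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 pods averaging 90% CPU against a 60% target.
    print(desired_replicas(4, current_metric=90, target_metric=60))
```

Because the rule scales on the ratio of observed to target utilization, the resource requests you set directly shape how aggressively the service scales.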

Beginner modifications and progressions.

- Simplify further: Use a PaaS on top of containers or a serverless containers product to delay cluster operations work.

- Scale up: Introduce multi-cluster, GitOps, service mesh, and progressive delivery (canary/blue-green).

Recommended frequency/metrics.

- Weekly: Deployment frequency and change fail rate; image vulnerability backlog burn-down.

- Monthly: p95 latency vs SLO, pod OOM/restart trends, cost per replica hour.

- Quarterly: Supply-chain security score (signing, SBOM coverage).

Safety and common mistakes.

- Over-allocating CPU/memory inflates cost; under-allocating causes throttling and timeouts.

- Avoid running containers as root or with writable root filesystems; enforce this centrally through admission policy.

Mini-plan.

- Containerize one stateless API with minimal base image and health probes.

- Deploy to a managed cluster via GitOps and set a 99.9% SLO.

- Review cost and performance; right-size requests/limits accordingly.

Serverless and edge—when you don’t need servers (and when you do)

What it is and core benefits.

Serverless lets you run code or containers without managing servers. You pay per invocation or time used; platforms auto-scale to zero and to massive spikes. Edge runtimes push logic closer to users.

The numbers.

- In large customer datasets, 70%+ of organizations on some major clouds use at least one serverless service; ~60% is common on others.

- Container-based serverless platforms continue to rise; on some providers, two-thirds of serverless users now run containerized workloads.

Requirements/prerequisites and low-cost alternatives.

- Stateless functions or containerized microservices, event triggers, and CI/CD tailored to functions.

- Low-cost: For simple APIs, a functions + managed auth + object storage stack can beat “full” Kubernetes on cost and complexity.

Implementation steps (beginner).

- Pick a narrow use case. Scheduled jobs, image processing, webhooks, or bursty APIs.

- Cold-start-aware design. Warm paths with scheduled pings (or provider features), keep dependencies slim, and prefer languages with shorter cold starts for user-facing endpoints.

- Instrument. Capture invocation counts, durations, error rates, and tail latencies; set budgets/alerts by function.

Beginner modifications and progressions.

- Start with event-driven glue between SaaS and your backend.

- Progress to request/response APIs with caching and queues to smooth spikes.

Frequency/metrics.

- Weekly: p95/p99 latency; throttles; retries; cost per million invocations.

- Monthly: Function-level budgets vs actuals; error classes and cold-start contribution to tail latency.
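
To make "cost per million invocations" concrete, here is a minimal Python sketch of a pay-per-use cost model and its break-even point against a fixed-price alternative. All prices are hypothetical placeholders, not any provider's published rates, and the model ignores free tiers, networking, and storage.

```python
def per_invocation_cost(
    duration_ms: float,
    memory_gb: float,
    price_per_request: float,
    price_per_gb_second: float,
) -> float:
    """Request fee plus compute fee for one invocation."""
    compute = (duration_ms / 1000.0) * memory_gb * price_per_gb_second
    return price_per_request + compute


def cost_per_million(duration_ms, memory_gb, price_per_request, price_per_gb_second) -> float:
    """Cost of one million invocations under the same assumptions."""
    return per_invocation_cost(
        duration_ms, memory_gb, price_per_request, price_per_gb_second
    ) * 1_000_000


def break_even_invocations(fixed_monthly_cost: float, invocation_cost: float) -> float:
    """Monthly invocations at which pay-per-use matches a fixed monthly bill."""
    if invocation_cost <= 0:
        raise ValueError("invocation_cost must be positive")
    return fixed_monthly_cost / invocation_cost


if __name__ == "__main__":
    # Hypothetical function: 200 ms at 0.5 GB with placeholder prices.
    unit = per_invocation_cost(200, 0.5, price_per_request=2e-7, price_per_gb_second=1e-5)
    print(f"per million: ${unit * 1_000_000:.2f}")
    print(f"break-even vs a $60/month container: {break_even_invocations(60.0, unit):,.0f} calls")
```

Below the break-even volume the pay-per-use model is cheaper under these assumptions; above it, an always-on service with a commitment may win.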

Safety and mistakes.

- Over-chatty functions (too many small calls) balloon cost and latency.

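- Beware unbounded function concurrency in front of stateful backends (for example, a database with a fixed connection limit); cap concurrency or buffer requests with a queue.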

Mini-plan.

- Migrate a cron job to a function with retries and idempotency keys.

- Add an edge function for cache-friendly route logic.

- Set a cost alert at 80% of monthly budget.
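
The first step of the mini-plan, a job with retries and idempotency keys, can be sketched in Python as follows. Everything here is illustrative: `TransientError`, `run_with_retries`, and `IdempotentRunner` are hypothetical names, and the in-memory dictionary stands in for a durable store (a database table or key-value service) that a real function would need.

```python
import time
from typing import Callable, Dict, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures that are safe to retry."""


def run_with_retries(task: Callable[[], T], attempts: int = 3, base_delay_s: float = 0.5) -> T:
    """Retry a task on TransientError with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except TransientError:
            if attempt == attempts:
                raise
            time.sleep(base_delay_s * 2 ** (attempt - 1))
    raise RuntimeError("attempts must be at least 1")


class IdempotentRunner:
    """Runs a task at most once per idempotency key."""

    def __init__(self) -> None:
        # In-memory stand-in for a durable store; not safe across processes.
        self._completed: Dict[str, object] = {}

    def run(self, idempotency_key: str, task: Callable[[], T], **retry_kwargs) -> T:
        if idempotency_key in self._completed:
            # Duplicate trigger: return the recorded result, do no new work.
            return self._completed[idempotency_key]  # type: ignore[return-value]
        result = run_with_retries(task, **retry_kwargs)
        self._completed[idempotency_key] = result
        return result
```

A natural key for a scheduled job is the job name plus the scheduled date, so a retried or duplicated trigger for the same run does not repeat side effects.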

Multi-cloud, hybrid, and repatriation—choice, portability, and control

What it is and core benefits.

Enterprises increasingly balance public cloud, private/on-prem, and colocation to meet compliance, latency, cost, and sovereignty needs. “Multi-cloud” is more than dual providers; it often includes SaaS, edge, and GPU specialty clouds.

The numbers.

- In broad surveys of end-users and integrators, 37% use two cloud providers and ~26% use three; multi-cloud is prevalent.

- Repatriation is real but measured: respondents report that roughly 21% of cloud workloads have been moved back to private or on-prem environments.

- Data portability improved: major providers now waive data transfer-out fees when you leave their platform, reducing lock-in costs for migrations.

Requirements/prerequisites.

- A clear portability boundary: containers with standard images, infrastructure as code, database abstraction (or change-data-capture for migrations).

- Centralized policy/identity spanning clouds; SSO with role mapping.

Implementation steps (beginner).

- Standardize on an abstraction layer. OCI images, OpenAPI contracts, and a single secrets/PKI pattern.

- Adopt cross-cloud IaC. Keep provider-specific modules behind reusable interfaces.

- Plan exit ramps. Document per-service “if we had to move tomorrow” steps; test at least one controlled migration.

Beginner modifications and progressions.

- Start with active-active across two regions in a single cloud before adding a second provider; then pilot a read-only copy in a second cloud.

Frequency/metrics.

- Quarterly: % services with a tested failover plan; RTO/RPO drill results; % assets under policy controls in all environments.

- Annually: A measured cost-benefit review of multi-cloud complexity vs risk reduction.

Safety and mistakes.

- Multi-cloud without a solid platform team magnifies toil.

- Beware egress you still pay: waived exit fees apply to switching providers, not day-to-day data transfer.

Mini-plan.

- Put one production service behind portable contracts with IaC-based deploys to two clouds.

- Run a game-day: fail traffic from cloud A to cloud B; document RTO/RPO.

- Decide which services merit multi-cloud vs single-cloud depth.

AI meets cloud—GPU capacity, cost curves, and power reality

What it is and core benefits.

Cloud providers now sell on-demand access to accelerated compute (GPUs/AI chips), managed vector stores, model hosting, and training/inference platforms—making AI workloads feasible without owning hardware.

The numbers.

- Cloud infrastructure growth accelerated post-late-2022 with generative AI demand; GenAI-specific services have seen triple-digit growth from a low base, and specialty GPU clouds have climbed into top-provider rankings by growth rate.

- Electricity demand from data centers is projected to roughly double by 2030, surpassing 1,000 TWh, with AI a primary driver.

Requirements/prerequisites.

- Capacity planning for accelerators; region/zone power and cooling constraints.

- Data governance for training sets; lineage and rights.

- Cost controls for training and inference (quota, budget, and unit economics).

Implementation steps (beginner).

- Start with managed inference. Host a fine-tuned small/medium model behind a managed endpoint; measure cost per 1,000 tokens/images.

- Pilot vector search. Add retrieval-augmented generation with a managed vector DB; benchmark latency and accuracy.

- Right-size hardware. Test multiple instance types and batch sizes; choose the cheapest that meets latency SLOs.
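
The right-sizing step above amounts to a small selection problem: among benchmarked configurations, keep those that meet the latency SLO and pick the one with the lowest cost per unit of work. A minimal Python sketch, assuming you have already collected benchmark rows (the `Benchmark` fields, instance names, and numbers are all hypothetical):

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Benchmark:
    instance: str            # hypothetical instance label
    batch_size: int
    hourly_price: float      # USD per hour
    p95_latency_ms: float
    throughput_per_s: float  # successful inferences per second


def cost_per_1k(b: Benchmark) -> float:
    """Cost of 1,000 inferences at the measured throughput."""
    return b.hourly_price / (b.throughput_per_s * 3600.0) * 1000.0


def cheapest_within_slo(benchmarks: Iterable[Benchmark], slo_ms: float) -> Optional[Benchmark]:
    """Cheapest configuration whose p95 latency meets the SLO, or None."""
    eligible = [b for b in benchmarks if b.p95_latency_ms <= slo_ms]
    return min(eligible, key=cost_per_1k) if eligible else None


if __name__ == "__main__":
    runs = [
        Benchmark("small-gpu", 1, 1.0, 180.0, 20.0),
        Benchmark("medium-gpu", 4, 2.0, 250.0, 60.0),
        Benchmark("large-gpu", 8, 4.0, 350.0, 200.0),
    ]
    choice = cheapest_within_slo(runs, slo_ms=300.0)
    print(choice.instance if choice else "no configuration meets the SLO")
```

Note that the largest instance is cheapest per inference in this made-up data, but it misses a 300 ms SLO, which is why the latency filter comes before the cost comparison.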

Beginner modifications and progressions.

- Keep early workloads stateless and spot-capable; progress to distributed training only after proving inference value.

Frequency/metrics.

- Weekly: Cost per inference and per successful completion; p95 latency; error rate of grounding/retrieval.

- Monthly: Model quality metrics (precision, hallucination rate) and feature-level unit economics (cost per embedded document, per recommendation).

Safety and mistakes.

- Shadow AI usage bypassing governance; enforce policies, audit logs, and DPIA (where required).

- Treat model updates like code: version, roll back, monitor.

Mini-plan.

- Deploy an inference endpoint with budget and throughput limits.

- Add retrieval; bias toward smaller, cheaper models when quality is “good enough.”

- Review cost per business event (e.g., per support ticket deflected).

Reliability—what the uptime math (and outage costs) really say

What it is and core benefits.

Reliability is measurable. Uptime targets translate into precise allowable downtime budgets, and outages have well-quantified financial impacts.

The numbers.

- A majority of organizations reported an outage within the prior three years, though most were low impact. Even so, ~54% of significant incidents cost more than $100,000, and ~20% cost more than $1M.

- 99.9% (“three nines”) allows ~43 minutes of downtime per month; 99.99% (“four nines”) allows ~4 minutes per month.
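
The downtime figures follow directly from the availability target. A short Python sketch of the arithmetic, assuming a 30-day month (the helper name is illustrative):

```python
def allowed_downtime_minutes(availability_pct: float, period_days: float = 30.0) -> float:
    """Downtime budget in minutes for an availability target over a period."""
    if not 0.0 <= availability_pct <= 100.0:
        raise ValueError("availability_pct must be between 0 and 100")
    unavailable_fraction = (100.0 - availability_pct) / 100.0
    return unavailable_fraction * period_days * 24.0 * 60.0


if __name__ == "__main__":
    for target in (99.9, 99.99):
        print(f"{target}% -> {allowed_downtime_minutes(target):.1f} minutes per 30-day month")
```

With a 30-day month this gives about 43.2 and 4.3 minutes; calculators that use an average-length month report slightly higher values.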

Requirements/prerequisites.

- SLOs per service (availability and latency), error budgets, and runbooks.

- Region/AZ spreading and automated failover; chaos testing.

Implementation steps (beginner).

- Define SLOs and error budgets. Tie them to business outcomes and inform release velocity.

- Build redundancy. Use multi-AZ deployments by default; move state to managed HA services where possible.

- Practice failure. Run monthly game-days; measure MTTR and playbook accuracy.

Beginner modifications and progressions.

- Start with multi-AZ; add multi-region only for services with strict RTO/RPO.

- Adopt service-level error budget policies to throttle risky deploys when you’re “in the red.”
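
An error budget policy can be expressed as a small check in the release pipeline. The Python sketch below is one possible shape, not a standard API: it computes the fraction of the budget remaining from request counts and blocks risky deploys once the budget is spent. The function names and the freeze threshold are assumptions.

```python
def error_budget_remaining(slo_pct: float, total_requests: int, failed_requests: int) -> float:
    """Fraction of the error budget left (1.0 = untouched, below 0 = overspent)."""
    allowed_failures = total_requests * (100.0 - slo_pct) / 100.0
    if allowed_failures <= 0:
        # No traffic or a 100% SLO: any failure exhausts the budget.
        return 1.0 if failed_requests == 0 else 0.0
    return 1.0 - failed_requests / allowed_failures


def deploy_allowed(remaining_fraction: float, freeze_below: float = 0.0) -> bool:
    """Permit risky deploys only while budget remains above the freeze threshold."""
    return remaining_fraction > freeze_below


if __name__ == "__main__":
    # Hypothetical window: 1,000,000 requests, 400 failures, 99.9% SLO.
    remaining = error_budget_remaining(99.9, 1_000_000, 400)
    print(f"budget remaining: {remaining:.0%}, deploy allowed: {deploy_allowed(remaining)}")
```

Teams often set the freeze threshold above zero so that some budget is held in reserve for unplanned incidents.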

Frequency/metrics.

- Weekly: SLO compliance; incidents per deploy; MTTR.

- Monthly: Downtime vs budget; post-mortem action completion rate.

Safety and mistakes.

- Chasing five-nines everywhere is wasteful; align availability to user impact.

- Don’t neglect data backups and restore testing—untested backups are gambles.

Mini-plan.

- Pick two critical services; set 99.9% SLOs and dashboards.

- Add multi-AZ and a managed database with cross-AZ replicas.

- Run a failover drill; fix whatever breaks first.

Cloud costs and FinOps—turn spend into strategy

What it is and core benefits.

FinOps combines engineering, finance, and product to maximize the business value of cloud. It operationalizes cost visibility, accountability, and optimization.

The numbers.

- Managing cloud spend is cited as the top challenge by the vast majority of organizations.

- Estimated waste in IaaS/PaaS spend is still ~27% (down from earlier highs but significant).

- FinOps teams are expanding, with a clear majority of enterprises reporting dedicated FinOps roles.

- Organizations report exceeding cloud budgets by ~17% on average while planning ~28% spend growth year over year; without strong governance, overruns compound.

Requirements/prerequisites.

- Tagging and hierarchy standards (cost centers, owners, environments).

- Budgets, alerts, and anomaly detection integrated into CI/CD.

- Commit/savings plan management; rightsizing; idle resource policies.

Implementation steps (beginner).

- Establish a taxonomy. Mandatory tags: owner, app, env, cost_center, compliance.

- Automate guardrails. Policies that stop untagged resources and out-of-bounds instance types.

- Quick wins. Turn off idle instances outside business hours; downsize over-provisioned clusters; buy commitments for steady loads.

- Unit economics. Define “cost per transaction” or “per 1,000 recommendations” so teams see business impact.
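
The tagging guardrail described above can be prototyped before wiring it into a real policy engine. This Python sketch checks a deployment plan against the mandatory tag set from the taxonomy step; the resource dictionary shape is a hypothetical simplification of what an IaC plan or cloud inventory would return.

```python
from typing import Dict, List, Mapping

# Mandatory tags from the taxonomy step.
REQUIRED_TAGS = ("owner", "app", "env", "cost_center", "compliance")


def missing_tags(tags: Mapping[str, str]) -> List[str]:
    """Required tag keys that are absent or blank."""
    return [key for key in REQUIRED_TAGS if not str(tags.get(key, "")).strip()]


def violations(resources: List[dict]) -> Dict[str, List[str]]:
    """Map each non-compliant resource name to its missing tags."""
    report = {}
    for resource in resources:
        missing = missing_tags(resource.get("tags", {}))
        if missing:
            report[resource["name"]] = missing
    return report


if __name__ == "__main__":
    plan = [
        {"name": "orders-db", "tags": {"owner": "team-a", "app": "orders", "env": "prod",
                                       "cost_center": "cc-42", "compliance": "pci"}},
        {"name": "scratch-vm", "tags": {"owner": "team-b", "env": "dev"}},
    ]
    problems = violations(plan)
    # A CI step could fail the pipeline whenever this report is non-empty.
    print(problems or "all resources tagged")
```

Running a check like this in CI gives teams fast feedback, while the provider-side policy remains the enforcement point of record.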

Beginner modifications and progressions.

- Start with showback to build culture; progress to chargeback once metrics stabilize.

- Expand FinOps scope beyond public cloud to SaaS and data center spend to get a full picture.

Frequency/metrics.

- Weekly: Unattached storage volumes, orphaned IPs, idle databases.

- Monthly: Savings rate (actual vs potential), forecast accuracy, commitment coverage.

- Quarterly: Cost-to-revenue ratios by product line.

Safety and mistakes.

- Over-rotating on discounts without workload hygiene locks you in and hides waste.

- “Cost cuts” that degrade user experience destroy value—tie savings to SLOs.

Mini-plan.

- Enforce tags and block untagged deployments.

- Rightsize the top five over-provisioned workloads; purchase commitments for steady capacity.

- Publish a dashboard with unit metrics and celebrate the first 10% savings.

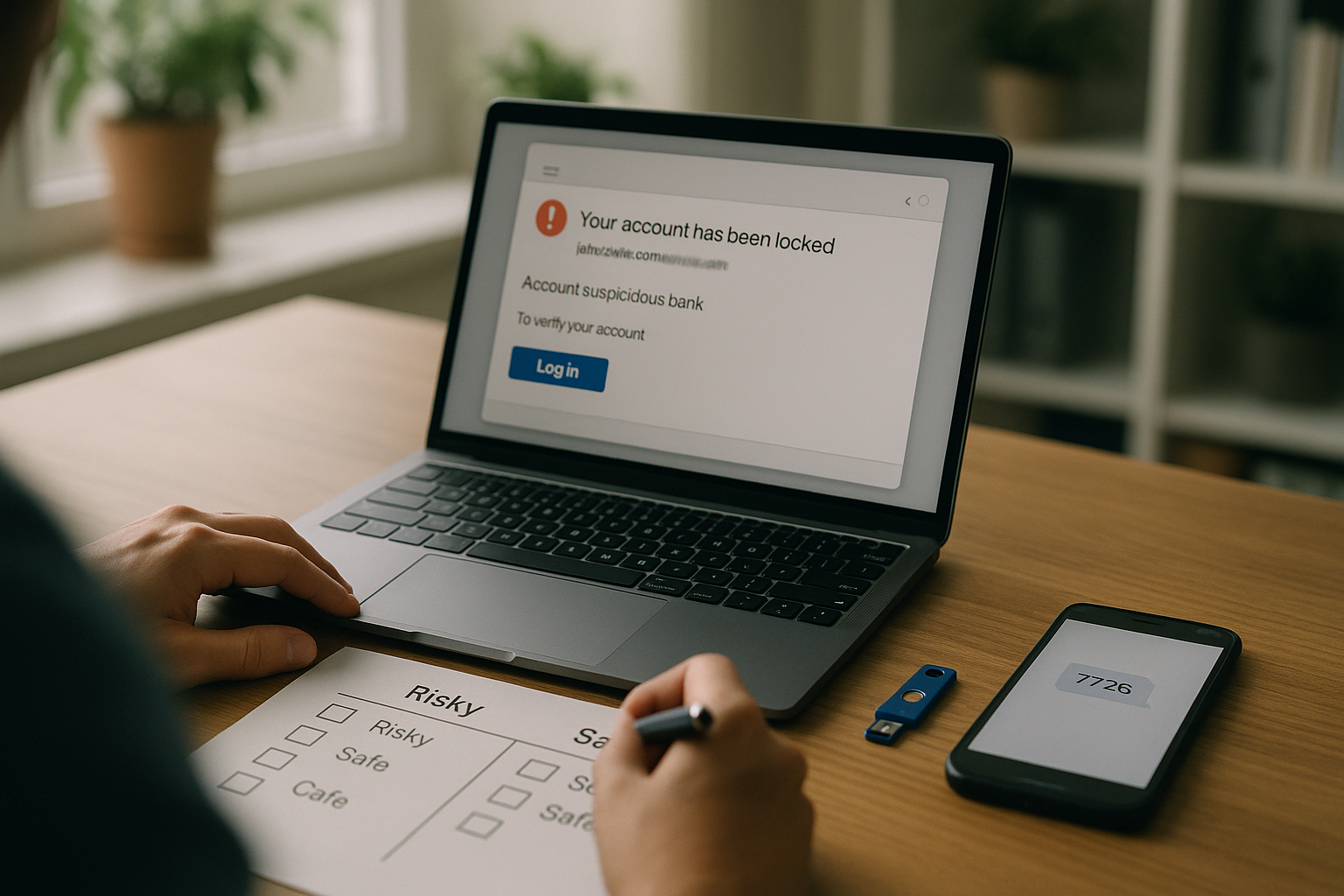

Security and compliance—the risk profile of modern cloud

What it is and core benefits.

Cloud security emphasizes shared responsibility: providers secure the infrastructure; customers secure configurations, identities, and data. The economics of breaches are well documented.

The numbers.

- The global average data breach cost in 2024 was ~$4.88M, representing a sharp year-over-year increase. Updated analyses in 2025 show a lower but still material average around the mid-$4M mark.

- Rising vulnerability exploitation and misconfiguration remain leading initial access vectors; large incident datasets show significant portions of attacks tracing back to preventable configuration and privilege errors.

Requirements/prerequisites.

- Strong IAM: least privilege, short-lived credentials, and role separation.

- Centralized logging, SIEM integration, and continuous posture management.

- Encryption by default, key management, and data classification.

- Third-party risk management and software supply-chain controls (SBOMs, signing).

Implementation steps (beginner).

- Identity first. Replace user keys with SSO + MFA + roles; kill long-lived credentials.

- Harden the perimeter. Private subnets, restricted security groups, egress controls, and WAFs on public endpoints.

- Automate posture checks. Use baseline policies for CIS benchmarks and misconfig detection; fix high-risk findings within set SLAs.

- Backups and drills. Immutable backups and quarterly restore tests; tabletop exercises for incident response.

Beginner modifications and progressions.

- Start with a managed secrets service and rotate automatically; progress to hardware-backed keys and workload identity.

Frequency/metrics.

- Weekly: Critical misconfigs open >7 days (target 0); unscanned images (target 0).

- Monthly: Mean time to detect and contain; % assets covered by continuous scanning; % third-party packages with known vulnerabilities.

- Quarterly: Incident tabletop exercises and restore tests completed.
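
The weekly "critical misconfigs open >7 days" metric is easy to compute once findings are exported from a posture tool. A minimal Python sketch, assuming a hypothetical `Finding` record with a severity label and an opened timestamp:

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Iterable, List


@dataclass(frozen=True)
class Finding:
    resource: str
    severity: str         # e.g. "critical", "high", "medium"
    opened_at: datetime   # timezone-aware timestamp


def overdue_criticals(findings: Iterable[Finding], now: datetime, max_age_days: int = 7) -> List[Finding]:
    """Critical findings that have been open longer than the allowed age."""
    cutoff = now - timedelta(days=max_age_days)
    return [f for f in findings if f.severity == "critical" and f.opened_at < cutoff]


if __name__ == "__main__":
    now = datetime(2025, 6, 15, tzinfo=timezone.utc)
    findings = [
        Finding("public-bucket", "critical", datetime(2025, 6, 1, tzinfo=timezone.utc)),
        Finding("open-ssh", "critical", datetime(2025, 6, 12, tzinfo=timezone.utc)),
        Finding("old-tls", "medium", datetime(2025, 5, 1, tzinfo=timezone.utc)),
    ]
    print([f.resource for f in overdue_criticals(findings, now)])
```

The target for this list is empty; anything it returns is a candidate for escalation.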

Safety and mistakes.

- Over-privileged service roles and open object storage buckets are still the most common foot-guns.

- “Compliance equals security” is a myth; test real-world attack paths.

Mini-plan.

- Eliminate static keys; enforce MFA and short-lived roles.

- Block public storage by policy; require server-side encryption.

- Run a purple-team exercise and fix the top three findings.

Sustainability—the energy and emissions footprint you can control

What it is and core benefits.

Sustainability goals now influence data center siting, workload scheduling, and architectural choices. Cloud provides transparency (to varying degrees) and the leverage to run with better PUE and greener grids.

The numbers.

- Average PUE ~1.56 remains stubbornly flat due to legacy facilities; modern builds can be far lower.

- Global electricity supply for data centers is estimated at ~460 TWh in 2024 and is projected to exceed 1,000 TWh by 2030 and 1,300 TWh by 2035, with AI workloads a significant driver.

- A growing share of organizations track cloud carbon footprints and incorporate those metrics into optimization and reporting.

Requirements/prerequisites.

- Provider-supplied energy/CO₂ dashboards (where available) and internal tagging for carbon accounting.

- Policies to select lower-carbon regions and schedule non-urgent jobs when grids are cleaner.

- Awareness of embodied carbon in hardware and code efficiency.

Implementation steps (beginner).

- Turn on sustainability dashboards. Tag workloads by business unit; measure kgCO₂e per transaction or per user.

- Pick regions wisely. Prefer greener grids where latency allows; avoid power-constrained zones for growth workloads.

- Reduce waste. Right-size, shut down idle resources, and use serverless for intermittent tasks that otherwise idle.
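
Where a provider dashboard is unavailable, kgCO₂e per transaction can be approximated from energy use. The Python sketch below uses a common operational-emissions estimate (IT energy × PUE × grid carbon intensity) and ignores embodied carbon. The energy figure, grid intensity, and transaction count are hypothetical inputs; the PUE of 1.56 is the survey average quoted above.

```python
def operational_kgco2e(it_energy_kwh: float, pue: float, grid_kgco2e_per_kwh: float) -> float:
    """Operational emissions: IT energy scaled by facility overhead and grid intensity."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_energy_kwh * pue * grid_kgco2e_per_kwh


def kgco2e_per_transaction(total_kgco2e: float, transactions: int) -> float:
    """Emissions attributed to a single unit of business output."""
    if transactions <= 0:
        raise ValueError("transactions must be positive")
    return total_kgco2e / transactions


if __name__ == "__main__":
    # Hypothetical month: 1,000 kWh of IT energy on a 0.4 kgCO2e/kWh grid.
    total = operational_kgco2e(1_000, pue=1.56, grid_kgco2e_per_kwh=0.4)
    print(f"total: {total:.0f} kgCO2e")
    print(f"per transaction: {kgco2e_per_transaction(total, 1_000_000) * 1000:.3f} gCO2e")
```

Because grid intensity is a direct multiplier, moving the same workload to a cleaner grid lowers the per-transaction figure proportionally, which is the rationale for the region-selection step.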

Beginner modifications and progressions.

- Start with reporting; progress to policy-driven placement and carbon-aware scheduling.

Frequency/metrics.

- Monthly: kgCO₂e per unit of business output; % workloads in low-carbon regions.

- Quarterly: Reduction in idle resource hours; % batch jobs scheduled during greener windows.

Safety and mistakes.

- “100% renewable” marketing often refers to credits, not local grid mix—validate local conditions.

- Don’t degrade SLOs chasing a green region; use replication and caching.

Mini-plan.

- Enable carbon reporting and tag top 10 services.

- Move one analytics workload to a greener region without harming user latency.

- Add idle-shutdown policies for dev/test and batch.

Quick-start cloud checklist

- Executive-approved cloud operating model with budgets, guardrails, and roles

- Secure landing zone (accounts/projects, org policies, IAM, network, logging)

- Mandatory tags and budget alerts; anomaly detection enabled

- CI/CD with IaC and a basic release policy (rollbacks, approvals)

- SLOs and dashboards for two critical services

- Backup and restore test completed; incident playbooks documented

- FinOps working group formed; first savings plan or commitment purchased

- Sustainability dashboard enabled; initial carbon metrics captured

Troubleshooting & common pitfalls

- Budget “mystery” spikes: Turn on anomaly alerts tied to tags; require cost approvals for new instance families and large storage classes.

- Noisy neighbor performance: Add SLOs and autoscaling; provision IOPS-optimized storage; move hot data to faster tiers.

- Cold starts in serverless APIs: Keep deployment packages small; consider provisioned concurrency or container-based serverless.

- Container restarts and OOMs: Right-size requests/limits; check memory leaks; add liveness/readiness probes.

- Multi-cloud whiplash: Consolidate platform tooling; define portability boundaries; test exit ramps annually.

- Security drift: Automate posture checks; block non-compliant deploys; rotate secrets; run quarterly restore drills.

- Sustainability blind spots: Track carbon per unit outcome; right-size; schedule batch to greener windows.

How to measure progress (and prove ROI)

- Financial: Forecast accuracy (target ±5–10%), percentage of spend covered by commitments, savings realized vs potential, unit economics per product.

- Operational: Deployment frequency, lead time, change fail rate, MTTR, SLO compliance, incident action completion.

- Security: Time to remediate critical findings, coverage of continuous scanning, % assets with least-privilege roles.

- Sustainability: kgCO₂e per user/transaction, % workloads in low-carbon regions, idle hour reduction.

- Adoption/Enablement: % teams using golden patterns, % apps on containers/serverless/PaaS, training completion rates.

A simple 4-week starter plan (adapt to your context)

Week 1: Foundations and visibility

- Approve a one-page cloud operating model and a tagging standard.

- Stand up a secure landing zone and connect SSO; enable budgets and anomaly alerts.

- Inventory top 10 services by cost and define two business-level unit metrics.

Week 2: First migration and SLOs

- Migrate one low-risk workload (e.g., an internal API) to a managed PaaS or container service.

- Set 99.9% SLOs and instrument latency, errors, and cost.

- Turn on security posture management; fix critical misconfigurations.

Week 3: Optimize and govern

- Rightsize compute and storage; buy small commitments for steady capacity.

- Add backup, restore testing, and a monthly game-day; document RTO/RPO.

- Enable sustainability reporting; tag migrated workloads.

Week 4: Expand and standardize

- Move a second workload using the learned pattern; consider serverless for bursty tasks.

- Publish a dashboard with unit costs, SLOs, and carbon for the two workloads.

- Hold a retrospective; add top three improvements to your platform backlog.

FAQs

1) Is repatriation a real trend or just headlines?

It’s real but measured. Many organizations report moving a non-trivial minority (~one-fifth) of workloads back to private or on-prem infrastructure, typically for latency, compliance, predictability, or cost control—and often within a broader hybrid strategy.

2) How can I prevent cloud cost overruns?

Start with mandatory tagging, budgets and alerts, and showback. Then attack rightsizing, idle shutdown, and commitment management. Graduating to unit economics ensures engineering decisions consider cost per business outcome.

3) Is multi-cloud worth it for everyone?

No. It adds operational complexity. It’s most valuable for sovereignty, vendor risk, or very high availability requirements. For many teams, multi-region in one cloud delivers most of the resilience at lower cost.

4) Do free egress programs mean I won’t pay to move data?

Free egress for leaving a provider reduces switching costs, but routine internet egress (and inter-cloud data transfer) typically still incurs charges. Design for locality and caching.

5) How do I choose between containers and serverless?

Use serverless for spiky, event-driven, or small APIs where cold start impact is manageable. Choose containers for long-running services, heavy customization, or when you need consistent per-pod resources and portability.

6) What’s a good uptime target?

Align to user impact and cost. 99.9% allows ~43 minutes downtime per month; 99.99% allows ~4 minutes. Start at three nines for most internal systems and raise only where the business case supports it.

7) Are AI workloads only for hyperscalers?

No. Managed inference endpoints and smaller models make AI accessible. Start with inference and measure cost per successful business outcome before attempting distributed training.

8) How should we measure cloud sustainability?

Track kgCO₂e per unit (e.g., per order), % workloads in low-carbon regions, and idle hours. Prefer regions with greener grids when latency allows, and use serverless to reduce idle compute.

9) What’s the fastest way to reduce security risk in the cloud?

Eliminate static keys, enforce MFA, restrict public access to storage, and automate posture checks. Test restores and incident response regularly.

10) How do we avoid tool sprawl?

Publish golden patterns (IaC modules, logging, identity) and a short approved services catalog. Require exceptions to be documented and time-boxed.

11) When should we add a second cloud?

After you’ve stabilized operations in one cloud, have SLOs/SRE practices in place, and can articulate a clear benefit (e.g., regulatory, latency, or a critical dependency risk). Pilot with a non-critical workload first.

12) Is serverless actually cheaper?

It can be—for bursty or low-duty-cycle workloads and operationally for smaller teams. For steady, high-throughput services, containers or even VMs with commitments often win on cost.

Conclusion

The evolution of cloud-based software is best understood through the numbers: hundreds of billions in annual spend, quarterly infrastructure spend near $100B and growing more than 20% year over year, mainstream container and serverless adoption, measurable outage costs, and clear governance and sustainability metrics. The organizations that win aren’t just “in the cloud”; they operate the cloud as a measurable system: cost as code, reliability as SLOs, security as posture, sustainability as a tracked KPI, and product impact as unit economics. Start small, measure early, and scale the practices that pay off.

Ready to turn your cloud into measurable business value? Pick one workload, one metric, and one improvement from this guide, and ship it this month.

References

- AI Helps Cloud Market Growth Rate Jump to Almost 25% in Q1, Synergy Research Group, May 1, 2025. https://www.srgresearch.com/articles/ai-helps-cloud-market-growth-rate-jump-back-to-almost-25-in-q1

- 2025 State of the Cloud Report, Flexera, 2025 (landing page with highlights). https://info.flexera.com/CM-REPORT-State-of-the-Cloud

- Cloud Native 2024: Approaching a Decade of Code, Cloud, and Change (CNCF Annual Survey 2024), The Linux Foundation, March 2025 (PDF). https://www.cncf.io/wp-content/uploads/2025/04/cncf_annual_survey24_031225a.pdf

- The State of Serverless, Datadog, updated August 2023. https://www.datadoghq.com/state-of-serverless/

- Global Data Center Survey 2024 (Keynote Report), Uptime Institute, 2024 (PDF). https://datacenter.uptimeinstitute.com/rs/711-RIA-145/images/2024.GlobalDataCenterSurvey.Report.pdf

- Global cloud spend to surpass $700B in 2025 as hybrid adoption spreads: Gartner, CIO Dive, November 19, 2024. https://www.ciodive.com/news/cloud-spend-growth-forecast-2025-gartner/733401/

- Cloud spending to reach a staggering $723bn in 2025, Yahoo Finance, January 4, 2025. https://finance.yahoo.com/news/cloud-spending-reach-staggering-723bn-110300560.html

- Free Data Transfer Out to Internet When Moving Out of AWS, AWS Official Blog, March 5, 2024. https://aws.amazon.com/blogs/aws/free-data-transfer-out-to-internet-when-moving-out-of-aws/

- The CMA anti-trust investigation into AWS and Microsoft explained: Everything you need to know, Computer Weekly, January 28, 2025. https://www.computerweekly.com/feature/The-CMA-anti-trust-investigation-into-AWS-and-Microsoft-explained-Everything-you-need-to-know

- AI is set to drive surging electricity demand from data centres, International Energy Agency, April 10, 2025. https://www.iea.org/news/ai-is-set-to-drive-surging-electricity-demand-from-data-centres-while-offering-the-potential-to-transform-how-the-energy-sector-works

- Energy supply for AI, International Energy Agency, accessed August 13, 2025. https://www.iea.org/reports/energy-and-ai/energy-supply-for-ai

- Uptime and downtime with 99.9% SLA, uptime.is, accessed August 13, 2025. https://uptime.is/

- Uptime and downtime with 99.99% SLA, uptime.is, accessed August 13, 2025. https://uptime.is/99.99
