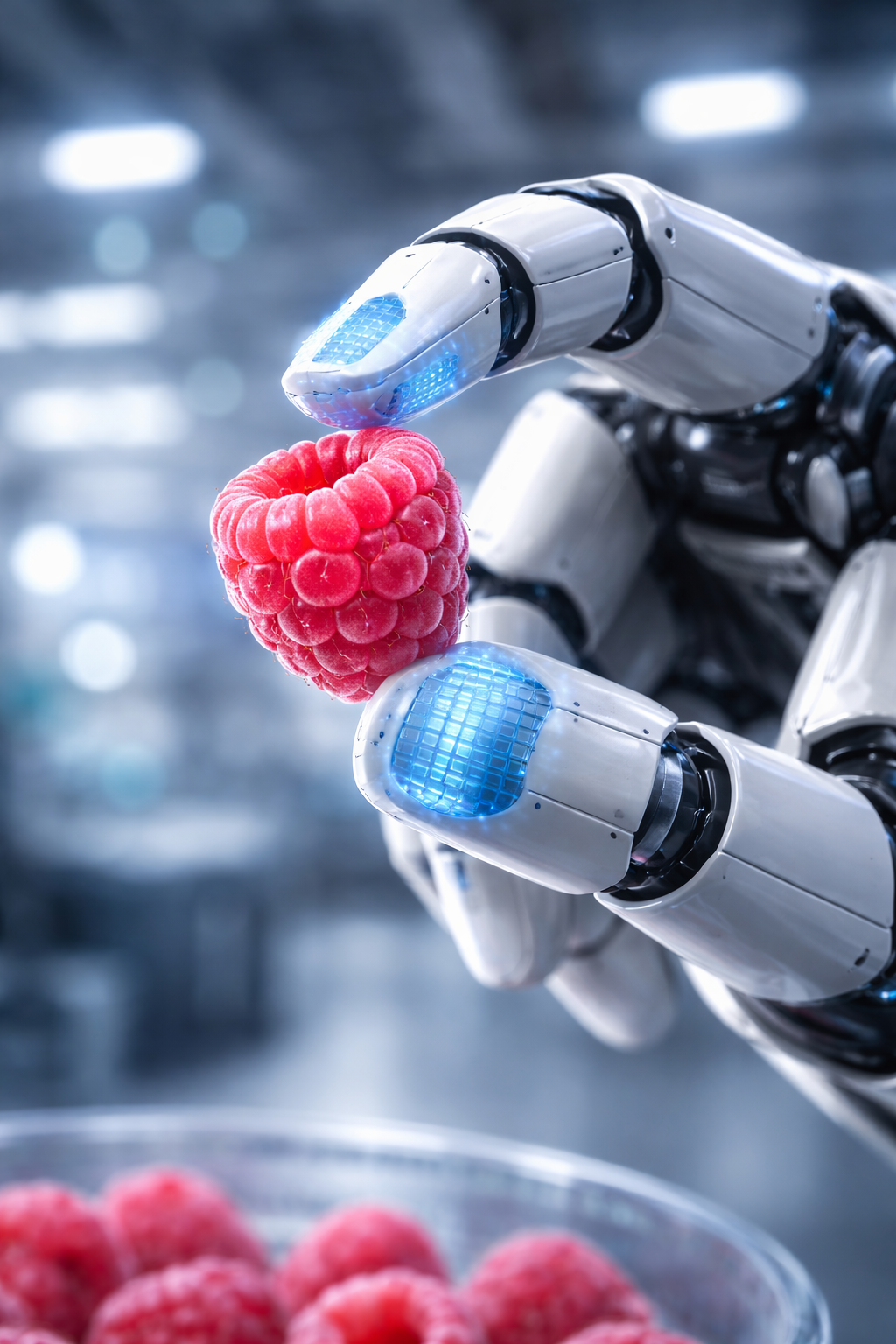

Tactile sensing is the ability of a robotic system to detect physical interaction with its environment through touch. While robots have excelled at “seeing” through advanced computer vision for decades, “feeling” has remained one of the most difficult hurdles in engineering. As of March 2026, we are witnessing a paradigm shift where robots no longer just “see” an object; they understand its texture, temperature, and weight through a complex layer of electronic skin (e-skin).

Key Takeaways

- Historical Shift: We have moved from simple binary “on/off” contact switches in the 1970s to multi-modal graphene-based skins that rival human fingertip sensitivity.

- Diverse Technologies: Today’s robotic hands use a mix of capacitive, piezoresistive, and optical sensors to process data.

- AI Integration: Modern tactile sensing relies heavily on “Tactile Intelligence”—AI models that interpret billions of data points to prevent object slippage in real-time.

- Biological Mimicry: The most successful 2026 designs mimic the four primary mechanoreceptors found in human skin.

Who This Is For

This guide is designed for robotics engineers, prosthetic developers, and tech enthusiasts who want to understand the deep technical history and the cutting-edge future of haptic technology. Whether you are building a collaborative robot (cobot) for a factory or researching the next generation of bionic limbs, understanding the evolution of touch is critical.

1. The Biological Blueprint: Why Touch is Hard to Replicate

To understand the evolution of robotic touch, we must first look at the gold standard: the human hand. Our skin is not just a protective layer; it is a massive, distributed sensor network.

Human skin contains thousands of specialized sensors called mechanoreceptors. These are categorized based on what they detect and how fast they react:

- Meissner’s Corpuscles: Detect light touch and low-frequency vibration (perfect for sensing when an object is slipping).

- Pacinian Corpuscles: Detect deep pressure and high-frequency vibrations (used for feeling textures).

- Merkel Disks: Provide information on pressure and spatial features (like the edge of a coin).

- Ruffini Endings: Sense skin stretch (crucial for knowing the shape of a gripped object).

For decades, roboticists struggled to pack this level of density and multi-modality into a mechanical hand. Early robots suffered from “clumsiness” because they lacked the feedback loops required to adjust their grip strength on the fly.

2. The Origins (1965–1979): The Era of the Mechanical Switch

The earliest “tactile” sensors were remarkably primitive. In the late 1960s and early 70s, industrial robots like the Unimate were designed for heavy lifting in car factories. They didn’t need to “feel” a grape; they needed to know if a heavy steel beam was present or absent.

The Binary Limit

Early sensing was almost entirely binary. Engineers used limit switches—essentially small buttons—at the end of a gripper. When the gripper closed and hit the object, the button would click “on.” This told the computer, “Object detected.”

The Problem: There was no scale. The robot couldn’t tell if it was holding a brick or a lightbulb. This led to thousands of broken parts and the realization that robotics needed a way to measure force, not just presence.

3. The Foundations and Growth (1980–1994): The Rise of “Taxels”

In the 1980s, the concept of the Taxel (Tactile Element) was born. Much like a pixel is a single point of light in a digital image, a taxel is a single point of pressure on a robotic surface.

The Introduction of Piezoresistivity

During this era, researchers began using piezoresistive materials. These are substances (like carbon-filled rubber) that change their electrical resistance when squeezed.

- How it worked: You place a grid of wires behind a piezoresistive sheet. When a robotic finger presses an object, the resistance drops at the point of contact.

- The Breakthrough: For the first time, robots could see a “pressure map” of what they were touching. This allowed for basic shape recognition.
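
The pressure-map idea above can be sketched in a few lines of Python. This is a minimal illustration rather than any particular sensor's driver: the resistance values, the resting resistance `R_REST_OHMS`, and both helper functions are assumptions made for the example.

```python
# Hypothetical 4x4 taxel readout in ohms; lower resistance means more pressure.
R_REST_OHMS = 10_000.0  # assumed unloaded resistance of each taxel

def pressure_map(resistances, r_rest=R_REST_OHMS):
    """Convert resistances to a unitless 0..1 pressure estimate per taxel."""
    return [[max(0.0, (r_rest - r) / r_rest) for r in row] for row in resistances]

def contact_centroid(pmap):
    """Return the pressure-weighted (row, col) centre of contact, or None."""
    total = sum(sum(row) for row in pmap)
    if total == 0:
        return None
    row_c = sum(i * p for i, row in enumerate(pmap) for p in row) / total
    col_c = sum(j * p for row in pmap for j, p in enumerate(row)) / total
    return row_c, col_c

readings = [
    [10_000, 10_000, 10_000, 10_000],
    [10_000,  6_000,  6_000, 10_000],
    [10_000,  6_000,  6_000, 10_000],
    [10_000, 10_000, 10_000, 10_000],
]
pmap = pressure_map(readings)
print(contact_centroid(pmap))  # centre of the 2x2 pressed patch
```

A real array would also need a calibrated resistance-to-pressure curve; the linear mapping here is only a placeholder.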

Capacitive Arrays

Parallel to piezoresistivity, capacitive sensing emerged. This technology, the same kind used in smartphone screens, measures capacitance: the ability of two conductors to store electrical charge. By separating two conductive layers with a soft, compressible foam, engineers created sensors in which pressure narrows the gap between the layers and raises the capacitance, making them highly sensitive to even the lightest touch.
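
As a rough worked example, the ideal parallel-plate formula C = ε₀·εᵣ·A/d shows why a compressible gap works as a pressure sensor. The taxel dimensions and the foam's relative permittivity below are assumed values chosen for illustration, and real sensors deviate from the ideal model.

```python
EPSILON_0 = 8.854e-12  # vacuum permittivity in F/m

def plate_capacitance(area_m2, gap_m, rel_permittivity):
    """Ideal parallel-plate capacitance in farads."""
    return EPSILON_0 * rel_permittivity * area_m2 / gap_m

# Assumed taxel: 2 mm x 2 mm electrodes, 0.5 mm foam gap, relative permittivity 3.
AREA = 2e-3 * 2e-3
rest = plate_capacitance(AREA, 0.5e-3, 3.0)
pressed = plate_capacitance(AREA, 0.4e-3, 3.0)  # foam compressed by 20 %

print(f"rest: {rest * 1e12:.3f} pF, pressed: {pressed * 1e12:.3f} pF")
print(f"relative change: {(pressed - rest) / rest:.0%}")
```

Because capacitance is inversely proportional to the gap, compressing the foam by 20% raises the capacitance by 25% in this idealized model.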

4. The “Tactile Winter” (1995–2009): The Hardware Bottleneck

While the 80s were full of optimism, the late 90s saw a slowdown often referred to by academics as the “Tactile Winter.” Progress didn’t stop, but it hit a wall of practical reality.

The Durability Crisis

Most tactile sensors of this era were incredibly fragile. The very materials that made them sensitive (thin films and delicate wires) made them useless in real-world factories. If a robotic hand scraped against a metal edge, the sensor was destroyed.

The Wiring Nightmare

As engineers tried to increase resolution (adding more taxels), they ran into a logistical problem. A 16×16 sensor array has 256 taxels, and wiring each one individually requires 256 signal wires. With five fingers, more than 1,000 wires run through a wrist joint. These wires would inevitably fatigue, snap, and cause system failure.
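
The wire count is simple arithmetic, sketched below. For comparison, the snippet also counts wires for row-column scanning, a common multiplexing scheme that is not discussed above and is included here only as an assumption to show the scale of the problem. Both function names are hypothetical.

```python
def wires_individual(rows, cols):
    """One signal wire per taxel (ignoring any shared ground)."""
    return rows * cols

def wires_row_column(rows, cols):
    """Row-column scanning: one wire per row plus one per column."""
    return rows + cols

FINGERS = 5
print(wires_individual(16, 16) * FINGERS)  # 1280
print(wires_row_column(16, 16) * FINGERS)  # 160
```

Multiplexing cuts the count sharply, but it adds scanning electronics and crosstalk concerns, so it trades one problem for another.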

Safety Disclaimer: In medical robotics and prosthetics, sensor failure is not just a technical issue—it’s a safety risk. As of March 2026, redundant sensor arrays are mandatory in all FDA-cleared surgical robotic hands to prevent accidental tissue damage during sensor drift.

5. The Renaissance (2010–2024): MEMS and Optical Innovation

The 2010s saw a massive comeback for tactile sensing, driven by three major technological leaps: MEMS, Optical Sensing, and Soft Robotics.

MEMS (Micro-Electro-Mechanical Systems)

Advances in the smartphone industry allowed for the mass production of tiny, cheap sensors. Accelerometers and pressure sensors could now be shrunk to the size of a grain of sand. This allowed engineers to embed sensors inside the structure of the robotic finger rather than just on the surface.

Optical Tactile Sensing: The “TacTip” Revolution

One of the most creative solutions to the “wiring problem” was using cameras to “see” touch.

- The Mechanism: Inside a rubber-tipped finger, a small internal camera watches the inside surface of the “skin.” When the finger touches something, the skin deforms.

- The Benefit: The camera tracks the movement of markers on the inside of the skin. By processing this video feed, the robot can detect the exact point of contact, the direction of the force, and even the texture of the object without needing thousands of individual electrical wires.
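
The marker-tracking idea can be sketched as follows. This is a simplified illustration, not the TacTip pipeline: it assumes marker positions have already been extracted from each camera frame by some vision step, and the function names and pixel coordinates are hypothetical.

```python
import math

def marker_displacements(rest, current):
    """Per-marker (dx, dy) shift in pixels between the rest and current frame."""
    return [(cx - rx, cy - ry) for (rx, ry), (cx, cy) in zip(rest, current)]

def summarize_contact(rest, current):
    """Estimate contact point (most-displaced marker) and mean shear direction."""
    shifts = marker_displacements(rest, current)
    magnitudes = [math.hypot(dx, dy) for dx, dy in shifts]
    peak = max(range(len(shifts)), key=magnitudes.__getitem__)
    mean_dx = sum(dx for dx, _ in shifts) / len(shifts)
    mean_dy = sum(dy for _, dy in shifts) / len(shifts)
    shear_deg = math.degrees(math.atan2(mean_dy, mean_dx))
    return rest[peak], shear_deg

rest_pts = [(10, 10), (20, 10), (10, 20), (20, 20)]
now_pts = [(10, 10), (23, 10), (10, 20), (21, 20)]
contact, shear = summarize_contact(rest_pts, now_pts)
print(contact, shear)
```

Here the marker that moved furthest stands in for the contact point, and the average shift gives a coarse shear direction. Real systems typically fit a richer deformation model.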

Soft Robotics

The move toward “Soft Robotics” replaced rigid metal fingers with flexible, silicone-based actuators. This made touch safer and more natural. Sensors like the SynTouch BioTac began to mimic the physical properties of a human finger, including a “bone” (rigid core) and “pulp” (liquid-filled skin).

6. The State of the Art: March 2026 Breakthroughs

As we stand in March 2026, the evolution of tactile sensing has entered a high-speed “Intelligence Era.” We are no longer limited by hardware; we are now mastering the software of touch.

Graphene and Liquid Metal E-Skin

Recent breakthroughs at the University of Cambridge and MIT have introduced “Graphene Skin.” Graphene is a single layer of carbon atoms that is highly conductive and exceptionally strong for its thickness.

- The 2026 Difference: Unlike old sensors that only measure “downward” pressure, these new skins measure 3D force vectors. They can feel if an object is twisting, sliding, or vibrating simultaneously.

- Self-Healing Properties: We now have e-skins that can “heal” themselves if they are cut, using reversible chemical bonds in the polymer substrate.

Multimodal Fusion

Modern robotic hands (like those used in the latest Tesla Optimus or Shadow Robot models) no longer rely on a single sensor type. They use Sensor Fusion:

- Capacitive: For light approach and proximity.

- Piezoelectric: For high-speed vibration (feeling the “snap” of a connector).

- Thermal: To distinguish between a cold soda can and a warm human hand.
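
A toy version of this kind of fusion is sketched below. It is a rule-based illustration only: the `TactileFrame` fields, the thresholds, and the labels are all hypothetical, and production systems generally use learned models rather than fixed rules.

```python
from dataclasses import dataclass

@dataclass
class TactileFrame:
    proximity: float      # capacitive channel, 0 (far) .. 1 (touching)
    vibration_rms: float  # piezoelectric channel, arbitrary units
    temperature_c: float  # thermal channel, degrees Celsius

def describe(frame, touch_level=0.9, snap_level=0.5, warm_c=30.0):
    """Combine three channels into a coarse, human-readable contact state."""
    if frame.proximity < touch_level:
        return "approaching"
    labels = ["contact"]
    if frame.vibration_rms > snap_level:
        labels.append("snap detected")
    labels.append("warm" if frame.temperature_c >= warm_c else "cool")
    return ", ".join(labels)

print(describe(TactileFrame(proximity=0.95, vibration_rms=0.8, temperature_c=8.0)))
# contact, snap detected, cool
```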

7. The Role of Artificial Intelligence (Tactile Intelligence)

Hardware is only half the battle. In 2026, the real magic happens in the “Tactile Intelligence” layer.

Large Touch-Language Models (TLMs)

Similar to how Large Language Models (LLMs) like Gemini understand text, new TLMs are trained on billions of “grasping events.” When a robot touches a new object—say, a wet sponge—the AI recognizes the specific “tactile signature” of water and soft cellular structure. It immediately adjusts the grip pressure to prevent the sponge from being crushed while ensuring it doesn’t slide out of the hand.

Real-Time Slip Detection

The most critical skill for a robotic hand is knowing when it is about to lose its grip. By analyzing micro-vibrations (similar to how our Meissner’s corpuscles work), AI can predict a slip 50 milliseconds before it happens and tighten the grip.
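
A much simpler stand-in for this idea is to watch the high-frequency energy in a pressure signal. The sketch below uses sample-to-sample differences as a crude high-pass filter and flags a possible slip when their RMS crosses a threshold. The threshold and sample values are assumptions, and this detects vibration as it happens rather than predicting a slip ahead of time the way a trained model might.

```python
import math

def highpass_rms(samples):
    """RMS of sample-to-sample differences, a crude high-pass energy measure."""
    diffs = [b - a for a, b in zip(samples, samples[1:])]
    if not diffs:
        return 0.0
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))

def slip_suspected(samples, threshold=0.05):
    """Flag a possible slip when vibration energy exceeds the assumed threshold."""
    return highpass_rms(samples) > threshold

steady = [1.00, 1.01, 1.00, 1.01, 1.00, 1.01]   # stable grasp, small noise
chatter = [1.00, 1.20, 0.90, 1.25, 0.85, 1.30]  # stick-slip style vibration

print(slip_suspected(steady), slip_suspected(chatter))
```

When the flag trips, a controller would typically raise grip force by a small step and re-check.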

8. Common Mistakes in Robotic Tactile Design

Even with 2026 technology, engineers often fall into several traps when implementing tactile sensing.

1. Over-Resolution

More taxels are not always better. A common mistake is trying to put 10,000 sensors in a fingertip. This creates a massive data processing bottleneck. Most tasks, like picking up a screwdriver, only require a spatial resolution of about 2 mm.

- Solution: Use high-density sensors only where needed (fingertips) and low-density “stretch” sensors on the palms.
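
A back-of-the-envelope calculation makes the bottleneck concrete. The sampling rate (1 kHz), bit depth (12 bits), and fingertip pad size (16 mm × 20 mm) below are assumed values for illustration, not figures from any specific sensor.

```python
def data_rate_mbit_s(taxels, sample_hz, bits_per_sample):
    """Raw data rate in megabits per second for one sensor patch."""
    return taxels * sample_hz * bits_per_sample / 1e6

def taxels_for_pitch(width_mm, height_mm, pitch_mm):
    """Number of taxels that fit on a rectangular pad at a given pitch."""
    return int(width_mm // pitch_mm) * int(height_mm // pitch_mm)

dense = data_rate_mbit_s(10_000, 1_000, 12)
modest_count = taxels_for_pitch(16, 20, 2)
modest = data_rate_mbit_s(modest_count, 1_000, 12)

print(f"10,000 taxels: {dense:.1f} Mbit/s per fingertip")
print(f"{modest_count} taxels at 2 mm pitch: {modest:.2f} Mbit/s per fingertip")
```

Under these assumptions, the 2 mm layout needs 80 taxels instead of 10,000, which reduces the raw data rate by a factor of 125.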

2. Ignoring Hysteresis

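Many flexible materials have “memory.” The reading at a given force depends on whether the sensor is being loaded or unloaded, and after you squeeze a sensor and let go, it might take a few seconds for the reading to return to zero. This history dependence is called hysteresis.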

- Mistake: Failing to calibrate for this “lag” leads to the robot thinking it’s still touching something when it has already let go.
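
One possible heuristic for this lag is to declare release when the signal falls well below the peak of the current contact, instead of waiting for it to return to zero. The sketch below is an assumption-laden illustration: the class name, `press_level`, and `release_fraction` are hypothetical and would need tuning per sensor.

```python
class ContactDetector:
    """Contact detection that tolerates a slowly decaying residual signal."""

    def __init__(self, press_level=0.30, release_fraction=0.4):
        self.press_level = press_level
        self.release_fraction = release_fraction
        self.in_contact = False
        self.peak = 0.0

    def update(self, reading):
        if self.in_contact:
            self.peak = max(self.peak, reading)
            # Release once the signal falls well below the peak of this contact,
            # even if the material has not yet relaxed back to zero.
            if reading < self.release_fraction * self.peak:
                self.in_contact = False
                self.peak = 0.0
        elif reading >= self.press_level:
            self.in_contact = True
            self.peak = reading
        return self.in_contact

trace = [0.0, 0.35, 0.50, 0.45, 0.18, 0.12, 0.05]  # squeeze, then slow relaxation
detector = ContactDetector()
states = [detector.update(r) for r in trace]
naive = [r > 0.10 for r in trace]  # single static threshold for comparison
print(states)
print(naive)
```

The static threshold still reports contact during the relaxation tail, while the peak-relative rule releases earlier. The trade-off is that a deliberate, gradual loosening of the grip can also look like a release.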

3. Rigid Calibration

Environment matters. A sensor calibrated in a 20°C lab will behave differently in a 40°C warehouse.

- Mistake: Using static thresholds for “touch.”

- Solution: Implementing adaptive, AI-driven calibration that adjusts for ambient temperature and humidity.
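
As a far simpler stand-in for AI-driven calibration, the sketch below tracks a drifting baseline with an exponential moving average and measures touch relative to it. It does not model temperature or humidity explicitly. The class name, `margin`, and `alpha` are hypothetical choices for illustration.

```python
class AdaptiveThreshold:
    """Touch detection relative to a slowly updated no-contact baseline."""

    def __init__(self, margin=0.2, alpha=0.1, baseline=0.0):
        self.margin = margin      # how far above baseline counts as touch
        self.alpha = alpha        # baseline update rate (0..1)
        self.baseline = baseline

    def update(self, reading):
        touching = reading > self.baseline + self.margin
        if not touching:
            # Track slow drift only while the sensor is considered unloaded.
            self.baseline += self.alpha * (reading - self.baseline)
        return touching

detector = AdaptiveThreshold()
for _ in range(100):
    detector.update(0.15)  # assumed thermal drift while nothing is touching

static_false_touch = 0.30 > 0.2          # fixed threshold calibrated in the lab
adaptive_light = detector.update(0.30)   # small bump on top of the drift
adaptive_firm = detector.update(0.60)    # clear contact
print(static_false_touch, adaptive_light, adaptive_firm)
```

The fixed threshold mistakes drift plus a small bump for a touch, while the adaptive version waits for a reading that clearly exceeds the current baseline.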

9. Applications: Where the Evolution Matters

The evolution of touch isn’t just a lab experiment; it is changing lives in 2026.

Prosthetic Hands (Bionic Restoration)

For the first time, amputees are receiving prosthetic hands that send tactile signals directly to their nervous systems. Users can “feel” the difference between a spouse’s hand and a child’s hand, or know if they are holding a glass too tightly without looking at it.

Robotic Surgery

In “Telesurgery,” where a surgeon operates from a distance, tactile sensing is the missing link. 2026-era surgical robots provide “Haptic Feedback,” allowing the surgeon to feel the resistance of the tissue they are cutting, significantly reducing the risk of accidental punctures.

Logistics and Warehousing

Handling “unstructured” objects—like a bag of potato chips or a tangled pair of headphones—was impossible for robots five years ago. Now, with multimodal touch, robots can handle delicate e-commerce items with the same speed as a human worker.

10. Future Outlook: Beyond 2026

Where do we go from here? The next five years will likely focus on Proprioceptive Fusion. This is the ability for a robot to know exactly where its “limbs” are in space based on the tension in its artificial skin and joints, rather than relying on external cameras.

We are also seeing the emergence of Electronic Pain. While it sounds dystopian, “pain” is a vital safety signal. If a robot detects a force that is likely to break its hand, a “pain” signal allows it to immediately retract, saving thousands of dollars in repairs.

Conclusion

The evolution of tactile sensing in robotic hands has been a journey from the simple “on/off” switch to the complex, AI-integrated graphene skins of today. We have moved past the “Tactile Winter” and entered an era of “Embodied Intelligence.”

Today, a robotic hand is no longer just a gripper—it is a sophisticated sensory organ. For developers and engineers, the challenge has shifted from “How do we make it feel?” to “How do we make sense of everything it feels?”

Next Steps: If you are interested in implementing these technologies, I recommend starting with an Optical Tactile Sensor (like TacTip) for prototyping, as it avoids the complex wiring issues of piezoresistive arrays.

FAQs

1. What is the difference between force sensing and tactile sensing?

Force sensing typically measures the total load applied to a joint or the entire hand (like a scale). Tactile sensing measures the distribution of that force across a surface, allowing the robot to detect edges, textures, and local pressure points.
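
The distinction can be shown with two pressure maps that produce the same total force. The taxel size and pressure values below are assumptions chosen for the example.

```python
TAXEL_AREA_M2 = 2e-3 * 2e-3  # assumed 2 mm x 2 mm taxels

def total_force_n(pressure_pa):
    """What a force sensor reports: one number for the whole patch."""
    return sum(p for row in pressure_pa for p in row) * TAXEL_AREA_M2

def peak_pressure_pa(pressure_pa):
    """One thing only a tactile array can report: the local maximum."""
    return max(p for row in pressure_pa for p in row)

flat = [[25_000, 25_000], [25_000, 25_000]]  # load spread evenly
edge = [[100_000, 0], [0, 0]]                # same load on a single taxel

print(total_force_n(flat), total_force_n(edge))
print(peak_pressure_pa(flat), peak_pressure_pa(edge))
```

Both maps sum to the same force, so a force sensor cannot tell them apart, but the peak pressure differs by a factor of four.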

2. Why is electronic skin (e-skin) so expensive?

As of 2026, the high cost is primarily due to the nanomaterial fabrication (like graphene and carbon nanotubes) and the complex “interconnects” required to pull data from a flexible surface. However, prices have dropped 40% since 2023 due to new roll-to-roll manufacturing techniques.

3. Can a robot feel “softness”?

Yes. By measuring the relationship between the force applied and the surface deformation, a robot can estimate an object’s stiffness (which is related to its Young’s modulus) or the inverse quantity, compliance, allowing it to distinguish between a marshmallow and a rock.
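
A minimal sketch of that calculation fits a straight line through force-versus-indentation samples. The sample data are invented for illustration, and the result is a contact stiffness in N/mm, not Young's modulus itself: converting to a material modulus would also require the contact geometry.

```python
def estimate_stiffness(indentation_mm, force_n):
    """Least-squares slope of force vs indentation through the origin (N/mm)."""
    num = sum(x * f for x, f in zip(indentation_mm, force_n))
    den = sum(x * x for x in indentation_mm)
    if den == 0:
        raise ValueError("need at least one non-zero indentation")
    return num / den

depth = [0.5, 1.0, 1.5, 2.0]      # mm pressed into the object
soft = [0.05, 0.10, 0.15, 0.20]   # hypothetical marshmallow-like response, N
hard = [5.0, 10.0, 15.0, 20.0]    # hypothetical much stiffer response, N

print(estimate_stiffness(depth, soft), estimate_stiffness(depth, hard))
```

A gripper could compare the estimate against a few stored ranges to pick a grip force before lifting.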

4. Is tactile sensing more important than vision?

They are complementary. Vision is great for “Global” planning (where is the object?), but touch is essential for “Local” manipulation (how do I move it once I’ve grabbed it?). Without touch, a robot is essentially “numb” and must rely on perfect visual alignment, which rarely happens in the real world.
