Software-as-a-Service is no longer just about replacing on-prem systems—it’s how modern teams build, learn, and scale. In this guide to the Top 5 Emerging SaaS Platforms, you’ll meet a handful of tools that are defining what “cloud-first” means in 2025: faster product learning cycles, operational data wherever your teams work, durable automation that survives failures, AI-ready infrastructure, and internal tools you can ship in days instead of months.

This article is written for founders, product leaders, data teams, and CTOs who want practical, step-by-step guidance they can apply immediately. You’ll get clear implementation plans, common pitfalls, metrics that matter, and a simple four-week roadmap to pilot everything without blowing up your backlog. By the end, you’ll know exactly how to evaluate, launch, and measure each platform in the context of your stack and your goals.

Key takeaways

- Emerging SaaS isn’t about novelty—it’s about speed to insight and lower operational risk.

- Start small, measure quickly: run contained pilots with crisp KPIs before wide adoption.

- Mind the seams between tools: identity, privacy, and data quality are where projects succeed or fail.

- Durability beats duct tape: workflows, flags, and data syncs need observability and rollback paths.

- Use a 30-day runway: four disciplined weeks are enough to validate value with production-grade guardrails.

PostHog (Product Analytics, Feature Flags, Experiments, Session Replay)

What it is and core benefits

PostHog is an end-to-end product OS that bundles product analytics, session replay, feature flags, experiments, surveys, and more into a single platform. In practice, that means you can instrument events, watch real sessions, gate new features to a percentage of users, and run A/B tests—without stitching half a dozen point solutions together.

Why it matters:

- Tighter learning loops: ship a feature behind a flag, observe behavior with analytics and replay, iterate, then ramp.

- Lower blast radius: feature flags let you roll out to 1% first, then widen—kill switch included.

- Less vendor sprawl: one SDK and one data model simplify governance and debugging.

Requirements and low-cost alternatives

- You’ll need: a web or mobile app to instrument, the PostHog SDK for your stack, and basic event naming conventions.

- Nice to have: a staging environment, Segment-style forwarders (if you use them), and a lightweight schema doc.

- Alternatives to consider when budget is tight: self-hosted analytics, basic event tracking in your data warehouse, or lightweight web analytics for top-of-funnel data only.

Step-by-step implementation (beginner-friendly)

- Create a project and install the SDK in your app. Use autocapture for fast visibility; add named events for critical flows like sign-up, onboarding, and purchase.

- Define core product metrics (activation, retention steps, time-to-value). Keep names short and unambiguous.

- Add one feature flag for an upcoming UI change. Target 5–10% of traffic, or specific user cohorts.

- Set up an experiment with the flag as the toggle. Choose a single primary metric (e.g., completion rate of onboarding step 3).

- Enable session replay for a small sample to diagnose friction, and configure privacy filters to avoid capturing sensitive fields.

- Run for 7–10 days, then decide: ship, iterate, or kill.
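
A percentage rollout only works if each user lands in the same bucket on every request. The sketch below shows one common way to get that property: hash the flag key and user ID into a stable number. It illustrates the idea and is not PostHog’s actual evaluation logic; the names `rollout_bucket`, `is_enabled`, and `new-onboarding-ui` are hypothetical.

```python
import hashlib


def rollout_bucket(flag_key: str, user_id: str) -> float:
    """Map a (flag, user) pair to a stable number in [0, 1)."""
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode("utf-8")).hexdigest()
    # 13 hex digits = 52 bits, which a float represents exactly.
    return int(digest[:13], 16) / float(16 ** 13)


def is_enabled(flag_key: str, user_id: str, rollout_percent: float) -> bool:
    """True if the user falls inside the rollout percentage (0-100)."""
    return rollout_bucket(flag_key, user_id) < rollout_percent / 100.0


# Hypothetical flag key and user IDs, for illustration only.
users = [f"user-{i}" for i in range(10_000)]
exposed = sum(is_enabled("new-onboarding-ui", u, 10) for u in users)
print(f"{exposed / len(users):.1%} of users see the new UI")
```

Because the bucket is stable, widening the rollout from 5% to 10% keeps the original 5% exposed and only adds new users, which keeps experiment cohorts clean during a ramp.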

Beginner modifications and progressions

- Simplify: start with a single platform (web or mobile), one feature flag, and two or three must-have events.

- Scale up: instrument server-side events, add error tracking, run more sophisticated experiments (e.g., tests with more than two variants), and introduce surveys to capture qualitative context.

Recommended frequency and metrics

- Cadence: weekly reviews of the analytics board; daily checks on experiments during active tests.

- KPIs: activation rate (first aha moment), conversion rate for the flagged journey, retention at day-7 or week-4, experiment p-values and sample sizes, error rate after ramp-ups, and replay-tagged issue counts.
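
To make “p-values and sample sizes” concrete, here is a minimal sketch of a two-sided, two-proportion z-test for a binary conversion metric. It assumes a simple A/B split with a fixed sample size; your analytics platform may use a different statistical method (for example, a Bayesian one), so treat this as a sanity check rather than a replacement for its results. The function name and inputs are illustrative.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for control A vs. variant B."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both groups need at least one user")
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis of no difference.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 100/1000 conversions in control, 150/1000 in the variant.
z, p = two_proportion_z_test(100, 1000, 150, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Decide the sample size and the primary metric before the test starts; checking the p-value daily and stopping at the first significant reading inflates false positives.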

Safety, caveats, and common mistakes

- PII exposure: incorrectly configured replay can capture sensitive input fields. Mask or block by default.

- Metric sprawl: too many metrics obscure signal. Pick one primary metric per experiment.

- Flag debt: long-lived flags accumulate risk. Archive flags after decisions and document remaining ones.

- Sampling bias: test cohorts must represent your real user mix; avoid targeting just power users.

Sample mini-plan

- This week: install SDK, add three events, create one feature flag.

- Next week: launch a simple experiment on the flagged change; review results after 7 days.

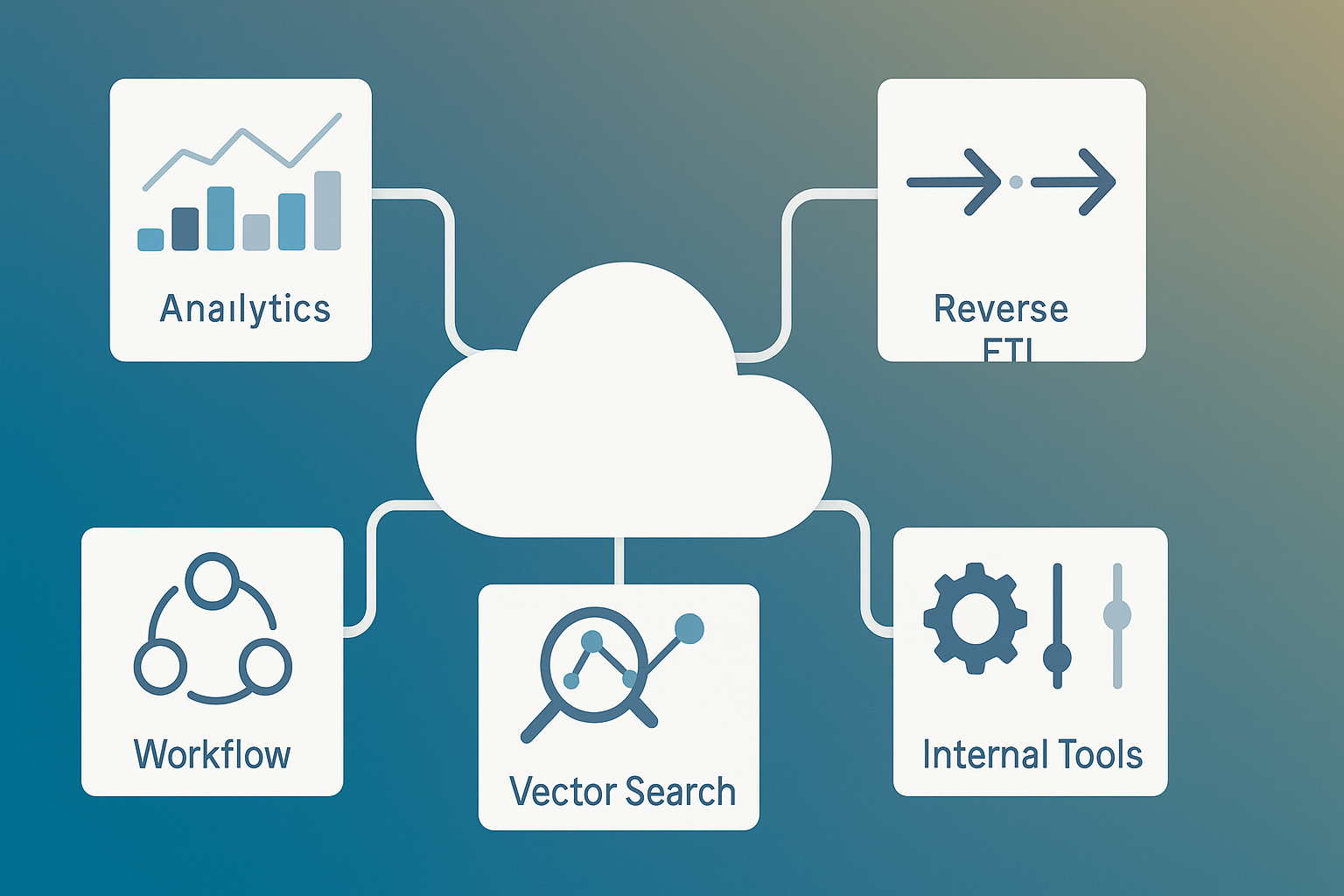

Hightouch (Reverse ETL & Composable Data Activation)

What it is and core benefits

Hightouch operationalizes your warehouse by syncing modeled data into the tools that teams already use: CRM, marketing automation, ads, support, and even spreadsheets. This “reverse ETL” pattern turns your analytics models into actions inside those tools, from personalized email journeys to priority lead routing and churn alerts, with data only as fresh as your sync cadence.

Why it matters:

- One source of truth, everywhere: no more hand-built scripts to copy CSVs into downstream apps.

- Fewer manual gaps: marketers and sales ops get fresh, trustworthy segments without pinging data teams.

- Governance: version control and approval flows reduce the “shadow pipelines” that appear around go-to-market teams.

Requirements and low-cost alternatives

- You’ll need: a cloud data warehouse (e.g., BigQuery, Snowflake, or Redshift), SQL skills, destination tools with APIs, and identity mapping (e.g., user_id, email).

- Nice to have: dbt models, Git-based change control, and a QA sandbox destination.

- Alternatives: custom scripts with scheduled jobs, or built-in CSV importers when sync freshness isn’t critical.

Step-by-step implementation (beginner-friendly)

- Pick one high-leverage use case, e.g., “push product-qualified leads to CRM with a PQL score daily.”

- Model the audience or metric in SQL inside your warehouse (join events, account metadata, billing data).

- Connect your warehouse and destination; use read-only credentials for the warehouse and the narrowest write scope the destination allows.

- Map identity fields (user_id ↔ contact_id, email ↔ email). Validate a small sample manually in the destination.

- Choose sync mode (upsert vs. update) and set cadence (e.g., hourly).

- Enable logging and alerts. Start with a limited audience and monitor drift or unexpected field overwrites.
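
The difference between “upsert” and “update” sync modes is easier to see in code. The sketch below diffs warehouse model rows against destination records on an identity key and returns the planned inserts and updates. It is a plain-Python illustration of the concept, not Hightouch’s implementation; `plan_sync` and the sample fields are hypothetical.

```python
from typing import Dict, List, Tuple

Row = Dict[str, object]


def plan_sync(
    model_rows: List[Row],
    destination_rows: List[Row],
    key: str,
    mode: str = "upsert",
) -> Tuple[List[Row], List[Row]]:
    """Return (inserts, updates) needed to bring the destination in line."""
    if mode not in {"upsert", "update"}:
        raise ValueError(f"unknown sync mode: {mode}")
    dest_by_key = {row[key]: row for row in destination_rows}
    inserts: List[Row] = []
    updates: List[Row] = []
    for row in model_rows:
        existing = dest_by_key.get(row[key])
        if existing is None:
            # "update" mode never creates records; "upsert" does.
            if mode == "upsert":
                inserts.append(row)
            continue
        changed = {f: v for f, v in row.items() if f != key and existing.get(f) != v}
        if changed:
            updates.append({key: row[key], **changed})
    return inserts, updates


# Hypothetical sample: one existing contact, one new one.
model = [{"email": "a@x.com", "pql_score": 8}, {"email": "b@x.com", "pql_score": 9}]
crm = [{"email": "a@x.com", "pql_score": 5}]
print(plan_sync(model, crm, key="email", mode="upsert"))
```

Printing the plan for a small sample before the first real sync is a cheap way to validate identity mapping by hand.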

Beginner modifications and progressions

- Simplify: daily syncs, updates limited to a few non-critical fields, narrow field sets, no deletes.

- Scale up: add more destinations, compute PQL/RFM scores, set up bidirectional feedback (e.g., campaign performance back to warehouse), and manage flows in Git as code.

Recommended frequency and metrics

- Cadence: start hourly or daily; move to 15-minute syncs only when needed.

- KPIs: sync success rate, row counts updated, freshness (latency from model completion to destination), downstream lift (reply rate, pipeline created), and data drift (null rates, distribution changes).
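
Two of these KPIs, freshness and null-rate drift, take only a few lines to compute. The following is a minimal sketch under simple assumptions: rows are dictionaries, drift is an absolute change in null rate beyond a tolerance, and all names and thresholds are illustrative.

```python
from datetime import datetime
from typing import Dict, List, Optional


def null_rate(rows: List[Dict[str, Optional[object]]], field: str) -> float:
    """Share of rows where `field` is missing or None."""
    if not rows:
        return 0.0
    return sum(1 for row in rows if row.get(field) is None) / len(rows)


def drifted(baseline_rate: float, current_rate: float, tolerance: float = 0.05) -> bool:
    """Flag a change in null rate larger than the tolerance (assumed: 5 points)."""
    return abs(current_rate - baseline_rate) > tolerance


def freshness_minutes(model_completed_at: datetime, synced_at: datetime) -> float:
    """Minutes between the model finishing and the data landing downstream."""
    return (synced_at - model_completed_at).total_seconds() / 60


rows = [{"email": None}, {"email": "a@x.com"}, {"email": None}, {"email": "b@x.com"}]
print(null_rate(rows, "email"), drifted(0.02, null_rate(rows, "email")))
```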

Safety, caveats, and common mistakes

- Identity mismatches: partial joins can create duplicates and dirty profiles; test mapping on edge cases.

- Field overwrites: guard against null-over-non-null and vice versa; protect critical fields with write scopes.

- Governance gaps: treat models as code—PRs, code owners, and approvals—to avoid “silent” changes that break campaigns.

- Backfills without limits: cap maximum rows per sync to prevent cost blowups.
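
Two of these guards can be sketched in a few lines: refusing to overwrite a populated field with null, skipping protected fields, and capping the rows in a single sync. This is a plain-Python illustration with hypothetical names (`safe_merge`, `enforce_row_cap`); in practice you would express the same rules in your sync tool’s configuration where it supports them.

```python
from typing import Dict, FrozenSet, List, Optional

Record = Dict[str, Optional[object]]


def safe_merge(
    existing: Record,
    incoming: Record,
    protected_fields: FrozenSet[str] = frozenset(),
) -> Record:
    """Merge incoming values without nulling out or touching protected fields."""
    merged = dict(existing)
    for field, value in incoming.items():
        if field in protected_fields:
            continue  # never written by the sync
        if value is None and existing.get(field) is not None:
            continue  # block null-over-non-null
        merged[field] = value
    return merged


def enforce_row_cap(rows: List[Record], max_rows: int) -> List[Record]:
    """Fail loudly instead of silently pushing an oversized backfill."""
    if len(rows) > max_rows:
        raise ValueError(f"sync of {len(rows)} rows exceeds cap of {max_rows}")
    return rows


existing = {"email": "a@x.com", "phone": "555", "owner": "alice"}
incoming = {"phone": None, "owner": "bob", "pql_score": 8}
print(safe_merge(existing, incoming, protected_fields=frozenset({"owner"})))
```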

Sample mini-plan

- This week: build a “PQL > 7” model, connect CRM, sync 100 contacts with a custom field.

- Next week: expand to 10,000 contacts, add a churn-risk sync to your ticketing system.

Temporal Cloud (Durable, Observable Workflows as a Service)

What it is and core benefits

Temporal Cloud provides a fully managed control plane for durable workflows. You write your workflows in code using a familiar SDK; the platform ensures state persistence, retries, timeouts, and resumability—even across deployments and outages. In other words, the business logic you care about survives network hiccups and process restarts.

Why it matters:

- Reliability out of the box: long-running operations (e.g., KYC, batch billing, ML pipelines) won’t vanish if a worker dies.

- Developer-friendly: workflows and activities live in your repo, reviewed and tested alongside your app code.

- Observability and control: you can list, query, cancel, or retry workflows and see their event history.

Requirements and low-cost alternatives

- You’ll need: a service where you can run worker processes, SDK support for your language, and secure connectivity to Temporal Cloud.

- Nice to have: idempotent external APIs and an existing message bus. Neither is required, since Temporal replaces much of the glue code you’d otherwise maintain.

- Alternatives: cron jobs with queues, state machines in cloud provider services, or custom retry frameworks—each with more operational burden.

Step-by-step implementation (beginner-friendly)

- Pick a workflow with obvious failure points—for example, a three-step sign-up that calls two third-party APIs.

- Define a workflow function that expresses the business steps and compensations; implement each step as an activity.

- Run a worker locally and connect it to a Temporal Cloud namespace.

- Execute the workflow from your app or a CLI, then intentionally trigger failures to validate retries and timeouts.

- Add search attributes to make workflows queryable (e.g., by user_id or order_id).

- Set alerts and dashboards for failure rates and latency.
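
The “business steps and compensations” idea can be shown without any SDK. The sketch below runs steps in order and, when one fails, undoes the completed steps in reverse. It is a plain-Python simulation of the compensation (saga-style) pattern, not Temporal’s API: in a real Temporal workflow each step would be an activity, and the platform would persist progress and handle retries. All names are hypothetical.

```python
from typing import Callable, List, Tuple

# (step name, action, compensation)
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_with_compensation(steps: List[Step], log: List[str]) -> bool:
    """Run steps in order; on failure, undo completed steps in reverse."""
    completed: List[Tuple[str, Callable[[], None]]] = []
    for name, action, compensate in steps:
        try:
            action()
        except Exception:
            log.append(f"failed:{name}")
            for done_name, undo in reversed(completed):
                undo()
                log.append(f"compensated:{done_name}")
            return False
        log.append(f"done:{name}")
        completed.append((name, compensate))
    return True


def send_welcome_email() -> None:
    # Simulated third-party outage, for illustration.
    raise RuntimeError("email provider unavailable")


log: List[str] = []
steps: List[Step] = [
    ("create_account", lambda: None, lambda: None),
    ("charge_card", lambda: None, lambda: None),
    ("send_welcome_email", send_welcome_email, lambda: None),
]
print(run_with_compensation(steps, log), log)
```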

Beginner modifications and progressions

- Simplify: one small workflow with two activities, short timeouts, and basic retries.

- Scale up: child workflows, schedules and cron, dynamic routing, versioning for safe rolling upgrades, and multi-region workers.

Recommended frequency and metrics

- Cadence: CI/CD deploys tied to workflow versioning; weekly reviews of failure patterns.

- KPIs: workflow success rate, retry counts, median and p95 latency, worker utilization, and cost per workflow execution.

Safety, caveats, and common mistakes

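- Non-deterministic code in workflows: avoid reading system time or random values directly. Use the deterministic time and random helpers where your language’s SDK provides them, or generate the values in an activity and pass them in.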

- Idempotency gaps: if an activity writes to an external system, ensure your operation can safely retry.

- Retries without backoff: transient network failures turn into retry storms if you retry immediately. Configure exponential backoff, a maximum interval, and a maximum number of attempts deliberately.

- Secret handling: use managed secret stores; never embed credentials in workflow code.
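
The retry and idempotency points above can be sketched together. The code below computes capped exponential backoff delays, retries an operation up to a maximum number of attempts, and uses an idempotency key so a retried write is applied only once. It is a generic illustration in plain Python; the parameter names loosely mirror common retry-policy settings (initial interval, backoff coefficient, maximum interval, maximum attempts), and `IdempotentLedger` is hypothetical.

```python
import time
from typing import Callable, Dict, List, TypeVar

T = TypeVar("T")


def backoff_delays(initial: float, coefficient: float, maximum: float, max_attempts: int) -> List[float]:
    """Delays before each retry; max_attempts counts the first try too."""
    return [min(initial * coefficient ** i, maximum) for i in range(max_attempts - 1)]


def call_with_retries(
    operation: Callable[[], T],
    *,
    initial: float = 1.0,
    coefficient: float = 2.0,
    maximum: float = 30.0,
    max_attempts: int = 5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `operation` with capped exponential backoff, then re-raise."""
    delays = backoff_delays(initial, coefficient, maximum, max_attempts)
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except Exception:
            if attempt == max_attempts:
                raise
            sleep(delays[attempt - 1])
    raise RuntimeError("max_attempts must be at least 1")


class IdempotentLedger:
    """Applies each charge once per idempotency key, however often it is retried."""

    def __init__(self) -> None:
        self._seen: Dict[str, int] = {}
        self.total = 0

    def charge(self, idempotency_key: str, amount: int) -> int:
        if idempotency_key not in self._seen:
            self._seen[idempotency_key] = amount
            self.total += amount
        return self._seen[idempotency_key]


print(backoff_delays(initial=1.0, coefficient=2.0, maximum=5.0, max_attempts=5))
```

Passing `sleep` as a parameter keeps the function testable: a test can record the delays instead of actually waiting.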

Sample mini-plan

- This week: migrate one flaky cron job to a workflow with two activities and timeouts.

- Next week: add an approval step and alerts; scale workers to handle peak load.

Pinecone (Managed Vector Database for AI & Semantic Search)

What it is and core benefits

Pinecone is a fully managed vector database purpose-built for semantic search and retrieval-augmented generation. It stores embedding vectors from models you choose and returns the most similar items for a given query. The serverless architecture handles scaling, replication, and performance tuning so you can focus on your application logic.

Why it matters:

- Production-grade retrieval: consistent latency and relevance at scale for chatbots, search, and recommendation.

- Model-agnostic: bring your own embedding model and switch later without changing platforms. Switching still means re-embedding your data, and a new index if the vector dimension changes.

- Operational simplicity: create an index, upsert vectors, query—no cluster sizing rituals.

Requirements and low-cost alternatives

- You’ll need: an embedding model (hosted or API), a clean data source to embed, and basic familiarity with vector dimensions and distance metrics.

- Nice to have: a data pipeline to keep embeddings fresh and a reranking layer for relevance.

- Alternatives: local vector libraries for prototypes, or DIY indices in general-purpose databases—fine for experiments, brittle at scale.

Step-by-step implementation (beginner-friendly)

- Choose an embedding model compatible with your content and language. Fix the vector dimension up front.

- Create a Pinecone index in the region closest to your application and pick a metric (cosine, dot-product, etc.).

- Embed and upsert a representative dataset (e.g., 10–50k documents). Include metadata for filtering (tags, timestamps).

- Query with a handful of example prompts and inspect results. Add filters and reranking to improve relevance.

- Instrument latency and recall in your application; set acceptable thresholds (e.g., p95 < 200 ms).

- Automate ingestion to keep vectors in sync when the source content changes.
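
To build intuition for what a vector query does, here is a brute-force, in-memory sketch of cosine-similarity search with a metadata filter. It is not Pinecone’s API or algorithm (a managed service uses approximate indexes to stay fast at scale); the record shape and the `query` function are assumptions chosen to resemble the id / values / metadata structure described above.

```python
import math
from typing import Dict, List, Optional, Sequence, Tuple

Record = Dict[str, object]  # {"id": str, "values": list of float, "metadata": dict}


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; raises if the vector dimensions differ."""
    if len(a) != len(b):
        raise ValueError(f"dimension mismatch: {len(a)} vs {len(b)}")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def query(
    index: List[Record],
    vector: Sequence[float],
    top_k: int = 3,
    metadata_filter: Optional[Dict[str, object]] = None,
) -> List[Tuple[str, float]]:
    """Return the top_k (id, score) pairs among records matching the filter."""
    wanted = metadata_filter or {}
    scored = [
        (rec["id"], cosine(vector, rec["values"]))
        for rec in index
        if all(rec["metadata"].get(k) == v for k, v in wanted.items())
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Toy 3-dimensional vectors; real embeddings have hundreds of dimensions or more.
index = [
    {"id": "doc-1", "values": [1.0, 0.0, 0.0], "metadata": {"tag": "billing"}},
    {"id": "doc-2", "values": [0.9, 0.1, 0.0], "metadata": {"tag": "onboarding"}},
    {"id": "doc-3", "values": [0.0, 1.0, 0.0], "metadata": {"tag": "billing"}},
]
print(query(index, [1.0, 0.0, 0.0], top_k=2, metadata_filter={"tag": "billing"}))
```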

Beginner modifications and progressions

- Simplify: start with a single index and no metadata filters; measure baseline relevance.

- Scale up: multiple namespaces per tenant, hybrid search (sparse + dense), and write-optimized batch pipelines.

Recommended frequency and metrics

- Cadence: evaluate retrieval quality weekly with a golden set of queries.

- KPIs: p50/p95 latency, recall@k, click-through rate on search results, hallucination rate in RAG outputs, and ingestion lag.
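
Recall@k and p95 latency are both simple to compute once you have a golden set of queries and a list of timings. A minimal sketch follows, assuming recall@k is defined as the share of known-relevant items that appear in the top k results and using the nearest-rank percentile method; other definitions exist, so state which one your dashboard uses.

```python
import math
from typing import Iterable, List, Sequence


def recall_at_k(retrieved_ids: Sequence[str], relevant_ids: Iterable[str], k: int) -> float:
    """Share of relevant items that appear in the first k retrieved results."""
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("the golden set needs at least one relevant id per query")
    hits = len(set(retrieved_ids[:k]) & relevant)
    return hits / len(relevant)


def percentile(values: List[float], pct: int) -> float:
    """Nearest-rank percentile, e.g. pct=95 for p95."""
    if not values:
        raise ValueError("no values to summarize")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical golden-set entry and latency samples (milliseconds).
print(recall_at_k(["a", "b", "c"], {"a", "c", "d"}, k=3))
print(percentile([120.0, 95.0, 180.0, 210.0, 101.0], 95))
```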

Safety, caveats, and common mistakes

- Dimension mismatch: embeddings must match the index dimension; re-indexing is expensive—choose wisely.

- Dirty data: garbage in, garbage out—normalize, deduplicate, and chunk thoughtfully.

- Overstuffed context: retrieve fewer, better chunks, and add reranking so the LLM’s answer stays grounded in the most relevant passages.

- Cost spikes: cap batch sizes and monitor write hot spots.

Sample mini-plan

- This week: embed 20k docs, create one index, and wire up a semantic search endpoint.

- Next week: add metadata filters and a quality dashboard with recall@k and latency.

Retool (Internal Tools & Workflows—Fast)

What it is and core benefits

Retool is a unified builder for internal apps, mobile tools, and automated workflows. You connect data sources (databases, APIs, warehouses), drag-and-drop components to design interfaces, and write minimal code to glue it together. The result: production-ready admin panels, ops consoles, and backoffice tools in days, not quarters.

Why it matters:

- Speed with control: engineers stay in the loop with source control and environments; non-engineers can extend safely.

- Breadth in one place: web apps, mobile apps, cron-less workflows, and AI-powered actions when helpful.

- Lower total cost: less custom boilerplate to build, host, secure, and maintain.

Requirements and low-cost alternatives

- You’ll need: a connection to your primary data sources (read-only to start, with write access only where the tool needs it) and someone comfortable with SQL/REST.

- Nice to have: role-based access control, SSO, staging and production environments, and CI for app definitions.

- Alternatives: hand-rolled admin apps, spreadsheets, or open-source builders if you’re willing to operate them.

Step-by-step implementation (beginner-friendly)

- Pick a painful manual process (refund approvals, inventory edits, content moderation).

- Connect your data sources (Postgres, REST, GraphQL). Use least-privilege credentials.

- Build a minimal UI: a table view, a form for edits, and a button that triggers a server action.

- Add validation and audit logs so every change is traceable.

- Gate the app behind SSO and set per-role permissions.

- Ship to a small group, collect feedback, and iterate.
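
The validation and audit-log steps are worth prototyping as plain logic before wiring them to a button. The sketch below checks the caller’s role and the refund amount, then records who changed what and when. It is a generic Python illustration, not Retool’s API; the role names, the order fields, and `approve_refund` are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List

ALLOWED_ROLES = {"support_lead", "finance"}  # hypothetical role names


@dataclass(frozen=True)
class AuditEntry:
    who: str
    when: datetime
    what: str
    before: dict
    after: dict


def approve_refund(order: dict, amount: float, actor: str, role: str, audit_log: List[AuditEntry]) -> dict:
    """Validate and apply a refund, recording an audit entry on success."""
    if role not in ALLOWED_ROLES:
        raise PermissionError(f"role '{role}' may not approve refunds")
    refundable = order["total"] - order.get("refunded", 0)
    if amount <= 0 or amount > refundable:
        raise ValueError(f"amount must be between 0 and {refundable}")
    updated = {**order, "refunded": order.get("refunded", 0) + amount}
    audit_log.append(
        AuditEntry(
            who=actor,
            when=datetime.now(timezone.utc),
            what="approve_refund",
            before=dict(order),
            after=dict(updated),
        )
    )
    return updated


audit_log: List[AuditEntry] = []
order = {"id": "ord-1", "total": 100, "refunded": 0}
print(approve_refund(order, 40, actor="dana", role="finance", audit_log=audit_log))
```

Keeping validation on the server side of the action, rather than only in the form, means the rule still holds if someone calls the underlying query directly.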

Beginner modifications and progressions

- Simplify: start read-only and add write paths later; ship to a single team first.

- Scale up: embed apps in your internal portal, convert flows into scheduled workflows, and templatize app patterns.

Recommended frequency and metrics

- Cadence: weekly demos of new internal tools; monthly cleanup of unused apps.

- KPIs: time saved per task, error rate of manual operations, number of support tickets resolved through the tool, and app adoption (weekly active users).

Safety, caveats, and common mistakes

- Over-permissioning: use granular resource scopes and row-level filters.

- No audit trail: log every change, include who/when/what.

- Tightly coupled queries: centralize queries to avoid duplication across pages; version them.

- Skipping QA: maintain a staging environment and seed data for repeatable tests.

Sample mini-plan

- This week: connect Postgres read-only, ship a dashboard.

- Next week: add an approval form and workflow that posts to your ticketing system with audit logs.

Quick-start checklist (print and use)

- Choose one use case per platform; write a one-sentence success metric for each.

- Create least-privilege service accounts and agree on a credential rotation schedule before connecting anything.

- Set up staging for analytics, data syncs, workflows, and internal apps.

- Agree on naming conventions for events, models, and workflow IDs.

- Enable logging and alerts on day one; decide who triages.

- Document rollback or kill-switch steps for each tool.

- Schedule a single weekly checkpoint to review KPIs and make go/no-go decisions.

Troubleshooting & common pitfalls

Symptoms: experiment results bounce around week to week.

Likely causes: too small a sample or overlapping rollouts.

Fixes: lengthen test duration, increase eligible traffic, and pause competing changes.

Symptoms: reverse ETL syncs succeed but downstream data looks wrong.

Likely causes: identity mismatches or null overwrites.

Fixes: add deterministic joins on stable keys, protect non-nullable fields, and run diff checks on small batches.

Symptoms: workflows get stuck or retried endlessly.

Likely causes: non-deterministic workflow code, or activities that keep failing under an uncapped retry policy (non-idempotent writes make each retry riskier).

Fixes: purge randomness from workflow logic, make writes idempotent with upserts, and cap retry attempts with backoff.

Symptoms: semantic search returns irrelevant results.

Likely causes: poor chunking, missing metadata, or an embedding model misaligned with your content.

Fixes: standardize chunk sizes, add metadata filters, try a domain-tuned model, and add reranking.

Symptoms: internal tools drift into spaghetti.

Likely causes: no design system or query reuse.

Fixes: templatize components, centralize queries, and require reviews for app schema changes.

How to measure progress and results (KPI starter pack)

Product learning (PostHog):

- Activation rate for new users exposed to a flagged feature.

- Conversion uplift from experiments with confidence intervals.

- Time-to-decision (days from feature start → ship/kill).

Data activation (Hightouch):

- Sync freshness (minutes from warehouse model completion to destination).

- Downstream impact (reply rate, pipeline created, LTV of activated cohort).

- Data quality (null rates, identity match rate, schema drift alerts).

Workflow reliability (Temporal Cloud):

- Workflow success rate and retry depth.

- p95 latency for representative workflows.

- Incidents avoided due to automatic retries/timeouts.

AI retrieval (Pinecone):

- Recall@k on a golden dataset.

- Latency (p50/p95).

- End-user satisfaction (thumbs-up rate, task completion with RAG).

Internal velocity (Retool):

- Lead time to ship an internal tool.

- Time saved per task (baseline vs. post-tool).

- Adoption (weekly active internal users).

A simple 4-week starter plan (30 days to confidence)

Week 1 — Foundations & guardrails

- Provision accounts and namespaces across all five platforms in staging.

- Install the PostHog SDK; add three events and one feature flag.

- Connect Hightouch to your warehouse and your CRM in read-only mode.

- Spin up a Temporal Cloud namespace and run a “hello world” workflow.

- Create a Pinecone index and upsert 5k embedded documents.

- Connect a read-only database to Retool and build a simple dashboard.

Week 2 — First real use cases

- Launch a small experiment behind a PostHog feature flag.

- Ship one Hightouch sync (e.g., PQLs to CRM) to a tiny cohort.

- Migrate one flaky cron job to a Temporal workflow with retries and timeouts.

- Build a semantic search endpoint with Pinecone and wire it to a QA page.

- Add a Retool form to approve a standard operation with audit logs.

Week 3 — Scaling & safety nets

- Add identity rules and masking to analytics and replay.

- Increase Hightouch sync cadence and add drift checks.

- Introduce workflow versioning and search attributes in Temporal.

- Add metadata filters and a relevance dashboard for Pinecone.

- Harden Retool RBAC, SSO, and environment separation.

Week 4 — Decision day

- Review KPIs against success criteria.

- Run one controlled ramp-up in production for each tool.

- Document operating runbooks (alerts, ownership, on-call).

- Decide: expand, pause, or deprecate—and close the loop with stakeholders.

FAQs

1) Are these platforms suitable for small teams?

Yes. Each can start small, often with a free or trial tier, and grow as your usage grows. Begin with one narrowly scoped use case to prove value before expanding.

2) Do I need a data warehouse to benefit from data activation?

For reverse ETL use cases, a warehouse is strongly recommended because it acts as your source of truth and modeling layer. Without it, you’ll spend more time wrangling fragmented data.

3) What if I already use basic web analytics—why add product analytics?

Web analytics focuses on traffic and acquisition. Product analytics and feature flags let you test product changes, attribute outcomes to users and cohorts, and iterate with confidence.

4) How technical do we need to be to use Temporal Cloud?

You’ll write workflow code using an SDK and run worker processes. It’s approachable for application engineers and pays off by reducing brittle, glue-code-heavy systems.

5) Which embedding model should I use with a vector database?

Start with a reliable general-purpose embedding model that supports your language and content type. Measure retrieval quality weekly and consider domain-specific models if needed.

6) How do we avoid creating data chaos with reverse ETL?

Treat models and syncs as code: version control, reviews, QA destinations, and explicit owners. Protect critical fields in destinations and always test on small cohorts first.

7) What are the privacy considerations for session replay?

Mask or block sensitive inputs by default and store only what you need. Align your configuration with your privacy policy and regulatory obligations.

8) How do we prevent feature flags from becoming permanent?

Create a “flag lifecycle” policy. Every flag needs an owner, a purpose, and an expiration date. Archive flags after decisions and delete unused variants.

9) Can Retool replace all our internal apps?

No single tool fits every case. Use Retool where speed and CRUD-heavy operations dominate. Keep highly specialized or latency-critical systems in custom code.

10) What happens if the vector index grows too large?

Sharding and namespaces help, but relevance and cost can degrade. Regularly purge outdated items, compress vectors when appropriate, and monitor hot partitions.

11) How do we know when to scale up pilots?

When your primary KPI shows a sustained improvement, operational risk is managed (alerts, rollbacks), and team owners commit to ongoing maintenance.

12) We’re worried about vendor lock-in—how to mitigate?

Prefer tools with open SDKs, export paths, and model-agnostic designs. Keep your core business logic and data models in your repos and warehouse.

Conclusion

Emerging SaaS platforms win not because they’re shiny, but because they shorten the distance between intent and impact. Product teams learn faster with integrated analytics and flags. Revenue teams move faster when the warehouse powers their tools. Engineers sleep better when workflows are durable by default. AI features become reliable when retrieval is measured, not guessed. And internal tools stop being a tax on velocity.

Pick one platform, one use case, one KPI—then follow the four-week plan. The compounding returns will show up faster than you think.

Start your 30-day pilot today: pick one platform, define one metric, and book a kickoff for next Monday.
