The power of numbers is more than a metaphor in this era of intelligent products. It’s literal: tokens processed per second, vectors retrieved per query, dollars of compute per million tokens, conversion rates nudged by smarter onboarding, and capital pooling into a few mega-rounds. This article explores how young companies harness those numbers—data, metrics, costs, and outcomes—to outpace incumbents and reshape markets. You’ll learn the modern playbook for building and scaling AI-driven products, how to think about infrastructure and evaluation, and how to measure real business impact. If you’re a founder, product lead, or investor looking to translate model hype into durable value, this is for you.

Disclaimer: The content below is for general information only and not legal, financial, or compliance advice. For decisions with regulatory, legal, or financial implications, consult qualified professionals.

Key takeaways

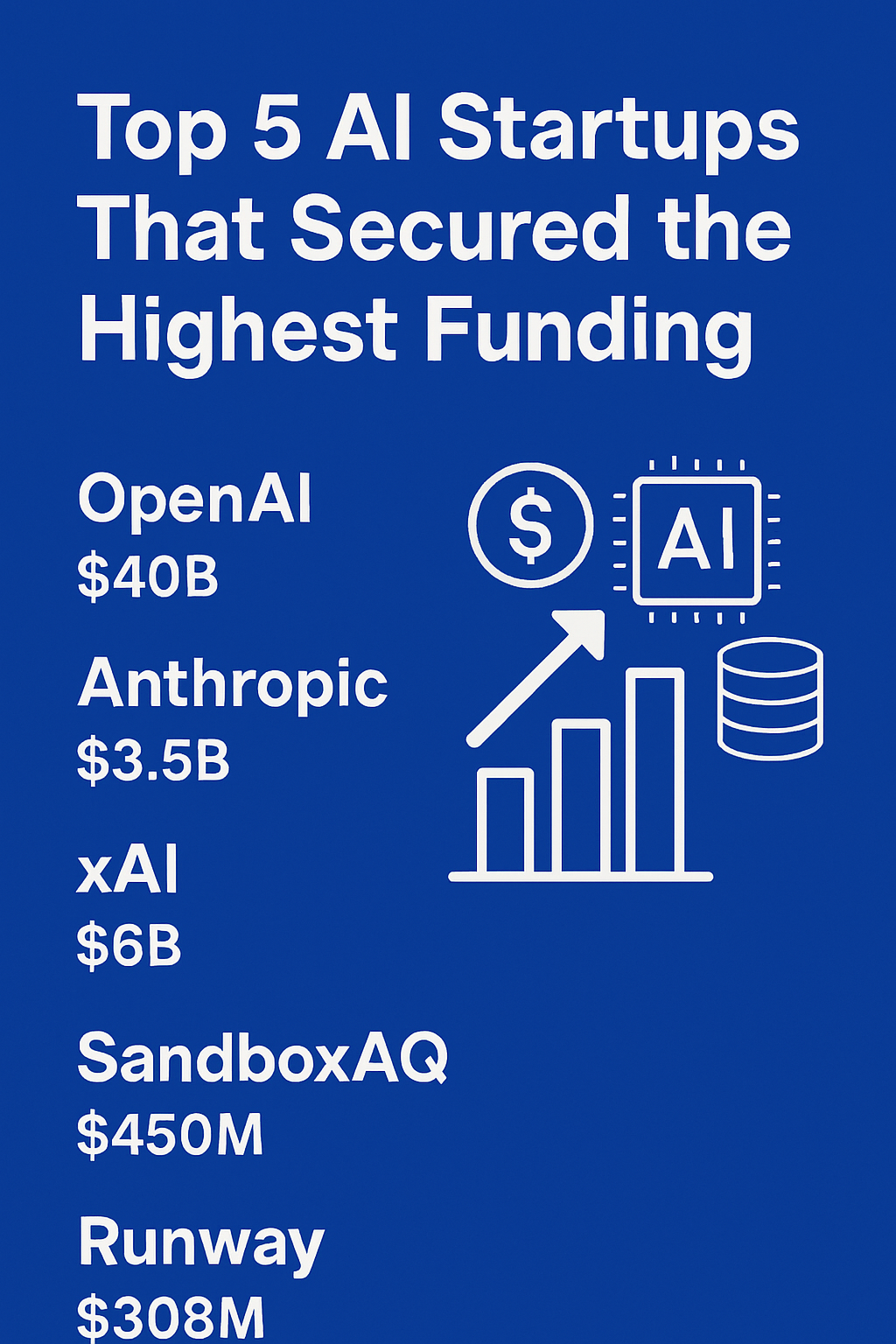

- Adoption is mainstream. A large majority of organizations reported using AI in 2024, with about seven in ten adopting generative capabilities, and private AI investment surpassed one hundred billion dollars the same year.

- Capital is concentrated. Funding increasingly pools into a handful of mega-rounds, which reshapes partnership, pricing, and platform strategies for everyone else.

- Architecture is product strategy. RAG, fine-tuning, and agentic workflows are complementary levers; the right mix depends on data custody, latency, and cost constraints.

- Evaluation is the moat. Robust, task-level evaluation and continuous monitoring matter more than headline benchmarks for real-world performance and trust.

- ROI is measurable. Founders should model unit economics per request, identify two “golden” customer workflows, and instrument usage-to-value conversion end to end.

- Regulatory timelines are a roadmap. The EU AI Act’s risk-based rules phase in between 2025 and 2027; the smartest teams align their technical and documentation pipelines with those dates early.

Why numbers now: context and momentum

The last two years turned AI from an R&D curiosity into a production staple. Investment and adoption tell the story: global private investment in AI surpassed the hundred-billion-dollar mark in 2024, and generative AI accounted for a sizable share of that total. On the adoption side, about 78% of organizations reported using AI in 2024, up from 55% the year before, and roughly seven in ten used generative AI. Meanwhile, the cost, procurement, and scarcity of advanced accelerators shaped strategy at every stage: prototype, pilot, and scale.

This convergence—broad adoption, large capital inflows, and hardware constraints—forces startups to operate with hard metrics: latency budgets, cost per action, retrieval quality, red-team coverage, and governance readiness. The sections below translate those pressures into a practical operating playbook.

Building with data network effects and trustworthy synthetic data

What it is and why it matters

Data network effects emerge when each new customer or use case improves model performance for the next, through fine-tuning, prompt libraries, or retrieval corpora. Synthetic data multiplies those effects when real-world data is sparse or sensitive. Used carefully, it helps broaden coverage, improve edge-case robustness, and strengthen safety testing.

Requirements and low-cost alternatives

- Core needs: A secure data pipeline, labeling or distillation workflows, and clear policies around user consent and privacy.

- Skills: Data engineering, evaluation design, and basic statistical testing (e.g., A/B tests, bootstrap confidence intervals).

- Costs: Storage and vector databases; training or fine-tuning credits; evaluation runs.

- Low-cost options: Start with small retrieval corpora, prompt catalogs, and lightweight adapters before full model fine-tuning. Use open formats for datasets and prompt templates to avoid lock-in.
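The “basic statistical testing” above can be as simple as a percentile bootstrap. The sketch below (Python is used for all examples in this article) estimates a confidence interval for the difference in acceptance rate between two prompt variants. The function name, the 0/1 outcome encoding, and the default of 2,000 resamples are illustrative assumptions, not a prescribed method.

```python
import random


def bootstrap_diff_ci(a, b, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for mean(b) - mean(a).

    a, b: lists of 0/1 outcomes (1 = output accepted) for the control
    and candidate variants. Returns (point_estimate, lower, upper).
    """
    rng = random.Random(seed)  # fixed seed so reruns are reproducible
    diffs = []
    for _ in range(n_resamples):
        # Resample each group independently, with replacement.
        ra = [rng.choice(a) for _ in a]
        rb = [rng.choice(b) for _ in b]
        diffs.append(sum(rb) / len(rb) - sum(ra) / len(ra))
    diffs.sort()
    lower = diffs[int((alpha / 2) * n_resamples)]
    upper = diffs[int((1 - alpha / 2) * n_resamples) - 1]
    point = sum(b) / len(b) - sum(a) / len(a)
    return point, lower, upper


control = [1] * 60 + [0] * 40    # 60% accepted
candidate = [1] * 75 + [0] * 25  # 75% accepted
print(bootstrap_diff_ci(control, candidate))
```

If the interval excludes zero, the candidate’s lift is unlikely to be explained by sampling noise alone; if it straddles zero, collect more traffic before shipping.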

Step-by-step implementation

- Map “golden workflows.” Identify two core user journeys where AI can reduce time-to-value (e.g., triage a support ticket; draft a sales email).

- Instrument end to end. Log inputs, outputs, user actions, latency, and outcome labels (accepted, edited, rejected).

- Create a minimal retrieval corpus. Begin with your top 50–200 canonical documents or snippets that resolve the majority of user tasks.

- Iterate with synthetic data. Generate edge cases and rare scenarios; validate with human review before model updates.

- Close the loop. Ship small improvements weekly: update prompts, rules, and entity dictionaries; evaluate each change against a fixed test set.
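To make “instrument end to end” concrete, here is a minimal sketch of one logged event and an outcome summary, assuming JSON Lines logs and a three-way outcome label. AssistEvent, to_json_line, outcome_shares, and the field names are hypothetical; adapt them to your own analytics pipeline.

```python
import json
import time
from collections import Counter
from dataclasses import asdict, dataclass, field
from typing import Iterable, Literal

Outcome = Literal["accepted", "edited", "rejected"]


@dataclass
class AssistEvent:
    workflow: str        # e.g., "ticket_triage" (hypothetical name)
    prompt_version: str  # pins the prompt/rules version that produced the output
    input_text: str
    output_text: str
    latency_ms: float
    outcome: Outcome     # label derived from what the user did next
    ts: float = field(default_factory=time.time)


def to_json_line(event: AssistEvent) -> str:
    """Serialize one event as a JSON line, ready to append to a log."""
    return json.dumps(asdict(event)) + "\n"


def outcome_shares(lines: Iterable[str], workflow: str) -> dict[str, float]:
    """Share of accepted / edited / rejected outputs for one workflow."""
    counts = Counter(
        rec["outcome"]
        for rec in map(json.loads, lines)
        if rec["workflow"] == workflow
    )
    total = sum(counts.values())
    if total == 0:
        return {}
    return {k: counts[k] / total for k in ("accepted", "edited", "rejected")}
```

Pinning prompt_version on every event is what later lets you attribute a shift in outcomes to a specific change.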

Beginner modifications and progressions

- Start small: Use a single “assist” surface (one button, one panel) and one retrieval index.

- Scale up: Add domain-specific evaluators (e.g., a regex or rule-based checker) to catch known failure modes, then expand to multi-index retrieval (product docs + policy + knowledge base).

Recommended cadence and metrics

- Frequency: Weekly data curation; evaluation refresh every two weeks; monthly fine-tuning or adapter retrains.

- KPIs: Top-1 retrieval hit rate, edit-accept rate, time saved per task, and regression-free release rate.

- Guardrail metrics: Hallucination incidents per 1,000 requests, personal data leakage rate, and blocked-response precision.
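The retrieval and guardrail KPIs above reduce to simple ratios. A sketch, assuming you have labeled which documents are relevant for each logged query; the helper names are hypothetical.

```python
def top_k_hit_rate(retrieved: list[list[str]], relevant: list[set[str]], k: int = 1) -> float:
    """Fraction of queries where at least one relevant doc appears in the top k."""
    hits = sum(1 for docs, gold in zip(retrieved, relevant) if gold & set(docs[:k]))
    return hits / len(retrieved)


def per_thousand(incidents: int, requests: int) -> float:
    """Normalize an incident count to a rate per 1,000 requests."""
    return 1000 * incidents / requests


retrieved = [["d1", "d2"], ["d3", "d1"], ["d4", "d5"]]  # ranked results per query
relevant = [{"d1"}, {"d1"}, {"d9"}]                     # labeled gold docs per query
print(top_k_hit_rate(retrieved, relevant, k=1))  # top-1 hit rate
print(per_thousand(3, 12_000))                   # hallucinations per 1,000 requests
```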

Safety, caveats, and common mistakes

- Over-relying on synthetic data without validating distributional similarity to production traffic.

- Treating user data as “free”; ensure consent and opt-outs are clear.

- Allowing silent regressions; always run a locked test suite on each change.

Mini-plan example (2–3 steps)

- Week 1: Instrument two workflows and build a 150-document retrieval corpus.

- Week 2: Add synthetic edge cases for rare intents and run a baseline evaluation; ship the best prompt + rules variant.

Product architecture patterns: RAG, fine-tuning, and agentic systems

What it is and why it matters

Modern AI products combine three building blocks:

- Retrieval-augmented generation (RAG): Ground responses in your verified knowledge, reducing hallucinations and enabling fast updates.

- Fine-tuning or adapters: Teach the model domain style, structured outputs, and shorthand.

- Agentic workflows: Break complex tasks into tool-using steps with explicit plans, memory, and verification.

Requirements and low-cost alternatives

- Core needs: A vector database, prompt management, evaluation harness, and access to base models.

- Skills: Prompt engineering, schema design, and tool integration (search, APIs, calculators).

- Low-cost options: Start with standard embeddings, a single function-calling tool, and a prompt-only system; upgrade to fine-tuning later.
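For the prompt-only starting point, retrieval can be a brute-force cosine-similarity search. The sketch below assumes embeddings have already been computed by whatever standard embedding model you use; the toy two-dimensional vectors and document IDs exist only for illustration, and a vector database would replace the linear scan at scale.

```python
import math


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def top_k(query_vec: list[float], index: dict[str, list[float]], k: int = 3) -> list[str]:
    """Linear scan over an in-memory index of doc_id -> embedding."""
    scored = sorted(
        ((cosine(query_vec, vec), doc_id) for doc_id, vec in index.items()),
        reverse=True,
    )
    return [doc_id for _, doc_id in scored[:k]]


# Toy embeddings; real ones come from your embedding model.
index = {"refunds": [1.0, 0.0], "shipping": [0.0, 1.0], "returns": [0.7, 0.7]}
print(top_k([1.0, 0.1], index, k=2))
```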

Step-by-step implementation

- Define intents and schemas. For each user intent (answer, extract, transform, plan), design output schemas and validation rules.

- Start with RAG. Index curated sources; implement re-ranking and citation snippets; log retrieved passages for debuggability.

- Add a verifier. Before responses reach the user, run checks: schema validation, forbidden content filters, and a simple fact-check against the retrieved passages.

- Introduce lightweight agency. For multi-step tasks, add a planner tool that decomposes tasks and calls other tools with explicit inputs/outputs.

- Fine-tune last. Once prompts and schemas stabilize, fine-tune adapters for style and structure; keep a prompt-only fallback.
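The “add a verifier” step might look like the following sketch. It assumes a hypothetical output schema (an answer string plus citations, each with a passage id and a verbatim quote) and treats “fact-check” as the crude rule that every quote must appear in a retrieved passage. Production systems often use richer checks, such as a JSON Schema validator or an entailment model.

```python
from typing import Any

# Hypothetical schema for an "answer" intent: field name -> expected type.
ANSWER_SCHEMA: dict[str, type] = {"answer": str, "citations": list}


def validate_schema(output: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """Return a list of schema violations (empty if valid)."""
    errors = []
    for key, typ in schema.items():
        if key not in output:
            errors.append(f"missing field: {key}")
        elif not isinstance(output[key], typ):
            errors.append(f"wrong type for {key}: expected {typ.__name__}")
    return errors


def verify(output: dict[str, Any], passages: dict[str, str]) -> list[str]:
    """Schema check first, then a verbatim-quote grounding check."""
    errors = validate_schema(output, ANSWER_SCHEMA)
    if errors:
        return errors  # don't fact-check malformed output
    if not output["citations"]:
        errors.append("no citations")
    for cite in output["citations"]:
        if not isinstance(cite, dict):
            errors.append("citation is not an object")
            continue
        text = passages.get(cite.get("id"))
        quote = cite.get("quote", "")
        if text is None:
            errors.append(f"cites unretrieved passage {cite.get('id')!r}")
        elif not quote or quote not in text:
            errors.append(f"quote not found in passage {cite.get('id')!r}")
    return errors


passages = {"p1": "Refunds are issued within 14 days."}
ok = {"answer": "Within 14 days.", "citations": [{"id": "p1", "quote": "within 14 days"}]}
print(verify(ok, passages))  # an empty list means the response may be shown
```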

Beginner modifications and progressions

- Basic: Single-hop RAG with top-k retrieval and strict output schemas.

- Intermediate: Add query rewriting, multi-vector retrieval, and re-ranking.

- Advanced: Tool-using agents with memory and self-verification across steps.

Cadence and metrics

- Frequency: Weekly architecture reviews; monthly refactors.

- KPIs: Answer correctness on eval sets, citation coverage, structured-output validity, and tool-call success rates.

- User metrics: Edit-accept rate and task completion time.

Safety, caveats, and common mistakes

- Allowing unbounded tool calls without timeouts or budgets.

- Skipping schema validation; malformed outputs break downstream systems.

- Ignoring retrieval freshness; stale indices degrade trust.
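One way to bound tool calls is a small runner that enforces a per-request call budget and a per-call timeout. This is a sketch under assumptions: the class and exception names are hypothetical, and Python threads cannot be forcibly stopped, so a timed-out call is abandoned rather than killed. Tools that can hang indefinitely belong in a separate process or behind their own network timeouts.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout
from typing import Any, Callable


class BudgetExceeded(Exception):
    """Raised when a request has used up its tool-call budget."""


class ToolRunner:
    """Runs tool calls under a per-call timeout and a per-request call budget."""

    def __init__(self, max_calls: int = 5, timeout_s: float = 2.0):
        self.max_calls = max_calls
        self.timeout_s = timeout_s
        self.calls = 0
        # One worker per allowed call, so a stuck call can't block later ones.
        self._pool = ThreadPoolExecutor(max_workers=max(1, max_calls))

    def call(self, tool: Callable[..., Any], *args: Any) -> Any:
        if self.calls >= self.max_calls:
            raise BudgetExceeded(f"tool-call budget of {self.max_calls} exhausted")
        self.calls += 1
        future = self._pool.submit(tool, *args)
        try:
            return future.result(timeout=self.timeout_s)
        except FutureTimeout:
            # The worker thread keeps running; we simply stop waiting for it.
            raise TimeoutError(f"{tool.__name__} exceeded {self.timeout_s}s") from None


runner = ToolRunner(max_calls=2, timeout_s=0.05)
print(runner.call(lambda x: x + 1, 1))
```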

Mini-plan example (2–3 steps)

- Step 1: Deploy RAG with schema validation for one workflow.

- Step 2: Add a plan-and-verify step for multi-tool tasks; track tool-call failure reasons.

Go-to-market for AI products: pricing, packaging, and compliance

What it is and why it matters

AI products often win with bottom-up adoption but monetize on usage and outcomes. Pricing must reflect compute realities and perceived value, while packaging must be simple enough for self-serve trials but enterprise-ready for security reviews.

Requirements and low-cost alternatives

- Core needs: A metering service, cost analytics, PII controls, and a security review packet (architecture diagrams, data flows, subprocessors, and guardrails).

- Skills: Pricing design, demand testing, and sales enablement.

- Low-cost options: Offer a free tier with strict caps and optimize for one hero workflow; use credits tied to tokens or actions rather than unlimited trials.

Step-by-step implementation

- Define units. Choose a customer-friendly unit (tasks, documents, messages) and map it to internal costs (tokens, tool calls, context length).

- Set thresholds. Establish rate limits and overage pricing; communicate latency expectations.

- Operationalize trust. Prepare a one-pager covering data retention, training opt-outs, and incident response.

- Proof of value. Offer a two-week pilot with baseline and target KPIs; publish a results summary.
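Mapping a customer-facing unit to internal costs can start as two small functions. The per-token and per-tool-call rates below are placeholders, not any provider’s actual prices, and the plan structure (base fee, included tasks, flat overage rate) is one assumed packaging among many.

```python
from dataclasses import dataclass


@dataclass
class UnitCost:
    # Placeholder rates in dollars; substitute your provider's real prices.
    input_per_1k_tokens: float
    output_per_1k_tokens: float
    per_tool_call: float


def cost_per_task(tokens_in: int, tokens_out: int, tool_calls: int, rates: UnitCost) -> float:
    """Internal cost of one customer-facing task (e.g., one document processed)."""
    return (
        tokens_in / 1000 * rates.input_per_1k_tokens
        + tokens_out / 1000 * rates.output_per_1k_tokens
        + tool_calls * rates.per_tool_call
    )


def monthly_bill(tasks_used: int, included_tasks: int, base_fee: float, overage_per_task: float) -> float:
    """Base plan plus a flat rate for every task beyond the included amount."""
    overage = max(0, tasks_used - included_tasks)
    return base_fee + overage * overage_per_task


rates = UnitCost(input_per_1k_tokens=0.003, output_per_1k_tokens=0.015, per_tool_call=0.001)
print(cost_per_task(4000, 500, 2, rates))
print(monthly_bill(1200, included_tasks=1000, base_fee=49.0, overage_per_task=0.05))
```

Running cost_per_task at your typical and worst-case request sizes against the effective price per task shows whether long-context or tool-heavy requests are eroding margin.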

Beginner modifications and progressions

- Start: One plan, one add-on, and clear overages.

- Progress: Introduce enterprise controls (SAML, private connectors), and outcome-based pricing for specific workflows.

Cadence and metrics

- Frequency: Quarterly pricing reviews.

- KPIs: Free-to-paid conversion, average revenue per account, gross margins net of compute, and pilot-to-contract conversion.

Safety, caveats, and common mistakes

- Underpricing long-context or high-tooling interactions; hidden costs erode margins.

- Failing to provide an opt-out from training on customer data when required.

- Overcomplicating packaging; too many options cause choice paralysis and stall purchases.

Mini-plan example (2–3 steps)

- Step 1: Ship a usage-based plan with a hard cap and one enterprise add-on.

- Step 2: Run a pilot in one vertical; report time-saved per task and acceptance rate.

Infrastructure strategy: compute, procurement, and cost control

What it is and why it matters

Access to advanced accelerators can define a startup’s speed and unit costs. Top-tier data-center GPUs have sold for mid-five figures per card, and multi-GPU servers reach six figures, depending on configuration and supply. Teams must plan for availability, pre-emption, and scaling, and design for graceful degradation.

Requirements and low-cost alternatives

- Core needs: Cloud or colocation contracts, autoscaling, job schedulers, vector databases, and observability.

- Skills: Capacity planning, queueing theory basics, and cost modeling per request.

- Low-cost options: Mix spot or preemptible instances for non-urgent jobs; cache embeddings and reuse intermediate results.

Step-by-step implementation

- Baseline your cost per unit. Measure tokens in/out, context length, tool calls, and storage per request.

- Tier your workloads. Separate latency-critical inference from batch jobs (evaluation, indexing, training).

- Introduce hedging. Support at least two model providers and maintain a fallback prompt-only flow.

- Control context. Use rerankers and summaries to keep context windows tight; cap maximum tokens per request.

- Procure deliberately. Blend reserved capacity for steady load with on-demand for spikes; model the breakeven.
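“Control context” can be implemented as greedy packing: sort passages by reranker score and keep adding them while they fit a fixed token budget. This sketch counts tokens by splitting on whitespace, which is only a rough stand-in; swap in your model’s tokenizer for real budgets. The function name is hypothetical.

```python
from typing import Callable


def pack_context(
    passages: list[tuple[float, str]],
    max_tokens: int,
    count_tokens: Callable[[str], int] = lambda s: len(s.split()),  # rough proxy
) -> tuple[list[str], int]:
    """Keep the highest-scoring (score, text) passages that fit the budget."""
    chosen: list[str] = []
    used = 0
    for _, text in sorted(passages, key=lambda p: p[0], reverse=True):
        n = count_tokens(text)
        if used + n <= max_tokens:  # skip passages that would overflow
            chosen.append(text)
            used += n
    return chosen, used


ranked = [(0.9, "a b c d"), (0.5, "e f"), (0.8, "g h i j k l")]
print(pack_context(ranked, max_tokens=8))
```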

Beginner modifications and progressions

- Basic: Single cloud, one model, strict token caps.

- Intermediate: Multi-provider routing, content caching, dynamic top-k retrieval.

- Advanced: Hybrid cloud + colocation, custom accelerators, and dedicated data planes.

Cadence and metrics

- Frequency: Weekly cost reviews; monthly capacity tests.

- KPIs: p95 latency, cost per 1,000 tokens, cache hit rate, and incident minutes.

- Guardrails: Serve degraded responses (e.g., cached or prompt-only) when an SLA is at risk of breach.
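p95 latency and the rule to degrade when an SLA is at risk can be combined in a few lines. A sketch using the nearest-rank percentile; the 90% headroom threshold and the function names are assumptions to tune, not a standard.

```python
import math


def percentile(values: list[float], p: float) -> float:
    """Nearest-rank percentile for p in (0, 100]."""
    ordered = sorted(values)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def should_degrade(recent_latencies_ms: list[float], slo_p95_ms: float, headroom: float = 0.9) -> bool:
    """Switch to the degraded path once p95 reaches 90% of the SLO target."""
    return percentile(recent_latencies_ms, 95) >= headroom * slo_p95_ms


window = [100.0] * 18 + [950.0, 960.0]  # last 20 request latencies
print(percentile(window, 95), should_degrade(window, slo_p95_ms=1000.0))
```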

Safety, caveats, and common mistakes

- Failing to cap context expansion; costs balloon invisibly.

- Underestimating queueing delays under bursty traffic.

- Over-optimizing before product-market fit; avoid premature commitments.
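Queueing delay grows nonlinearly as utilization approaches capacity. The textbook M/M/1 model (Poisson arrivals, exponential service times, a single server) gives a mean time in system of W = 1 / (mu - lambda), where mu is the service rate and lambda the arrival rate. Bursty traffic generally makes delays worse than this model predicts, so treat its output as an optimistic estimate.

```python
def mm1_time_in_system(arrival_rate: float, service_rate: float) -> float:
    """Mean time in system (seconds) for an M/M/1 queue: W = 1 / (mu - lambda)."""
    if arrival_rate >= service_rate:
        return float("inf")  # utilization >= 1: the queue grows without bound
    return 1.0 / (service_rate - arrival_rate)


# A server that handles 10 requests/s (100 ms each on average).
for load in (5.0, 9.0, 9.5):
    print(load, mm1_time_in_system(load, 10.0))
```

With a service rate of 10 requests per second, going from 50% to 90% utilization raises the mean time in system from 0.2 s to 1.0 s: five times the delay for less than twice the load.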

Mini-plan example (2–3 steps)

- Step 1: Add a reranker and token budget to trim context by 30–50%.

- Step 2: Implement a second provider and a read-only fallback when primary capacity saturates.
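A second provider plus a fallback can be wired as an ordered try-list. In this sketch, each provider is any callable that takes a prompt and raises the hypothetical ProviderError on capacity or timeout failures; the fallback stands in for a cached or prompt-only path.

```python
from typing import Callable


class ProviderError(Exception):
    """Hypothetical error your provider wrappers raise on capacity/timeouts."""


def generate_with_fallback(
    prompt: str,
    providers: list[tuple[str, Callable[[str], str]]],
    fallback: Callable[[str], str],
) -> tuple[str, str, list[str]]:
    """Try providers in order; if all fail, use the reduced-capability path.

    Returns (source, text, errors) so callers can log why a provider failed.
    """
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt), errors
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")
    return "fallback", fallback(prompt), errors
```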

MLOps and evaluation: the moat you can actually build

What it is and why it matters

For production AI, the best moat is not a proprietary model but a feedback-tight evaluation stack. Live traffic changes constantly; your evaluation harness must detect regressions, enforce safety, and measure business outcomes—not just benchmark scores.

Requirements and low-cost alternatives

- Core needs: A test set of real prompts; labeled outcomes; rule-based checks; a sandbox with golden documents or expected outputs.

- Skills: Designing task-level metrics, inter-rater reliability (if using human labels), and basic statistical testing.

- Low-cost options: Begin with a spreadsheet of 100–200 prompts and ground-truth responses; run batch evals nightly.

Step-by-step implementation

- Create a seed eval set. Include common, edge, and adversarial cases; store expected outcomes and pass/fail criteria.

- Instrument everything. Log retrievals, intermediate tool calls, and validation errors for post-mortems.

- Automate regression checks. Block releases that degrade key metrics beyond a small threshold.

- Tie to business outcomes. Map eval metrics to conversion, retention, or time-saved.
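“Block releases that degrade key metrics beyond a small threshold” can be a short gate in CI. The sketch assumes per-task pass/fail results from the locked eval set for both the current release and the candidate; the two-point threshold is an arbitrary placeholder. With small eval sets, pair it with a confidence interval, since one flipped case can move a task’s pass rate by several points.

```python
def pass_rates(results: dict[str, list[bool]]) -> dict[str, float]:
    """Pass rate per task from lists of pass/fail outcomes."""
    return {task: sum(r) / len(r) for task, r in results.items()}


def regression_gate(
    baseline: dict[str, list[bool]],
    candidate: dict[str, list[bool]],
    max_drop: float = 0.02,
) -> list[str]:
    """Tasks whose pass rate fell by more than max_drop (missing tasks count as 0)."""
    base, cand = pass_rates(baseline), pass_rates(candidate)
    return [t for t in base if base[t] - cand.get(t, 0.0) > max_drop]


baseline = {"triage": [True] * 9 + [False], "draft": [True] * 8 + [False] * 2}
candidate = {"triage": [True] * 10, "draft": [True] * 7 + [False] * 3}
failing = regression_gate(baseline, candidate)
print("blocked:" if failing else "ok", failing)
```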

Beginner modifications and progressions

- Basic: Static test set; manual review of failures.

- Intermediate: Synthetic adversarial generation and semantic diffing.

- Advanced: Continuous evaluation with shadow traffic and anomaly detection.

Cadence and metrics

- Frequency: Nightly batch evals; weekly manual review.

- KPIs: Accuracy by task, hallucination incidents, structured-output validity, and regression-free deploys.

- User metrics: Edit-accept rates and resolution times.

Safety, caveats, and common mistakes

- Optimizing for benchmarks that don’t match your users’ tasks.

- Ignoring inter-rater agreement; noisy labels will mislead development.

- Shipping changes without re-running evals across critical workflows.
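Inter-rater agreement is commonly summarized with Cohen’s kappa, which corrects raw agreement for the agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e). A minimal two-rater sketch:

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa for two raters labeling the same items."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    ca, cb = Counter(rater_a), Counter(rater_b)
    # Chance agreement: probability both raters pick the same label independently.
    expected = sum(ca[label] * cb[label] for label in ca) / (n * n)
    if expected == 1:
        return 1.0  # both raters used one identical label; kappa is undefined
    return (observed - expected) / (1 - expected)


a = ["pass", "pass", "fail", "fail"]
b = ["pass", "fail", "fail", "fail"]
print(cohens_kappa(a, b))
```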

Mini-plan example (2–3 steps)

- Step 1: Build a 150-prompt test set and automate pass/fail checks.

- Step 2: Add nightly runs and a dashboard tying model metrics to business outcomes.

Responsible AI operations: privacy, safety, and governance

What it is and why it matters

Safety and privacy are not just ethical obligations; they’re commercial requirements and, in many jurisdictions, legal ones. The EU AI Act entered into force in August 2024 and applies in phases: prohibitions and AI-literacy requirements from February 2025, obligations for general-purpose model providers from August 2025, most remaining provisions from August 2026, and certain high-risk obligations for AI embedded in regulated products from August 2027. Startups that treat documentation and controls as product features win enterprise trust.

Requirements and low-cost alternatives

- Core needs: Data-flow diagrams, retention and deletion policies, content filters, bias and fairness tests, and an incident response plan.

- Skills: Threat modeling, red-teaming, and documentation.

- Low-cost options: Start with a minimal set of filters and clear data-handling choices (e.g., no training on customer inputs by default); expand as requirements demand.

Step-by-step implementation

- Map data flows. Identify what data is collected, where it moves, how long it’s kept, and who can access it.

- Define unacceptable behaviors. Block jailbreaks, obvious disallowed content, and dangerous tool actions.

- Red-team. Run internal adversarial tests; fix high-severity issues before release.

- Document and disclose. Share limitations, known failure modes, and opt-out options.

Beginner modifications and progressions

- Basic: Minimal filters, privacy policy, and data deletion endpoint.

- Intermediate: Context-aware filtering, per-tenant isolation, and role-based access.

- Advanced: Differential privacy, cryptographic controls, and third-party audits.

Cadence and metrics

- Frequency: Quarterly risk reviews; monthly red-team sprints.

- KPIs: Blocked-content precision/recall, incident resolution time, and audit-ready artifact coverage.
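Blocked-content precision and recall follow from a labeled sample of filter decisions. A sketch, assuming each item has been hand-labeled as should-block or not:

```python
def precision_recall(blocked: list[bool], should_block: list[bool]) -> tuple[float, float]:
    """Precision: share of blocks that were correct. Recall: share of bad items caught."""
    tp = sum(b and s for b, s in zip(blocked, should_block))
    fp = sum(b and not s for b, s in zip(blocked, should_block))
    fn = sum(not b and s for b, s in zip(blocked, should_block))
    # Convention here: report 0.0 when a ratio is undefined (no blocks / no bad items).
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


blocked = [True, True, False, False, True]
should_block = [True, False, True, False, True]
print(precision_recall(blocked, should_block))
```

Low precision maps to the over-blocking mistake below; low recall means harmful content is getting through.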

Safety, caveats, and common mistakes

- Over-blocking to the point of breaking legitimate workflows.

- Failing to tell users what the system can’t do.

- Treating governance as a one-time checklist instead of a continuous process.

Mini-plan example (2–3 steps)

- Step 1: Publish your model card and data-handling summary.

- Step 2: Add a red-team sprint before each major release and track fixes.

Measuring ROI: unit economics and outcome-based thinking

What it is and why it matters

AI can reduce manual effort, expand coverage, and unlock new revenue—but only if you measure it. Translate each product surface into a small model of inputs and outputs: tokens, time, accuracy, and dollars.

Requirements and low-cost alternatives

- Core needs: Analytics with event-level logging, cost meter, cohort analysis, and a financial model.

- Skills: Basic spreadsheet modeling and experimentation.

- Low-cost options: Use open-source analytics and a simple warehouse; export weekly snapshots to a spreadsheet until scale demands more.

Step-by-step implementation

- Define a unit. Pick a task that captures user value (e.g., one resolved ticket).

- Quantify baseline. Measure time and cost without AI assistance.

- Measure uplift. Track time saved, accuracy, and downstream conversion with AI.

- Model breakeven. Include compute, storage, and support; adjust pricing or caps to keep gross margin healthy.
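The breakeven step can be modeled per unit from both sides: what the customer saves and what you keep. The sketch below folds the expected cost of rework into the customer’s time savings, following the caveat about error costs; every field is a hypothetical input you would replace with measured values.

```python
from dataclasses import dataclass


@dataclass
class TaskEconomics:
    # All inputs are hypothetical placeholders; measure your own.
    baseline_minutes: float     # human-only time per task
    assisted_minutes: float     # time per task with AI assistance
    rework_rate: float          # share of AI outputs that need rework
    rework_minutes: float       # extra time when rework is needed
    labor_cost_per_hour: float  # customer's loaded labor cost
    price_per_task: float       # what you charge per unit
    cost_per_task: float        # your compute + storage + support per unit

    def customer_savings(self) -> float:
        """Net dollars saved per task after expected rework and your price."""
        saved_min = (self.baseline_minutes - self.assisted_minutes
                     - self.rework_rate * self.rework_minutes)
        return saved_min / 60 * self.labor_cost_per_hour - self.price_per_task

    def gross_margin(self) -> float:
        """Your gross margin per task, net of compute and other unit costs."""
        return (self.price_per_task - self.cost_per_task) / self.price_per_task


e = TaskEconomics(12, 4, 0.2, 10, 60, price_per_task=1.00, cost_per_task=0.25)
print(e.customer_savings(), e.gross_margin())
```

If customer_savings turns negative, price or rework rate is the problem; if gross_margin is thin, look at context size and caps first.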

Beginner modifications and progressions

- Start: One task, one cohort, one metric.

- Scale: Multi-task portfolios with weighted ROI and capacity planning.

Cadence and metrics

- Frequency: Weekly ROI reviews; monthly pricing tweaks.

- KPIs: Gross margin net of compute, time-to-value, payback period, and churn.

Safety, caveats, and common mistakes

- Ignoring the cost of errors (e.g., bad outputs that require rework).

- Measuring only vanity metrics (queries per day) instead of outcomes.

- Underestimating support and retraining costs.

Mini-plan example (2–3 steps)

- Step 1: Publish a simple ROI dashboard for one workflow.

- Step 2: Use it to justify pricing and capacity decisions.

Quick-start checklist

- Two “golden” workflows defined with clear success criteria

- Minimal retrieval corpus curated and indexed

- Instrumentation for inputs, outputs, latency, and user edits

- Fixed evaluation set with pass/fail criteria

- Standard operating procedure for safety and red-team testing

- Usage-based pricing with clear caps and overages

- Cost meter tied to tokens, context, and tool calls

- Documentation packet covering data flows and retention

Troubleshooting and common pitfalls

Symptoms and likely causes

- High costs with stable traffic: Context windows are bloated; retrieval returns irrelevant passages; caching disabled.

- Inconsistent outputs: No schema validation; missing verifier step; unversioned prompts or sampling settings that drift between releases.

- Users don’t trust answers: Stale knowledge base; missing citation snippets; weak red-team coverage.

- Pilot success doesn’t translate to production: Eval set drift; business metrics not tied to model metrics; insufficient onboarding.

- Procurement stalls: Incomplete security package; unclear data-handling and opt-out story.

Fixes that work

- Add a reranker and strict token budgets.

- Introduce schema validation and a verifier before responses are shown.

- Refresh indices weekly; expose citations and feedback buttons.

- Lock an evaluation set and prevent regression; add guardrail metrics.

- Maintain a clean, shareable packet of architecture, data flows, and incident process.

How to measure progress and results

Foundational metrics

- Retrieval quality: Top-k hit rate and novelty coverage.

- Output quality: Task-level accuracy, structured-output validity, and edit-accept rate.

- User value: Time saved per task, resolution rate, and conversion lift.

- Reliability: p95 latency, incident minutes, and regression-free deploys.

- Economics: Cost per 1,000 tokens, gross margin, and payback period.

Diagnostic metrics

- Context efficiency: Average tokens per request and reranker effectiveness.

- Tooling: Tool-call success rate and retry rate.

- Safety: Blocked-content precision/recall and leakage incidents.

Review cadence

- Daily: reliability and safety dashboards

- Weekly: product quality and ROI

- Monthly: pricing, packaging, and capacity

A simple 4-week starter plan

Week 1 — Foundation and focus

- Define two “golden” workflows with crisp success criteria.

- Curate a 150-document knowledge base; index with embeddings.

- Instrument logging for inputs, outputs, latency, and edits.

- Draft a baseline evaluation set (150 prompts: common, edge, adversarial).

Week 2 — Ship a reliable v1

- Implement RAG with schema-validated outputs and a verifier.

- Add citation snippets and a feedback control on each response.

- Run the evaluation set; fix failures; ship to a 5–10 user beta.

- Publish a minimal data-handling and security summary.

Week 3 — Prove value and control costs

- Add a reranker; cap token budgets; cache frequent results.

- Measure time-saved per task and edit-accept rate in production.

- Design and announce usage-based pricing with clear caps.

Week 4 — Harden, learn, and prepare to scale

- Run a red-team sprint; fix high-severity issues.

- Add nightly batch evals and block regressions automatically.

- Prepare a two-week pilot plan for one target account: baseline, target, and success criteria.

Frequently asked questions

1) How do I decide between RAG and fine-tuning?

Start with RAG to ground answers in your own knowledge and enable quick updates. Add fine-tuning when you need consistent style, structured outputs, or shorthand that prompts alone can’t deliver. Many teams do both: RAG for truth, fine-tuning for form.

2) What’s a realistic target for edit-accept rate?

Aim for a majority of outputs needing minimal edits in your top workflows. Track acceptance by task, not globally, and compare against human-only baselines.

3) How do I keep compute costs under control?

Cap context length, add a reranker, cache embeddings and frequent results, and tier workloads. Measure cost per unit (e.g., per resolved ticket) rather than just tokens.

4) How do I evaluate hallucination?

Use a fixed test set with ground-truth answers and design pass/fail checks for factuality. Also track incidents per 1,000 requests in production and tie them to root causes (stale docs, missing citations).

5) What’s the minimum documentation packet for enterprise buyers?

Provide architecture and data-flow diagrams, retention and deletion policies, subprocessors, safety controls, and an incident response plan. Keep it concise and current.

6) Should I build my own models?

Unless you have a unique data advantage and the resources to train and maintain models, start with hosted models. Differentiate with data curation, evaluation, and workflow design.

7) How do I design pricing?

Choose a unit that aligns with user value (documents, actions, messages) and map it to internal costs. Start simple with one plan and overages; refine quarterly using ROI data.

8) How do I prepare for changing regulations?

Track phased timelines in your target markets, especially dates tied to prohibitions, literacy requirements, and obligations for general-purpose models. Build documentation and controls early so you’re not scrambling near deadlines.

9) What if users don’t trust AI outputs?

Show citations, make it easy to give feedback, and fix high-impact errors quickly. Publish a summary of known limitations and what the system won’t do.

10) How do I avoid vendor lock-in?

Use open formats for prompts, datasets, and evaluation artifacts. Support multiple providers and maintain a prompt-only or reduced-capability fallback.

11) What’s the quickest way to demonstrate ROI?

Pick one high-frequency workflow with measurable outcomes, run a two-week pilot with a baseline, and report time-saved and acceptance rates.

12) Do I need agentic systems from day one?

No. Start with single-turn tasks and add planning and tool-use only where necessary. Each layer adds complexity and new failure modes.

Conclusion

The power of numbers is how AI startups win: precise metrics, relentless evaluation, disciplined cost control, and honest safety practices. Adoption and investment tailwinds are real, but the companies that endure are the ones that convert compute into customer value with measurable, repeatable processes. Start small, instrument everything, and ship improvements every week. In a market this dynamic, operational excellence—not just model access—is the ultimate unfair advantage.

Call to action: Pick one workflow, build a minimal retrieval system this week, and prove value with numbers your customers can feel.

References

- AI Index 2025 Report (Overview), Stanford Institute for Human-Centered AI, April 7, 2025. https://hai.stanford.edu/ai-index/2025-ai-index-report

- AI Index 2025 Report (PDF), Stanford Institute for Human-Centered AI, April 18, 2025. https://hai-production.s3.amazonaws.com/files/hai_ai_index_report_2025.pdf

- AI Index 2025: State of AI in 10 Charts, Stanford Institute for Human-Centered AI, April 7, 2025. https://hai.stanford.edu/news/ai-index-2025-state-of-ai-in-10-charts

- Economy Chapter: Private Investment Trends, AI Index 2025, Stanford Institute for Human-Centered AI, 2025. https://hai.stanford.edu/ai-index/2025-ai-index-report/economy

- The State of AI (Global Survey 2025), McKinsey & Company, March 12, 2025. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

- The State of AI in Early 2024: Gen AI Adoption Spikes and Starts to Generate Value (PDF), McKinsey & Company, May 30, 2024. https://www.mckinsey.com/~/media/mckinsey/business%20functions/quantumblack/our%20insights/the%20state%20of%20ai/2024/the-state-of-ai-in-early-2024-final.pdf

- Exclusive: Nvidia pursues $30 billion custom chip market with new unit, Reuters, February 10, 2024. https://www.reuters.com/technology/nvidia-chases-30-billion-custom-chip-market-with-new-unit-sources-2024-02-09/

- NVIDIA H100 Price Guide 2025: Detailed Costs and FAQs, Jarvis Labs (Docs), April 12, 2025. https://docs.jarvislabs.ai/blog/h100-price

- Application Timeline for Risk-Based AI Regulation in Europe, European Commission – Digital Strategy, accessed August 13, 2025. https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

- The Roadmap to the European AI Act: A Detailed Guide, Alexander Thamm blog, July 23, 2025. https://www.alexanderthamm.com/en/blog/eu-ai-act-timeline/

- Biggest Rounds Gobble Up A Growing Share Of Startup Funding, Crunchbase News, May 2, 2025. https://news.crunchbase.com/venture/startup-funding-biggest-rounds-openai-anthropic-data/

- Q2 Global Venture Funding Climbs In A Blockbuster Year, Crunchbase News, July 8, 2025. https://news.crunchbase.com/venture/global-funding-climbs-q2-2025-ai-ma-data/
