As of February 2026, the global economy has shifted from asking “What can AI do?” to “How fast can we train it?” This shift has birthed a new category of labor: the Silicon Workforce. Unlike the human workforce, which relies on biological experience and years of education, this digital workforce—composed of Large Language Models (LLMs), autonomous agents, and industrial robots—thrives on one thing: high-quality data.

However, the industry is running into a “data wall.” Much of the high-quality, human-generated text and imagery that fueled the initial AI boom has already been consumed. Enter synthetic data: artificially generated information that mirrors the statistical properties of real-world data while reducing privacy risk and sidestepping the limits of physical collection.

Key Takeaways

- Scalability: Synthetic data lets developers create virtually unlimited training sets, including scenarios that rarely occur in real life.

- Privacy-by-Design: Because well-generated synthetic data isn’t tied to real individuals, it can sidestep many GDPR and CCPA constraints, provided the generator does not leak real records.

- Bias Mitigation: Generative models can “balance” datasets by intentionally creating synthetic examples of underrepresented groups.

- Cost Efficiency: Generating data in a virtual environment is often cited as 10x to 100x cheaper than manual data labeling.

Who This Article Is For

This deep dive is designed for AI strategists, data scientists, and business leaders who are tasked with scaling digital operations. If you are struggling with data scarcity, privacy regulations, or the “sim-to-real” gap in robotics, this guide provides the technical and strategic roadmap for leveraging synthetic data in 2026.

Defining the Silicon Workforce

The term “Silicon Workforce” refers to the collective ecosystem of AI models and autonomous systems that perform cognitive or physical tasks previously reserved for humans. In 2026, this includes:

- Customer Service Agents: LLMs that handle complex, multi-turn negotiations.

- Autonomous Logistics: Drones and warehouse robots that navigate dynamic environments.

- Digital Twins: Virtual versions of factories or cities that simulate “what-if” scenarios.

For these entities to work effectively, they need to be trained on “edge cases”—those rare but critical events, like a pedestrian jumping in front of a self-driving car or a rare fraudulent transaction. Real-world data for these events is scarce. Synthetic data fills these gaps, providing the “on-the-job training” the silicon workforce needs to reach professional-grade reliability.

The Technology Behind the Synthesis

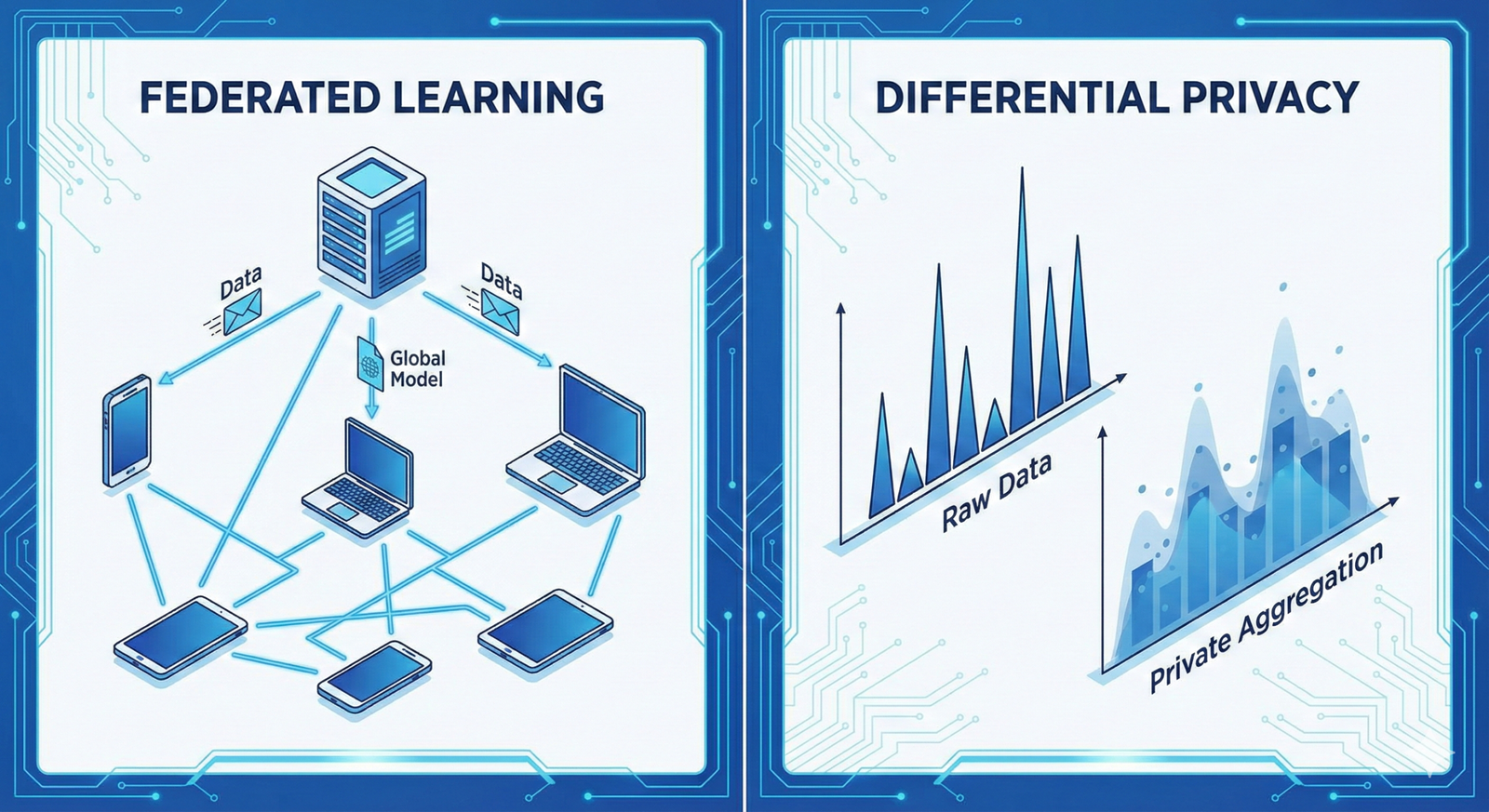

How do we create data that is “fake” but statistically “true”? Today, three primary architectures dominate the landscape.

1. Generative Adversarial Networks (GANs)

GANs consist of two neural networks: a Generator and a Discriminator. The generator creates fake data, and the discriminator tries to guess whether each sample is real. They “fight” until the generator becomes so skilled that the discriminator can no longer tell the difference. GANs have long been a workhorse for synthetic images and video, although diffusion models now match or surpass them on many image-generation tasks.
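To make the “fight” concrete, here is a minimal sketch of the two loss functions from the original GAN formulation (Goodfellow et al., 2014). It uses the commonly used “non-saturating” generator loss. The functions work on plain lists of discriminator probabilities, and the names are illustrative. A real implementation would compute these losses inside a deep learning framework and backpropagate through both networks.

```python
import math

EPS = 1e-12  # guards against log(0) when a probability is exactly 0 or 1


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for the discriminator.

    d_real: probabilities D(x) the discriminator assigns to real samples.
    d_fake: probabilities D(G(z)) it assigns to generated samples.
    The discriminator is rewarded for pushing d_real toward 1 and d_fake toward 0.
    """
    real_term = -sum(math.log(p + EPS) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p + EPS) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss: the generator is rewarded when D(G(z)) approaches 1."""
    return -sum(math.log(p + EPS) for p in d_fake) / len(d_fake)


# At the theoretical equilibrium the discriminator outputs 0.5 for everything,
# meaning it can no longer tell real from fake.
print(discriminator_loss([0.5, 0.5], [0.5, 0.5]))  # about 1.386 (2 * ln 2)
print(generator_loss([0.5, 0.5]))                  # about 0.693 (ln 2)
```

Training alternates between the two losses. One step lowers the discriminator loss by updating D, and the next lowers the generator loss by updating G. Each network's improvement raises the other's loss.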

2. Variational Autoencoders (VAEs)

VAEs compress real data into a probabilistic “latent space” and then learn to reconstruct it. By sampling new points from that latent space and decoding them, researchers can generate new data points that share the same DNA as the original set. VAEs are frequently used for synthetic tabular data (like financial records).
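Two ingredients make the latent space samplable. The first is the reparameterization trick, used to draw latent codes during training. The second is a KL-divergence penalty that keeps those codes close to a standard normal distribution. This sketch shows both for a diagonal Gaussian encoder.

The `toy_decoder` is a hypothetical stand-in with invented weights, not a trained network. In a real VAE, the encoder and decoder are neural networks trained jointly on reconstruction loss plus this KL term.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, 1) (the "reparameterization trick").

    Writing the sample this way keeps it differentiable with respect to mu and log_var,
    which is what lets the encoder be trained by gradient descent.
    """
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, sigma^2) || N(0, 1)), summed over latent dimensions.

    This term pulls every encoded point toward a standard normal, so that random
    draws from N(0, 1) land in regions the decoder knows how to reconstruct.
    """
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))


def toy_decoder(z):
    """Hypothetical stand-in for a trained decoder: maps a 2-D latent code to a
    (balance, monthly_spend) record. The weights are invented for illustration."""
    return [1000.0 + 250.0 * z[0], 300.0 + 80.0 * z[1] + 20.0 * z[0]]


# Generation after training: sample latent codes from the prior and decode them.
rng = random.Random(0)
synthetic_rows = [toy_decoder([rng.gauss(0.0, 1.0) for _ in range(2)]) for _ in range(5)]
```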

3. Diffusion Models

Diffusion models, the technology behind tools like Midjourney and Stable Diffusion, learn to reverse a gradual noising process. Generation starts from pure noise and removes it step by step until structured data emerges. In 2026, diffusion models are being used to generate synthetic sensor data for LiDAR and radar, which is essential for the silicon workforce in transportation.
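The “noising” half of a diffusion model is simple enough to write down directly. This sketch follows the DDPM formulation with a linear noise schedule. It computes how much of the original signal survives at each step and jumps straight to any noise level in closed form.

The sensor frame is a hypothetical list of normalized readings. The learned part, a network trained to predict and remove the added noise, is deliberately not shown.

```python
import math
import random


def linear_beta_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances rising linearly (the schedule used in the original DDPM paper)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar[t] = product of (1 - beta_s) for s <= t: the share of signal variance left at step t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def noisy_sample(x0, t, alpha_bars, rng):
    """Forward process q(x_t | x_0): jump straight to noise level t in closed form."""
    a = alpha_bars[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in x0]


alpha_bars = cumulative_alphas(linear_beta_schedule())
# Hypothetical normalized LiDAR range readings standing in for one real sensor frame.
frame = [0.42, 0.40, 0.77, 0.13]
slightly_noisy = noisy_sample(frame, 10, alpha_bars, random.Random(0))
pure_noise_ish = noisy_sample(frame, 999, alpha_bars, random.Random(0))
```

By the last step almost no signal remains. That is why generation can start from pure noise and run the learned denoiser backward.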

Why Real Data is No Longer Enough

For decades, the mantra was “Data is the new oil.” But oil is finite, and its extraction is messy. The silicon workforce has outpaced our ability to provide “natural” data for several reasons:

The Privacy Bottleneck

In sectors like healthcare and finance, using real patient or client data is a legal minefield. Synthetic data allows a bank to train a fraud-detection AI on millions of “synthetic” transactions that look like real fraud but belong to no real person.

The Problem of “The Long Tail”

In machine learning, “the long tail” refers to the many individually rare events at the far end of a distribution. If you are training a robot to work in a kitchen, you might have 10,000 hours of footage of it chopping onions. But do you have footage of the robot reacting to a grease fire or shattered glass? Synthetic data allows us to simulate these long-tail events millions of times.

Data Labeling Fatigue

Human labeling is slow and prone to error. To train a computer vision model, a human might have to draw boxes around cars in thousands of photos. Synthetic data comes pre-labeled. The computer generating the image already knows exactly where the car is, down to the last pixel.
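The “pre-labeled” point is easy to see in code. When the program places an object in a scene, it can emit the exact bounding box at the same time. This toy sketch “renders” a rectangular car into a blank grayscale grid. The function name and label format are illustrative assumptions; a real pipeline would use a 3D renderer and a standard annotation format.

```python
import random


def render_synthetic_scene(width, height, rng):
    """Draw one rectangular 'car' into a blank grayscale image and return its label.

    Because the generator chooses where the object goes, the bounding box is known
    exactly, so no human annotation step is needed.
    """
    image = [[0] * width for _ in range(height)]
    car_w = rng.randint(3, width // 2)
    car_h = rng.randint(2, height // 2)
    x0 = rng.randint(0, width - car_w)
    y0 = rng.randint(0, height - car_h)
    for y in range(y0, y0 + car_h):
        for x in range(x0, x0 + car_w):
            image[y][x] = 255
    # Box as (x_min, y_min, x_max, y_max), with the max edges exclusive.
    label = {"class": "car", "bbox": (x0, y0, x0 + car_w, y0 + car_h)}
    return image, label


rng = random.Random(7)
scenes = [render_synthetic_scene(32, 24, rng) for _ in range(100)]
```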

Industry-Specific Applications in 2026

1. Autonomous Vehicles and Robotics

The “Sim-to-Real” gap, the drop in performance when a system trained in simulation meets the physical world, has been one of the biggest hurdles in robotics. Companies like NVIDIA and Tesla use Digital Twins, highly accurate virtual replicas of the physical world, to train their silicon drivers in virtual cities. They can simulate rain, snow, glare, and even “reckless synthetic pedestrians” to ensure the AI is battle-tested before it touches asphalt.
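A common way to vary rain, glare, and pedestrians is domain randomization. Each simulated episode draws its conditions at random, so the model never over-fits to one “look” of the world.

This sketch samples such scenario configurations. The field names, ranges, and the `reckless_rate` parameter are hypothetical. This is not NVIDIA's or Tesla's API; a simulator would consume these configs to build each episode.

```python
import random
from dataclasses import dataclass


@dataclass
class ScenarioConfig:
    weather: str
    rain_intensity: float   # 0 = dry, 1 = downpour
    sun_glare: float        # 0 = none, 1 = blinding
    num_pedestrians: int
    reckless_pedestrian: bool


def sample_scenario(rng, reckless_rate=0.2):
    """Randomize the conditions of one simulated driving episode."""
    weather = rng.choice(["clear", "rain", "snow", "fog"])
    pedestrians = rng.randint(0, 30)
    return ScenarioConfig(
        weather=weather,
        rain_intensity=rng.uniform(0.3, 1.0) if weather == "rain" else 0.0,
        sun_glare=rng.uniform(0.0, 1.0),
        num_pedestrians=pedestrians,
        # Hypothetical rate, set far above real-world frequency to rehearse the long tail.
        reckless_pedestrian=pedestrians > 0 and rng.random() < reckless_rate,
    )


rng = random.Random(123)
scenarios = [sample_scenario(rng) for _ in range(1000)]
```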

2. Financial Services

Synthetic tabular data is used to train AI in credit scoring and algorithmic trading. By generating synthetic market crashes, firms can stress-test their “silicon traders” against events that are rare in historical data, such as the 2008 financial crisis or sudden “flash crashes.”
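As a minimal illustration of a “synthetic crash,” this sketch simulates a price path as a random walk in log-returns and injects one sudden drop on a chosen day. It then measures the resulting maximum drawdown.

The parameters (`daily_vol`, `crash_day`, `crash_size`) are hypothetical. Production stress tests typically use richer models with fat tails and volatility clustering.

```python
import math
import random


def synthetic_price_path(rng, days=250, start_price=100.0, daily_vol=0.01,
                         crash_day=None, crash_size=0.2):
    """Random-walk price path (normally distributed daily log-returns) with an optional crash.

    crash_size=0.2 means the price falls an extra 20% on crash_day, on top of normal noise.
    """
    prices = [start_price]
    for day in range(1, days):
        log_return = rng.gauss(0.0, daily_vol)
        if day == crash_day:
            log_return += math.log(1.0 - crash_size)
        prices.append(prices[-1] * math.exp(log_return))
    return prices


def max_drawdown(prices):
    """Largest peak-to-trough decline, as a fraction of the running peak."""
    peak, worst = prices[0], 0.0
    for p in prices:
        peak = max(peak, p)
        worst = max(worst, (peak - p) / peak)
    return worst


calm = synthetic_price_path(random.Random(0))
crash = synthetic_price_path(random.Random(0), crash_day=100, crash_size=0.3)
```

A trading model can then be evaluated on many such paths. For example, you can check whether its losses on `crash` stay within a risk limit.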

3. Healthcare and Genomics

Privacy laws (like HIPAA) often prevent researchers from sharing rare disease data. By creating synthetic genomic sequences, scientists can train diagnostic AIs to recognize patterns in rare cancers while greatly reducing the risk of exposing any individual patient’s identity.

Common Mistakes in Synthetic Data Implementation

While synthetic data is a superpower, it is often misused. Here are the most frequent pitfalls:

- Model Collapse (Inbreeding): If each generation of a model is trained on synthetic data produced by the previous generation, quality degrades over time. Rare patterns disappear first, and outputs grow increasingly homogeneous. It’s like a photocopy of a photocopy. You must always maintain a “ground truth” anchor of real-world data.

- Ignoring Fidelity vs. Diversity: High fidelity means the data looks very real. High diversity means the data covers many different scenarios. Teams often optimize for fidelity (making it look pretty) but fail on diversity, leading to AI that is confident but wrong in new situations.

- The “Sim-to-Real” Delusion: Assuming that because an AI succeeded in a synthetic simulation, it will succeed in the messy, physical world. Validation on a small “gold set” of real data is mandatory.
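Model collapse can be reproduced with a deliberately tiny “model”: a Gaussian fitted to data. This toy sketch fits each generation only to samples drawn from the previous generation's fit. A second run mixes fresh real samples into every generation as the ground-truth anchor. The function names and settings are illustrative assumptions, not a standard benchmark.

```python
import random


def fit_gaussian(samples):
    """Maximum-likelihood mean and variance (dividing by n, as a naive model would)."""
    n = len(samples)
    mean = sum(samples) / n
    var = sum((x - mean) ** 2 for x in samples) / n
    return mean, var


def recursive_training(generations, n, rng, real_per_generation=0):
    """Each generation fits a Gaussian to samples drawn from the previous generation's fit.

    real_per_generation > 0 mixes fresh real-world samples (true N(0, 1)) into
    every generation, acting as the ground-truth anchor.
    """
    mean, var = 0.0, 1.0  # generation 0 matches the true distribution
    for _ in range(generations):
        data = [rng.gauss(mean, var ** 0.5) for _ in range(n)]
        data += [rng.gauss(0.0, 1.0) for _ in range(real_per_generation)]
        mean, var = fit_gaussian(data)
    return mean, var


_, collapsed_var = recursive_training(1000, 50, random.Random(0))
_, anchored_var = recursive_training(1000, 50, random.Random(0), real_per_generation=50)
```

With these settings, the unanchored variance typically shrinks toward zero over many generations, so the tails are lost first. The anchored run stays close to the true value of 1.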

Ethical Considerations: Bias and Fairness

A common misconception is that synthetic data is “neutral.” It is not. If the generative model is trained on a biased real-world dataset, it will simply automate and scale that bias.

Safety Disclaimer: When using synthetic data for financial lending or medical diagnostics, ensure that the generative process includes “fairness constraints.” Failure to do so can result in algorithmic discrimination that is difficult to trace back to the source.

In 2026, a widely recommended practice is targeted oversampling. If your real-world data lacks representation from a specific demographic, you can use synthetic generation to “fill in” those gaps, creating a more equitable silicon workforce.
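A simple, well-known form of targeted oversampling is SMOTE (Synthetic Minority Over-sampling Technique). It creates new minority-group records by interpolating between existing minority examples and their nearest neighbours. The sketch below uses SMOTE as a stand-in for the generative approaches discussed above, and the minority records are hypothetical.

Interpolation only spreads the examples you already have. It cannot correct biased labels or features, so the fairness constraints mentioned above still apply.

```python
import random


def smote(minority, num_new, k, rng):
    """SMOTE-style oversampling of a minority group.

    Each new point lies on the line segment between a randomly chosen minority
    example and one of its k nearest minority neighbours.
    """
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    synthetic = []
    for _ in range(num_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        others = [p for j, p in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda p: sq_dist(base, p))[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # how far along the segment, in [0, 1)
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbour)])
    return synthetic


# Hypothetical two-feature records for an underrepresented group.
minority_group = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
new_records = smote(minority_group, num_new=50, k=2, rng=random.Random(0))
```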

The Technical Workflow: How to Start

- Audit Your Data Gaps: Identify where your model is failing. Is it failing on edge cases? Is it failing because of a lack of volume?

- Select a Generative Method: Use GANs or diffusion models for visual tasks, VAEs for structured/tabular data, or LLMs for synthetic text.

- Generate and Filter: Not all synthetic data is good. Use a “Discriminator” or a secondary AI to filter out low-quality or nonsensical outputs.

- Augment, Don’t Replace: Start by mixing 20% synthetic data with 80% real data, gradually increasing the ratio as you validate performance.

- Validation: Always test the final model on a 100% real-world “holdout” dataset.
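The “augment, don't replace” and “100% real holdout” steps can be sketched as one helper. It carves a real-only holdout out first, then tops up the real training split with enough synthetic rows to hit a target synthetic share. The function name and the source tags are illustrative assumptions.

```python
import random


def build_training_mix(real, synthetic, synthetic_fraction, holdout_fraction, rng):
    """Split real data into train/holdout, then add synthetic rows to the training split.

    synthetic_fraction is the share of the *training set* that is synthetic
    (0.2 gives a 20/80 mix). The holdout is 100% real and is never mixed.
    """
    real = real[:]  # copy so the caller's list is not shuffled in place
    rng.shuffle(real)
    n_holdout = int(len(real) * holdout_fraction)
    holdout, real_train = real[:n_holdout], real[n_holdout:]
    n_syn = round(len(real_train) * synthetic_fraction / (1.0 - synthetic_fraction))
    n_syn = min(n_syn, len(synthetic))
    train = [(row, "real") for row in real_train]
    train += [(row, "synthetic") for row in rng.sample(synthetic, n_syn)]
    rng.shuffle(train)
    return train, holdout


real_rows = list(range(1000))                # stand-ins for real records
synthetic_rows = list(range(10000, 12000))   # stand-ins for generated records
train, holdout = build_training_mix(real_rows, synthetic_rows, 0.2, 0.2, random.Random(0))
```

To ramp up gradually, call the helper again with `synthetic_fraction` set to 0.3, 0.4, and so on. Keep the share only if performance on the real `holdout` holds up.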

Conclusion

The rise of the silicon workforce is inevitable, but its intelligence is tethered to the data we provide. Synthetic data is no longer a “luxury” for tech giants; it is a fundamental requirement for any organization looking to deploy AI at scale in 2026. By breaking the chains of manual data collection and the risks of privacy breaches, synthetic data allows us to build AI that is more robust, more private, and more capable of handling the complexities of our world.

As we move forward, the focus will shift from “Big Data” to “Good Data.” The organizations that master the art of synthesis—balancing real-world grounding with synthetic scale—will be the ones that lead the next industrial revolution.

Your next steps:

- Identify one “high-risk” or “low-volume” dataset in your pipeline.

- Experiment with a small-scale GAN or VAE to generate a 5x expansion of that set.

- Compare a metric such as mean squared error or accuracy, measured on real-world holdout data, for models trained with and without the synthetic boost.
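The with-and-without comparison can be as simple as the sketch below. It uses a nearest-centroid classifier, chosen only because it fits in a few lines; the same pattern applies to any model. The toy records are hypothetical. Both models are scored on the same real-only holdout.

```python
def train_centroids(rows):
    """rows: list of (features, label). Returns {label: mean feature vector}."""
    sums, counts = {}, {}
    for x, y in rows:
        if y not in sums:
            sums[y] = [0.0] * len(x)
            counts[y] = 0
        sums[y] = [s + v for s, v in zip(sums[y], x)]
        counts[y] += 1
    return {y: [s / counts[y] for s in sums[y]] for y in sums}


def predict(centroids, x):
    """Assign x to the label whose centroid is closest (squared Euclidean distance)."""
    return min(centroids, key=lambda y: sum((a - b) ** 2 for a, b in zip(x, centroids[y])))


def accuracy(centroids, rows):
    return sum(predict(centroids, x) == y for x, y in rows) / len(rows)


# Hypothetical toy data: one feature, two classes.
real_train = [([0.1], "legit"), ([0.3], "legit"), ([0.9], "fraud")]
synthetic_rows = [([0.8], "fraud"), ([1.0], "fraud"), ([0.7], "fraud")]
real_holdout = [([0.2], "legit"), ([0.75], "fraud"), ([0.6], "fraud")]

baseline = accuracy(train_centroids(real_train), real_holdout)
boosted = accuracy(train_centroids(real_train + synthetic_rows), real_holdout)
```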


FAQs

What is the difference between data augmentation and synthetic data?

Data augmentation involves making slight changes to existing real data (like flipping a photo or changing its brightness). Synthetic data is created from scratch by a generative model that understands the underlying distribution of the data, allowing for entirely new, unique data points.
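The distinction shows up clearly in code. The augmentation functions below transform one existing real image. The synthetic function draws a brand-new image from a fitted model.

The per-pixel Gaussian “model” and its `mean_image` are deliberately crude, hypothetical stand-ins for a real generative model.

```python
import random


def augment_flip(image):
    """Data augmentation: a horizontally mirrored copy of an existing real image."""
    return [row[::-1] for row in image]


def augment_brightness(image, delta):
    """Data augmentation: shift pixel intensities, clipped to the 0-255 range."""
    return [[min(255, max(0, p + delta)) for p in row] for row in image]


def synthesize_from_model(mean_image, noise_std, rng):
    """Synthetic sample: drawn from a crude fitted per-pixel Gaussian model,
    not derived from any single real image."""
    return [[min(255, max(0, round(rng.gauss(m, noise_std)))) for m in row] for row in mean_image]


real_image = [[10, 20, 30], [40, 50, 60]]
flipped = augment_flip(real_image)
brighter = augment_brightness(real_image, 40)
# Hypothetical per-pixel means, as if estimated from many real images.
synthetic_image = synthesize_from_model([[25, 35, 45], [45, 55, 65]], 5.0, random.Random(0))
```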

Can synthetic data be used to train LLMs?

Yes, but with caution. While synthetic text can help train LLMs on specific formats (like code or legal jargon), over-reliance on synthetic text can lead to “model collapse,” where the AI begins to repeat its own mistakes and loses the nuances of human language.

Is synthetic data legal under GDPR?

Generally, yes. If synthetic data contains no Personally Identifiable Information (PII) and cannot be traced back to a specific individual, it often falls outside the strict constraints of GDPR. However, you must ensure the synthesis process itself doesn’t “memorize” and leak real PII.
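A common first-line check for memorization is the distance to closest record (DCR). For each synthetic row, you find its nearest real row and flag rows that sit suspiciously close.

In this sketch, the `threshold` value is a hypothetical choice you would tune after scaling features to comparable ranges. Passing this check is not a legal guarantee of anonymity; it is a screening step.

```python
import math


def distance_to_closest_record(synthetic, real):
    """For each synthetic row, the Euclidean distance to its nearest real row."""
    return [min(math.dist(s, r) for r in real) for s in synthetic]


def flag_possible_copies(synthetic, real, threshold):
    """Indices of synthetic rows suspiciously close to a real record (possible memorization)."""
    distances = distance_to_closest_record(synthetic, real)
    return [i for i, d in enumerate(distances) if d <= threshold]


real_records = [[0.0, 0.0], [5.0, 5.0]]
synthetic_records = [[0.0, 0.0], [2.0, 2.0], [5.0, 5.0001]]
suspicious = flag_possible_copies(synthetic_records, real_records, threshold=0.01)
```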

Does synthetic data replace human data labelers?

It changes their role. Instead of labeling millions of individual images, humans move “upstream” to label the “seed data” used to train the generator and to perform quality assurance on the synthetic output.

How do I know if my synthetic data is “good”?

Quality is measured through “Statistical Fidelity” (how well it matches the real data’s patterns) and “Utility” (how well a model trained on synthetic data performs on real-world tasks).
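For statistical fidelity on a single numeric column, one widely used measure is the two-sample Kolmogorov-Smirnov (KS) statistic: the largest gap between the empirical CDFs of the real and synthetic values. The sketch below computes it with the standard library; SciPy's `scipy.stats.ks_2samp` returns the same statistic along with a p-value.

KS compares one column at a time, so it does not capture correlations between columns. Utility is usually checked separately, by training on synthetic data and testing on real data.

```python
from bisect import bisect_right


def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic: the largest gap between two empirical CDFs.

    0 means the empirical distributions coincide; 1 means they do not overlap at all.
    """
    a, b = sorted(sample_a), sorted(sample_b)
    d = 0.0
    for x in a + b:  # the maximum gap occurs at one of the observed points
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d
```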
