Robotics no longer lives on islands of tinkered code and isolated machines. The companies getting results at deployment scale are the ones turning big data (from sensors, cameras, logs, and fleets) into a continuous performance engine. In the next few minutes, you’ll see how modern robotics teams build data pipelines, choose the right metrics, apply predictive maintenance, scale fleet learning, blend real-world logs with simulation and synthetic data, and secure the entire loop so robots get safer and better week after week.

Who this is for: operations leaders, engineering managers, PMs, and hands-on roboticists who want a practical, step-by-step playbook to make robots faster, more reliable, and easier to scale across sites.

What you’ll learn: the core use cases for big data in robotics; architecture patterns and affordable tools; the KPIs that actually move OEE; how to deploy predictive maintenance and fleet learning safely; how to combine sim, synthetic data, and real logs; and how to run a four-week starter plan that lifts performance without stalling production.

Key takeaways

- Performance gains compound when you treat robot data as a product—logged, labeled, versioned, and fed back into models and playbooks.

- Predictive maintenance consistently cuts unplanned downtime and stabilizes throughput when tied to the right telemetry and KPIs.

- Fleet learning turns every robot into a data collector and every site into a lab, accelerating improvement without rewriting code from scratch.

- Sim-to-real transfer and synthetic data reduce risk and expand coverage of edge cases you’ll rarely capture on the floor.

- Security, safety, and governance are non-negotiable; bake them into your middleware (e.g., ROS 2), permissions, and change control from day one.

- A simple 4-week plan (log → measure → pilot → scale) can demonstrate ROI while building the foundation for long-term gains.

The business case: why big data is now the beating heart of robotics

What it is and why it matters.

“Big data” in robotics is the continuous, high-volume capture of sensor streams (images, depth, lidar), telemetry (joint torques, currents, temperatures), system logs, event traces, and operator inputs across robots, cells, and sites—plus the tooling to store, search, secure, and reuse all of it. The aim is threefold: (1) increase reliability (fewer stoppages and recoveries), (2) optimize throughput and quality (higher OEE), and (3) accelerate learning (shorter cycles from issue → insight → fix → rollout).

Requirements/prerequisites.

- Robot middleware (e.g., ROS 2 or vendor SDKs) exposing topics you can record.

- Edge compute (industrial PC) with enough storage and a path to ship data to your cloud or on-prem data lake.

- A place to put it (object storage or lakehouse), plus schemas, retention, and access controls.

- Clear KPIs: OEE (availability × performance × quality), MTBF/MTTF, pick success rate, recovery rate, cycle time, scrap rate.

Beginner-friendly path and low-cost alternatives.

- Start by recording only what you need: camera frames (downsampled), robot joint states, error topics, and key events.

- Use built-in recording tools (e.g., ros2 bag) before investing in bespoke loggers.

- Push data to a simple object store bucket with lifecycle rules rather than an expensive data platform from day one.
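As a concrete starting point for the built-in recorder, the sketch below assembles a `ros2 bag record` command for a short, explicit topic list, writes one output directory per robot per day, splits files by duration, and compresses each split file. It assumes a recent ROS 2 distribution where `--max-bag-duration`, `--compression-mode`, and `--compression-format` are available; the topic names and directory layout are hypothetical, so check `ros2 bag record --help` on your install before adopting it.

```python
import shlex
from datetime import date

def bag_record_cmd(robot_id, topics, root="bags", split_s=3600, fmt="zstd"):
    """Build a `ros2 bag record` argument list for a minimal, explicit topic set.

    One output directory per robot per day keeps daily rotation simple;
    within a day, bags are split every `split_s` seconds and each split
    file is compressed.
    """
    if not topics:
        raise ValueError("record an explicit, minimal topic list rather than everything")
    out_dir = f"{root}/{robot_id}/{date.today().isoformat()}"
    return [
        "ros2", "bag", "record",
        "-o", out_dir,
        "--max-bag-duration", str(split_s),  # start a new file every split_s seconds
        "--compression-mode", "file",        # compress whole split files
        "--compression-format", fmt,
        *topics,
    ]

# Hypothetical topic names; substitute the ones your stack publishes.
topics = ["/joint_states", "/diagnostics", "/rosout", "/camera/image_downsampled"]
print(shlex.join(bag_record_cmd("robot_07", topics)))
```

The argument list can be passed to `subprocess.run` or baked into whatever service manager already supervises the robot software.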

Step-by-step starter implementation.

- Define KPIs and targets per cell or robot (e.g., OEE from availability/performance/quality; MTBF for critical axes).

- Turn on logging for a minimal set of topics, compress files, and rotate daily.

- Ship logs to a central bucket and index metadata (timestamp, station, robot ID, SKU).

- Add dashboards for downtime, errors, and recovery patterns.

- Pick one use case (predictive maintenance or cycle-time optimization) and run a 30-day pilot.
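Once faults are logged with start and recovery times, the reliability KPIs are simple arithmetic. The sketch below computes MTBF as operating time divided by the number of failures, plus mean time to repair (MTTR) as a companion metric. The `Fault` record and the choice to count every logged fault as a failure are assumptions for illustration.

```python
from dataclasses import dataclass

@dataclass
class Fault:
    start_h: float  # hours since the start of the observation window
    end_h: float    # hours when the robot was back in a good state

def reliability_kpis(faults, window_h):
    """MTBF = uptime / failures; MTTR = total repair time / failures."""
    if not faults:
        return {"failures": 0, "mtbf_h": float("inf"), "mttr_h": 0.0}
    downtime_h = sum(f.end_h - f.start_h for f in faults)
    uptime_h = window_h - downtime_h
    n = len(faults)
    return {"failures": n, "mtbf_h": uptime_h / n, "mttr_h": downtime_h / n}

# One week (168 h) on a critical axis with three logged faults.
week = [Fault(10.0, 11.0), Fault(50.0, 50.5), Fault(120.0, 122.0)]
print(reliability_kpis(week, window_h=168.0))
```

Computing this per critical axis or component, rather than per robot, keeps one noisy joint from hiding inside a fleet average.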

Safety & caveats.

- Don’t log personally identifiable information or sensitive audio/video where you don’t need it.

- Avoid logging high-rate data you will never use—start lean to keep costs predictable.

- Use role-based access and encrypt in transit and at rest.

Mini-plan example (2–3 steps).

- Week 1: enable bagging for joint states and error topics across two robots.

- Week 2: set OEE baselines and build a downtime Pareto chart; choose the top failure mode to attack first.
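The Week 2 downtime Pareto is a group-by and a cumulative sum. A minimal sketch, assuming downtime events have already been exported as `(cause, minutes)` pairs; the cause codes below are hypothetical.

```python
from collections import Counter

def downtime_pareto(events):
    """Aggregate downtime by cause, largest first, with cumulative share in percent."""
    totals = Counter()
    for cause, minutes in events:
        totals[cause] += minutes
    grand_total = sum(totals.values())
    rows, running = [], 0.0
    for cause, minutes in totals.most_common():
        running += minutes
        rows.append((cause, minutes, 100.0 * running / grand_total))
    return rows

events = [("axis3_overcurrent", 42), ("mis_grip", 15), ("conveyor_starved", 30),
          ("mis_grip", 20), ("estop_pressed", 5)]
for cause, minutes, cum_pct in downtime_pareto(events):
    print(f"{cause:20s} {minutes:5.0f} min  {cum_pct:5.1f}% cumulative")
```

The first row is the candidate "top failure mode" for the pilot.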

Build the data foundation: from on-robot logging to an edge-cloud pipeline

What it is and benefits.

A robust telemetry pipeline collects data at the robot, buffers it on the edge, and syncs it to a central store where it’s searchable and usable for analytics, training, and audits. The win is repeatable insight: you can reproduce a bug, visualize trends, and train models without chasing ad-hoc files.

Requirements/prerequisites.

- Middleware hooks: topics, services, and logs.

- Edge gateway (industrial PC) with a container runtime, local SSD, and a message queue or uploader.

- Central storage (object store), indexing (metadata + catalog), and retention (hot → warm → cold).

- Security: certificates and permissions to encrypt and authenticate traffic along the robot → edge → cloud path.

Step-by-step implementation.

- Instrument ROS 2 nodes (or vendor SDKs) to publish the right topics.

- Record with rotation and compression (e.g., ros2 bag record with file splitting and compression enabled).

- Persist on the edge in a FIFO buffer with health checks, so a network outage delays uploads instead of losing data.

- Sync upstream on a schedule or event (e.g., end of shift) and attach metadata (station ID, SKU, software build).

- Catalog in a table with columns you can filter (date, robot, event type) and links to files.

- Dashboards: downtime events over time, top error codes, cycle-time distributions.

- Access control: give read access to ops, restricted write to engineers, admin only for deletes.
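The edge-buffer and sync steps can be sketched as a FIFO that only drops a file after the upload succeeds, with the catalog metadata (station, SKU, software build) serialized alongside. This is an in-memory illustration: a real gateway would persist the queue on local SSD so it survives reboots, and `upload_fn` is a hypothetical hook for whatever object-store client you use.

```python
import json
from collections import deque
from dataclasses import asdict, dataclass

@dataclass
class LogFile:
    path: str
    robot_id: str
    station_id: str
    sku: str
    software_build: str
    recorded_at: str  # ISO-8601 timestamp

class EdgeBuffer:
    """FIFO of finished log files awaiting upload.

    A file leaves the queue only after `upload_fn` reports success, so a
    network outage delays the sync instead of losing data.
    """

    def __init__(self, upload_fn, max_items=10_000):
        self.queue = deque()
        self.upload_fn = upload_fn  # hypothetical: (path, metadata_json) -> bool
        self.max_items = max_items

    def enqueue(self, item: LogFile) -> None:
        if len(self.queue) >= self.max_items:
            # Surface this as a health alert; silently dropping files defeats the buffer.
            raise RuntimeError("edge buffer full")
        self.queue.append(item)

    def sync(self) -> int:
        """Upload oldest first; stop at the first failure and retry on the next run."""
        uploaded = 0
        while self.queue:
            item = self.queue[0]
            metadata = json.dumps(asdict(item))  # becomes a row in the central catalog
            if not self.upload_fn(item.path, metadata):
                break
            self.queue.popleft()
            uploaded += 1
        return uploaded

# Usage with a fake uploader that loses the network after two files.
calls = []
def flaky_upload(path, metadata):
    calls.append(path)
    return len(calls) <= 2

buf = EdgeBuffer(flaky_upload)
for i in range(3):
    buf.enqueue(LogFile(f"bag_{i:04d}", "robot_07", "cell_a", "SKU-123",
                        "build-1.4.2", "2025-07-15T06:00:00Z"))
print(buf.sync(), "uploaded;", len(buf.queue), "waiting")
```

Stopping at the first failure (rather than skipping ahead) keeps uploads in recording order, which makes the catalog easier to reason about after an outage.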

Beginner modifications & progressions.

- Start with a single cell and a curated set of topics.

- Progress to video keyframes + event-triggered bursts instead of constant full-rate streams.

- Add automated scrubbing (blur faces, redact on-screen PII) before cloud upload if cameras see people.
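Event-triggered bursts usually rely on a short in-memory ring buffer: keep the last few seconds at full rate and persist them only when an error or operator flag fires. The sketch below shows the mechanism with `collections.deque(maxlen=...)`; the sample type and window sizes are placeholders, and `bursts` stands in for writing files to disk.

```python
from collections import deque

class BurstRecorder:
    """Keep `pre` samples of history; on a trigger, save them plus the next `post` samples."""

    def __init__(self, pre=30, post=30):
        self.ring = deque(maxlen=pre)  # oldest samples fall off automatically
        self.post = post
        self.current = None            # burst being assembled, if any
        self.remaining = 0
        self.bursts = []               # stand-in for files written to disk

    def add(self, sample, trigger=False):
        if self.current is not None:   # mid-burst: collect post-trigger samples
            self.current.append(sample)
            self.remaining -= 1
        elif trigger:                  # start a burst with the pre-trigger history
            self.current = list(self.ring) + [sample]
            self.remaining = self.post
        if self.current is not None and self.remaining == 0:
            self.bursts.append(self.current)
            self.current = None
        self.ring.append(sample)

rec = BurstRecorder(pre=3, post=2)
for i in range(10):
    rec.add(i, trigger=(i == 5))
print(rec.bursts)  # [[2, 3, 4, 5, 6, 7]]
```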

Metrics & cadence.

- Data health: % of robots uploading on schedule; % corrupted logs (target <1%).

- Coverage: % incidents with matching logs (target >95%).

- Latency: time from incident → searchable data (target <15 minutes on-site, <2 hours cross-site).
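The data-health metrics above, and the "expected log is missing" alert from the mini-plan, can be computed from a few sets per reporting period. A sketch under assumed input shapes (robot and incident IDs as string sets); the thresholds mirror the targets listed here, and latency is left out for brevity.

```python
TARGETS = {"corrupted_pct_max": 1.0, "incident_coverage_pct_min": 95.0}

def data_health(expected_robots, uploaded_robots, n_files, n_corrupted,
                incident_ids, incidents_with_logs):
    """Data-health metrics for one reporting period."""
    return {
        "upload_on_schedule_pct":
            100.0 * len(expected_robots & uploaded_robots) / len(expected_robots),
        "corrupted_pct": 100.0 * n_corrupted / n_files if n_files else 0.0,
        "incident_coverage_pct":
            (100.0 * len(incident_ids & incidents_with_logs) / len(incident_ids)
             if incident_ids else 100.0),
        "missing_robots": sorted(expected_robots - uploaded_robots),
    }

def health_alerts(h):
    """Turn metrics into alert messages when a target is not met."""
    alerts = []
    if h["missing_robots"]:
        alerts.append("expected log missing from: " + ", ".join(h["missing_robots"]))
    if h["corrupted_pct"] >= TARGETS["corrupted_pct_max"]:
        alerts.append(f"corrupted logs at {h['corrupted_pct']:.1f}%")
    if h["incident_coverage_pct"] <= TARGETS["incident_coverage_pct_min"]:
        alerts.append(f"incident coverage at {h['incident_coverage_pct']:.1f}%")
    return alerts

h = data_health({"r1", "r2", "r3", "r4"}, {"r1", "r2", "r4"}, n_files=200, n_corrupted=1,
                incident_ids={f"i{k}" for k in range(20)},
                incidents_with_logs={f"i{k}" for k in range(19)})
print(h)
print(health_alerts(h))
```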

Safety & pitfalls.

- Over-collection is common: log smarter (events + bursts) to avoid storage surprises.

- Keep a clear retention policy to manage cost and compliance.

Mini-plan example.

- Day 1–3: implement edge buffer and nightly sync.

- Day 4–7: basic dashboard and alerts when an expected log is missing.

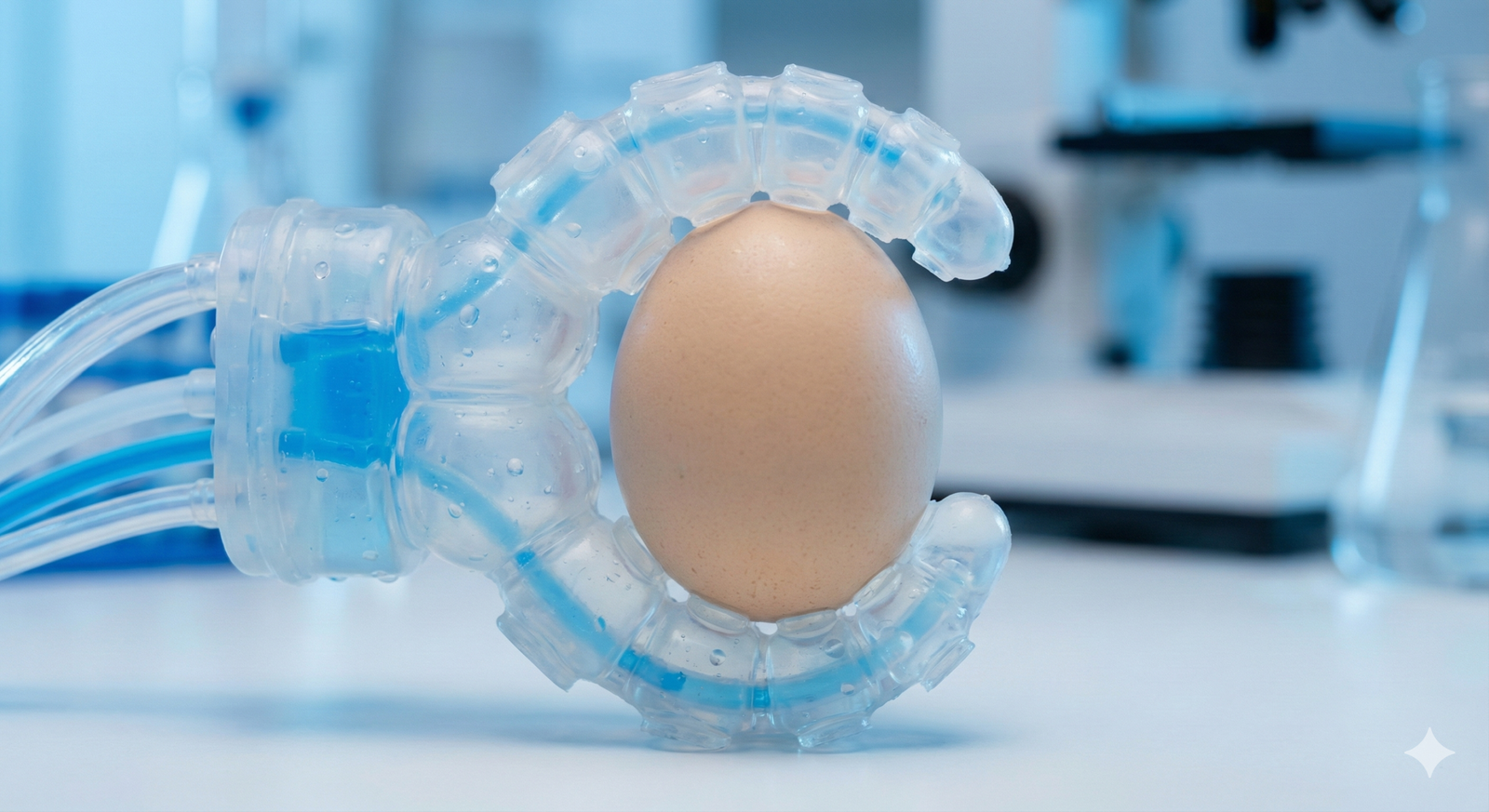

Use case #1: Predictive maintenance that actually prevents stoppages

What it is and benefits.

Predictive maintenance (PdM) uses historical failures and live telemetry (e.g., motor currents, vibration, temperature, cycle counts) to predict impending issues so you can plan service windows and avoid surprise downtime. Well-run PdM programs routinely reduce unplanned stoppages and the cost of parts swaps while improving labor utilization and OEE.

Requirements/prerequisites.

- Sensors: currents/torques, encoders, thermal, vibration; logs of faults and recoveries.

- A labeled history: “incident notebooks” linking failures to the preceding telemetry slices.

- Baseline models: simple thresholds, then anomaly detection or supervised classification.

- Maintenance workflows: tickets and parts kits aligned to predictions.

Step-by-step implementation.

- Map failure modes (e.g., axis over-current, gearbox wear, gripper mis-grip) to signals you can observe.

- Create truth sets: from 30–90 days of history, assemble “pre-failure windows” of telemetry preceding known faults.

- Start simple: rolling z-scores and trend alarms; alert only on persistent excursions.

- Upgrade to ML: train anomaly detectors or classifiers on labeled windows.

- Integrate with scheduling: route high-confidence alerts into maintenance tickets with serials/parts.

- Close the loop: after each intervention, log “true/false positive” and retrain monthly.
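The "start simple" step can be as small as a rolling z-score with a persistence rule, so a single spike never pages anyone. A sketch under assumptions: one numeric signal (e.g., motor current) sampled at a fixed rate, a trailing window of 60 samples, and an alert only after 5 consecutive samples beyond 3 standard deviations. All three numbers are placeholders to tune per signal.

```python
from collections import deque
from statistics import mean, stdev

def persistent_zscore_alerts(values, window=60, z_limit=3.0, persist=5):
    """Return indices where |z| has exceeded z_limit for `persist` consecutive samples.

    z is computed against the trailing `window` samples, excluding the current one.
    """
    history = deque(maxlen=window)
    streak = 0
    alerts = []
    for i, x in enumerate(values):
        if len(history) >= 2:
            mu, sd = mean(history), stdev(history)
            z = (x - mu) / sd if sd > 0 else 0.0
            streak = streak + 1 if abs(z) > z_limit else 0
            if streak == persist:  # fire once per excursion, not on every sample
                alerts.append(i)
        history.append(x)
    return alerts

# Synthetic current trace: steady noise, then a sustained jump.
series = [10.0 if i % 2 == 0 else 10.2 for i in range(100)] + [13.0] * 8
print(persistent_zscore_alerts(series))
```

Because the trailing window gradually absorbs a sustained excursion, the z-score decays over time; that is acceptable for a first alarm, and one alternative is to compute the baseline from a frozen known-good period instead.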

Beginner modifications.

- If you lack a deep history, begin with condition-based thresholds and consistent human labels.

- Pilot on one component that fails most often (e.g., gripper fingers or an elbow joint).

Frequency, duration, and KPIs.

- Daily retrain is not required; monthly is common until data volume grows.

- Track unplanned downtime, mean time between failures (MTBF), false-alarm rate, and lead time between alert and failure.
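Precision, recall, false-alarm rate, and lead time can all be computed by matching alerts to later failures on the same robot. This sketch assumes an alert counts as a true positive when a failure on that robot follows within a fixed horizon (7 days here, an assumption) and treats false-alarm rate as the share of alerts with no such failure; adjust both definitions to your maintenance policy.

```python
from statistics import median

def pdm_kpis(alerts, failures, horizon_h=168.0):
    """alerts, failures: lists of (robot_id, time_h)."""
    def within(alert_t, fail_t):
        return 0.0 <= fail_t - alert_t <= horizon_h

    true_alerts = sum(
        any(r == robot and within(t, ft) for r, ft in failures)
        for robot, t in alerts
    )
    lead_times = []
    for robot, ft in failures:
        warnings = [t for r, t in alerts if r == robot and within(t, ft)]
        if warnings:
            lead_times.append(ft - min(warnings))  # earliest warning inside the horizon
    n = len(alerts)
    return {
        "precision": true_alerts / n if n else 0.0,
        "false_alarm_rate": (n - true_alerts) / n if n else 0.0,
        "recall": len(lead_times) / len(failures) if failures else 0.0,
        "median_lead_h": median(lead_times) if lead_times else None,
    }

alerts = [("r1", 10.0), ("r1", 100.0), ("r2", 50.0), ("r3", 5.0)]
failures = [("r1", 110.0), ("r2", 400.0)]
print(pdm_kpis(alerts, failures))
```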

Safety, caveats, and mistakes to avoid.

- Avoid “alert spam.” Start conservative and add severity tiers (warning vs. stop-the-line).

- Predictions are not work orders; human validation decides action.

- Watch for data leakage, e.g., features that effectively encode the label, such as a fault flag or recovery event that is only logged after the failure has already happened.

Mini-plan example.

- Week 1: trend and threshold alerts on motor currents and temperatures.

- Week 3: first ML model for “probable gripper misalignment within 7 days” based on mis-grip recoveries.

Use case #2: Performance optimization with the metrics that matter

What it is and benefits.

Performance improvement blends operations data (cycle time, retries, changeovers) with quality (first-pass yield) and availability (downtime). The most widely used umbrella metric is OEE, the product of Availability, Performance, and Quality. Big data makes each factor measurable and improvable in near real time.

Requirements/prerequisites.

- Clean definitions of run time, planned production time, ideal cycle time, total count, and good count.

- Event logging and simple lineage from SKU to cell to shift.

- Visualization that separates availability losses (stoppages), performance losses (slow cycles), and quality losses (scrap/rework).

Step-by-step implementation.

- Instrument counters: parts in/out, rejects, and reworks; measure the ideal cycle time per SKU.

- Compute OEE and break down the three factors per robot per shift.

- Pareto the top losses: is it waiting for input, tool wear, retries, or congestion?

- Run experiments: A/B new pick heuristics, path tweaks, or camera settings; keep one change per test.

- Roll forward: promote changes that beat the baseline for a week without regressions.
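The OEE step reduces to three ratios. This sketch uses the standard definitions (availability = run time / planned production time, performance = ideal cycle time × total count / run time, quality = good count / total count); the shift numbers are illustrative, not measurements.

```python
def oee_breakdown(planned_min, run_min, ideal_cycle_s, total_count, good_count):
    """Return the three OEE factors, their product, and the largest loss factor."""
    availability = run_min / planned_min                          # stoppages
    performance = (ideal_cycle_s * total_count) / (run_min * 60)  # slow cycles, micro-stops
    quality = good_count / total_count                            # scrap and rework
    factors = {"availability": availability, "performance": performance, "quality": quality}
    return {**factors,
            "oee": availability * performance * quality,
            "biggest_loss": min(factors, key=factors.get)}

# Illustrative shift: 420 planned minutes, 47 minutes of stops, 1.0 s ideal cycle.
shift = oee_breakdown(planned_min=420, run_min=373, ideal_cycle_s=1.0,
                      total_count=19_271, good_count=18_848)
print({k: round(v, 3) if isinstance(v, float) else v for k, v in shift.items()})
```

In consistent units the product simplifies to good count × ideal cycle time / planned production time, which is a handy cross-check against dashboard numbers.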

Beginner modifications.

- If you’re early, start with Performance only (cycle time) while you stabilize logging for Availability and Quality.

Cadence and KPIs.

- Weekly experiments; daily OEE review.

- KPI ladder: OEE (top), changeover loss, micro-stoppages per hour, retry rate, and first-pass yield.

Safety & caveats.

- Never “optimize” by silencing alarms or bypassing safety interlocks.

- Validate that performance tweaks don’t degrade quality or increase operator burden.

Mini-plan example.

- This week: reduce retries by tuning grasp thresholds; next week: improve changeover scripts for two SKUs.

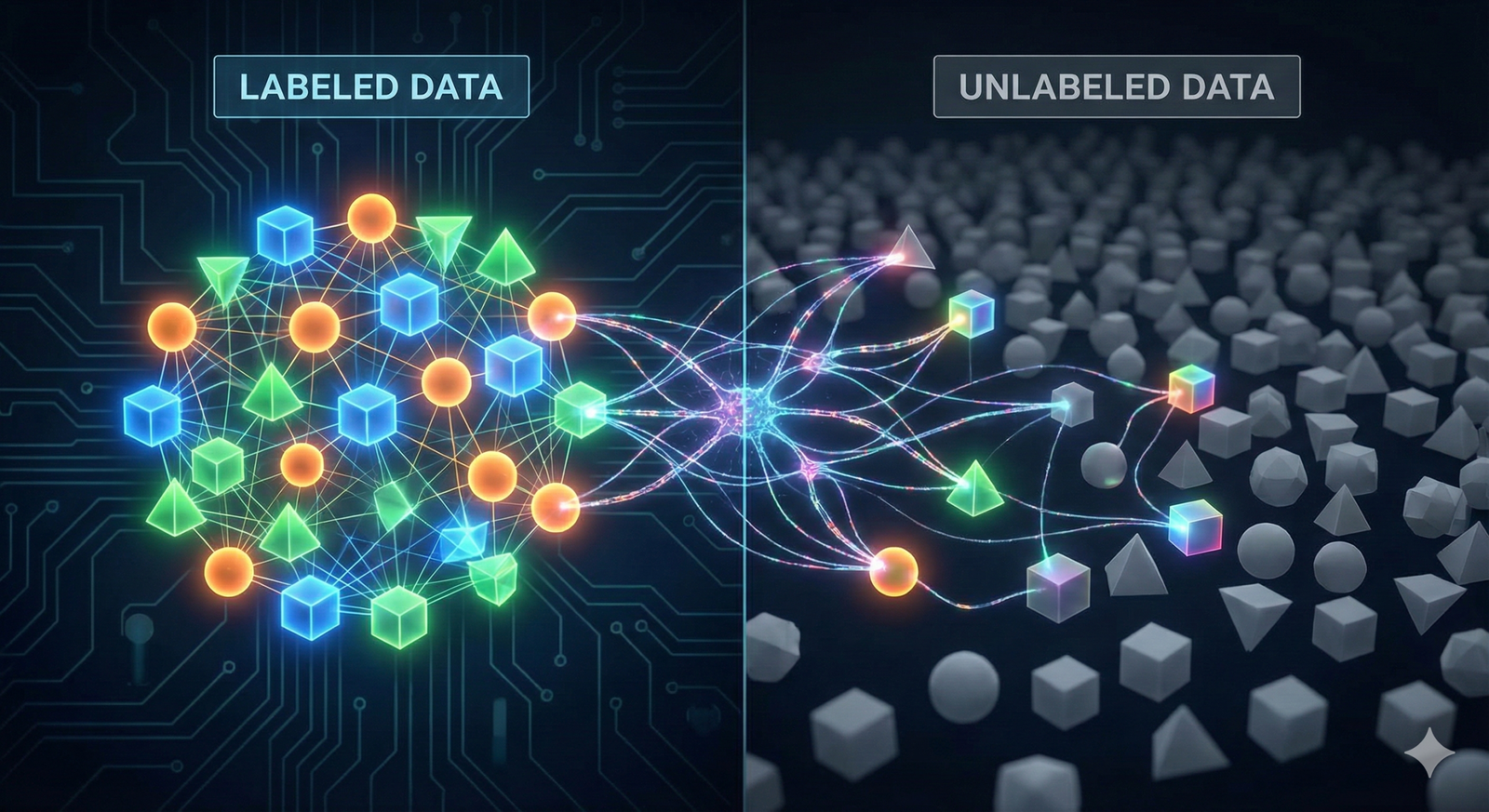

Use case #3: Fleet learning—turn every robot into a teacher

What it is and benefits.

Fleet learning aggregates demonstrations, recoveries, and outcomes from many robots to train or adapt policies that improve across the fleet. Instead of hand-coding fixes per site, you collect experience once and scale improvements everywhere. This accelerates learning and reduces time-to-stability for new deployments.

Requirements/prerequisites.

- A consistent trajectory/log format across robots (actions, observations, rewards/outcomes).

- A central dataset with metadata (embodiment, site conditions, software version).

- A mechanism to safely roll out policy updates and roll back on regressions.

Step-by-step implementation.

- Standardize data: define a schema for observations (images, proprioception), actions, and outcomes.

- Collect demonstrations: label human-guided recoveries as “gold episodes.”

- Train offline: imitation learning or batch RL on curated datasets.

- Shadow deploy: run the new policy in observe-only mode; compare to live outcomes.

- Staged rollout: enable on one robot, then one cell, then one site; monitor regressions and roll back if needed.
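Standardizing data is mostly agreeing on a schema. A minimal sketch of one episode record with the metadata this section calls for (embodiment, site, software version) and a validation check before an episode enters training; the field names, outcome labels, and URI layout are assumptions, not a standard format.

```python
from dataclasses import dataclass, field
from typing import List

OUTCOMES = {"success", "failure", "recovered"}

@dataclass
class Step:
    t: float              # seconds since episode start
    observation_uri: str  # pointer to image/depth frames in object storage
    proprio: List[float]  # joint positions (optionally velocities)
    action: List[float]   # commanded action for this step

@dataclass
class Episode:
    episode_id: str
    robot_id: str
    embodiment: str       # arm + gripper + camera combination
    site: str
    software_version: str
    task: str
    outcome: str
    gold: bool = False    # human-guided recovery labeled as a "gold episode"
    steps: List[Step] = field(default_factory=list)

    def problems(self) -> List[str]:
        """Return schema violations; an empty list means the episode is usable."""
        issues = []
        if self.outcome not in OUTCOMES:
            issues.append(f"unknown outcome {self.outcome!r}")
        if not self.steps:
            issues.append("no steps")
        if len({len(s.action) for s in self.steps}) > 1:
            issues.append("inconsistent action dimension")
        if any(b.t <= a.t for a, b in zip(self.steps, self.steps[1:])):
            issues.append("timestamps not strictly increasing")
        return issues

def training_set(episodes, embodiment):
    """Gold, schema-valid episodes for one embodiment, so grippers and cameras don't mix."""
    return [e for e in episodes if e.gold and e.embodiment == embodiment and not e.problems()]

ep = Episode("ep-0001", "robot_07", "armA_gripperB_camC", "site_a", "build-1.4.2",
             "grasp_reflective", "recovered", gold=True,
             steps=[Step(0.0, "store://ep-0001/000.png", [0.0] * 6, [0.1] * 7),
                    Step(0.1, "store://ep-0001/001.png", [0.01] * 6, [0.1] * 7)])
print(ep.problems(), len(training_set([ep], "armA_gripperB_camC")))
```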

Beginner modifications.

- Start with imitation learning from expert recoveries before exploring reinforcement learning.

- Focus on one narrow task (e.g., grasp refinement on reflective parts).

Metrics and cadence.

- Success rate, mean actions per success, recovery frequency, near-miss count, and time-to-recover.

- Retrain cadence: monthly or after N thousand new episodes; evaluate on held-out sites.

Safety & caveats.

- Always shadow test; never deploy fleet-wide without a quick rollback path.

- Account for embodiment differences (e.g., different grippers or camera baselines).

Mini-plan example.

- Month 1: collect 500 expert recoveries on a hard SKU; train a policy that reduces grip retries.

- Month 2: staged rollout on three lines with automatic rollback on >2% regression.
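The staged rollout with automatic rollback can be expressed as a small gate evaluated at each stage. This sketch reads ">2% regression" as an absolute drop of more than 2 percentage points in success rate versus the baseline, and adds a hypothetical minimum episode count before any decision; a production gate would also want a confidence interval rather than a raw difference.

```python
STAGES = ["one_robot", "one_cell", "one_site", "fleet"]

def gate(baseline_rate, candidate_rate, n_episodes,
         max_regression=0.02, min_episodes=200):
    """Decide 'hold', 'rollback', or 'promote' for the current rollout stage."""
    if n_episodes < min_episodes:
        return "hold"      # not enough evidence yet
    if baseline_rate - candidate_rate > max_regression:
        return "rollback"  # revert to the previous policy version
    return "promote"

def next_stage(stage, decision):
    """Return the stage to run next, or None after a rollback."""
    if decision == "rollback":
        return None
    if decision == "hold":
        return stage
    i = STAGES.index(stage)
    return STAGES[min(i + 1, len(STAGES) - 1)]

decision = gate(baseline_rate=0.91, candidate_rate=0.92, n_episodes=450)
print(decision, next_stage("one_cell", decision))
```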

Use case #4: Sim-to-real and synthetic data to cover edge cases

What it is and benefits.

Real floors rarely produce all the corner cases you need—especially safety-critical ones. Simulation (digital twins) and synthetic data let you rehearse failures, generate diverse visuals for perception, and pre-train policies that fine-tune quickly on real logs. The result: fewer surprises in production and faster time to robust behavior.

Requirements/prerequisites.

- A simulator with accurate physics and rendering.

- Importable scenes/assets that match your cells and SKUs.

- A pipeline from sim → dataset → training → validation → limited live test.

Step-by-step implementation.

- Digitize the cell and key SKUs; calibrate camera and robot frames.

- Domain randomization: vary lighting, textures, poses, distractors.

- Generate labeled data: produce images, depth, and ground-truth labels at scale.

- Pre-train models in sim; fine-tune on real logs.

- Sim-in-the-loop tests for control stack changes; only then run limited on-floor pilots.
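Domain randomization amounts to sampling scene parameters from ranges and rendering one image per sample. The sketch below covers only the sampling side, seeded so a dataset can be regenerated exactly; the parameter names and ranges are placeholders, and handing each `SceneParams` to a simulator is omitted because that scene API differs per tool.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple

@dataclass(frozen=True)
class SceneParams:
    light_intensity: float            # multiplier on nominal lighting
    light_azimuth_deg: float
    texture_id: int                   # index into a texture library
    object_yaw_deg: float
    object_xy_m: Tuple[float, float]  # offset from the nominal pick pose
    n_distractors: int

def sample_scene(rng: random.Random, n_textures: int = 50) -> SceneParams:
    return SceneParams(
        light_intensity=rng.uniform(0.3, 2.0),
        light_azimuth_deg=rng.uniform(0.0, 360.0),
        texture_id=rng.randrange(n_textures),
        object_yaw_deg=rng.uniform(-180.0, 180.0),
        object_xy_m=(rng.uniform(-0.20, 0.20), rng.uniform(-0.15, 0.15)),
        n_distractors=rng.randint(0, 8),
    )

def generate(n: int, seed: int) -> List[SceneParams]:
    """Same seed, same scenes: record the seed with the dataset version."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]

scenes = generate(100_000, seed=42)
print(scenes[0])
```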

Beginner modifications.

- Start with synthetic perception data to improve detection/segmentation under hard lighting.

- Add sim-replay of real logs to test control changes safely.

Cadence and KPIs.

- Monthly re-renders for new SKUs; quarterly physics calibration.

- KPIs: sim-trained model’s real-world accuracy lift, reduction in false negatives, and time saved on labeling.

Safety & caveats.

- Sim never matches reality perfectly; keep post-deployment guardrails (velocity limits, stop conditions).

- Validate on representative real logs before rollout.

Mini-plan example.

- Create 100k synthetic images of shiny SKUs with randomized lighting; fine-tune detector; A/B on two lines for a week.

Use case #5: Foundation models, vision-language-action, and generalization

What it is and benefits.

Large-scale vision-language(-action) models trained on web-scale data and robot trajectories can generalize to new objects and instructions with fewer demos. In practice, teams use VLM/VLA backbones to parse natural-language tasks, plan high-level steps, and map scene understanding to actions—especially for long-tail, semi-structured tasks.

Requirements/prerequisites.

- A curated robot trajectory dataset tied to observations and language prompts.

- On-device or edge compute capable of running a distilled model or an efficient planner.

- Safety layers and constraints when mapping language to motion.

Step-by-step implementation.

- Collect task-language pairs (“place the smallest box on the top shelf”), with corresponding trajectories.

- Fine-tune a compact vision-language policy head that outputs waypoints or action tokens.

- Constrain actions with a safety and feasibility layer (reachability, collision checks).

- Evaluate on held-out tasks and novel objects; require parity with scripted baselines before trials.

- Shadow run in production to build trust; graduate to limited autonomy with tight guardrails.
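A safety and feasibility layer sits between the model's proposed waypoints and the motion stack and rejects anything it cannot vouch for. The sketch checks three things: a spherical reach limit around the base, axis-aligned keep-out boxes, and a maximum step between waypoints. The geometry is deliberately crude and hypothetical; it checks waypoints only, not the swept path between them, so it complements rather than replaces real collision checking and the robot's own safety functions.

```python
import math
from dataclasses import dataclass
from typing import Iterable, List, Sequence, Tuple

Point = Tuple[float, float, float]

@dataclass(frozen=True)
class Box:
    lo: Point
    hi: Point

    def contains(self, p: Point) -> bool:
        return all(a <= c <= b for a, c, b in zip(self.lo, p, self.hi))

def check_plan(waypoints: Sequence[Point], base: Point = (0.0, 0.0, 0.0),
               reach_m: float = 0.85, keep_out: Iterable[Box] = (),
               max_step_m: float = 0.10) -> Tuple[bool, List[str]]:
    """Return (ok, reasons). Unsafe plans are rejected, never silently 'fixed'."""
    keep_out = list(keep_out)
    reasons: List[str] = []
    for i, p in enumerate(waypoints):
        if math.dist(p, base) > reach_m:
            reasons.append(f"waypoint {i}: outside reach")
        if any(box.contains(p) for box in keep_out):
            reasons.append(f"waypoint {i}: inside keep-out zone")
        if i > 0 and math.dist(p, waypoints[i - 1]) > max_step_m:
            reasons.append(f"waypoint {i}: step exceeds {max_step_m} m")
    return (not reasons, reasons)

# Hypothetical cell: a fixture occupies a box in front of the robot base.
fixture = Box((0.30, -0.10, 0.00), (0.50, 0.10, 0.30))
ok, why = check_plan([(0.20, 0.30, 0.30), (0.25, 0.30, 0.30), (0.40, 0.00, 0.10)],
                     keep_out=[fixture])
print(ok, why)
```

Rejected plans, and the reasons, belong in the same audit log as the prompts and actions.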

Beginner modifications.

- Start with vision-language perception (semantic segmentation and object attributes) to assist classical planners.

- Use a hybrid: language → goal → classical motion control.

Cadence and KPIs.

- Quarterly model updates; weekly safety tests on a regression suite of tasks.

- KPIs: success on novel tasks, instruction understanding accuracy, and intervention rate.

Safety & caveats.

- Natural-language control must never bypass safety; keep hard constraints and explicit stop logic.

- Store and audit all task prompts and actions.

Mini-plan example.

- Pilot “describe then do” on a supervised line where operators approve planned actions before execution.

Data management, labeling, versioning, and governance

What it is and why it matters.

Without disciplined data management, you can’t reproduce bugs, retrain models safely, or prove why a robot did what it did. Big data pays off only if you can find, trust, and reuse what you collect.

Requirements/prerequisites.

- A catalog with datasets, versions, and lineage (source, transformations, labels, and models trained).

- Annotation tools and QA workflows for images, trajectories, and events.

- MLOps practices: experiment tracking, model registry, CI/CD for models, evaluation suites.

Step-by-step implementation.

- Define dataset versions and naming (e.g., lineA_pick_v3_2025-07-15).

- Introduce review gates: labels require two reviewers for production models.

- Track experiments: hyperparameters, data versions, metrics, and artifacts.

- Promote models via a registry; deploy behind flags; track rollback reasons.

- Audit: retain training data hashes and evaluation reports for each production model.
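The naming convention and the audit hashes are easy to automate together. A sketch, assuming datasets are plain files on disk: `dataset_version` reproduces the `lineA_pick_v3_2025-07-15` pattern, and `manifest` records a SHA-256 per file plus one combined digest that could be stored next to each production model's evaluation report.

```python
import hashlib
from datetime import date
from pathlib import Path
from typing import Dict, Iterable, Optional, Tuple

def dataset_version(line: str, task: str, version: int, on: Optional[date] = None) -> str:
    """Name like lineA_pick_v3_2025-07-15."""
    return f"{line}_{task}_v{version}_{(on or date.today()).isoformat()}"

def manifest(paths: Iterable) -> Tuple[Dict[str, str], str]:
    """SHA-256 per file plus one digest over the whole (sorted) manifest."""
    entries: Dict[str, str] = {}
    for p in sorted(Path(x) for x in paths):
        h = hashlib.sha256()
        with p.open("rb") as f:
            for chunk in iter(lambda: f.read(1 << 20), b""):  # 1 MiB chunks
                h.update(chunk)
        entries[p.as_posix()] = h.hexdigest()
    listing = "".join(f"{name}:{digest}\n" for name, digest in entries.items())
    return entries, hashlib.sha256(listing.encode()).hexdigest()

print(dataset_version("lineA", "pick", 3, date(2025, 7, 15)))  # lineA_pick_v3_2025-07-15
```

Sorting the paths makes the combined digest independent of directory listing order, so the same files always produce the same dataset hash.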

Beginner modifications.

- Start with lightweight spreadsheets and clear directories; graduate to managed registries later.

- Focus QA on rare but critical labels (e.g., near-misses, safety events).

Cadence & KPIs.

- Monthly audits; per-release evaluation suites.

- KPIs: % datasets with lineage, mean label disagreement rate, time to reproduce a bug.

Safety & caveats.

- Restrict access to sensitive footage; blur or redact where required.

- Never train on data you cannot legally retain; set retention rules now, not later.

Mini-plan example.

- This month: unify dataset names and write a one-page “how to add data to the catalog” SOP.

Security, safety, and compliance: make it default

What it is and benefits.

Security for robot data and control is not optional. You’re protecting people, product, and IP. The core principles: encrypted communications, least privilege, signed artifacts, safety standards, and change control tied to risk assessment.

Requirements/prerequisites.

- Encrypted robot middleware communications and identity management.

- Signed containers and models; checks in CI/CD.

- Safety standards and procedures for collaborative or human-adjacent workcells.

Step-by-step implementation.

- Turn on secure comms in your middleware (e.g., SROS2 for ROS 2); generate keys and access-control policies.

- Enforce RBAC for logs, datasets, and model registries.

- Sign and verify artifacts in build pipelines; refuse unsigned deployments.

- Adopt safety guidance for human-robot collaboration (e.g., ISO/TS 15066); review collision forces and contact limits.

- Run change-control: hazard assessment for each significant software or model update.
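The "refuse unsigned deployments" rule is a check the deploy step runs before anything reaches a robot. The sketch uses an HMAC from Python's standard library purely as a stand-in; real pipelines typically use asymmetric signatures (for example Sigstore's cosign for containers) so robots only hold a public verification key. The function names are hypothetical.

```python
import hashlib
import hmac
from typing import Optional

def sign_artifact(artifact: bytes, key: bytes) -> str:
    """CI side: produce a tag for a container image or model file (HMAC stand-in)."""
    return hmac.new(key, artifact, hashlib.sha256).hexdigest()

def verify_before_deploy(artifact: bytes, signature: Optional[str], key: bytes) -> None:
    """Deploy side: raise instead of deploying anything unsigned or modified."""
    if not signature:
        raise PermissionError("unsigned artifact: deployment refused")
    expected = sign_artifact(artifact, key)
    if not hmac.compare_digest(expected, signature):  # constant-time comparison
        raise PermissionError("signature mismatch: deployment refused")

key = b"demo-key-from-a-secrets-manager"  # hypothetical; never hard-code real keys
model = b"...model weights..."
tag = sign_artifact(model, key)
verify_before_deploy(model, tag, key)  # passes silently
try:
    verify_before_deploy(model + b"tampered", tag, key)
except PermissionError as err:
    print(err)
```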

Beginner modifications.

- If full PKI is heavy at first, start with per-robot keystores and rotate secrets quarterly.

- Begin with a minimal safety review checklist for each change.

Cadence & KPIs.

- Quarterly key rotations; monthly audit of permissions.

- KPIs: % encrypted topics, % signed deployments, number of permission violations, time to revert.

Safety & caveats.

- Security is a people problem as much as a tech problem—train operators and engineers.

- Document and test emergency stops after every major update.

Mini-plan example.

- This sprint: encrypt robot node communications and restrict log access to engineering + ops leads.

How to measure progress: your robotics performance scorecard

- OEE: availability × performance × quality; review daily and per SKU.

- Downtime: unplanned downtime hours/shift; top 3 causes.

- MTBF/MTTF: reliability of critical components.

- Success rate & retries: per task or SKU.

- Recovery time: median time from fault to good state.

- Prediction precision/recall: for maintenance alerts and failure classifiers.

- Model drift: delta between offline evaluation and live performance over time.

- Data health: coverage, freshness, and corruption rate.

Quick-start checklist (print this)

- Define top-level KPIs (OEE, downtime, MTBF) and targets.

- Choose one pilot use case (predictive maintenance or cycle-time optimization).

- Enable minimal logging (joint states, errors, event markers, downsampled images).

- Set up edge buffering and nightly sync to a central bucket.

- Build a simple dashboard for downtime and cycle time.

- Document your dataset versioning and model promotion rules.

- Encrypt robot comms and lock down access to logs.

- Run one change at a time; stage rollout with clear rollback triggers.

Troubleshooting & common pitfalls

“We turned on logging and our storage bill exploded.”

Scope creep is real. Log events + bursts, not 24/7 raw video. Use compression and retention tiers.

“Our PdM model is noisy; techs ignore it.”

Start with few, high-confidence alerts. Add severity tiers and measure false-positive rates. Close the loop with technician feedback.

“Policy updates helped on Line A but regressed Line B.”

Your lines likely differ in embodiment or lighting. Enforce site-aware evaluation and shadow runs before staged rollouts.

“We can’t reproduce a bug.”

You need event markers and synchronized logs. Record recovery button presses and timestamps. Index by software build.

“Security is blocking speed.”

Automate keys and signing in CI. Bake security into your deployment pipeline so it’s invisible to users but enforced.

“Our sim results don’t match reality.”

Increase domain randomization and add real-log replay. Validate against a regression suite of on-floor logs before rollout.

A simple 4-week starter plan (adaptable to most deployments)

Week 1 — Instrument & baseline

- Enable logging of joint states, error topics, and event markers on two robots.

- Compute OEE and MTBF baselines; build a downtime Pareto chart.

- Encrypt robot comms and restrict data access.

Week 2 — Pilot one improvement loop

- Choose a single failure mode (e.g., over-current on Axis 3).

- Build a threshold + trend alert; route to a ticket with robot serial and suggested check.

- Track true/false positives and technician feedback.

Week 3 — Iterate & visualize

- Add a simple anomaly model trained on labeled pre-failure windows.

- Tweak thresholds; reduce alert spam.

- Start a small sim or synthetic dataset to stress the perception model for a hard SKU.

Week 4 — Stage rollout & review

- Shadow-run the improved model on two additional robots.

- If metrics hold (reduced downtime, fewer retries), stage rollout line-wide with rollback triggers.

- Document lessons, update SOPs, and plan the next use case (e.g., cycle-time gains on changeovers).

Frequently asked questions

1) How much data do we really need to start?

Not as much as you think. Begin with events, errors, and key signals. Add bursts of high-rate data around incidents. Expand only when insights demand it.

2) What KPIs should we adopt first?

Start with OEE (and its three components) plus unplanned downtime and MTBF for critical components. Add task-level metrics like success rate and retries once your logging stabilizes.

3) How do we avoid drowning in video?

Use downsampled frames, event triggers, and short rolling buffers that dump to disk only on errors or operator flags. Compress aggressively and expire old files you don’t need.

4) Is predictive maintenance realistic for small fleets?

Yes—if you focus on the top failure mode and start with threshold-based alerts. You can graduate to ML as labels accumulate.

5) How do we keep models from regressing after deployment?

Treat models like code: version datasets, track experiments, shadow test, and stage rollouts with automatic rollback on metric regression.

6) We operate alongside people. What extra steps should we take?

Follow collaborative robot safety guidance, enforce hard limits and interlocks, log all contact events, and include safety in every change control review.

7) What’s the difference between sim data and synthetic data?

“Sim” refers to running the robot stack in a physics environment; “synthetic data” usually means rendered images or trajectories used for training. You’ll often use both: sim-in-the-loop for control tests and synthetic images to boost perception.

8) Can language models really help on the factory floor?

They can parse instructions, summarize logs, and even guide high-level planning. Keep hard safety constraints below them and require parity with scripted baselines before autonomy.

9) How do we handle sensitive footage or audio?

Log only what you need, blur or redact where possible, encrypt everything, and apply role-based access with retention rules.

10) What if we don’t use ROS 2?

The principles are the same: consistent log formats, secure comms, catalogs, and KPIs. Use your vendor’s APIs to capture equivalent signals and events.

11) How often should we retrain models?

Start with monthly or after a significant data increment (e.g., +20% new labeled episodes). Always evaluate on a held-out suite and shadow test before rollout.

12) How do we prove ROI?

Tie each improvement to OEE lift, reduced unplanned downtime, fewer interventions, or faster cycle time. Keep a before/after chart per change and a running tally of avoided downtime.

Conclusion

Robotics performance isn’t just about clever code or better hardware anymore. It’s about closing the loop with data—from on-robot logs to edge pipelines, from predictive maintenance to fleet learning, from synthetic and sim datasets to production-hardened models—while keeping security and safety as immovable guardrails. Start small, instrument wisely, measure relentlessly, and let improvements compound across your fleet.

Call to action: Pick one cell, one KPI, and one use case—and start your four-week loop today.

References

- The True Cost of Downtime 2024, Siemens, 2024. https://assets.new.siemens.com/siemens/assets/api/uuid%3A1b43afb5-2d07-47f7-9eb7-893fe7d0bc59/TCOD-2024_original.pdf

- Prediction at scale: How industry can get more value out of maintenance, McKinsey & Company, July 22, 2021. https://www.mckinsey.com/capabilities/operations/our-insights/prediction-at-scale-how-industry-can-get-more-value-out-of-maintenance

- Establishing the right analytics-based maintenance strategy, McKinsey & Company, July 19, 2021. https://www.mckinsey.com/capabilities/operations/our-insights/establishing-the-right-analytics-based-maintenance-strategy

- Predictive maintenance market: 5 highlights for 2024 and beyond, IoT Analytics, Nov 29, 2023. https://iot-analytics.com/predictive-maintenance-market/

- How AI and robotics can help prevent breakdowns in factories — and save manufacturers big bucks, Business Insider, May 2025. https://www.businessinsider.com/artificial-intelligence-robotics-predictive-maintenance-manufacturing-factory-solutions-2025-5

- Predictive Maintenance: Cutting Costs & Downtime Smartly, IIoT-World, Feb 14, 2025. https://www.iiot-world.com/predictive-analytics/predictive-maintenance/predictive-maintenance-cost-savings/

- What Is OEE (Overall Equipment Effectiveness)?, OEE.com, accessed Aug 2025. https://www.oee.com/

- OEE Calculation: Definitions, Formulas, and Examples, OEE.com, accessed Aug 2025. https://www.oee.com/calculating-oee/

- The Monthly Metric: Unscheduled Downtime, Institute for Supply Management, Aug 27, 2024. https://www.ismworld.org/supply-management-news-and-reports/news-publications/inside-supply-management-magazine/blog/2024/2024-08/the-monthly-metric-unscheduled-downtime/

- Recording and playing back data — ROS 2 Documentation, ROS 2, accessed Aug 2025. https://docs.ros.org/en/foxy/Tutorials/Beginner-CLI-Tools/Recording-And-Playing-Back-Data/Recording-And-Playing-Back-Data.html

- ROS 2 Security (Introducing ROS 2 security / SROS2), ROS 2 Docs (Rolling/Humble), last updated 2024–2025. https://docs.ros.org/en/rolling/Tutorials/Advanced/Security/Introducing-ros2-security.html

- ros2/sros2: tools to generate and distribute keys for SROS 2, GitHub repository, accessed Aug 2025. https://github.com/ros2/sros2

- ISO/TS 15066:2016 — Robots and robotic devices — Collaborative robots — Safety requirements, International Organization for Standardization, accessed Aug 2025. https://www.iso.org/standard/62996.html

- A review of the ISO 15066 standard for collaborative robot systems, Safety Science (Elsevier abstract page), 2020. https://www.sciencedirect.com/science/article/pii/S0925753520302290
