The transition from “software-defined” to “AI-native” is arguably the most significant architectural shift in business since the advent of the cloud. As of February 2026, merely “using” AI tools is no longer enough. To remain competitive, organizations must rebuild their operational logic around artificial intelligence. An AI-native tech organization isn’t just a company with a ChatGPT subscription; it is an entity where AI is the primary driver of decision-making, code generation, and customer interaction.

What is an AI-Native Organization?

An AI-native organization is a business designed from the ground up (or fundamentally restructured) to treat artificial intelligence as its core operating system. In this model, human employees shift from “doers” to “orchestrators.” Every process, from the way data is ingested to the way products are shipped, is designed so that AI systems can consume its inputs and act on its outputs. This requires a continuous loop between data acquisition, model training, and autonomous execution.

Key Takeaways for 2026

- Agentic Workflows: Moving beyond static chatbots to autonomous agents that can plan and execute multi-step tasks.

- Data Liquidity: Implementing Data Mesh and Data Fabric architectures to ensure AI has “zero-friction” access to high-quality information.

- Cultural Evolution: Shifting workforce focus from task completion to system supervision and ethical oversight.

- Infrastructure: Transitioning to hybrid-cloud environments optimized for both RAG (Retrieval-Augmented Generation) and local edge inference.

Who This Is For

This blueprint is designed for Chief Technology Officers (CTOs), Engineering Managers, Product Leads, and Founders who are responsible for steering their organizations through the mid-2020s. Whether you are leading a legacy enterprise through a digital transformation or scaling a “born-in-the-cloud” startup, these principles apply to any tech-forward entity looking to achieve AI-native status.

Safety & Ethics Disclaimer: Implementing AI-native systems involves significant data privacy, security, and ethical considerations. This blueprint should be executed in alignment with local regulations (such as the EU AI Act) and in consultation with cybersecurity and legal experts to prevent algorithmic bias and data leaks.

The Foundation: Data Mesh and Real-Time Infrastructure

In 2026, data is no longer just “the new oil”; it is the company’s nervous system. The biggest hurdle to becoming AI-native is “data friction”: the time and effort required to move data from a source to an AI model.

Moving Beyond the Data Swamp

Legacy organizations often suffer from “data swamps,” where unorganized information sits in silos. AI-native organizations utilize a Data Mesh approach. This means data is treated as a product, owned by the specific team that produces it (e.g., Marketing, Sales, Engineering), but made accessible through standardized, AI-readable APIs.
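To make “data as a product” concrete, each owning team can publish a small, machine-readable contract next to its data. The Python sketch below is illustrative only: `DataProduct`, its fields, and the freshness check are hypothetical and not part of any Data Mesh standard.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class DataProduct:
    """Hypothetical contract a domain team publishes for one data product."""

    name: str
    owner_team: str
    schema: dict[str, type]      # column name -> expected Python type
    max_staleness: timedelta     # freshness SLA
    last_updated: datetime
    tags: list[str] = field(default_factory=list)

    def is_fresh(self, now: datetime | None = None) -> bool:
        """True if the data was refreshed within the SLA window."""
        if now is None:
            now = datetime.now(timezone.utc)
        return now - self.last_updated <= self.max_staleness

    def validate_row(self, row: dict) -> list[str]:
        """Return schema violations for one record (an empty list means valid)."""
        errors = []
        for column, expected in self.schema.items():
            if column not in row:
                errors.append(f"missing column: {column}")
            elif not isinstance(row[column], expected):
                errors.append(
                    f"{column}: expected {expected.__name__}, "
                    f"got {type(row[column]).__name__}"
                )
        return errors


orders = DataProduct(
    name="sales.orders",
    owner_team="Sales",
    schema={"order_id": str, "amount": float},
    max_staleness=timedelta(hours=1),
    last_updated=datetime.now(timezone.utc),
)
print(orders.validate_row({"order_id": "A1", "amount": "12"}))
# ['amount: expected float, got str']
```

A consuming agent can call `is_fresh()` and `validate_row()` before trusting a dataset, which turns “AI-readable” into something that can actually be checked.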

The Role of RAG and Vector Databases

Retrieval-Augmented Generation (RAG) has become a de facto industry standard as of February 2026. Instead of trying to bake all company knowledge into a model’s weights through fine-tuning (which is expensive, goes stale as soon as the data changes, and does not stop the model from hallucinating details), AI-native firms store that knowledge in high-performance vector databases. This allows the AI to “look up” the latest company policies, code repositories, or customer history in real time and ground its response in what it retrieved.
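A minimal sketch of the retrieval step, under loudly simplified assumptions: a toy bag-of-words `embed()` stands in for a real embedding model, and an in-memory list stands in for the vector database. `retrieve` and `build_prompt` are hypothetical helpers, not any vendor’s API.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query (the "R" in RAG)."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, docs: list[str]) -> str:
    """Ground the answer in retrieved text rather than in the model's weights."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

Swapping in a learned embedding model and an approximate nearest-neighbor index changes the quality and scale of this loop, not its shape.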

Common Mistake: Neglecting Data Hygiene

Many leaders assume that a “smart enough” AI can clean up messy data. The opposite is true. In an AI-native environment, bad data leads to “automated chaos.” If your customer labels are inconsistent, your AI agents will make inconsistent decisions at a scale humans can’t easily correct.
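One inexpensive safeguard is to map free-text labels onto a canonical vocabulary before any agent consumes them, and to quarantine values that do not match instead of letting a model guess. The vocabulary and the `segment` field below are made-up examples.

```python
# Hypothetical canonical vocabulary: every accepted spelling maps to one label.
CANONICAL_SEGMENTS = {
    "enterprise": "ENTERPRISE",
    "ent": "ENTERPRISE",
    "smb": "SMB",
    "small business": "SMB",
    "consumer": "CONSUMER",
    "b2c": "CONSUMER",
}


def normalize_labels(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Split records into (clean, quarantined) based on the 'segment' label."""
    clean, quarantined = [], []
    for record in records:
        raw = str(record.get("segment", "")).strip().lower()
        label = CANONICAL_SEGMENTS.get(raw)
        if label is None:
            # Unknown or missing label: send to a human instead of letting an agent guess.
            quarantined.append(record)
        else:
            clean.append({**record, "segment": label})
    return clean, quarantined
```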

The Engine: Orchestrating Agentic Workflows

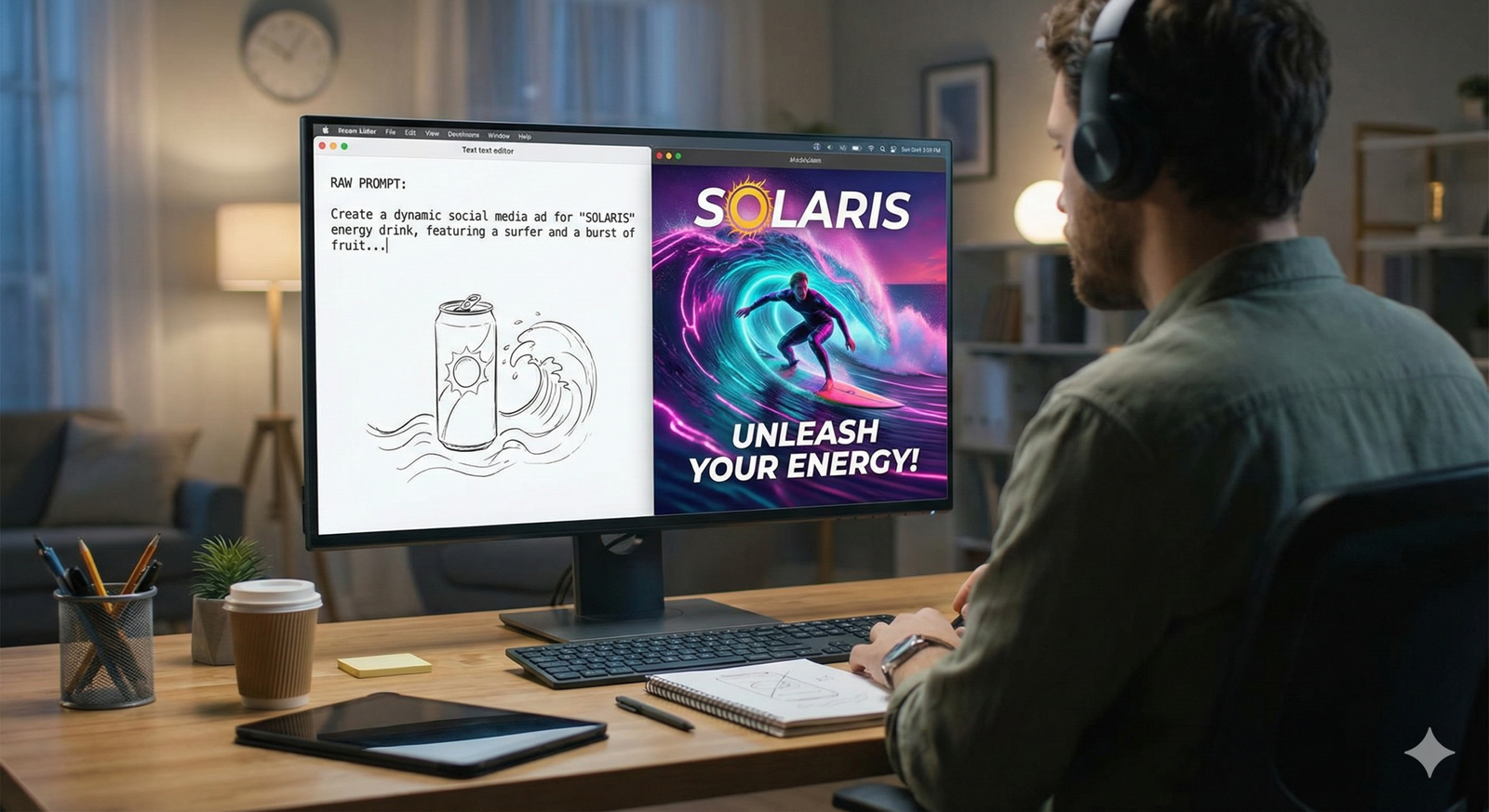

The defining characteristic of 2026’s tech landscape is the shift from “copilots” to “agents.” While a copilot suggests code, an AI agent writes the code, runs the tests, deploys the container, and monitors the logs for errors.

The Agentic Stack

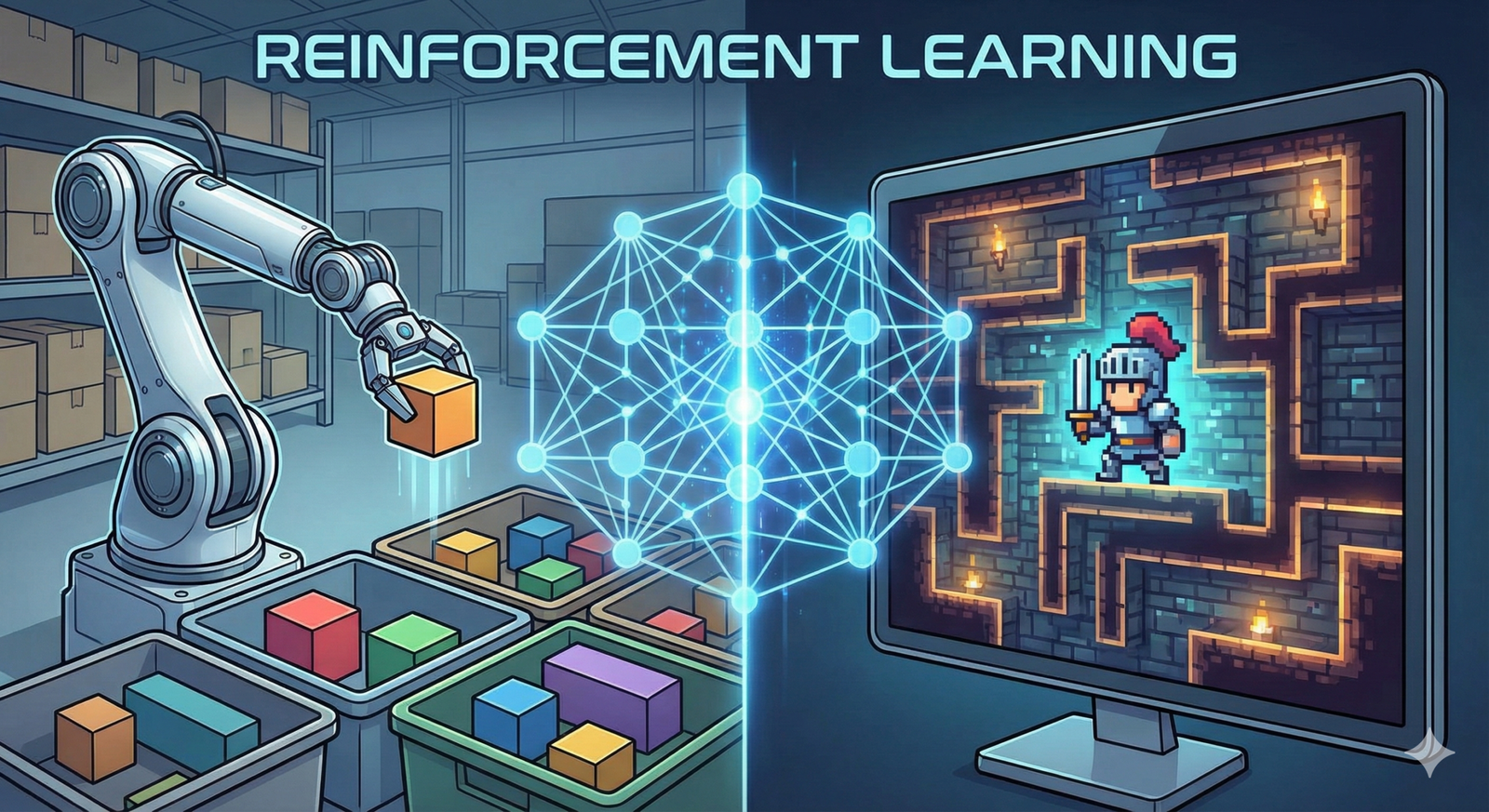

To build an AI-native engine, you must implement an orchestration layer. This layer manages how different AI models interact. For example:

- The Planner: A large language model (LLM) that breaks a user request into sub-tasks.

- The Workers: Specialized small language models (SLMs) optimized for specific tasks like SQL generation or writing Python.

- The Critic: An independent model that reviews the output for security vulnerabilities or logical errors.
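The planner/worker/critic split can be sketched as an ordinary control loop. In this hypothetical Python example, `plan`, `WORKERS`, and `critique` are stubs; in a real system each would wrap a call to an LLM or SLM, and the critic would be a separate model rather than string checks.

```python
from typing import Callable


def plan(request: str) -> list[tuple[str, str]]:
    """Planner stub: split a request into (worker_name, sub_task) pairs.
    A real planner would be an LLM call returning structured output."""
    return [
        ("sql", f"fetch data for: {request}"),
        ("python", f"chart results for: {request}"),
    ]


# Worker stubs; each would wrap a specialized small model in practice.
WORKERS: dict[str, Callable[[str], str]] = {
    "sql": lambda task: f"SELECT * FROM sales  -- {task}",
    "python": lambda task: f"plot(df)  # {task}",
}


def critique(output: str) -> list[str]:
    """Critic stub: flag risky patterns. A real critic is an independent model."""
    issues = []
    text = output.upper()
    if "DROP TABLE" in text:
        issues.append("destructive SQL")
    if "SELECT *" in text:
        issues.append("unbounded SELECT *")
    return issues


def orchestrate(request: str) -> dict[str, list[dict]]:
    """Run planner -> workers -> critic, holding back anything the critic flags."""
    result: dict[str, list[dict]] = {"accepted": [], "rejected": []}
    for worker_name, sub_task in plan(request):
        output = WORKERS[worker_name](sub_task)
        issues = critique(output)
        bucket = "rejected" if issues else "accepted"
        result[bucket].append({"task": sub_task, "output": output, "issues": issues})
    return result
```

Keeping the critic outside the worker path means a flawed worker cannot approve its own output.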

Practical Example: Automated DevOps

In an AI-native organization, a developer describes a feature in plain English. The AI agent generates the pull request, an automated security agent audits it, and a performance-testing agent simulates 10,000 users. The human developer steps in only for final architectural approval. This can reduce “concept-to-code” time by as much as 70%, although actual gains vary widely with codebase maturity, test coverage, and the kind of work involved.
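Structurally, that flow is a chain of automated gates with one human checkpoint at the end. A minimal sketch: `security_audit` and `load_test` are hypothetical stubs standing in for real scanners and load-testing tools run from CI, and the 300 ms latency budget is an arbitrary example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PullRequest:
    title: str
    diff: str
    p95_latency_ms: float  # hypothetical result from the simulated load test


def security_audit(pr: PullRequest) -> tuple[bool, str]:
    """Stub security agent: reject diffs that appear to hard-code secrets."""
    if "password=" in pr.diff.lower():
        return False, "possible hard-coded secret"
    return True, "ok"


def load_test(pr: PullRequest, budget_ms: float = 300.0) -> tuple[bool, str]:
    """Stub performance agent: compare simulated p95 latency with a budget."""
    if pr.p95_latency_ms > budget_ms:
        return False, f"p95 {pr.p95_latency_ms}ms exceeds {budget_ms}ms"
    return True, "ok"


GATES: list[Callable[[PullRequest], tuple[bool, str]]] = [security_audit, load_test]


def run_pipeline(pr: PullRequest) -> str:
    """Run automated gates in order; only a PR that passes all of them reaches a human."""
    for gate in GATES:
        passed, reason = gate(pr)
        if not passed:
            return f"blocked by {gate.__name__}: {reason}"
    return "awaiting human architectural approval"
```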

The Talent Shift: From “Coders” to “Architects”

One of the most sensitive aspects of the AI-native blueprint is the human element. The goal is not to replace the workforce but to amplify its capability. However, the skills required in 2026 are vastly different from those required in 2022.

New Roles for the AI Era

- Agentic Orchestrators: Engineers who specialize in designing the workflows and “guardrails” for AI agents.

- AI Ethicists & Compliance Officers: Professionals who ensure the AI’s decisions align with company values and legal requirements.

- Context Engineers: An evolution of prompt engineering that focuses on feeding the right metadata and business context into RAG systems.
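Much of context engineering comes down to choosing what fits in a limited context window. A minimal sketch, assuming each retrieved chunk already carries a relevance score and metadata; `Chunk` and `pack_context` are hypothetical, and tokens are approximated by whitespace-separated words (real tokenizers count differently).

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    source: str    # e.g. document path or system of record
    updated: str   # ISO date, surfaced so the model can weigh recency
    score: float   # relevance score from the retriever


def approx_tokens(text: str) -> int:
    """Crude token estimate; use the target model's tokenizer in practice."""
    return len(text.split())


def pack_context(chunks: list[Chunk], budget: int) -> str:
    """Greedily add the most relevant chunks, with metadata headers, within the budget."""
    parts, used = [], 0
    for chunk in sorted(chunks, key=lambda c: c.score, reverse=True):
        block = f"[source: {chunk.source} | updated: {chunk.updated}]\n{chunk.text}"
        cost = approx_tokens(block)
        if used + cost > budget:
            continue  # too big for the remaining budget; a smaller chunk may still fit
        parts.append(block)
        used += cost
    return "\n\n".join(parts)
```

Putting source and date next to each chunk lets the model cite and prefer fresher material instead of treating all context as equally authoritative.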

Human-in-the-Loop (HITL) 2.0

AI-native doesn’t mean “human-free.” It means humans are strategically placed at high-leverage points. We call this Human-in-the-Loop 2.0. Instead of checking every output, humans monitor the “health” of the AI systems, intervening only when the AI’s “confidence score” drops below a certain threshold.
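The routing rule itself is simple. A sketch with hypothetical names: it assumes each agent result carries a confidence score in [0, 1], and the 0.8 threshold is an arbitrary example. Model-reported confidence is often poorly calibrated, so thresholds are best tuned against human-audited samples.

```python
from dataclasses import dataclass


@dataclass
class AgentResult:
    task_id: str
    output: str
    confidence: float  # assumed in [0, 1]; how it is produced is model-specific


def route(
    results: list[AgentResult], threshold: float = 0.8
) -> tuple[list[AgentResult], list[AgentResult]]:
    """Split results into (auto_approved, needs_human_review)."""
    auto, review = [], []
    for r in results:
        if r.confidence >= threshold:
            auto.append(r)
        else:
            review.append(r)
    return auto, review


def review_rate(results: list[AgentResult], threshold: float = 0.8) -> float:
    """Share of results escalated to humans: a simple system-health signal."""
    if not results:
        return 0.0
    _, review = route(results, threshold)
    return len(review) / len(results)
```

Tracking `review_rate` over time is one way to monitor system “health”: a sudden rise suggests drift or a new class of inputs.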

Governance, Security, and AI TRiSM

As of February 2026, AI security has become a top priority for enterprise boards. The rise of “prompt injection” attacks and data poisoning means that an AI-native organization must be a fortress.

AI TRiSM Framework

AI Trust, Risk, and Security Management (TRiSM), a framework popularized by Gartner, has become a baseline expectation for the modern tech org. It includes:

- Explainability: Can the organization explain why an AI made a specific decision? This is crucial for financial and healthcare tech.

- Model Integrity: Continuous monitoring to ensure the model hasn’t “drifted” or become biased over time.

- Privacy-Preserving AI: Using techniques like differential privacy and federated learning to train models without exposing sensitive user data.
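For the Model Integrity item, one widely used drift check is the Population Stability Index (PSI), which compares the distribution of a feature or model score in production with a reference window. A minimal sketch using equal-width bins; the usual reading (below 0.1 stable, 0.1 to 0.25 moderate shift, above 0.25 significant shift) is a rule of thumb, not a standard.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a reference sample and a current sample."""
    lo = min(min(expected), min(actual))
    hi = max(max(expected), max(actual))
    width = (hi - lo) / bins or 1.0  # guard against all-identical values

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = min(int((v - lo) / width), bins - 1)  # max value goes in the last bin
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]  # eps avoids log(0)

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```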

Shadow AI: The New Shadow IT

A major risk in 2026 is “Shadow AI”—employees using unapproved, external AI tools to handle company data. An AI-native blueprint must provide internal, secure alternatives that are as easy to use as public models, ensuring that proprietary IP never leaves the corporate perimeter.
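Detection complements the secure alternative. A minimal sketch that scans egress proxy logs for direct calls to public AI APIs; the `user host` log format and the internal gateway hostname are hypothetical, and the public hostnames are examples rather than a complete deny-list.

```python
from collections import Counter

# Example public AI API hosts; maintain the real list in your security tooling.
PUBLIC_AI_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
}
# Hypothetical internal gateway that fronts approved models.
APPROVED_HOSTS = {"ai-gateway.internal.example.com"}


def summarize_ai_egress(log_lines: list[str]) -> tuple[Counter, int]:
    """Return (direct public-AI calls per user, calls through the approved gateway)."""
    shadow: Counter = Counter()
    sanctioned = 0
    for line in log_lines:
        parts = line.split()
        if len(parts) != 2:
            continue  # skip malformed lines
        user, host = parts[0], parts[1].lower()
        if host in APPROVED_HOSTS:
            sanctioned += 1
        elif host in PUBLIC_AI_HOSTS:
            shadow[user] += 1
    return shadow, sanctioned
```

A falling shadow count alongside a rising sanctioned count is a reasonable sign the internal alternative is working.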

Measuring Success: AI-Native KPIs

You cannot manage what you do not measure. Traditional metrics like “Lines of Code” or “Tickets Resolved” are obsolete in an AI-native world.

Value-Driven Metrics

- Autonomous Resolution Rate: What percentage of internal or external requests were handled from start to finish by AI agents without human intervention?

- Time to Inference: How quickly can the organization move from a new piece of data to a model-driven insight?

- AI ROI (Return on Investment): Comparing the cost of GPU/API compute against the labor hours saved and the speed of market entry.
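The first and third metrics reduce to simple arithmetic. A sketch with hypothetical inputs; this ROI formula counts only labor savings and leaves out speed of market entry, which is harder to price.

```python
from dataclasses import dataclass


@dataclass
class Request:
    handled_by_ai_end_to_end: bool  # True if no human touched the request


def autonomous_resolution_rate(requests: list[Request]) -> float:
    """Share of requests completed by agents with no human intervention."""
    if not requests:
        return 0.0
    return sum(r.handled_by_ai_end_to_end for r in requests) / len(requests)


def ai_roi(compute_cost: float, hours_saved: float, loaded_hourly_rate: float) -> float:
    """Simple ROI: (value of labor saved - compute cost) / compute cost."""
    if compute_cost <= 0:
        raise ValueError("compute_cost must be positive")
    return (hours_saved * loaded_hourly_rate - compute_cost) / compute_cost
```

For example, $10,000 of compute that saves 400 hours at a loaded rate of $75/hour gives `ai_roi(10_000, 400, 75) == 2.0`, a 200% return.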

Common Mistakes When Going AI-Native

Transitioning is difficult, and many organizations stumble. Here are the most frequent pitfalls observed as of early 2026:

- “Bolt-on” AI: Trying to add AI features to a legacy product without changing the underlying architecture. This usually results in a slow, clunky user experience.

- Over-reliance on One Vendor: Putting all your eggs in one LLM provider’s basket. AI-native organizations practice Model Agnosticism, allowing them to swap between providers (OpenAI, Google, Anthropic, or open-source models) based on cost and performance.

- Ignoring “AI Fatigue”: Bombarding employees with too many new tools at once. Successful organizations phase their rollout, starting with high-impact, low-risk internal automations.

- Underestimating Compute Costs: Failing to account for the “token tax.” Autonomous agents make many model calls per task, each billed by input and output tokens, so without optimization the cost of running them can spiral out of control.
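Two of these pitfalls meet in practice: a provider-neutral interface lets you swap vendors, and a cost estimate tells you when to. A minimal sketch in which `ChatModel`, `StubModel`, the prices, and the quality scores are all hypothetical. It assumes the agent re-sends its full history on every call, as with stateless chat APIs, which is why cost grows roughly quadratically with the number of steps.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatModel(Protocol):
    """Provider-neutral interface: application code depends only on this."""
    name: str
    input_price_per_m: float   # price per million input tokens
    output_price_per_m: float  # price per million output tokens
    quality: float             # score from your own offline evals, in [0, 1]

    def complete(self, prompt: str) -> str: ...


@dataclass
class StubModel:
    """Stand-in adapter; a real one would wrap a single vendor's SDK."""
    name: str
    input_price_per_m: float
    output_price_per_m: float
    quality: float

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


def agent_task_cost(model: ChatModel, steps: int, base_context: int,
                    added_per_step: int, output_per_step: int) -> float:
    """Estimate the 'token tax' of one agent task that makes `steps` calls."""
    total_input, context = 0, base_context
    for _ in range(steps):
        total_input += context       # the whole history is re-sent on each call
        context += added_per_step    # tool results and replies grow the history
    total_output = steps * output_per_step
    return (total_input * model.input_price_per_m
            + total_output * model.output_price_per_m) / 1_000_000


def pick_model(models: list[ChatModel], min_quality: float, **task: int) -> ChatModel:
    """Cheapest model for this task profile that clears the quality bar."""
    eligible = [m for m in models if m.quality >= min_quality]
    if not eligible:
        raise LookupError("no model meets the quality bar")
    return min(eligible, key=lambda m: agent_task_cost(m, **task))
```

Trimming or summarizing history attacks the quadratic term, which is often where most of the “token tax” comes from.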

Conclusion: Your Next Steps

Building an AI-native tech organization is not a weekend project; it is a multi-year strategic pivot. However, by February 2026, the gap between AI-native companies and legacy firms is widening quickly. The companies winning today are those that stopped treating AI as an “add-on” and started treating it as the foundation.

To begin your transition, I recommend the following three-step plan:

- Audit Your Data Infrastructure: Before touching a single AI model, ensure your data is accessible, clean, and stored in a form AI systems can actually use (for example, chunked, embedded, and indexed in a vector database).

- Pilot One “Agentic” Workflow: Identify a single high-friction process—such as customer onboarding or bug triaging—and build an autonomous agent to handle it. Learn from the failures of this pilot before scaling.

- Invest in “Literacy,” Not Just “Tools”: Spend as much on training your people to be “AI Orchestrators” as you do on GPU credits. The best technology is useless if your team is afraid of it or doesn’t know how to direct it.

The future of tech is autonomous, integrated, and intelligent. By following this blueprint, you are ensuring your organization is the one setting the pace, rather than struggling to keep up.

FAQs

What is the difference between an AI-first and an AI-native organization?

While “AI-first” implies prioritizing AI in the product roadmap, “AI-native” means the entire internal structure, from data handling to HR and DevOps, is built to be executed by or with AI. AI-native is the operational evolution of the AI-first philosophy.

How do we handle the high cost of AI inference in 2026?

The most successful organizations use a “tiered inference” model. Simple tasks are handled by small language models (SLMs), often open-source and run locally, which are cheap to operate. Only complex, high-stakes reasoning tasks are sent to expensive, large-scale flagship models.
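A tiered router can be as simple as a scoring function and an ordered list of tiers. In this sketch the tier names, keyword list, and weights are hypothetical; production routers often use a small classifier model instead of keyword heuristics.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    max_complexity: int  # highest complexity score this tier should handle


# Hypothetical tiers, cheapest first.
TIERS = [Tier("local-slm", 3), Tier("hosted-mid", 6), Tier("flagship", 10)]
HARD_TASK_WORDS = {"prove", "plan", "legal", "diagnose"}


def complexity(task: str, high_stakes: bool) -> int:
    """Toy heuristic scoring a task from 0 (trivial) to 10 (hardest)."""
    words = task.lower().split()
    score = min(len(words) // 20, 5)  # longer requests score higher
    if HARD_TASK_WORDS & set(words):
        score += 3
    if high_stakes:
        score += 4
    return min(score, 10)


def route_task(task: str, high_stakes: bool = False) -> str:
    """Send the task to the cheapest tier rated for its complexity."""
    c = complexity(task, high_stakes)
    for tier in TIERS:
        if c <= tier.max_complexity:
            return tier.name
    return TIERS[-1].name
```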

Does becoming AI-native mean we have to fire our junior developers?

No. However, the role of a junior developer changes. Instead of writing basic boilerplate code, they become “Junior Orchestrators,” responsible for auditing AI-generated code and learning how to prompt and bridge different agentic systems.

What is the biggest security risk for an AI-native company?

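Data exfiltration via “indirect prompt injection.” This is where an AI agent reads an external email or website that contains hidden instructions, tricking the agent into sending sensitive company data to an attacker. Robust “guardrail” models that screen inputs and outputs help, but they are not sufficient on their own; the stronger defenses are architectural, such as least-privilege access for agents and strict limits on where an agent can send data after it has read untrusted content.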
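One architectural control is taint tracking: once an agent has read untrusted external content, tools that can send data out are limited to allowlisted destinations. A minimal sketch with hypothetical tool names and domains. It does not detect the injection itself; it limits what a successful injection can do.

```python
from dataclasses import dataclass, field

# Tools that can move data outside the organization (hypothetical names).
EGRESS_TOOLS = {"send_email", "http_post", "upload_file"}
ALLOWED_DOMAINS = {"example.com"}  # hypothetical company domain


@dataclass
class AgentContext:
    tainted: bool = False  # becomes True once untrusted content is read
    sources: list[str] = field(default_factory=list)

    def ingest(self, source: str, trusted: bool) -> None:
        """Record a content source; any untrusted source taints the context."""
        self.sources.append(source)
        if not trusted:
            self.tainted = True


def authorize(ctx: AgentContext, tool: str, destination_domain: str) -> bool:
    """Allow egress only to allowlisted domains once the context is tainted."""
    if tool not in EGRESS_TOOLS or not ctx.tainted:
        return True
    return destination_domain.lower() in ALLOWED_DOMAINS
```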

How do we maintain a “human touch” in an AI-native company?

AI-native organizations actually have more time for human interaction because the “robotic” tasks are automated. Use that reclaimed time for high-level strategy, creative design, and deep, empathetic customer relationship building—things AI still cannot replicate.
