In the rapidly evolving landscape of enterprise technology, the traditional “break-fix” cycle is becoming a relic of the past. As of February 2026, the complexity of distributed systems and microservices has reached a point where human intervention alone can no longer keep pace with the volume of potential failure points. This is where Agentic Self-Healing enters the frame.

Agentic self-healing is a paradigm shift in software maintenance in which autonomous AI agents, powered by Large Language Models (LLMs) and advanced observability tools, don’t just alert developers to a problem: they diagnose the root cause, write a fix, test it in a sandboxed environment, and deploy the remediation without direct human oversight. Unlike traditional “self-healing” scripts that rely on hard-coded “if-this-then-that” logic, agentic systems can reason about novel, “black swan” failures that no predefined rule anticipated.

Key Takeaways

- Proactive vs. Reactive: Moves beyond simple monitoring to autonomous remediation.

- Reduced MTTR: Drastically lowers the Mean Time to Recovery by eliminating the manual triage phase.

- Technical Debt Mitigation: Addresses minor bugs and performance bottlenecks that humans often ignore due to time constraints.

- Human-in-the-Loop (HITL): Strategic oversight remains essential for high-stakes enterprise deployments.

Who This Is For

This guide is designed for Chief Technology Officers (CTOs), DevOps Architects, Site Reliability Engineers (SREs), and Software Engineering Managers who are struggling with the rising cost of technical debt and the “toil” of manual bug fixing. If your organization manages complex, high-availability enterprise applications, understanding agentic self-healing is no longer optional; it is a competitive necessity.

The Evolution of Software Maintenance: From Scripts to Agents

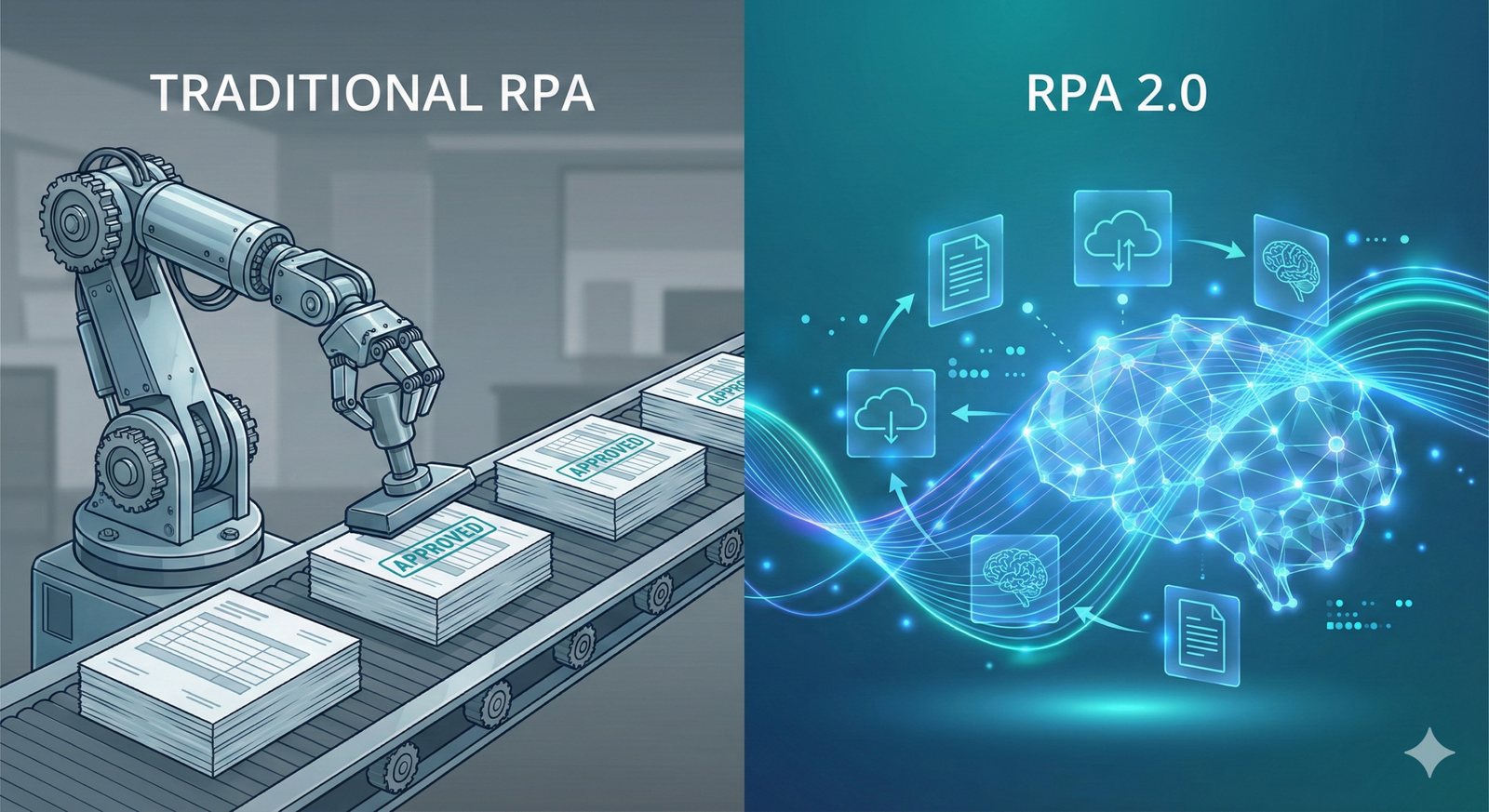

To understand where we are going, we must look at where we have been. Software maintenance has historically moved through four distinct “Ages”:

- The Age of Manual Triage: Developers waited for a user ticket or a crash log, then manually stepped through code to find the error.

- The Age of Rule-Based Automation: Systems used static scripts (e.g., “if memory > 90%, restart service”). These were efficient but brittle, unable to handle unexpected logic errors.

- The Age of ML Observability: Machine Learning models began predicting outages based on historical patterns, providing “AIOps” alerts that pointed humans in the right direction.

- The Age of Agentic Self-Healing: Current state-of-the-art systems that use reasoning agents to navigate the codebase, understand context, and execute multi-step fixes.

The “Agentic” part of the name is crucial. An “agent” in this context is an AI entity capable of goal-oriented behavior. It perceives its environment (via logs and telemetry), reasons about the best path forward (via LLMs), and takes action (via CLI tools or API calls against the codebase and infrastructure).
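The perceive, reason, act cycle can be sketched as a small loop. This is a minimal Python sketch under stated assumptions: the `Agent` class and its three hooks are hypothetical, and the rule-based `reason` function below is only a stub standing in for an LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Agent:
    # Hypothetical hooks: a real system would wrap telemetry APIs, an LLM client,
    # and tool executors behind these three callables.
    perceive: Callable[[], dict]             # read logs/metrics/traces
    reason: Callable[[dict], Optional[str]]  # pick the next action, or None if healthy
    act: Callable[[str], str]                # execute an action and return its result
    history: list = field(default_factory=list)

    def run(self, max_steps: int = 5) -> list:
        """Perceive -> reason -> act until the goal is met or the step budget is spent."""
        for _ in range(max_steps):
            action = self.reason(self.perceive())
            if action is None:  # the reasoner judges the system healthy
                break
            self.history.append((action, self.act(action)))
        return self.history


# Toy environment standing in for a real service.
state = {"error_rate": 0.2}


def perceive() -> dict:
    return dict(state)


def reason(obs: dict) -> Optional[str]:
    # Stub for an LLM: a fixed threshold instead of open-ended reasoning.
    return "restart-service" if obs["error_rate"] > 0.05 else None


def act(action: str) -> str:
    state["error_rate"] = 0.0  # pretend the action cleared the fault
    return "ok"


history = Agent(perceive, reason, act).run()
print(history)  # [('restart-service', 'ok')]
```

The step budget matters: without `max_steps`, a reasoner that never declares success would loop forever.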

The Architecture of an Agentic Self-Healing System

A robust enterprise self-healing system is more than just a chatbot connected to a terminal. It requires a sophisticated feedback loop that mirrors the human debugging process but at machine speed.

1. The Observability Layer (The Senses)

The system must have total visibility into the application’s health. This includes logs, metrics, and traces (The Three Pillars of Observability). In an agentic setup, this layer also includes “semantic monitoring,” which identifies anomalies not just in performance, but in business logic—such as an API returning a valid but logically incorrect response.
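As a sketch of semantic monitoring, the check below flags an order response that is well-formed but internally inconsistent. The response shape (`total_cents`, `items`, `unit_price_cents`, `quantity`) and the rule names are hypothetical; real invariants would come from your own business logic.

```python
from dataclasses import dataclass


@dataclass
class Anomaly:
    rule: str
    detail: str


def check_order_response(resp: dict) -> list[Anomaly]:
    """Flag responses that parse fine but violate business invariants."""
    anomalies = []
    # Integer cents avoid floating-point rounding in the comparison.
    items_total = sum(i["unit_price_cents"] * i["quantity"] for i in resp["items"])
    if resp["total_cents"] != items_total:
        anomalies.append(Anomaly("total_matches_items",
                                 f"total {resp['total_cents']} != items {items_total}"))
    if any(i["quantity"] <= 0 for i in resp["items"]):
        anomalies.append(Anomaly("positive_quantities", "non-positive quantity"))
    return anomalies


# A "valid" response whose total disagrees with its line items.
response = {"total_cents": 1500, "items": [{"unit_price_cents": 500, "quantity": 2}]}
print([a.rule for a in check_order_response(response)])  # ['total_matches_items']
```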

2. The Reasoning Engine (The Brain)

When an anomaly is detected, the agent uses a reasoning technique (such as Chain-of-Thought prompting) to hypothesize why the error is occurring. Using Retrieval-Augmented Generation (RAG), the agent pulls relevant snippets from internal documentation, past Jira tickets, and the current codebase to gain context.
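The retrieval half of RAG can be illustrated with a toy ranker. Production systems usually rank by embedding similarity in a vector store; this sketch swaps in plain Jaccard word overlap so it runs with the standard library alone, and the corpus entries (ticket IDs, runbook names) are made-up examples.

```python
import re


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9_]+", text.lower()))


def retrieve(query: str, corpus: dict[str, str], k: int = 2) -> list[str]:
    """Return ids of up to k documents with the highest Jaccard overlap with the query."""
    q = tokenize(query)

    def score(doc_id: str) -> float:
        d = tokenize(corpus[doc_id])
        union = q | d
        return len(q & d) / len(union) if union else 0.0

    ranked = sorted(corpus, key=score, reverse=True)
    return [doc_id for doc_id in ranked[:k] if score(doc_id) > 0]


corpus = {
    "JIRA-1042": "NullPointerException in InvoiceService when customer address is missing",
    "runbook-db": "Restart the connection pool if database connections are exhausted",
    "adr-007": "We chose event sourcing for the ledger service",
}
context = retrieve("NullPointerException InvoiceService address null", corpus)
print(context)  # ['JIRA-1042']
```

The retrieved snippets would then be placed into the model’s prompt alongside the error details.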

3. The Actuator (The Hands)

Once a fix is conceptualized, the agent utilizes a set of tools. This might include:

- Git commands to create a new branch.

- Linter and Compiler tools to ensure the fix is syntactically correct.

- Unit and Integration tests to verify the fix doesn’t break existing functionality.
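Because these tools run with real permissions, a common pattern is to expose them through a narrow allowlist rather than a raw shell. A minimal sketch, assuming a hypothetical `ALLOWED_PREFIXES` table (`git checkout -b`, `python -m pytest`, `ruff check`); it defaults to a dry run and never uses `shell=True`.

```python
import shlex
import subprocess

# Hypothetical allowlist of command prefixes the agent may execute.
ALLOWED_PREFIXES = [
    ("git", "checkout", "-b"),
    ("python", "-m", "pytest"),
    ("ruff", "check"),
]


def run_tool(command: str, dry_run: bool = True) -> list[str]:
    """Execute a command only if it starts with an allowlisted prefix."""
    argv = shlex.split(command)
    if not any(tuple(argv[:len(p)]) == p for p in ALLOWED_PREFIXES):
        raise PermissionError(f"command not allowlisted: {command!r}")
    if not dry_run:
        # Passing an argv list (no shell=True) keeps shell metacharacters inert.
        subprocess.run(argv, check=True)
    return argv


print(run_tool("git checkout -b fix/npe-invoice"))
```

Prefix matching is deliberately coarse; a stricter version would also validate each argument, for example restricting branch names to a fixed pattern.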

4. The Validation Loop

Before a fix reaches production, the agent must prove its efficacy. It deploys the code to a “Digital Twin” or a staging environment, simulates the traffic that caused the original error, and verifies that the bug is resolved.
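The replay step can be sketched as re-sending the captured requests that triggered the incident to both the original and the patched code. The handlers and captured requests here are hypothetical in-process stand-ins for a staging deployment and recorded production traffic.

```python
from typing import Callable


def replay(handler: Callable[[dict], dict], captured: list[dict],
           is_ok: Callable[[dict], bool]) -> bool:
    """Re-send the incident's traffic; pass only if every request succeeds."""
    for req in captured:
        try:
            resp = handler(req)
        except Exception:
            return False  # a crash means the bug still reproduces
        if not is_ok(resp):
            return False
    return True


def original_handler(req: dict) -> dict:
    return {"status": 200, "city": req["address"]["city"]}  # fails on missing address


def patched_handler(req: dict) -> dict:
    address = req.get("address") or {}
    return {"status": 200, "city": address.get("city", "unknown")}


def is_ok(resp: dict) -> bool:
    return resp["status"] == 200


captured = [{"address": {"city": "Oslo"}}, {"address": None}, {}]
print(replay(original_handler, captured, is_ok), replay(patched_handler, captured, is_ok))
# False True
```

Running the original code first confirms the captured traffic actually reproduces the bug, so a passing patched run is meaningful evidence.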

Core Technologies Powering Automated Remediation

As of 2026, several key technologies have converged to make agentic self-healing viable for the enterprise.

Large Language Models (LLMs) and Code Intelligence

Modern models are trained on vast corpora that include enormous amounts of source code. They capture not just syntax but common design patterns. In an enterprise setting, “Private LLMs” are used to ensure that sensitive proprietary code never leaves the organization’s secure perimeter.

Retrieval-Augmented Generation (RAG)

An agent is only as good as its context. RAG allows the agent to “read” the entire project history. If a similar bug was fixed three years ago by a developer who has since left the company, the agent can find that historical context and apply similar logic to the current problem.

Symbolic AI Integration

To prevent the “hallucinations” common in generative AI, enterprise systems often layer LLMs with symbolic AI (formal logic). This creates a “Guardrail” where the LLM proposes a fix, but a symbolic engine verifies that the fix adheres to strict security and architectural rules.
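Full symbolic verification (formal specifications, model checking) is beyond a short example, but the shape of the guardrail can be shown with a rule-based static check over a proposed patch’s syntax tree using Python’s `ast` module. The forbidden-call and forbidden-module lists are assumptions chosen for illustration, not a recommended policy.

```python
import ast

# Illustrative rules only; a real policy would encode your security standards.
FORBIDDEN_CALLS = {"eval", "exec", "compile"}
FORBIDDEN_MODULES = {"subprocess", "pickle"}


def violations(patch_source: str) -> list[str]:
    """Statically check LLM-proposed code against hard rules before it can be merged."""
    try:
        tree = ast.parse(patch_source)
    except SyntaxError as exc:
        return [f"syntax error: {exc.msg}"]
    found = []
    for node in ast.walk(tree):
        if (isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
                and node.func.id in FORBIDDEN_CALLS):
            found.append(f"line {node.lineno}: call to {node.func.id}()")
        elif isinstance(node, ast.Import):
            found += [f"line {node.lineno}: import {a.name}" for a in node.names
                      if a.name.split(".")[0] in FORBIDDEN_MODULES]
        elif (isinstance(node, ast.ImportFrom) and node.module
              and node.module.split(".")[0] in FORBIDDEN_MODULES):
            found.append(f"line {node.lineno}: from {node.module} import ...")
    return found


proposed = "import subprocess\nresult = eval(user_input)\n"
print(violations(proposed))  # ['line 1: import subprocess', 'line 2: call to eval()']
```

Any non-empty result blocks the patch, regardless of how confident the LLM was in its proposal.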

Strategic Benefits for the Enterprise

Implementing agentic self-healing isn’t just about saving developer time; it’s about fundamentally changing the economics of software production.

1. Eradicating “Toil”

Google’s SRE handbook defines “toil” as work that is manual, repetitive, automatable, and devoid of enduring value. Investigating 50 minor null-pointer exceptions a week is toil. By automating that work, engineers can focus on feature innovation and architecture.

2. 24/7 “Follow the Sun” Maintenance

Bugs don’t wait for business hours. An agentic system can resolve a critical database deadlock at 3:00 AM on a Sunday, ensuring the system is healthy before the first human engineer even opens their laptop.

3. Managing “Legacy Debt”

Many enterprises run on codebases that are decades old. These systems are often “frozen” because no one on the current team fully understands how they work. Agentic systems can map these legacy environments, document them, and fix vulnerabilities that have lain dormant for years.

| Feature | Traditional Automation | Agentic Self-Healing |
|---|---|---|
| Logic | Fixed, Pre-defined | Dynamic, Reasoning-based |
| Scope | Known failures | Unknown/Novel failures |
| Speed | Fast (seconds) | Moderate (minutes) |
| Autonomy | Low (requires script) | High (requires goal) |
| Accuracy | High for specific tasks | High with validation loops |

Implementation Roadmap: How to Adopt Agentic Systems

You cannot flip a switch and have a fully autonomous enterprise. The transition to agentic self-healing should be phased to build trust and ensure safety.

Phase 1: The Advisory Agent

In this stage, the agent monitors the system and generates “Fix Proposals.” It creates a Pull Request (PR) and pings a human developer on Slack. The human reviews the code, clicks “Approve,” and the agent handles the deployment. This builds a baseline of trust in the agent’s suggestions.

Phase 2: Low-Risk Autonomy

The agent is given “write access” to non-critical environments (Dev/Test). It is allowed to fix documentation errors, linter warnings, and minor unit test failures autonomously.

Phase 3: Production Remediation (The North Star)

The agent is integrated into the production CI/CD pipeline. It handles “low-severity” production incidents independently. For “high-severity” incidents, it performs the initial triage and prepares a “war room” dashboard for humans, significantly shortening the time from incident bridge to resolution.
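The three phases can be encoded as an explicit policy the orchestrator consults before acting. This is a sketch under assumptions: the phase numbers, environment names (`dev`, `test`, `prod`), and severity labels are hypothetical, and Phase 2’s restriction to documentation, lint, and minor test fixes is simplified here to an environment check.

```python
from enum import Enum


class Action(Enum):
    PROPOSE_PR = "open a PR and wait for human approval"
    AUTO_APPLY = "apply the fix autonomously"
    TRIAGE_ONLY = "triage, build the war-room dashboard, escalate"


def decide(phase: int, environment: str, severity: str) -> Action:
    """Map rollout phase, target environment, and incident severity to an agent action."""
    if phase == 1:
        return Action.PROPOSE_PR  # advisory only: humans approve everything
    if environment in {"dev", "test"}:
        return Action.AUTO_APPLY  # phase 2+: non-critical environments
    if phase >= 3 and environment == "prod":
        return Action.AUTO_APPLY if severity == "low" else Action.TRIAGE_ONLY
    return Action.PROPOSE_PR  # anything else still needs a human


print(decide(3, "prod", "high").name)  # TRIAGE_ONLY
```

Keeping the policy as plain code makes each promotion from one phase to the next a reviewable change rather than an implicit shift in the agent’s behavior.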

Safety, Security, and Ethical Considerations

Disclaimer: Automated bug fixing involves granting AI agents write access to your codebase. This carries inherent risks including potential security vulnerabilities or unintended system behavior. Always implement strict sandbox environments and human-in-the-loop overrides for mission-critical systems.

The Risk of Hallucinations

LLMs can sometimes suggest “creative” solutions that are technically incorrect or inefficient. To mitigate this, enterprise agents must be integrated with Automated Test Suites. If a fix doesn’t pass 100% of the existing tests, it is automatically rejected.
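One way to enforce the all-tests-must-pass rule is to gate on a machine-readable test report rather than on the agent’s own summary. A minimal sketch, assuming the suite emits JUnit-style XML (for example via pytest’s `--junitxml=report.xml` option); treating an empty report as a rejection is a design choice, not a standard.

```python
import xml.etree.ElementTree as ET


def accept_fix(junit_xml: str) -> bool:
    """Accept a candidate fix only if tests ran and none failed or errored."""
    root = ET.fromstring(junit_xml)
    suites = list(root.iter("testsuite"))  # works for a <testsuites> or bare <testsuite> root
    total = sum(int(s.get("tests", 0)) for s in suites)
    bad = sum(int(s.get("failures", 0)) + int(s.get("errors", 0)) for s in suites)
    return total > 0 and bad == 0  # zero tests run is treated as "no evidence"


failing = ('<testsuites><testsuite name="pytest" tests="42" failures="1" '
           'errors="0" skipped="0"/></testsuites>')
passing = ('<testsuites><testsuite name="pytest" tests="42" failures="0" '
           'errors="0" skipped="0"/></testsuites>')
print(accept_fix(failing), accept_fix(passing))  # False True
```

Skipped tests are not counted as failures here; a stricter gate could reject any run where previously passing tests were skipped.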

Prompt Injection and Security

If an agent can write code, an attacker might try to “trick” the agent into writing a backdoor. Security protocols must ensure that agents can only pull information from trusted internal sources and that all proposed code undergoes an automated security scan (SAST/DAST) before deployment.
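The trusted-sources half of this rule can be sketched as a filter applied to retrieved context before it reaches the model. The host names in `TRUSTED_HOSTS` are hypothetical placeholders; the SAST/DAST scan would be a separate pipeline step run by dedicated tools.

```python
from urllib.parse import urlparse

# Hypothetical internal hosts the agent is allowed to read context from.
TRUSTED_HOSTS = {"wiki.internal.example.com", "git.internal.example.com"}


def is_trusted(url: str) -> bool:
    parsed = urlparse(url)
    # Compare the parsed hostname exactly; substring checks are easy to spoof.
    return parsed.scheme == "https" and parsed.hostname in TRUSTED_HOSTS


def filter_context(docs: list[dict]) -> list[dict]:
    """Drop retrieved documents from outside the trusted perimeter before prompting."""
    return [doc for doc in docs if is_trusted(doc["source"])]


retrieved = [
    {"source": "https://wiki.internal.example.com/runbooks/db", "text": "runbook body"},
    {"source": "https://wiki.internal.example.com.attacker.io/page", "text": "lookalike"},
    {"source": "https://wiki.internal.example.com@attacker.io/page", "text": "userinfo trick"},
    {"source": "http://git.internal.example.com/repo", "text": "plain http"},
]
print([d["source"] for d in filter_context(retrieved)])
```

Only the first document survives: the lookalike domain, the `user@host` trick, and the unencrypted URL are all rejected.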

The “Black Box” Problem

Explainability is vital. An agent shouldn’t just fix a bug; it must provide a detailed report explaining why it chose that specific fix. This allows human engineers to audit the agent’s reasoning and ensure it aligns with corporate coding standards.
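A structured report makes that explanation auditable and easy to attach to a PR. The `RemediationReport` fields below are a hypothetical schema, and the example values are invented for illustration.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class RemediationReport:
    incident_id: str
    root_cause: str
    evidence: list[str]
    fix_summary: str
    # Alternatives the agent considered, with the reason each was rejected.
    alternatives_rejected: dict[str, str] = field(default_factory=dict)
    tests_passed: bool = False

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)


report = RemediationReport(
    incident_id="INC-0001",
    root_cause="Connection not closed on the exception path in OrderRepository.save",
    evidence=["heap dump: growing count of open connection objects",
              "connection pool exhausted shortly before the alert"],
    fix_summary="Wrapped the connection in try-with-resources",
    alternatives_rejected={"raise pool size": "masks the leak instead of fixing it"},
    tests_passed=True,
)
print(report.to_json())
```

Recording rejected alternatives is what lets a reviewer check the agent’s reasoning, not just its final diff.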

Common Mistakes in Automated Bug Fixing

Even with the best technology, implementation can fail due to organizational friction or poor strategy.

- Ignoring the “Data Quality” of Logs: If your logs are noisy or uninformative, the agent will be “blind.” Agentic healing requires high-fidelity telemetry.

- Lack of Comprehensive Testing: An agent can only be as confident as your test suite. If you have low test coverage, the agent might fix one bug while introducing two others.

- Over-Automation Too Quickly: Trying to automate complex logic fixes before the agent has proven itself on simple infrastructure remediation can lead to serious failures and a loss of stakeholder trust.

- Excluding Developers from the Process: Engineers shouldn’t feel threatened by agents. They should be the “Architects” who manage the agents, shifting their role from “mechanic” to “inspector.”

Real-World Examples of Agentic Remediation

Scenario A: The Memory Leak

An enterprise Java application begins experiencing slow response times. The agent detects a “Sawtooth” pattern in memory usage whose low points climb after every garbage-collection cycle.

- Agent Action: It identifies the specific class causing the leak by analyzing heap dumps.

- Fix: It notices a database connection isn’t being closed in a try-catch block. It rewrites the block using a try-with-resources statement, verifies the fix in a containerized test, and submits the PR.
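A sawtooth by itself is normal garbage-collector behavior; what suggests a leak is that each post-GC low point sits higher than the last. A minimal heuristic sketch, assuming heap usage sampled at regular intervals (the sample series are made up); production detectors typically fit a trend over a longer window rather than requiring strictly rising troughs.

```python
def post_gc_minima(samples: list[float]) -> list[float]:
    """Local minima of a heap-usage series: the low points right after each GC."""
    return [samples[i] for i in range(1, len(samples) - 1)
            if samples[i] < samples[i - 1] and samples[i] <= samples[i + 1]]


def looks_like_leak(samples: list[float], min_cycles: int = 3) -> bool:
    """Healthy sawtooth: troughs stay flat. Leak: every trough is higher than the last."""
    troughs = post_gc_minima(samples)
    if len(troughs) < min_cycles:
        return False  # not enough GC cycles to judge
    return all(b > a for a, b in zip(troughs, troughs[1:]))


healthy = [200, 400, 600, 210, 410, 610, 205, 405, 605, 200, 400]  # MB, illustrative
leaking = [200, 400, 600, 260, 460, 660, 330, 530, 730, 400, 600]
print(looks_like_leak(healthy), looks_like_leak(leaking))  # False True
```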

Scenario B: The API Version Mismatch

After a third-party vendor updates their API, your integration starts throwing 400 Bad Request errors.

- Agent Action: The agent reads the vendor’s updated documentation (via a web-search tool) and compares it to the current integration code.

- Fix: It identifies a renamed field, updates the data mapping, and deploys the fix before users even report the issue.
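The mapping fix can be sketched as follows. The internal field names, the vendor fields (`customerId`, `zip`, `postalCode`), and the schema change are all hypothetical; in practice the required-field set would be derived from the vendor’s published schema.

```python
# Hypothetical mapping from internal field names to the vendor's API fields.
FIELD_MAP = {"customer_id": "customerId", "postal_code": "zip"}


def to_vendor_payload(record: dict, field_map: dict[str, str]) -> dict:
    return {field_map[k]: v for k, v in record.items() if k in field_map}


def missing_required(payload: dict, required: set[str]) -> set[str]:
    """Fields the vendor schema requires that the payload no longer sends."""
    return required - set(payload)


# Suppose the vendor's updated schema renamed "zip" to "postalCode".
required_v2 = {"customerId", "postalCode"}
record = {"customer_id": "C-17", "postal_code": "0150"}

print(missing_required(to_vendor_payload(record, FIELD_MAP), required_v2))  # {'postalCode'}

# The fix is a one-entry mapping update, after which the same check passes.
FIELD_MAP_FIXED = {**FIELD_MAP, "postal_code": "postalCode"}
print(missing_required(to_vendor_payload(record, FIELD_MAP_FIXED), required_v2))  # set()
```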

Conclusion: The Future of the Autonomous Enterprise

Agentic self-healing represents the “End of the Beginning” for DevOps. We are moving away from a world where engineers are defined by their ability to find “needles in haystacks” (debugging) and toward a world where they are defined by their ability to design resilient systems that maintain themselves.

By February 2026, the gap between companies using agentic systems and those relying on manual labor has widened. Some organizations adopting these autonomous agents report 30-40% increases in engineering velocity and a 50% reduction in downtime costs, though results depend heavily on test coverage, telemetry quality, and how much autonomy the agent is given.

Next Steps for Your Organization:

- Audit your observability stack: Ensure you have the telemetry needed for an agent to reason.

- Clean your “Context”: Organize your internal documentation so RAG systems can accurately retrieve information.

- Start a Pilot: Identify a “low-stakes” service and implement an advisory agent to begin the journey toward full autonomy.

The goal isn’t to replace the developer, but to liberate them. When the software heals itself, the human can finally focus on creating what’s next.

FAQs

1. Is agentic self-healing safe for regulated industries like Finance or Healthcare?

It can be, provided there is a mandatory “Human-in-the-Loop” (HITL) sign-off for production deployments. In these industries, the agent acts as a highly capable assistant that prepares the fix and assembles the evidence for a human compliance officer or senior developer to approve.

2. How does this differ from standard AIOps?

AIOps is primarily about detection and prediction (telling you something is wrong). Agentic self-healing is about remediation (fixing what is wrong). While AIOps points to the fire, the agent picks up the fire extinguisher and puts it out.

3. Do I need a massive team of AI researchers to build this?

No. Most enterprise agentic systems are now built using orchestration frameworks (like LangGraph or CrewAI) and integrated via standard DevOps tools. The focus is more on “Agent Orchestration” than on training the underlying models.

4. What happens if the AI agent makes a mistake?

The system must have an “Automated Rollback” mechanism. If the agent deploys a fix and performance metrics degrade further, the orchestrator immediately reverts to the last known “Good State” and alerts a human engineer.
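A rollback guard can be sketched as a comparison between the metrics captured just before the agent’s deploy and those measured afterward. The `Metrics` fields, the 10% tolerances, and the `deploy`/`measure`/`rollback`/`alert` hooks are hypothetical; a real orchestrator would wire them to its deployment and monitoring systems.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Metrics:
    p99_latency_ms: float
    error_rate: float


def should_roll_back(baseline: Metrics, after_fix: Metrics,
                     latency_tolerance: float = 1.10,
                     error_tolerance: float = 1.10) -> bool:
    """Roll back if latency or error rate is worse than the pre-deploy baseline beyond tolerance."""
    return (after_fix.p99_latency_ms > baseline.p99_latency_ms * latency_tolerance
            or after_fix.error_rate > baseline.error_rate * error_tolerance)


def deploy_with_guard(deploy: Callable[[], None], measure: Callable[[], Metrics],
                      rollback: Callable[[], None], alert: Callable[[str], None],
                      baseline: Metrics) -> str:
    deploy()
    if should_roll_back(baseline, measure()):
        rollback()  # revert to the last known good state
        alert("agent fix rolled back: metrics degraded")
        return "rolled_back"
    return "kept"


events = []
status = deploy_with_guard(
    deploy=lambda: events.append("deploy"),
    measure=lambda: Metrics(p99_latency_ms=900, error_rate=0.08),
    rollback=lambda: events.append("rollback"),
    alert=events.append,
    baseline=Metrics(p99_latency_ms=600, error_rate=0.05),
)
print(status, events)
```

The baseline is the already-degraded state at deploy time, so the guard only fires when the fix makes things worse, matching the “degrade further” condition.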

5. Can agents fix complex architectural flaws?

Generally, no. As of early 2026, agents excel at “localized” bugs (logic errors, syntax, resource management). Large-scale architectural changes still require human creativity and long-term strategic thinking.

References

- Google SRE Handbook: Practices for Building Reliable Systems. [Official Documentation]

- IEEE Xplore: Autonomous Agents in Software Engineering: A 2025 Review. [Academic Journal]

- Gartner Research: Predicts 2026: The Rise of Autonomous DevOps. [Industry Report]

- AWS Architecture Center: Implementing Self-Healing Infrastructure on Cloud-Native Systems. [Technical Whitepaper]

- GitHub Next: The Future of AI-Powered Development and Automated Remediation. [Research Blog]

- ACM Digital Library: Large Language Models for Code Generation and Repair. [Technical Paper]

- DORA Report (2025): State of DevOps: The Impact of AI on Engineering Velocity. [Statistical Study]

- NIST Security Standards: Securing AI-Generated Code in Enterprise Environments. [Government Guideline]