The “data wall” has become a serious barrier for AI developers and enterprises alike. As of February 2026, demand for high-quality, diverse, and ethically sourced data has outpaced the supply of human-generated content. Agent-generated data is one response: autonomous AI agents are tasked with creating, refining, and labeling the very information used to train the next generation of models.

This process isn’t just about volume; it is about building continuous learning loops. In these loops, an AI system generates data, evaluates its own output (or the output of a peer), identifies gaps in its knowledge, and iterates until the output passes evaluation. This “data flywheel” effect is what lets modern Large Language Models (LLMs) improve beyond what a static dataset allows.

Key Takeaways

- Scalability: Agent-generated data removes the bottleneck of manual human labeling.

- Precision: Specialized “critic” agents can identify hallucinations more rapidly than human reviewers in complex technical domains.

- Efficiency: Continuous learning loops allow models to self-correct and update their knowledge base in near real-time.

- Risk Management: Without proper oversight, agent-generated data can lead to “model collapse” or the amplification of biases.

Who This Is For

This guide is designed for Machine Learning (ML) engineers, AI product managers, and enterprise data architects who are looking to move beyond traditional training methods. If you are struggling with data scarcity, high labeling costs, or model stagnation, understanding the mechanics of agent-driven synthesis is critical for your 2026 AI roadmap.

The Evolution of Data Acquisition: From Manual to Autonomous

For the better part of a decade, the gold standard for AI training was human-in-the-loop (HITL) labeling. Companies like Scale AI and Appen rose to prominence by providing armies of human workers to tag images and text. However, as models have grown more complex, human labeling has become a bottleneck for three primary reasons:

- Complexity: Humans often struggle to provide high-quality feedback on niche technical topics, such as advanced quantum physics or multi-step cryptographic code.

- Cost: Scaling a model to trillions of parameters requires a volume of data that is financially prohibitive to label manually.

- Speed: The iterative cycle of training requires data updates in days, not months.

Agent-generated data addresses these issues by using “Teacher” models to guide “Student” models. As of February 2026, the field has moved past simple synthetic text and into Multi-Agent Reasoning Loops, where diverse agents debate a topic and converge on a synthesis that is then treated as ground truth. The aim is an answer that has survived more scrutiny than a single unreviewed source, although it still needs verification against external references.

Mechanics of Continuous Learning Loops

A continuous learning loop powered by agent-generated data operates as a circular ecosystem rather than a linear pipeline. To implement this effectively, you must understand the four distinct phases of the loop.

Phase 1: The Generation Phase

In this stage, a high-capacity “Generator Agent” produces content based on specific prompts or gap-analysis reports. For example, if a model is underperforming in legal reasoning for Italian contract law, the Generator Agent is tasked with synthesizing thousands of hypothetical contract scenarios, edge cases, and dispute resolutions.
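
One way to turn a gap-analysis report into generation work is to split a sample budget across topics in proportion to how far each falls short of a target. The sketch below assumes the report is a simple mapping from topic to measured accuracy; the function name, the topic names other than Italian contract law, and the 0.9 target are illustrative assumptions, not part of any specific tool.

```python
def allocate_generation_budget(
    accuracy_by_topic: dict[str, float],
    total_samples: int,
    target_accuracy: float = 0.9,  # assumed target, tune per project
) -> dict[str, int]:
    """Split a sample budget across topics in proportion to their accuracy gap."""
    gaps = {
        topic: max(0.0, target_accuracy - accuracy)
        for topic, accuracy in accuracy_by_topic.items()
    }
    total_gap = sum(gaps.values())
    if total_gap == 0:
        # Every topic already meets the target, so nothing needs generating.
        return {topic: 0 for topic in gaps}
    # round() can make the shares sum to slightly more or less than total_samples.
    return {
        topic: round(total_samples * gap / total_gap)
        for topic, gap in gaps.items()
    }


# Hypothetical gap-analysis report.
report = {"italian_contract_law": 0.6, "us_tax_law": 0.8, "general_qa": 0.95}
budget = allocate_generation_budget(report, total_samples=1000)
```

Topics that already meet the target receive no budget, which keeps the Generator Agent focused on the weakest areas.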

Phase 2: The Evaluation (Critic) Phase

This is where the “Loop” gains its power. A separate “Critic Agent”—often a model with a different architecture or a more constrained, rule-based logic—reviews the generated data. It looks for:

- Logical Consistency: Does the conclusion follow the premises?

- Factuality: Does the data align with known external knowledge bases?

- Format Adherence: Does the data meet the technical schema required for training?
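
Of the three checks above, format adherence is the easiest to make deterministic. Here is a minimal sketch, assuming each training record is a JSON object with `prompt`, `response`, and `source` string fields. That schema is hypothetical; substitute whatever your training pipeline requires.

```python
import json

# Hypothetical schema: field name -> required Python type.
REQUIRED_FIELDS = {"prompt": str, "response": str, "source": str}


def check_format(raw_record: str) -> list[str]:
    """Return a list of schema problems; an empty list means the record passes."""
    try:
        record = json.loads(raw_record)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(record, dict):
        return ["record is not a JSON object"]

    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            problems.append(f"wrong type for field: {field}")
        elif not record[field].strip():
            problems.append(f"empty field: {field}")
    return problems
```

Returning the full list of problems, rather than a single pass/fail flag, gives the Generator something concrete to act on if the record is sent back for repair.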

Phase 3: The Refinement Phase

If the Critic Agent identifies an error, the data is not simply discarded. It is sent back to the Generator with a “Critique Trace” that describes what went wrong. The Generator then attempts to fix the error. This Self-Correction process yields dense reasoning chains (attempt, critique, fix) that are valuable for training models on how to “think” rather than just predict the next word.
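
The generate, critique, and retry cycle can be sketched as a small loop that keeps every attempt and critique. This assumes the Generator and Critic are plain callables; `toy_generate` and `toy_critique` below are stand-ins for real model calls, and the three-round cap is an arbitrary choice.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class RefinementTrace:
    """Full attempt-and-critique history for one data point."""
    prompt: str
    attempts: list[str] = field(default_factory=list)
    critiques: list[str] = field(default_factory=list)
    accepted: bool = False


def refine(
    prompt: str,
    generate: Callable[[str, Optional[str]], str],
    critique: Callable[[str], Optional[str]],
    max_rounds: int = 3,
) -> RefinementTrace:
    """Regenerate until the critic returns no critique or the rounds run out."""
    trace = RefinementTrace(prompt=prompt)
    feedback: Optional[str] = None
    for _ in range(max_rounds):
        draft = generate(prompt, feedback)
        trace.attempts.append(draft)
        feedback = critique(draft)  # None means the critic found no error
        if feedback is None:
            trace.accepted = True
            break
        trace.critiques.append(feedback)
    return trace


# Toy stand-ins for model calls, for illustration only.
def toy_generate(prompt: str, feedback: Optional[str]) -> str:
    return "2 + 2 = 4" if feedback else "2 + 2 = 5"


def toy_critique(draft: str) -> Optional[str]:
    return None if draft.endswith("= 4") else "arithmetic error: expected 4"


trace = refine("What is 2 + 2?", toy_generate, toy_critique)
```

The trace, not just the final answer, is what gets saved: the failed attempt and its critique are part of the training signal.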

Phase 4: Integration and Retraining

The final, verified data is integrated into the training set. Because the data was generated to fill specific performance gaps, the resulting model update is highly targeted and efficient.

RLAIF vs. RLHF: The Shift Toward Scalable Oversight

To understand agent-generated data, one must understand the shift from Reinforcement Learning from Human Feedback (RLHF) to Reinforcement Learning from AI Feedback (RLAIF).

| Feature | RLHF (Human Feedback) | RLAIF (AI Feedback) |
| --- | --- | --- |
| Scalability | Limited by human hours. | Nearly infinite; limited by compute. |
| Cost | High (hourly wages for experts). | Low (token costs/compute). |
| Consistency | Subjective; varies between humans. | High; based on programmed “Constitutions.” |
| Niche Expertise | Requires hiring specialists. | Can be simulated by specialized agents. |

RLAIF is often implemented with a “Constitutional AI” approach, introduced by Anthropic. An agent is given a set of principles (a Constitution) and uses those rules to label and rank data. This enables Scalable Oversight, where a small team of humans supervises a large fleet of AI agents that do the heavy lifting of data curation.
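
To make "label and rank" concrete, here is a toy sketch of turning two candidate responses into a preference record. In a real RLAIF setup an AI labeler would judge each principle; the keyword rules in `CONSTITUTION` are hypothetical placeholders so the example runs on its own.

```python
from typing import Callable, Optional

# Each principle maps a response to True (complies) or False (violates).
Principle = Callable[[str], bool]

# Hypothetical stand-ins for principles that an AI labeler would judge.
CONSTITUTION: dict[str, Principle] = {
    "no_insults": lambda text: "idiot" not in text.lower(),
    "gives_reason": lambda text: "because" in text.lower(),
}


def score(response: str) -> int:
    """Count how many principles the response satisfies."""
    return sum(1 for rule in CONSTITUTION.values() if rule(response))


def label_pair(prompt: str, a: str, b: str) -> Optional[dict]:
    """Return a chosen/rejected preference record, or None when the scores tie."""
    score_a, score_b = score(a), score(b)
    if score_a == score_b:
        return None  # no clear preference, so skip rather than guess
    chosen, rejected = (a, b) if score_a > score_b else (b, a)
    return {"prompt": prompt, "chosen": chosen, "rejected": rejected}


pair = label_pair(
    "Why write tests?",
    "Just do it, you idiot.",
    "Write tests because bugs found late are costly.",
)
```

Skipping ties is a design choice in this sketch: ambiguous pairs are better candidates for the small human team than for automatic labeling.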

Practical Applications of Agent-Generated Data

1. Software Engineering and Code Generation

Agent-generated data is revolutionizing how we build coding assistants. Instead of just scraping GitHub, agents can generate a piece of code, run it in a sandboxed environment, observe the error logs, fix the code, and then save the entire “problem-to-solution” journey as training data. This provides the model with “negative examples” (what not to do), which are rare in human-written code.
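
A rough sketch of the run-observe-fix-save cycle, assuming the candidate programs are Python and that a child process with a timeout is acceptable for illustration. A child process is not a real sandbox: production systems would add container or VM isolation. The function names are hypothetical.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_snippet(code: str, timeout_s: float = 5.0) -> dict:
    """Run code in a child Python process and capture the outcome."""
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "snippet.py"
        script.write_text(code, encoding="utf-8")
        try:
            proc = subprocess.run(
                [sys.executable, str(script)],
                capture_output=True,
                text=True,
                timeout=timeout_s,
                cwd=workdir,
            )
        except subprocess.TimeoutExpired:
            return {"code": code, "ok": False, "stdout": "", "stderr": "timeout"}
    return {
        "code": code,
        "ok": proc.returncode == 0,
        "stdout": proc.stdout,
        "stderr": proc.stderr,
    }


def build_trajectory(attempts: list[str]) -> list[dict]:
    """Run attempts in order, keep the failures, and stop at the first success."""
    trajectory = []
    for code in attempts:
        result = run_snippet(code)
        trajectory.append(result)
        if result["ok"]:
            break
    return trajectory


# The first attempt fails, the second succeeds, the third is never run.
trajectory = build_trajectory(["print(1 / 0)", "print(1 / 1)", "print('unused')"])
```

The failed attempt and its error log stay in the trajectory; those are the "negative examples" the paragraph above refers to.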

2. Medical Research and Healthcare

Safety Disclaimer: AI-generated medical data must be used only for model training in simulated environments. It should never replace clinical judgment or be used to diagnose patients without human professional oversight.

In healthcare, privacy laws (like HIPAA) make it difficult to access real patient data. Agents can generate Synthetic Patient Records that mirror the statistical distribution of real diseases while limiting exposure of any individual’s data, provided the records are checked for leakage of real patient details. This allows models to train on rare disease scenarios that might occur only once in every million real-world cases.

3. Robotics and Simulation-to-Reality (Sim2Real)

In robotics, agents generate “Synthetic Experiences.” An agent in a physics engine can simulate millions of ways to pick up a fragile object. The data generated from these failures and successes is then used to train the physical robot’s neural network.

Common Mistakes in Implementing AI Data Loops

Despite the potential, many organizations stumble when first deploying autonomous data loops. Here are the most frequent pitfalls:

The “Echo Chamber” or Model Collapse

If an AI model is trained exclusively on its own unverified outputs, those outputs drift away from the real data distribution. This is known as Model Collapse. Over time, the model’s probability distribution narrows and it forgets the “long tail” of human language, eventually producing repetitive, nonsensical text.

- The Fix: Always inject a percentage of “Ground Truth” human data into every training epoch and use diverse critic agents.
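
One simple way to apply this fix is to build every batch with a fixed share of human-written examples. The sketch below assumes both datasets fit in memory as sequences; the function name and the 20% share in the usage example are illustrative assumptions, not recommended values.

```python
import random
from typing import Iterator, Sequence


def mixed_batches(
    human: Sequence,
    synthetic: Sequence,
    batch_size: int,
    human_fraction: float,
    seed: int = 0,
) -> Iterator[list]:
    """Yield batches in which a fixed share of examples is human-written."""
    n_human = round(batch_size * human_fraction)
    n_synth = batch_size - n_human
    if n_human < 1 or n_synth < 1 or n_human > len(human):
        raise ValueError("invalid batch_size/human_fraction for the available data")

    rng = random.Random(seed)
    shuffled = list(synthetic)
    rng.shuffle(shuffled)
    # Walk the synthetic pool once; resample human examples for every batch.
    for start in range(0, len(shuffled) - n_synth + 1, n_synth):
        batch = shuffled[start:start + n_synth] + rng.sample(list(human), n_human)
        rng.shuffle(batch)
        yield batch


human = [("human", i) for i in range(5)]
synthetic = [("synthetic", i) for i in range(16)]
batches = list(mixed_batches(human, synthetic, batch_size=10, human_fraction=0.2))
```

Because the human pool is usually much smaller, this sketch reuses human examples across batches while consuming each synthetic example at most once.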

Ignoring Data Provenance

When data is generated by agents, it is easy to lose track of where a specific “fact” came from. If an agent hallucinates a fake historical event and that event is baked into the training loop, it becomes nearly impossible to “unlearn.”

- The Fix: Implement strict metadata tagging for every piece of agent-generated data, including the timestamp, the model version of the generator, and the specific prompt used.
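
A provenance tag can be as small as one frozen record per sample. This sketch covers the three fields named above (timestamp, generator model version, prompt) and adds a content hash, which is an extra assumption here to make later tampering or duplication detectable. The class and function names are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProvenanceRecord:
    content: str
    generator_model: str  # model version of the generator
    prompt: str           # the specific prompt used
    created_at: str       # ISO 8601 timestamp in UTC
    content_sha256: str   # assumption: not in the checklist, added for integrity


def tag(content: str, generator_model: str, prompt: str) -> ProvenanceRecord:
    """Attach provenance metadata to one piece of generated content."""
    return ProvenanceRecord(
        content=content,
        generator_model=generator_model,
        prompt=prompt,
        created_at=datetime.now(timezone.utc).isoformat(),
        content_sha256=hashlib.sha256(content.encode("utf-8")).hexdigest(),
    )


def to_jsonl_line(record: ProvenanceRecord) -> str:
    """Serialize a record as one line of a JSON Lines file."""
    return json.dumps(asdict(record), sort_keys=True)


record = tag("Synthetic contract clause.", "generator-v1", "Draft an edge-case clause.")
line = to_jsonl_line(record)
```

With the model version stored on every record, all samples produced by a generator later found to hallucinate can be located and removed in one query.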

Lack of Diversity in the Critic Pool

If the Generator and the Critic are the same model, the Critic will likely share the same biases and blind spots as the Generator.

- The Fix: Use an ensemble of models for evaluation. Use one model for logic, another for tone, and a third for factual verification against a trusted database (like Wikipedia or PubMed).
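
The ensemble idea can be sketched with one critic per dimension and a rule that a sample must pass all of them. The three critics below are toy placeholders for separate model calls, and `TRUSTED_FACTS` stands in for a lookup against a curated knowledge base; none of this reflects a specific framework.

```python
from typing import Callable

# Hypothetical trusted reference set standing in for a real knowledge base.
TRUSTED_FACTS = {"paris is the capital of france"}


def logic_critic(sample: str) -> bool:
    # Toy rule: flag text that asserts both "always" and "never".
    text = sample.lower()
    return not ("always" in text and "never" in text)


def tone_critic(sample: str) -> bool:
    # Toy rule: reject all-caps shouting.
    return not sample.isupper()


def fact_critic(sample: str) -> bool:
    # Toy rule: the normalized sentence must appear in the trusted set.
    return sample.lower().strip(" .") in TRUSTED_FACTS


CRITICS: dict[str, Callable[[str], bool]] = {
    "logic": logic_critic,
    "tone": tone_critic,
    "facts": fact_critic,
}


def review(sample: str) -> dict:
    """Accept a sample only if every critic passes it; report which ones failed."""
    votes = {name: critic(sample) for name, critic in CRITICS.items()}
    failed = [name for name, passed in votes.items() if not passed]
    return {"accepted": not failed, "failed": failed}
```

Requiring every critic to pass, rather than a majority, fits this setup because each critic checks a different dimension: a factual error is not outvoted by good tone.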

Ensuring Data Quality and Mitigating Hallucinations

As of 2026, the gold standard for quality control in agent-generated data is Multi-Agent Debate. In this framework, two agents are given the same data point and asked to argue for and against its validity. A third “Judge” agent listens to the debate and makes a final determination.
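
The debate structure itself is simple to express. In this sketch the two debaters and the judge are plain callables, and the toy functions are hypothetical placeholders for model calls; the two-round default is an arbitrary assumption.

```python
from typing import Callable

Debater = Callable[[str, list[str]], str]  # (claim, transcript) -> argument
Judge = Callable[[str, list[str]], bool]   # (claim, transcript) -> is the claim valid?


def debate(
    claim: str,
    proponent: Debater,
    opponent: Debater,
    judge: Judge,
    rounds: int = 2,
) -> dict:
    """Alternate pro and con arguments, then let the judge rule on the claim."""
    transcript: list[str] = []
    for _ in range(rounds):
        transcript.append("PRO: " + proponent(claim, transcript))
        transcript.append("CON: " + opponent(claim, transcript))
    return {"claim": claim, "transcript": transcript, "valid": judge(claim, transcript)}


# Toy stand-ins for the three agents, for illustration only.
def toy_proponent(claim: str, transcript: list[str]) -> str:
    return "the claim matches the reference table"


def toy_opponent(claim: str, transcript: list[str]) -> str:
    if "7 * 8 = 54" in claim:
        return "counterexample: 7 * 8 is 56"
    return "no objection found"


def toy_judge(claim: str, transcript: list[str]) -> bool:
    # Rule the claim invalid if the opponent produced any counterexample.
    return not any(line.startswith("CON: counterexample") for line in transcript)


bad = debate("7 * 8 = 54", toy_proponent, toy_opponent, toy_judge)
good = debate("7 * 8 = 56", toy_proponent, toy_opponent, toy_judge)
```

Passing the running transcript to each debater lets later arguments respond to earlier ones, which is what distinguishes a debate from two independent reviews.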

Verification Frameworks

To keep the value of your data loops high, use the following verification checklist:

- Semantic Similarity Check: Ensure the synthetic data isn’t just a slightly rephrased version of the training set.

- Cross-Reference Validation: For factual data, agents must provide a “Source Link” or a logical proof that can be verified by a deterministic script.

- Toxicity and Bias Filtering: Agents should be programmed with a “Negative Constraint” list to ensure they aren’t generating harmful or biased content during the synthesis phase.
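
For the first item on the checklist, a cheap first pass is a lexical near-duplicate filter. Note the substitution: this sketch uses Jaccard overlap of word trigrams, which catches light rephrasing but is not true semantic similarity (that would typically use embeddings). The 0.5 threshold is an assumption to tune on your own data.

```python
def shingles(text: str, n: int = 3) -> set:
    """Return the set of word n-grams in the text."""
    words = text.lower().split()
    if len(words) < n:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: size of the intersection over size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def is_near_duplicate(
    candidate: str,
    training_texts: list[str],
    threshold: float = 0.5,
    n: int = 3,
) -> bool:
    """Flag a candidate whose shingle overlap with any training text is high."""
    candidate_shingles = shingles(candidate, n)
    return any(
        jaccard(candidate_shingles, shingles(text, n)) >= threshold
        for text in training_texts
    )


training = ["the contract is void if either party fails to sign"]
rephrased = "the contract is void if either party fails to sign it"
novel = "robots learn to grasp fragile objects in simulation"
```

Comparing every candidate against every training text is quadratic; at scale you would likely swap in an approximate method such as MinHash, but the acceptance rule stays the same.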

The Ethics and Security of Autonomous Data Synthesis

As we move toward a future where the majority of the world’s data may soon be AI-generated, ethical considerations are paramount.

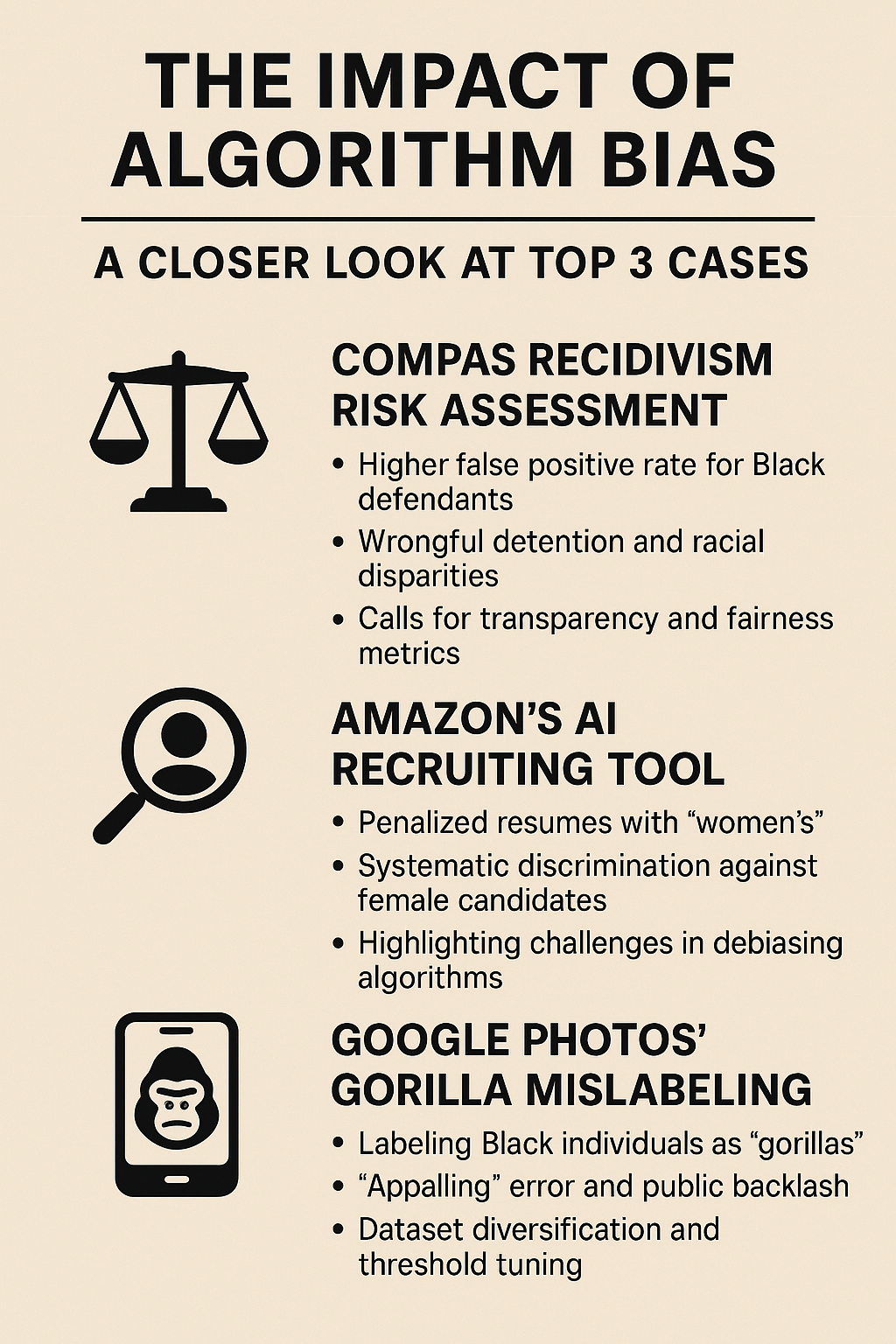

- Bias Amplification: If a model has a small bias toward a certain demographic and generates data that reflects it, the next model trained on that data can inherit a larger one (for illustration, 1% growing to 5%). This compounding effect can lead to systemic discrimination.

- Data Poisoning: Malicious actors could potentially “poison” an organization’s learning loop by injecting subtly flawed data that an automated critic might miss but which creates “backdoors” in the model’s logic.

- Intellectual Property: Who owns the copyright to data generated by an agent? Under legal interpretations current as of 2026, AI-generated content generally lacks the human authorship required for copyright protection, which creates a complex landscape for proprietary training sets.

The Future: Self-Improving AI Systems

We are approaching the threshold of Recursive Self-Improvement. This is the point where an AI system can analyze its own source code, identify inefficiencies, generate data to test new architectures, and effectively “upgrade” itself.

While we are not yet at a point of runaway intelligence, the continuous learning loop is the foundation of this progress. By 2027, expect to see Multi-Modal Loops where an AI generates a video, a second AI critiques the physics of that video, and a third AI uses that feedback to write better simulation code.

The organizations that master the balance between autonomous generation and rigorous, agent-led oversight will be the ones that lead the next decade of innovation.

Conclusion

Agent-generated data is no longer a futuristic concept; it is the primary engine driving the AI industry forward as of February 2026. By shifting from static, human-labeled datasets to dynamic, continuous learning loops, developers can build models that are more accurate, more specialized, and significantly more scalable.

However, the power of these loops comes with the responsibility of rigorous verification. To succeed, you must avoid the pitfalls of model collapse and bias amplification by implementing multi-agent debate protocols and maintaining strict data provenance. The goal is not just to create more data, but to create better data—data that captures the nuance of reasoning and the precision of expert knowledge.

Your Next Steps:

- Audit your current data pipeline: Identify which stages are currently bottlenecked by human labeling.

- Pilot a Critic-Agent: Before generating new data, deploy an agent to evaluate your existing data for quality.

- Implement a small-scale RLAIF loop: Start with a specific domain (like customer support FAQs) and observe how the model improves over three iterative cycles of agent-led refinement.

FAQs

What is the difference between synthetic data and agent-generated data?

While all agent-generated data is synthetic, not all synthetic data is agent-generated. Traditional synthetic data is often created using simple statistical distributions or templates. Agent-generated data involves a reasoning process where an AI “thinks” through the context to create complex, high-fidelity information.

Can agent-generated data lead to model collapse?

Yes. If a model only learns from its own output without external grounding or diverse feedback, it can enter a feedback loop where it becomes repetitive and loses accuracy. This is why “critic” agents and human-in-the-loop samples are essential.

Is agent-generated data legal for training?

As of 2026, using agent-generated data for internal model training is widely accepted. However, transparency is required when that data is used in commercial products, especially in regulated industries like finance and healthcare.

How do you measure the quality of agent-generated data?

Quality is typically measured through “Gold Standard” testing. Human experts manually review a small subset of the agent-generated data, and their verdicts are compared with the Critic Agent’s. If the agent agrees with the humans at or above a set threshold (e.g., 95% of samples), the loop is considered reliable.
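
The threshold test reduces to a simple agreement rate. A minimal sketch, assuming the agent and the human experts each assign one label per sample in the same order; the label values and function names are illustrative.

```python
def agreement_rate(agent_labels: list[str], human_labels: list[str]) -> float:
    """Fraction of samples on which the agent and the human reviewers agree."""
    if not human_labels or len(agent_labels) != len(human_labels):
        raise ValueError("label lists must be non-empty and the same length")
    matches = sum(a == h for a, h in zip(agent_labels, human_labels))
    return matches / len(human_labels)


def loop_is_reliable(
    agent_labels: list[str],
    human_labels: list[str],
    threshold: float = 0.95,  # the example threshold from the text
) -> bool:
    """Apply the agreement threshold to a manually reviewed subset."""
    return agreement_rate(agent_labels, human_labels) >= threshold


# Twenty reviewed samples with one disagreement.
human_review = ["accept"] * 20
agent_review = ["accept"] * 19 + ["reject"]
rate = agreement_rate(agent_review, human_review)
```

Raw agreement can look high when almost every sample carries the same label, so it is worth checking the rate separately on accepted and rejected samples.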

Will agents eventually replace human data scientists?

No. Agents will shift the role of the data scientist from “data labeler” to “architect of the loop.” Humans will focus on setting the “Constitution,” defining the goals, and handling the most complex ethical edge cases.
